【点击观看 AI 速读解析视频,轻松读懂全书重点】

Preface

The book aims to develop empirical Bayes estimators for positive parameters in seven hierarchical models under Stein’s loss function, with theoretical derivations, simulations, and real data examples.

Chapter 1 is an introduction, including introductory texts on the empirical Bayes method, gamma and inverse gamma distributions, hierarchical models with positive restricted parameters, estimating the hyperparameters, Stein’s loss function, Bayes estimators and Posterior Expected Stein’s Losses (PESLs), theoretical comparisons of the Bayes estimators and the PESLs of three methods, simulation techniques, and R codes. Chapters 2–8 contain the main results of the research. Each chapter deals with a different hierarchical model, and calculates the empirical Bayes estimators of the positive parameter of the hierarchical model under Stein’s loss function. Chapter 9 is devoted to 16 common loss functions, namely, squared error loss function, weighted squared error loss function, Stein’s loss function, power-power loss function, power-log loss function, Zhang’s loss function, LINEX loss function, absolute error loss function, weighted absolute error loss function, power loss function, weighted power loss function, log-1 loss function, log-2 loss function, generalized log loss function, generalized Stein’s loss function, and generalized power-power loss function. Chapter 10 contains some summaries and discussions of the book. Appendix A contains some technical derivations of the results in chapters 2–8. Appendix B summarizes some basic results on common univariate distributions.

The contents of chapters 2–8 are summarized in table 1. From the table, we observe the following facts.

1. Each chapter contains a theoretical section, simulations section, and/or a real data section.

2. For the theoretical section, every chapter contains two subsections: Bayes estimators and PESLs, and empirical Bayes estimators of  .

.

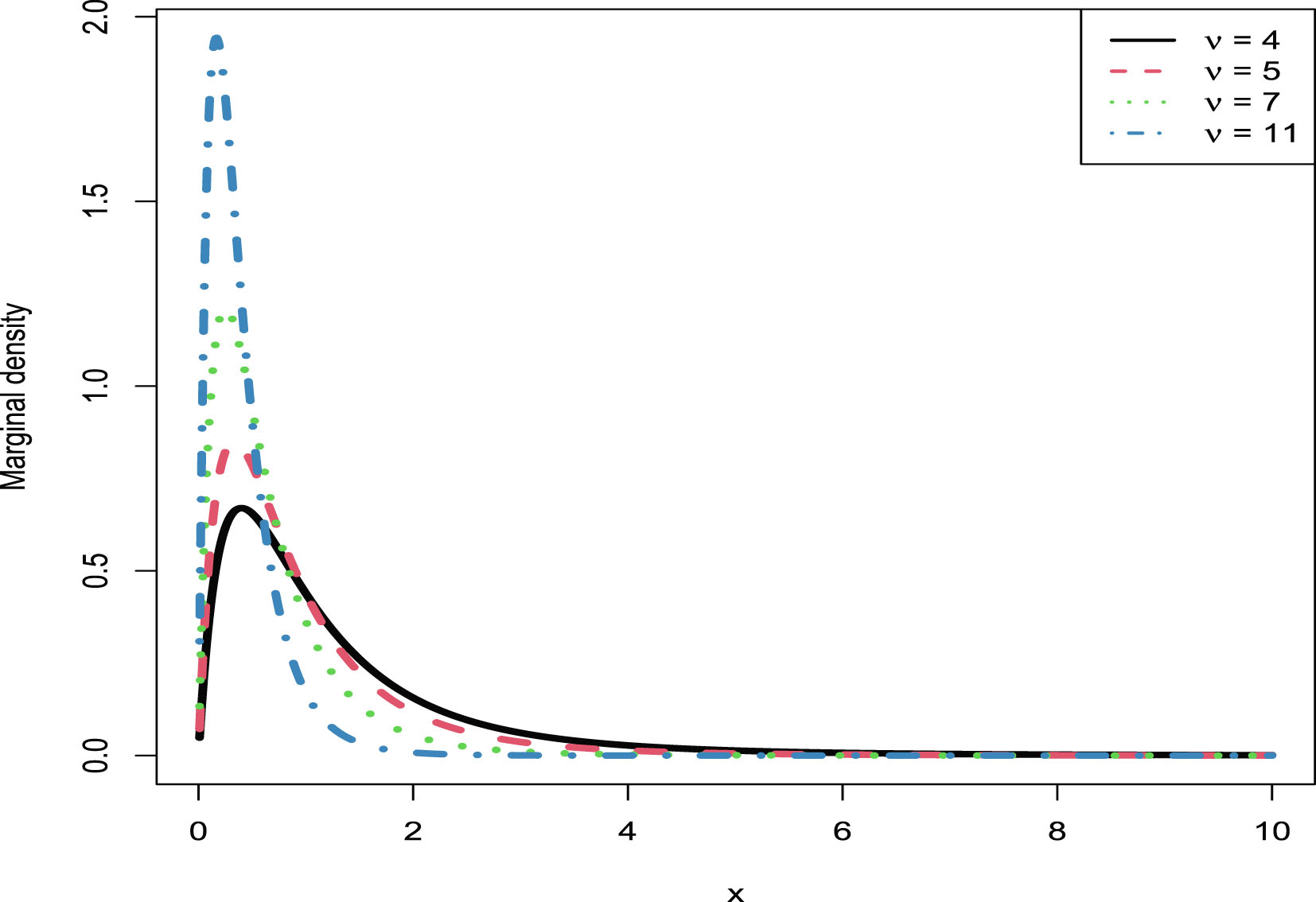

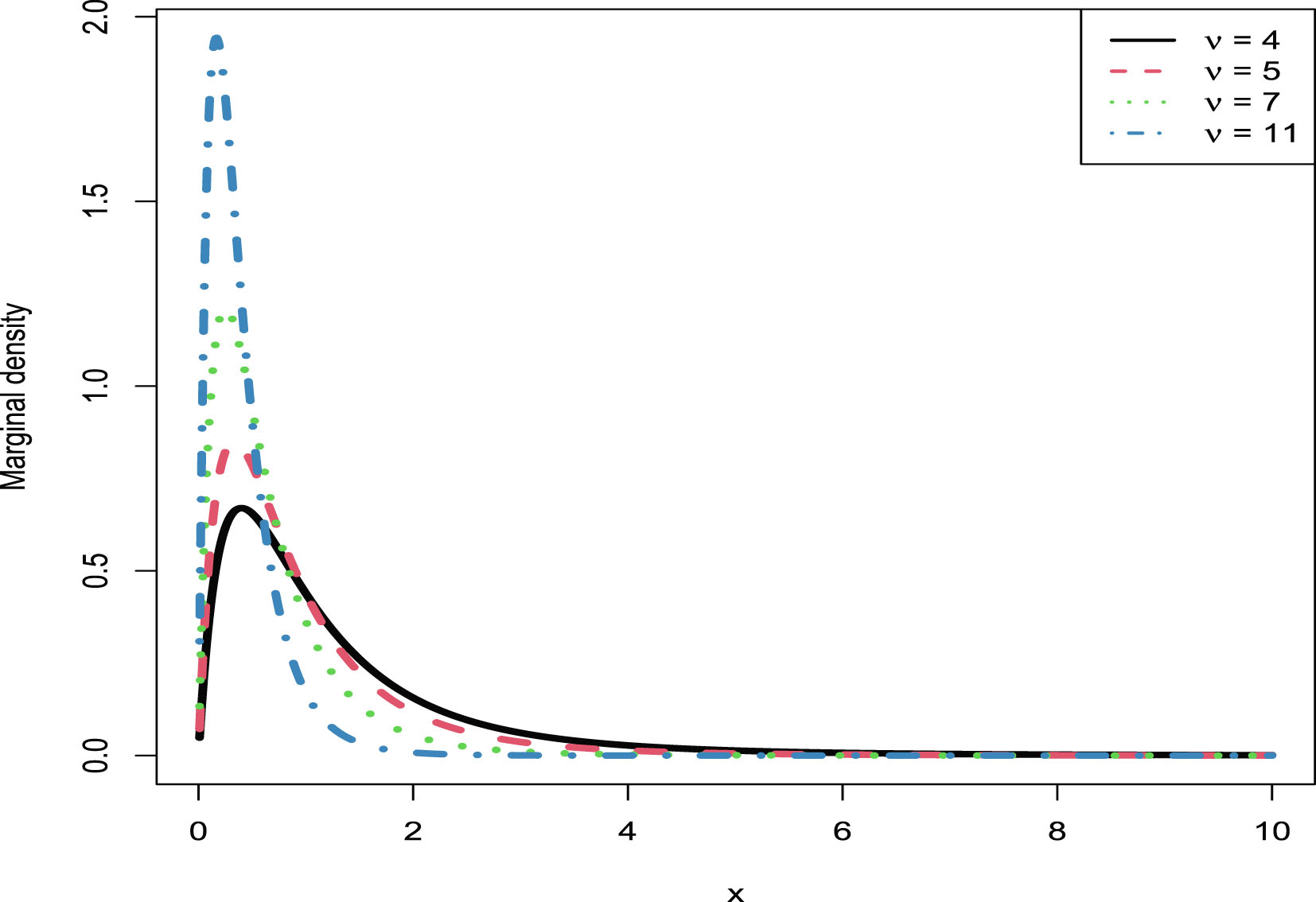

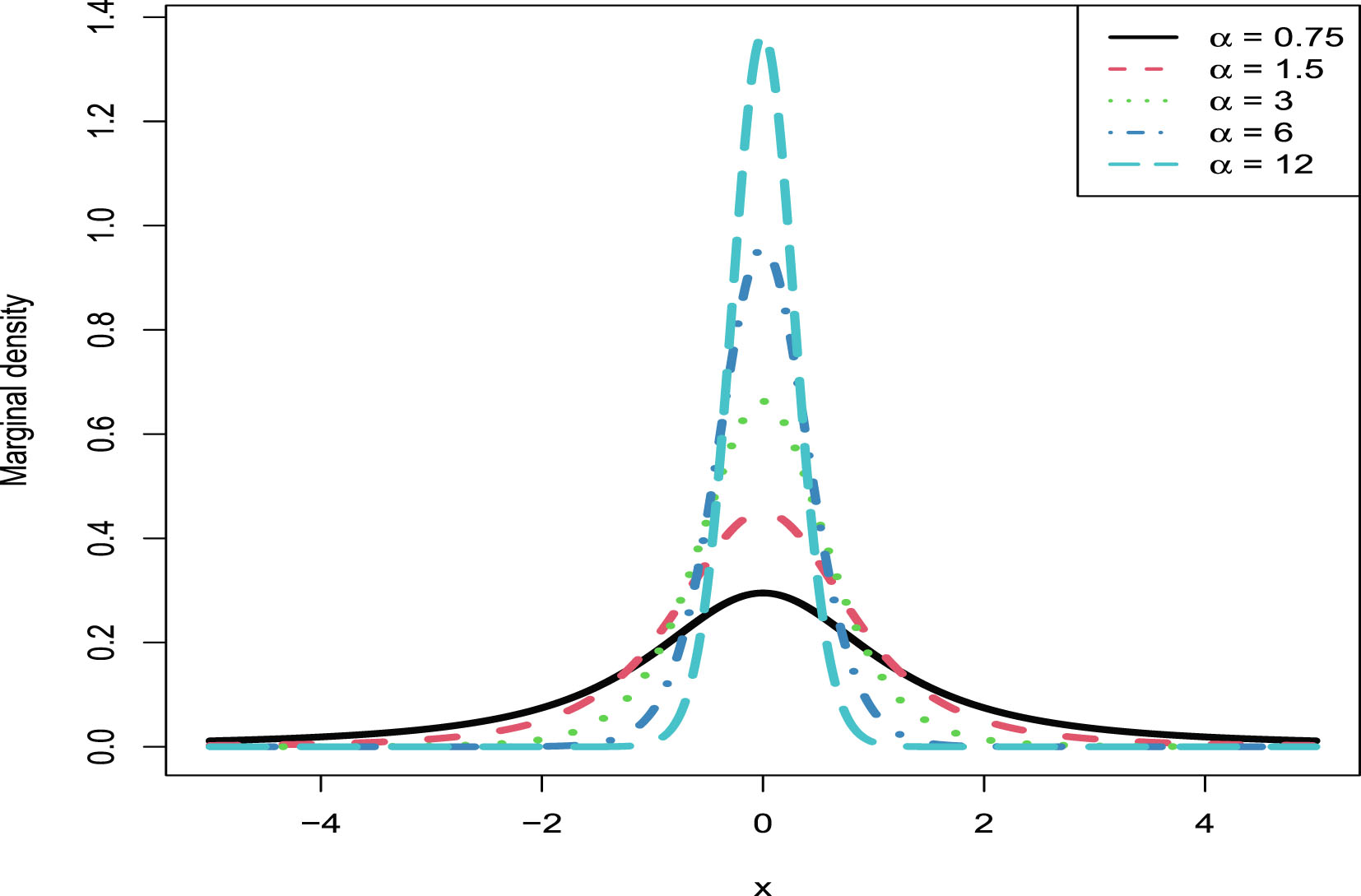

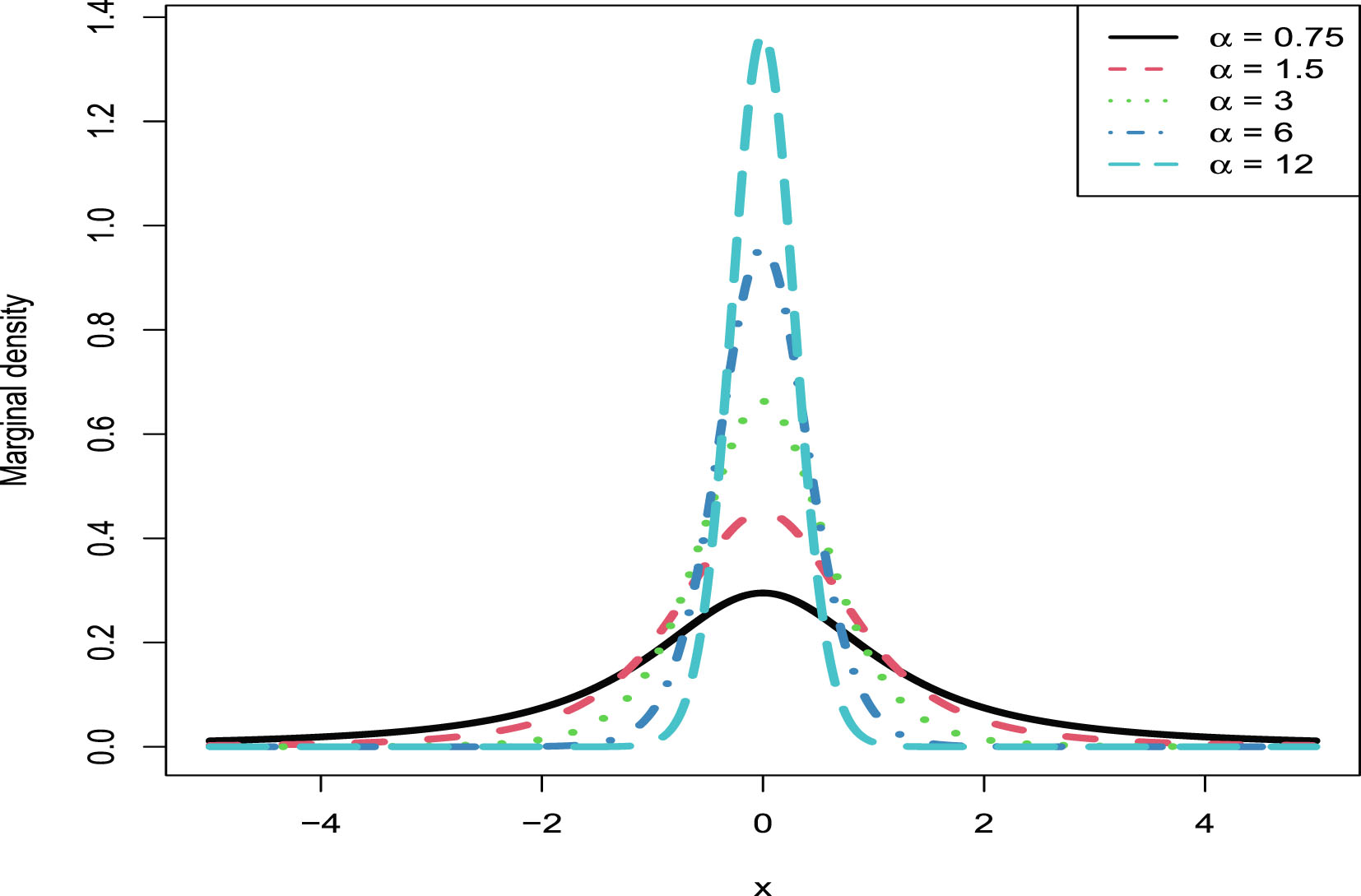

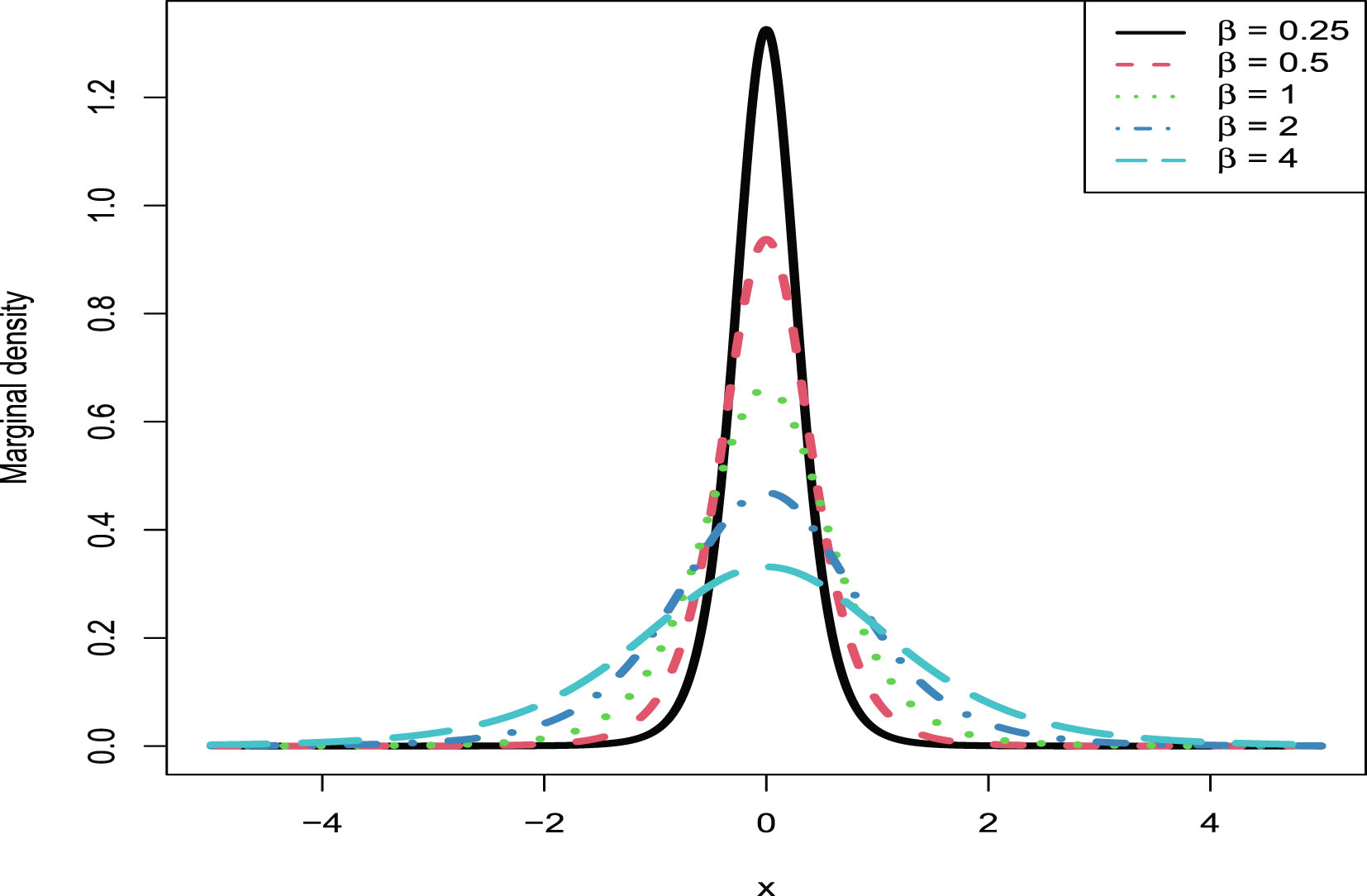

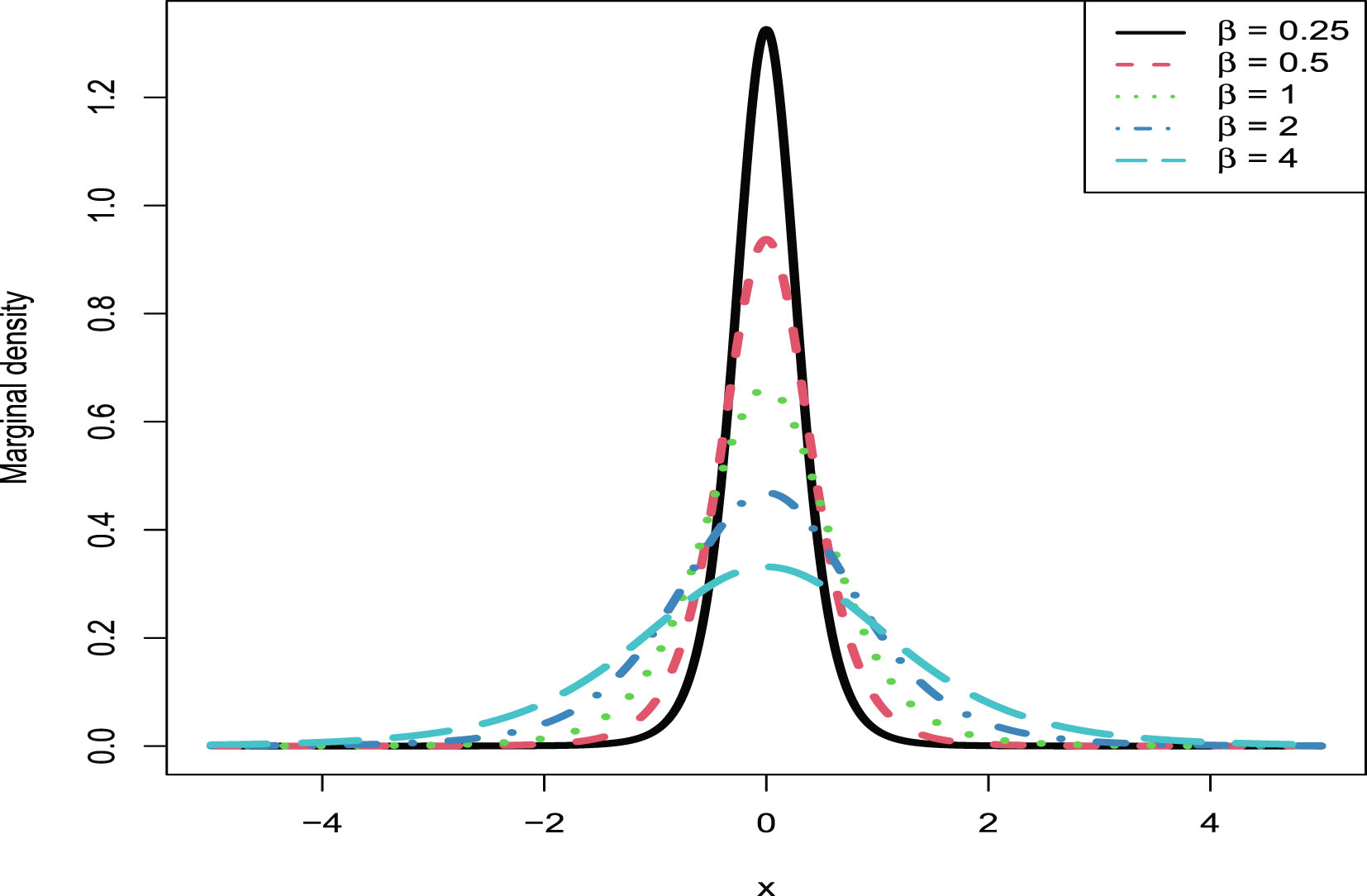

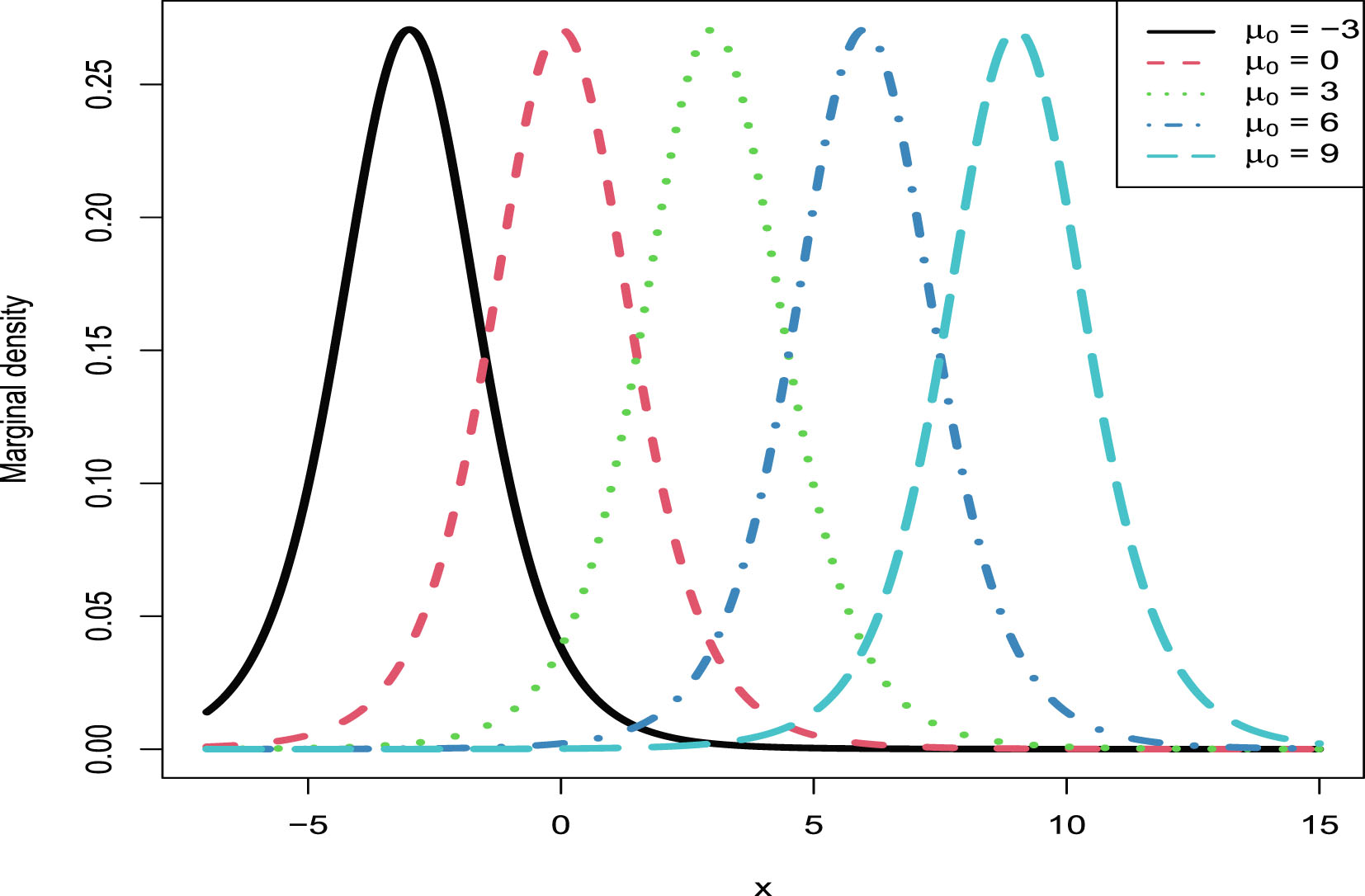

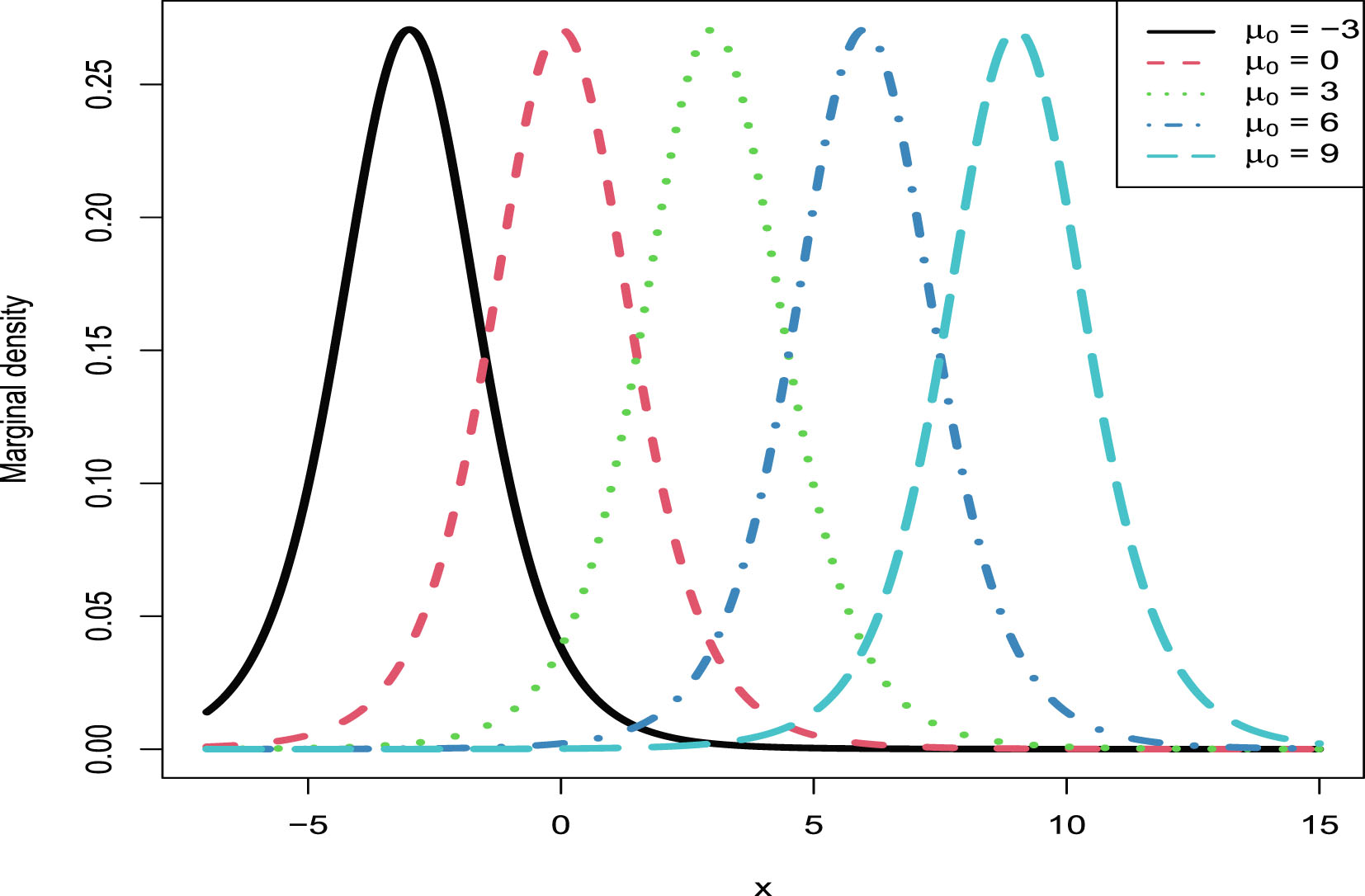

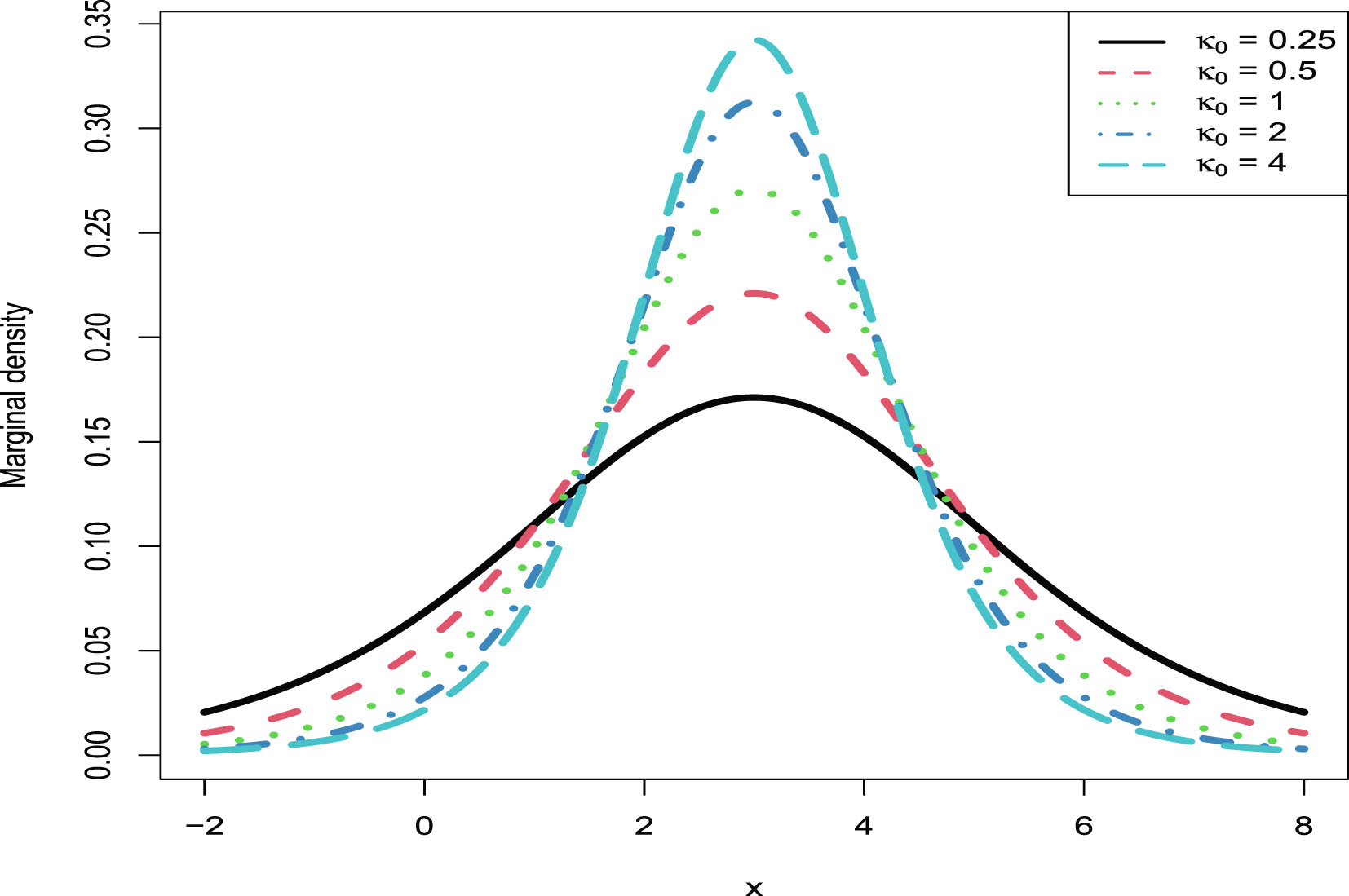

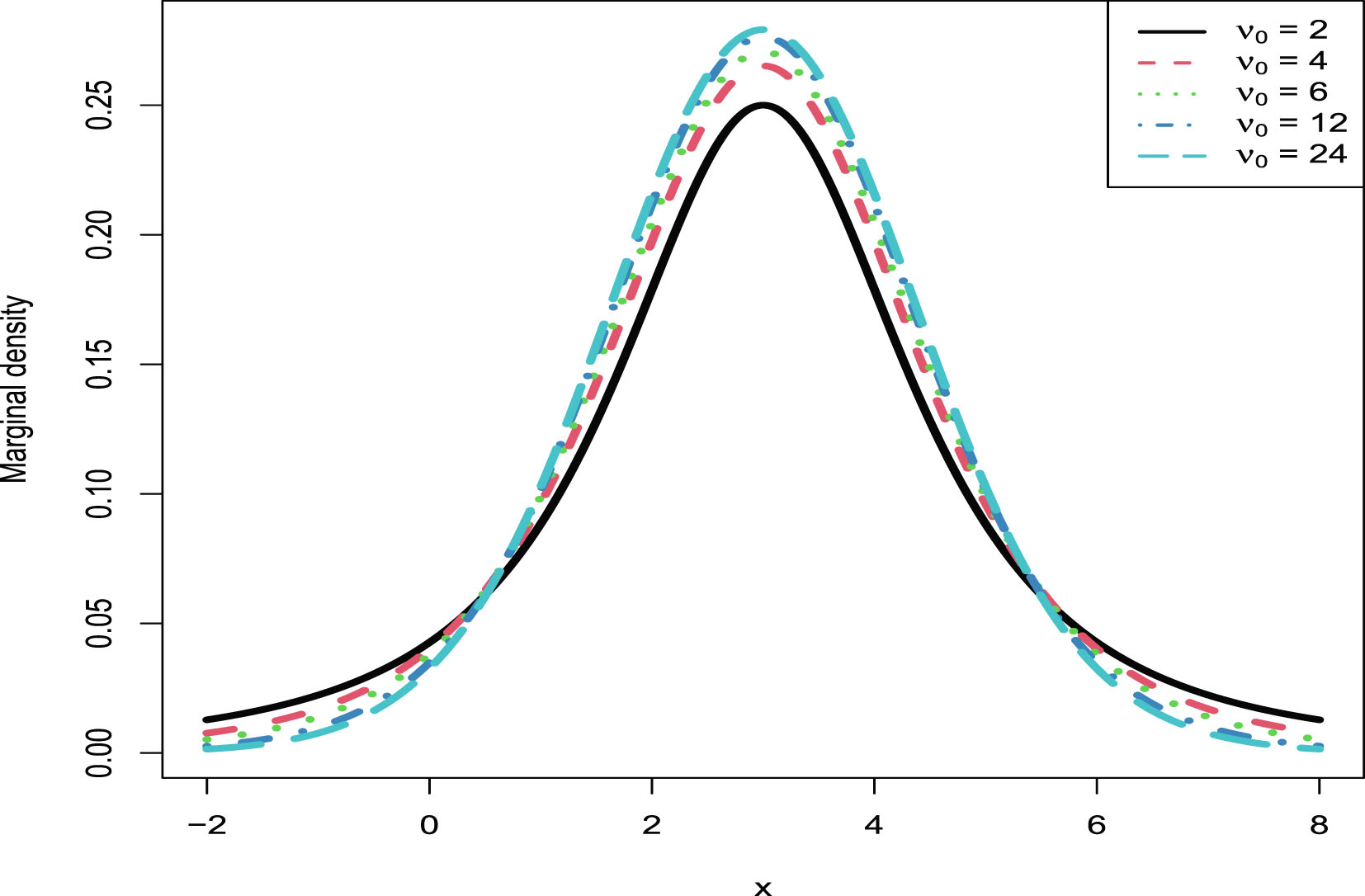

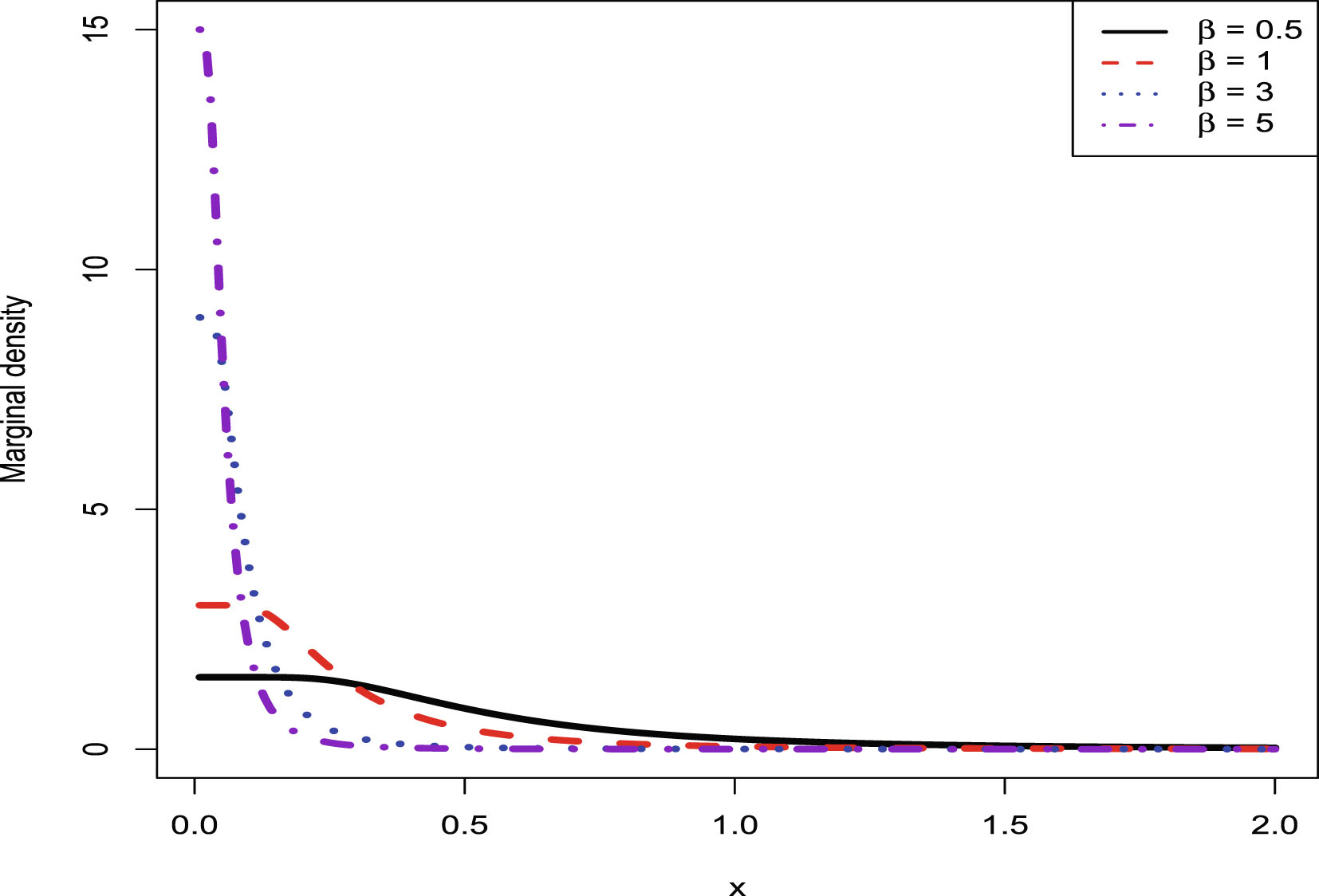

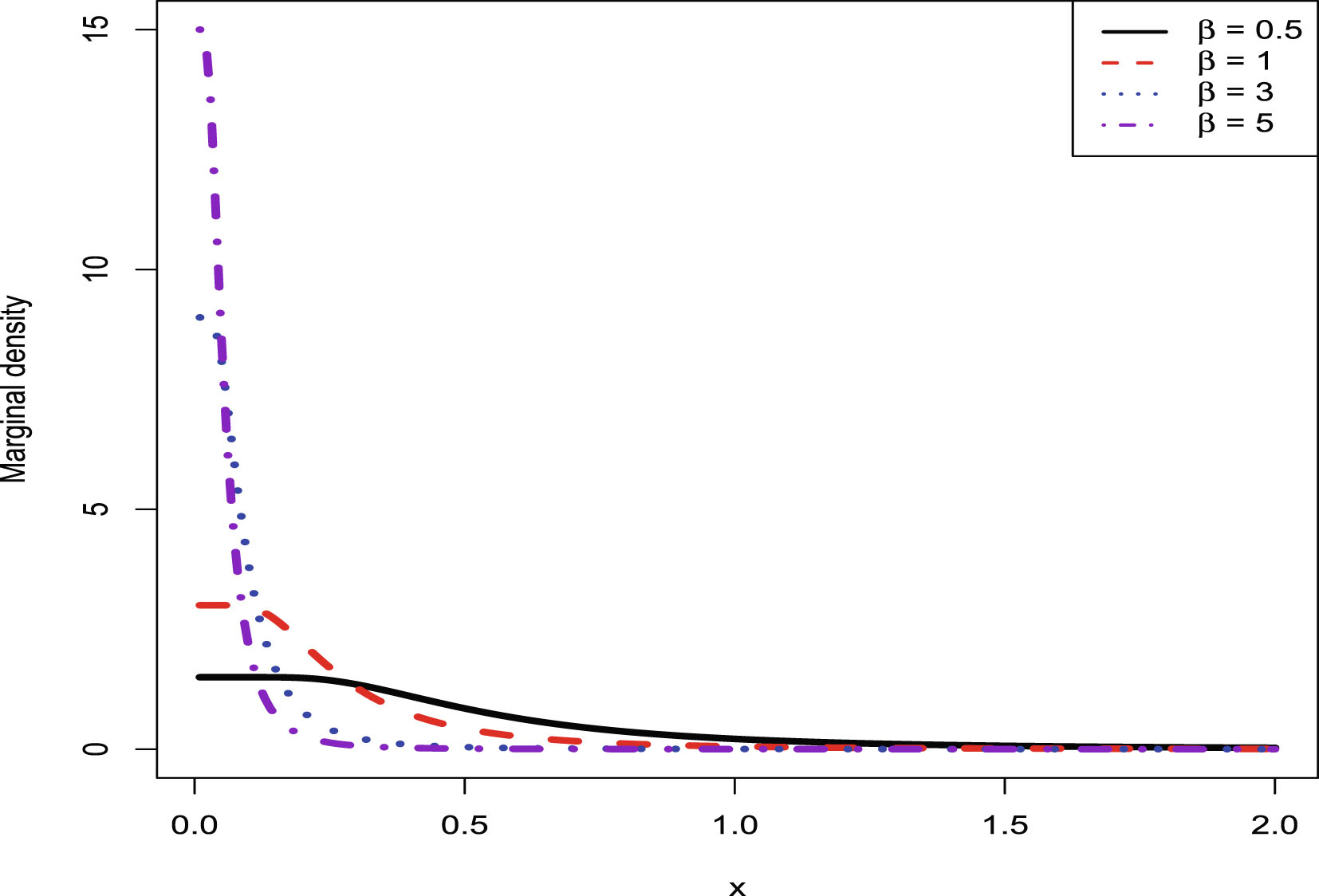

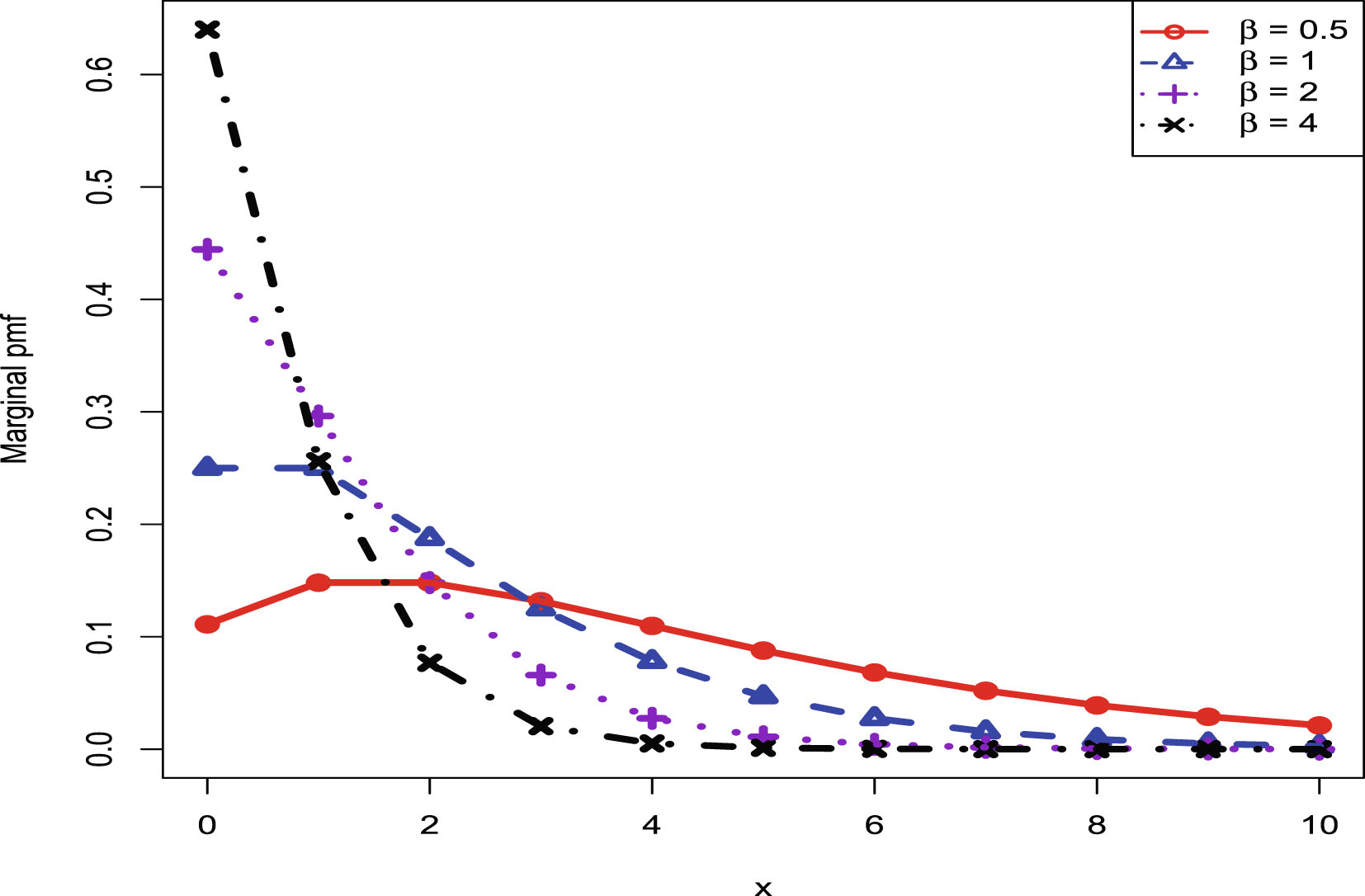

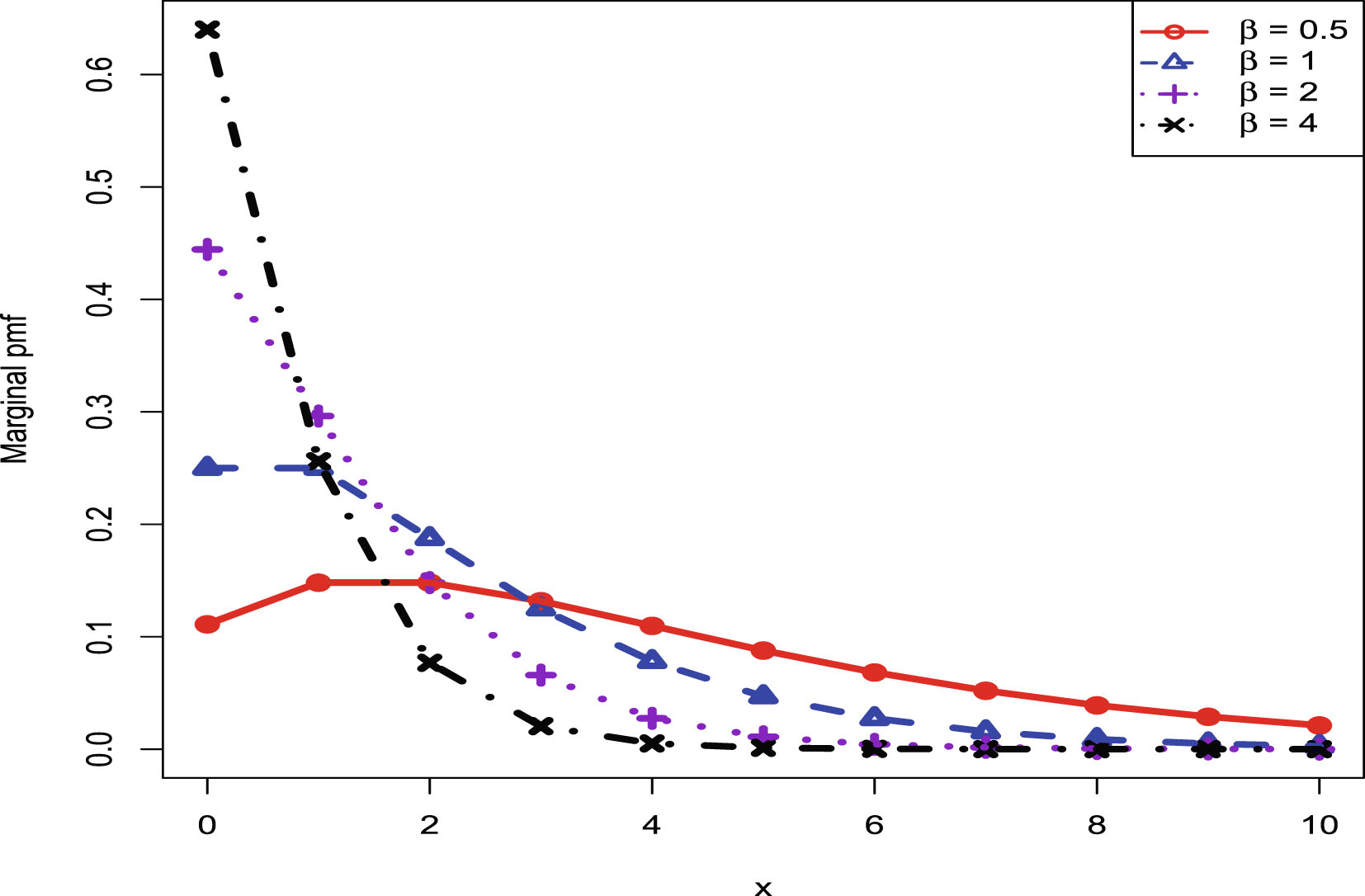

3. For the simulations section, every chapter contains four subsections: Two inequalities of Bayes estimators and PESLs, consistencies of moment estimators and Maximum Likelihood Estimators (MLEs), goodness-of-fit of the model, and marginal distributions for various hyperparameters.

4. If a chapter contains a subsection, then we place a  . However, if a chapter does not contain a subsection, then we place a

. However, if a chapter does not contain a subsection, then we place a  .

.

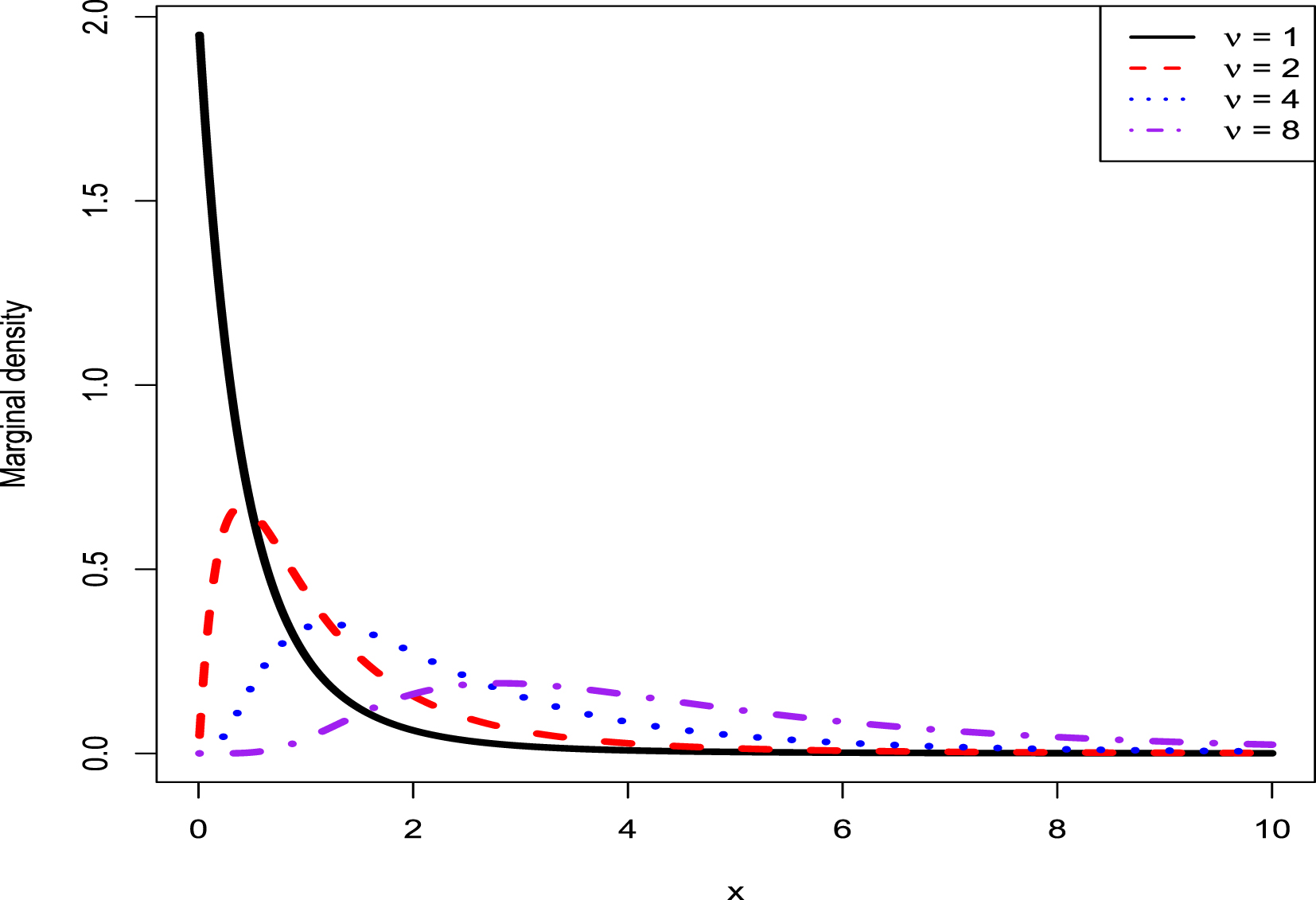

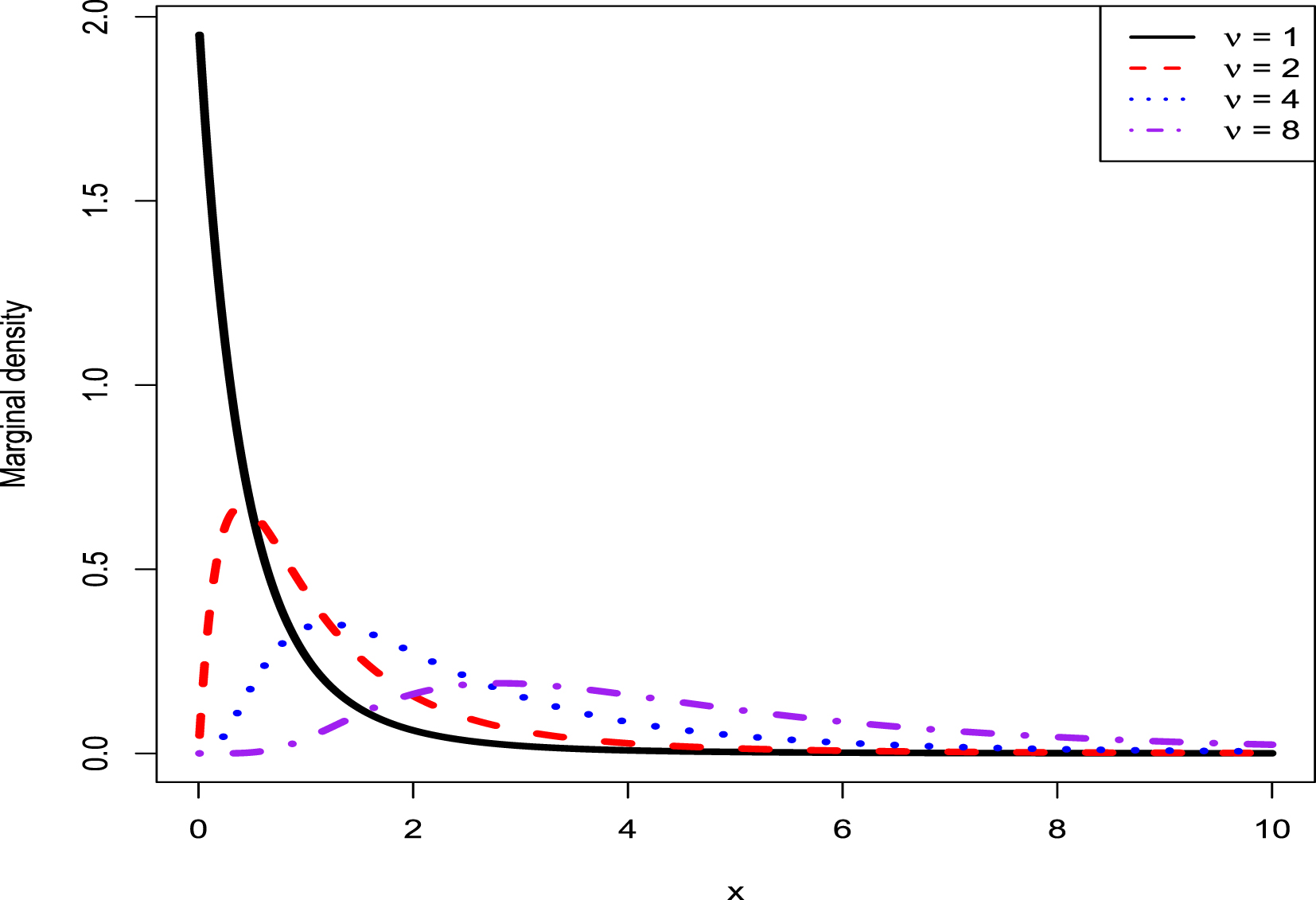

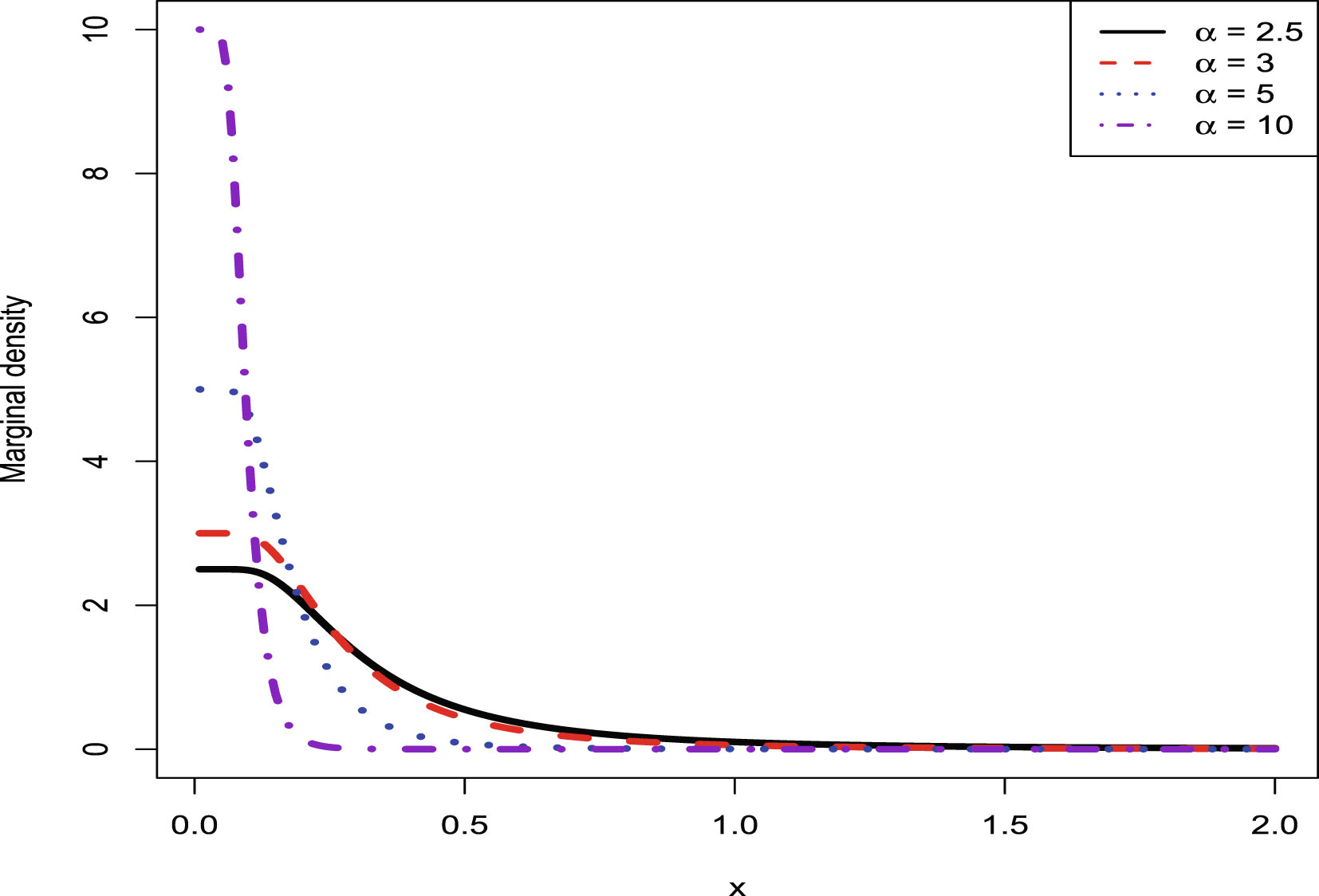

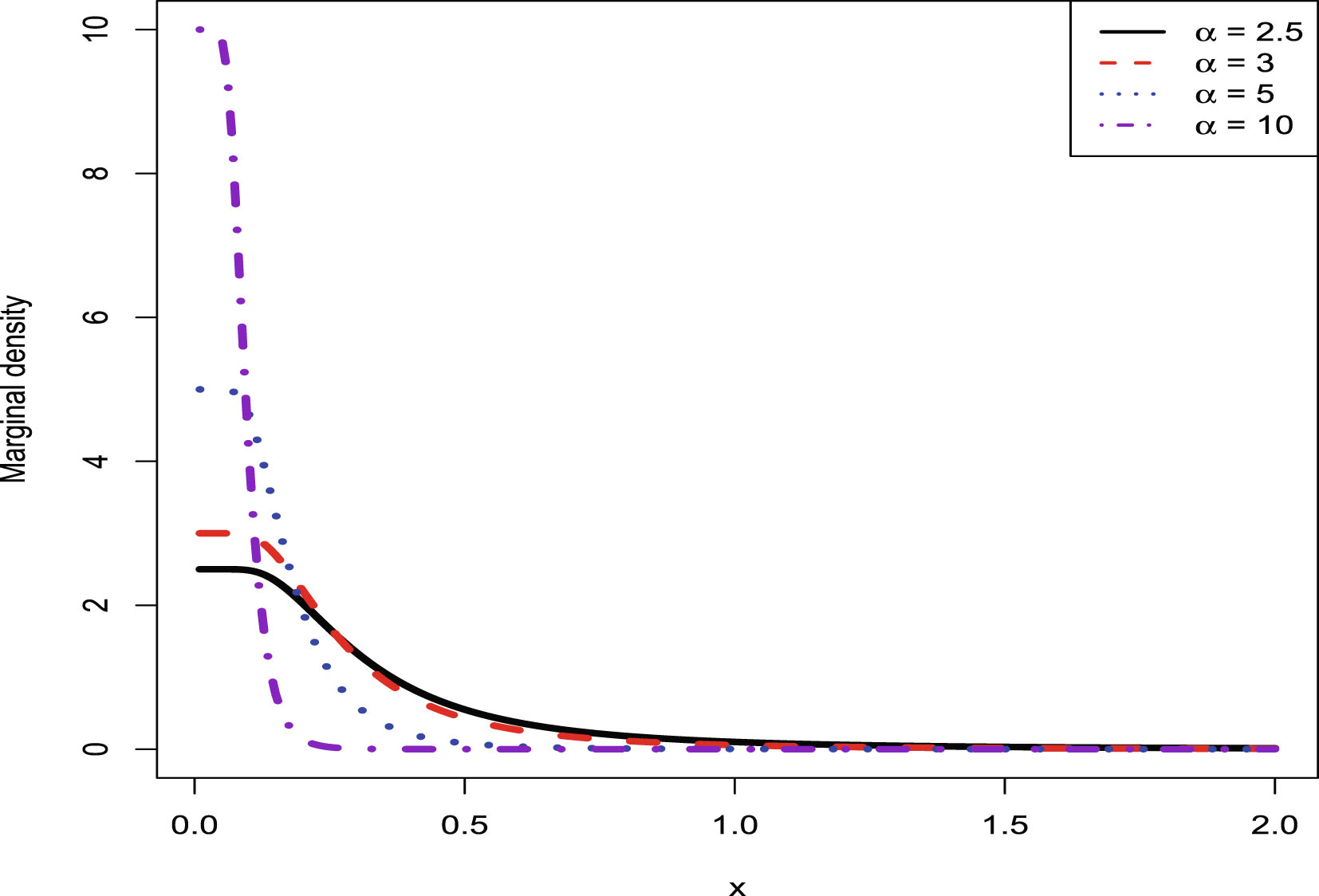

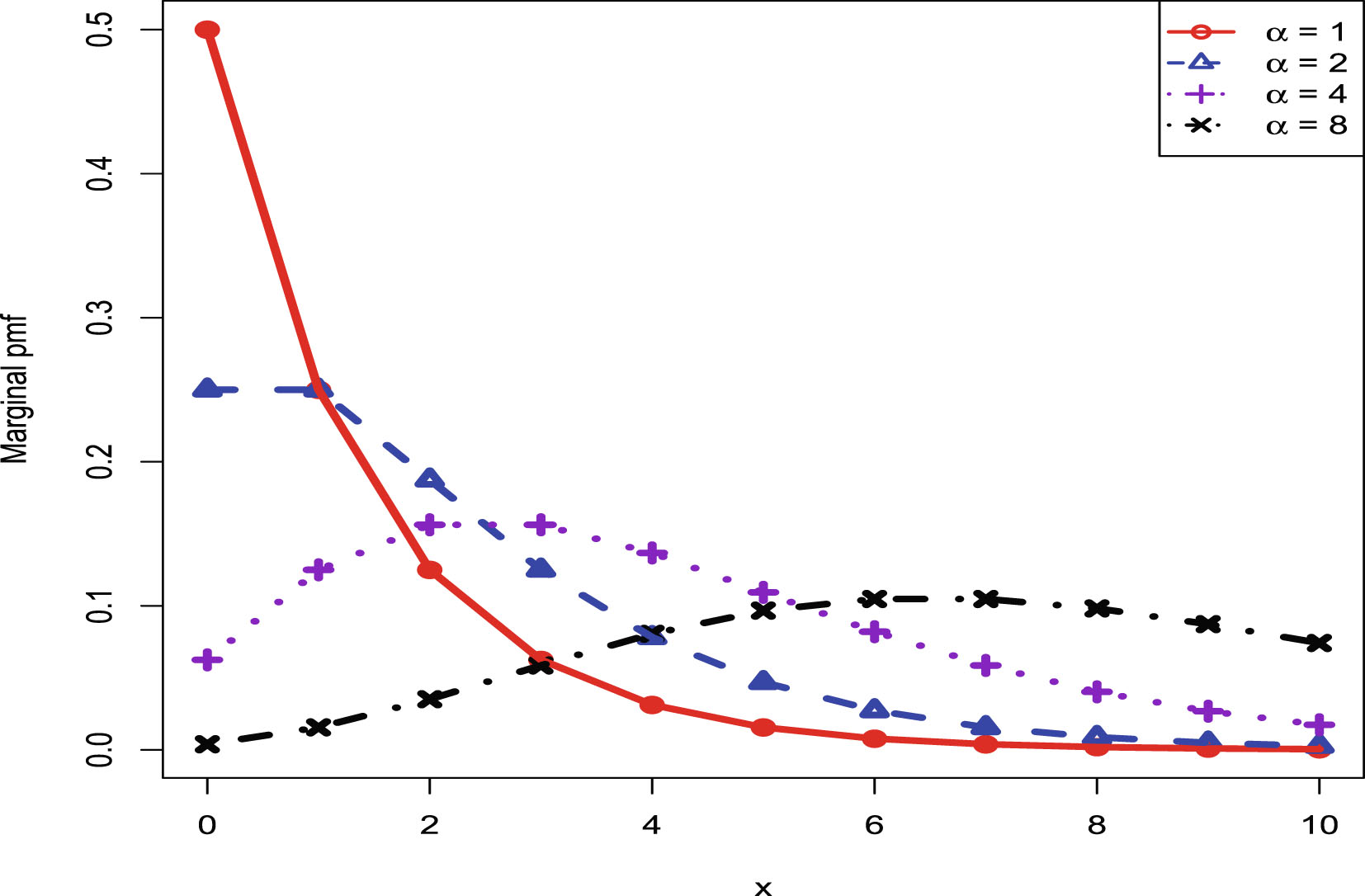

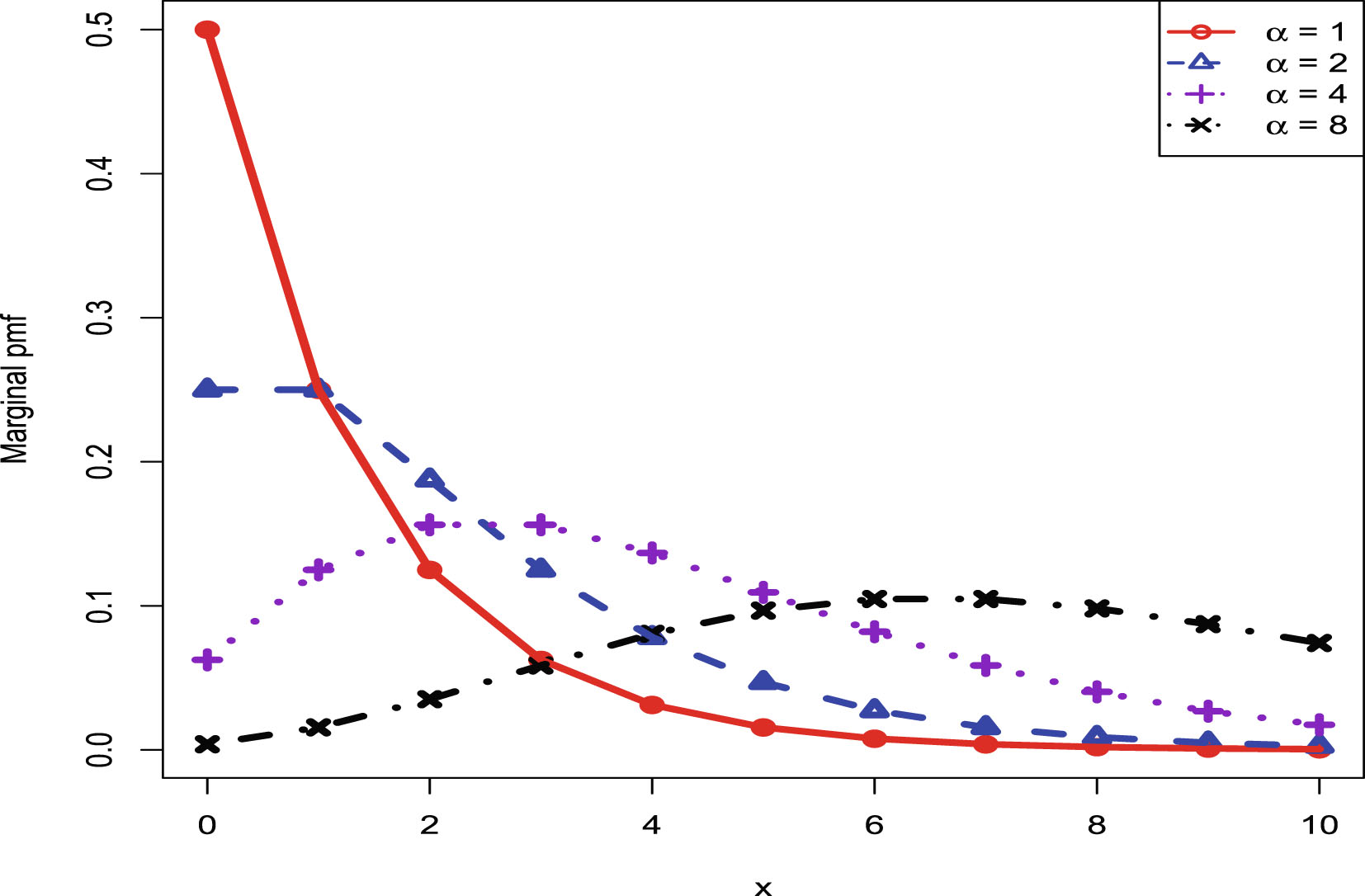

5. The marginal distributions of the first six hierarchical models (Inverse Gamma-Inverse Gamma (IG-IG), Gamma-Gamma (G-G), Exponential-Inverse Gamma (Exp-IG), Normal-Inverse Gamma (N-IG), Normal-Normal Inverse Gamma (N-NIG), and Uniform-Inverse Gamma (U-IG)) are continuous. Thus they can be used to model continuous data. The Kolmogorov-Smirnov (KS) test is used to perform the goodness-of-fit of the model to the data. The marginal distribution of the last hierarchical model (Poisson-Gamma (P-G)) is discrete, and thus it can be used to model discrete data. The chi-square test is utilized to perform the goodness-of-fit of the model to the data.

TAB. 1 — P: The contents of chapters 2–8.

| Subsection | IG-IG | G-G | Exp-IG | N-IG | N-NIG | U-IG | P-G | |

| Theoretical section | Bayes estimators and PESLs | |||||||

| Empirical Bayes estimators of |

||||||||

| Theoretical comparisons of Bayes estimators and PESLs of three methods | ||||||||

| Simulations section | Two inequalities of Bayes estimators and PESLs | |||||||

| Consistencies of moment estimators and MLEs | ||||||||

| Goodness-of-fit of the model | ||||||||

| Numerical comparisons of Bayes estimators and PESLs of three methods | ||||||||

| Marginal distributions for various hyperparameters | ||||||||

| Real data section | A real data example |

In the Table of Contents, List of Figures, List of Tables, List of Abbreviations, and appendix A, there are some abbreviations. They are used to indicate relevant chapters. More specifically, IG-IG, G-G, Exp-IG, N-IG, N-NIG, U-IG, and P-G are used to indicate relevant chapters of hierarchical models. P is short for Preface. LoA is short for List of Abbreviations. I is short for Introduction. SCLF is short for Several Common Loss Functions.

This book is supported by the First Class Construction Fund for Statistics Discipline of Yunnan University and the High-level Talent Research Start-up Fund Project of Yunnan University.

Ying-Ying Zhang

October, 2025

Chapter 1 Introduction

1.1 Empirical Bayes Method

In this section, we will introduce some literature on the empirical Bayes method, statistical inference, and Bayesian books.

The empirical Bayes method relies on a conjugate prior modeling, where the hyperparameters are estimated from the observations, and the “estimated prior” is then used as a regular prior in the subsequent inference. See Carlin and Louis (2000a); Maritz and Lwin (1989); Berger (1985) and the references therein. The empirical Bayes method is introduced in Robbins (1955, 1964, 1983). From a Bayesian point of view, it means that the sampling distribution is known, but the prior distribution is not. The marginal distribution is then used to recover the prior distribution from the observations. More literature on empirical Bayes method can be found, for example, in Li et al. (2025); Shi et al. (2025); Zhang (2025); Sun et al. (2024); Zhang et al. (2024); Sun et al. (2021); Zhou et al. (2021); Mikulich-Gilbertson et al. (2019); Zhang et al. (2019a, 2019b); Martin et al. (2017); Satagopan et al. (2016); Ghosh et al. (2015); van Houwelingen (2014); Efron (2011); Coram and Tang (2007); Pensky (2002); Carlin and Louis (2000b); Maritz and Lwin (1992); Morris (1983); Deely and Lindley (1981).

Statistical inferences are covered by many classical textbooks, see for instance, Shi and Tao (2008); Lehmann and Romano (2005); Shao (2003); Casella and Berger (2002); Stuart et al. (1999); Lehmann and Casella (1998); Bickel and Doksum (1977); Ferguson (1967). Point estimation is an important class of statistical inference. The study of the performance and the optimality of point estimators is usually evaluated through the loss function. In Bayesian analysis, we usually compute the Bayes risk to assess the performance of an estimator with respect to a given loss function.

Bayesian approaches are continually developing, and some of the most important works are Huang (2021); Wei and Zhang (2021); Wu (2021); Jiang (2020); Wu (2020); Han (2017); Huang (2017a, 2017b); Liu and Xia (2016); Wei (2016); Han (2015); Wei (2015); Gelman et al. (2013); Lee (2011); Albert (2009); Robert and Casella (2009); Robert (2007); Robert and Casella (2005); Chen et al. (2000); Bernardo and Smith (1994); Box and Tiao (1992); Berger (1985); Novick and Jackson (1974); Savage (1972); Zellner (1971); DeGroot (1970); Good (1965); Lindley (1965).

1.2 The Gamma and Inverse Gamma Distributions

In this section, we will give the probability density functions (pdfs) of the gamma and inverse gamma distributions.

Suppose that  and

and  . More specifically, the pdfs of

. More specifically, the pdfs of  and

and  are respectively given by

are respectively given by

It is easy to calculate

and

and

Suppose that  and

and  . The pdfs of

. The pdfs of  and

and  are respectively given by

are respectively given by

It is easy to calculate

and

and

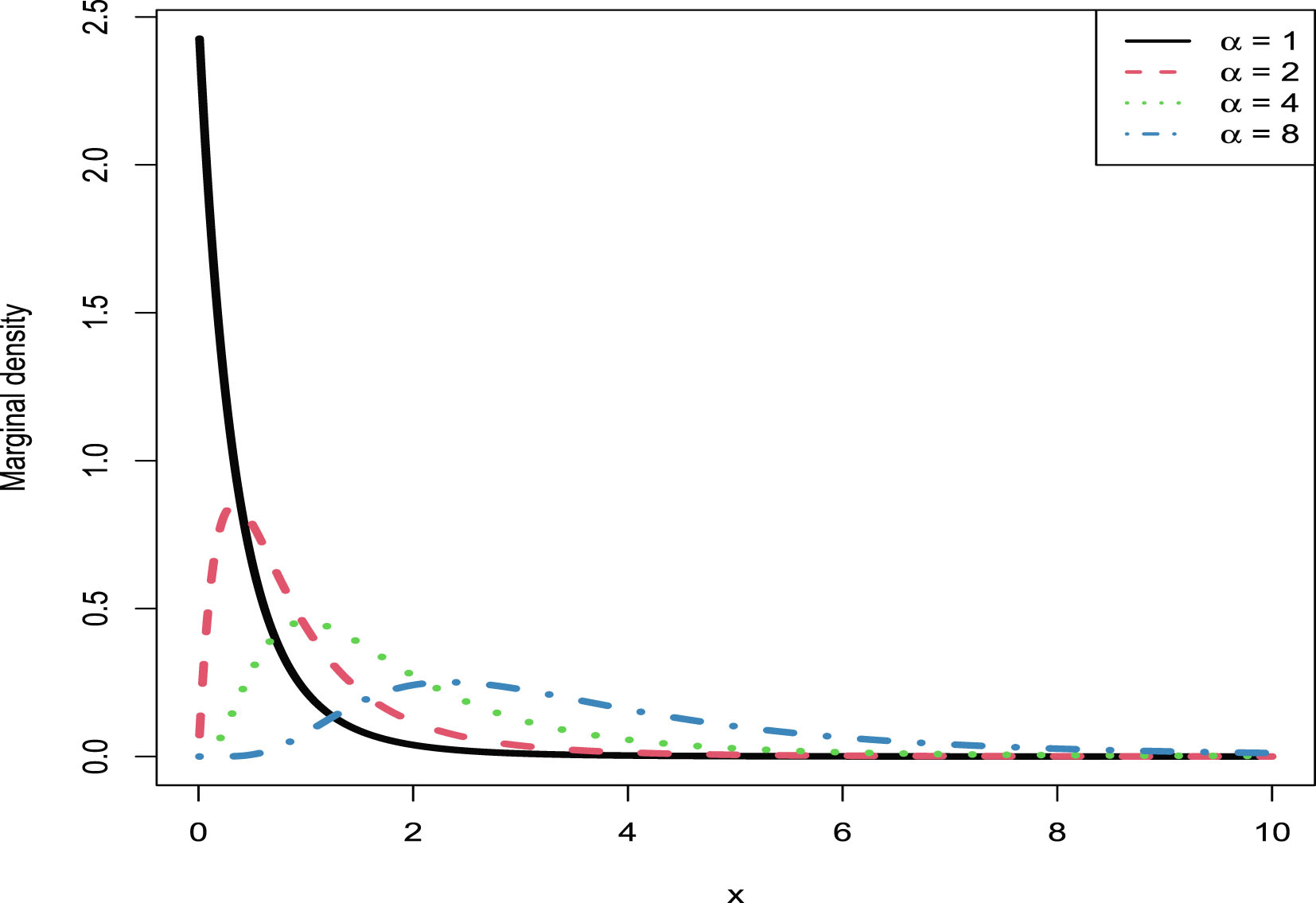

For more results about the gamma and inverse gamma distributions, we refer readers to Zhang and Zhang (2022). Positive, continuous, and right-skewed data are fitted by a mixture of gamma and inverse gamma distributions. For 16 hierarchical models of gamma and inverse gamma distributions, there are only 8 of them that have conjugate priors. They first discuss some common typical problems for the 8 hierarchical models that do not have conjugate priors. Then they calculate Bayesian posterior densities and marginal densities of the 8 hierarchical models that have conjugate priors. After that, they discuss relations among the 8 analytical marginal densities. Furthermore, they find some relations among the random variables of the marginal densities and the beta densities. Moreover, they discuss random variable generations for the gamma and inverse gamma distributions by using the R software. In addition, some numerical simulations are performed to illustrate four aspects: the plots of marginal densities, the generations of random variables from the marginal density, the transformations of moment estimators of hyperparameters of a hierarchical model, and the conclusions about the properties of the 8 marginal densities that do not have a closed form. Finally, they have illustrated their method by a real data example, in which the original and transformed data are fitted by the marginal density with different hyperparameters.

1.3 Hierarchical Models with Positive Parameters

In this section, we will introduce 7 hierarchical models with positive parameters. The hierarchical models are in the following general form:

(1.1)

where

(1.1)

where  are hyperparameters to be estimated,

are hyperparameters to be estimated,  ,

,  , or

, or  in this book,

in this book,  is the unknown parameter of interest,

is the unknown parameter of interest,  is the distribution of

is the distribution of  with parameter

with parameter  , and

, and  is the prior distribution of

is the prior distribution of  with hyperparameters

with hyperparameters  . It is useful to point out that some hyperparameters may exist in

. It is useful to point out that some hyperparameters may exist in  , and thus

, and thus  is more accurate. However, for simplicity, we will use

is more accurate. However, for simplicity, we will use  . The hierarchical

. The hierarchical  models that we will consider in this book are IG-IG (2.1), G-G (3.1), Exp-IG (4.1), N-IG (5.1), N-NIG (6.2), U-IG (7.1), and P-G (8.1).

models that we will consider in this book are IG-IG (2.1), G-G (3.1), Exp-IG (4.1), N-IG (5.1), N-NIG (6.2), U-IG (7.1), and P-G (8.1).

1.4 Estimating the Hyperparameters

In this section, we will introduce how to estimate the hyperparameters of the hierarchical model (1.1).

In empirical Bayes analysis, the hyperparameters are unknown, and the marginal distribution is used to estimate the hyperparameters from the observations. There are two common methods to estimate the hyperparameters by exploiting the marginal distribution: the moment method and the Maximum Likelihood Estimation (MLE) method. In this book, we will use the two methods to estimate the hyperparameters of the hierarchical model (1.1).

The moment method to estimate the hyperparameters is performed by equating the population moments to the sample moments. In general, if there are

hyperparameters, then we need to calculate the first

hyperparameters, then we need to calculate the first  origin moments of

origin moments of  ,

,  . We can use the iterated expectation method to calculate

. We can use the iterated expectation method to calculate  , that is,

, that is,

where

where  . Assume that

. Assume that  can be calculated. Then

can be calculated. Then

and this expectation may be calculated by noting

and this expectation may be calculated by noting  .

.

The MLE method to estimate the hyperparameters proceeds as follows. First, we calculate the likelihood function of  :

:

(1.2)

where

(1.2)

where

is the marginal distribution of the hierarchical model (1.1),

is the marginal distribution of the hierarchical model (1.1),  is the probability density function (pdf) or probability mass function (pmf) of

is the probability density function (pdf) or probability mass function (pmf) of  , and

, and  is the prior pdf of

is the prior pdf of  . Second, we obtain the log-likelihood function of

. Second, we obtain the log-likelihood function of  :

:

Third, taking partial derivatives with respect to  and setting them to zeros, we obtain

and setting them to zeros, we obtain

Fourth, after some algebra, the above equations reduce to

(1.3)

(1.3)

(1.4)

(1.4)

(1.5)

(1.5)

In general, the analytical calculations of the Maximum Likelihood Estimators (MLEs) of  by solving the equations (1.3), (1.4), ..., and (1.5) are impossible, and thus we have to resort to numerical solutions. Finally, we can exploit Newton’s method to solve the equations (1.3), (1.4), ..., and (1.5) and to numerically obtain the MLEs of

by solving the equations (1.3), (1.4), ..., and (1.5) are impossible, and thus we have to resort to numerical solutions. Finally, we can exploit Newton’s method to solve the equations (1.3), (1.4), ..., and (1.5) and to numerically obtain the MLEs of  . The iterative scheme of Newton’s method is

. The iterative scheme of Newton’s method is

where

where  is the Jacobian matrix of

is the Jacobian matrix of  , and

, and  . Note that the MLEs of

. Note that the MLEs of  are very sensitive to the initial estimators, and the moment estimators are usually proved to be good initial estimators. The Jacobian matrix can be calculated as follows:

are very sensitive to the initial estimators, and the moment estimators are usually proved to be good initial estimators. The Jacobian matrix can be calculated as follows:

where

where

It is important to note that in calculating (1.2), we have implicitly used the property of independency of  . And this independency property is guaranteed by

. And this independency property is guaranteed by

(1.6)

(1.6)

If (1.6) is true, then we have

that is,

that is,

(1.7)

(1.7)

Moreover, if (1.7) is true, then we have (1.6) is true. In other words, (1.6) and (1.7) are equivalent. In addition, (1.7) implies

(1.8)

(1.8)

It is also important to note that

(1.9)

can not guarantee (1.8), and vice versa.

(1.9)

can not guarantee (1.8), and vice versa.

1.5 Stein’s Loss Function

In this section, we will introduce Stein’s loss function and justify why Stein’s loss function is better than the squared error loss function on  .

.

The (weighted) squared error loss function has been used by many authors for the problem of estimating the variance,  , based on a random sample from a normal distribution with an unknown mean (see, for example, Maatta and Casella (1990); Stein (1964)). As pointed out by Casella and Berger (2002), the (weighted) squared error loss function penalizes equally for overestimation and underestimation, and it is fine for the unrestricted parameter space

, based on a random sample from a normal distribution with an unknown mean (see, for example, Maatta and Casella (1990); Stein (1964)). As pointed out by Casella and Berger (2002), the (weighted) squared error loss function penalizes equally for overestimation and underestimation, and it is fine for the unrestricted parameter space  . In the positive parameter space

. In the positive parameter space  where 0 is a natural lower bound, and the estimation problem is not symmetric, we should not select the (weighted) squared error loss function, but select a loss function which penalizes gross overestimation and gross underestimation equally, that is, an action

where 0 is a natural lower bound, and the estimation problem is not symmetric, we should not select the (weighted) squared error loss function, but select a loss function which penalizes gross overestimation and gross underestimation equally, that is, an action  will incur an infinite loss when it tends to 0 or ∞. Stein’s loss function has this property, and hence it is recommended to use for the positive parameter space by many authors (see for instance Li et al. (2025); Shi et al. (2025); Zhang (2025); Sun et al. (2024); Zhang et al. (2024); Sun et al. (2021); Bobotas and Kourouklis (2010); Zhang et al. (2019b); Xie et al. (2018); Zhang et al. (2018); Zhang (2017); Oono and Shinozaki (2006); Petropoulos and Kourouklis (2005); Parsian and Nematollahi (1996); Brown (1990, 1968); James and Stein (1961)).

will incur an infinite loss when it tends to 0 or ∞. Stein’s loss function has this property, and hence it is recommended to use for the positive parameter space by many authors (see for instance Li et al. (2025); Shi et al. (2025); Zhang (2025); Sun et al. (2024); Zhang et al. (2024); Sun et al. (2021); Bobotas and Kourouklis (2010); Zhang et al. (2019b); Xie et al. (2018); Zhang et al. (2018); Zhang (2017); Oono and Shinozaki (2006); Petropoulos and Kourouklis (2005); Parsian and Nematollahi (1996); Brown (1990, 1968); James and Stein (1961)).

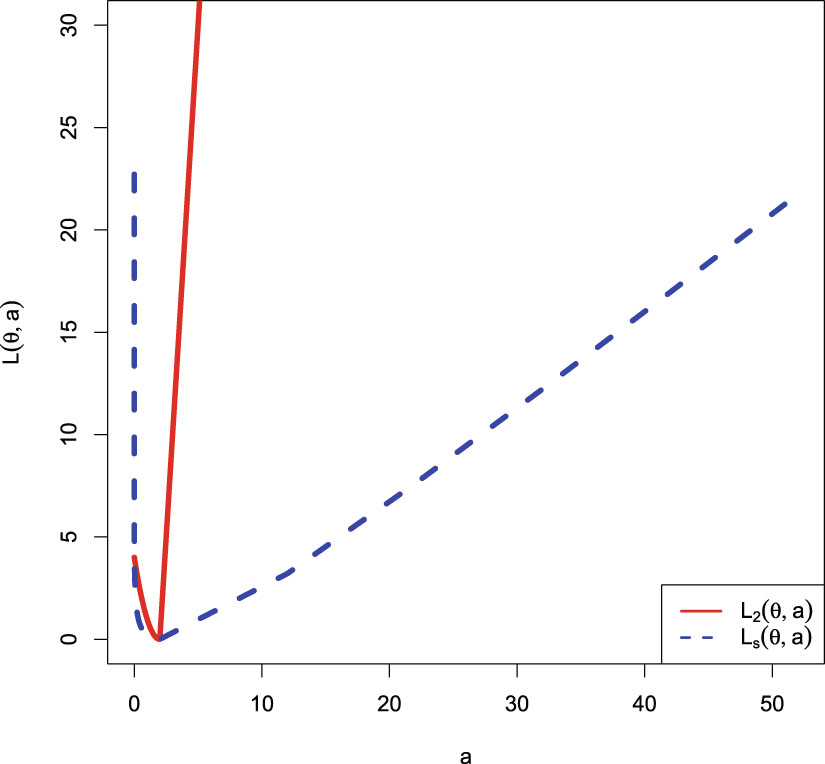

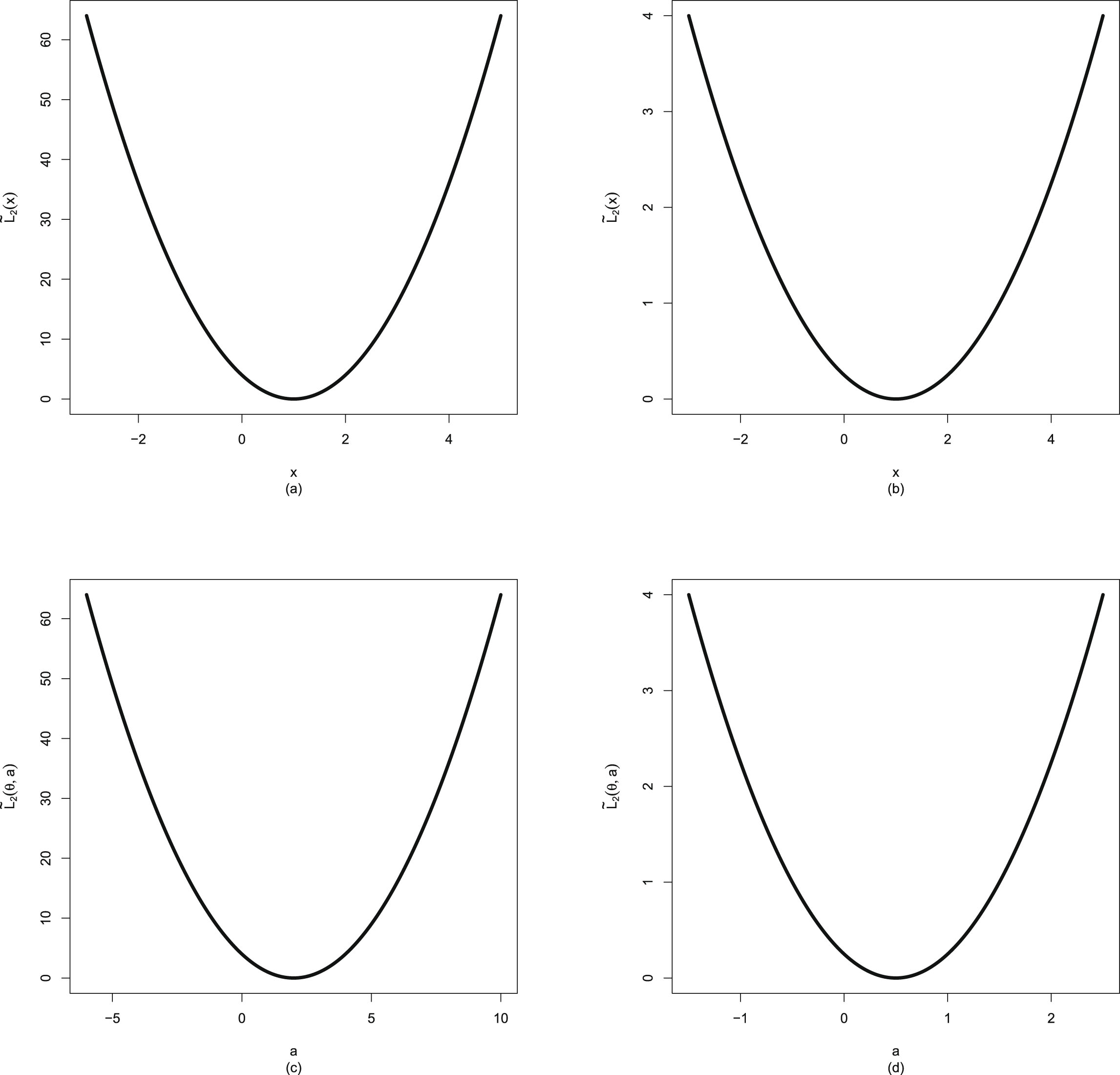

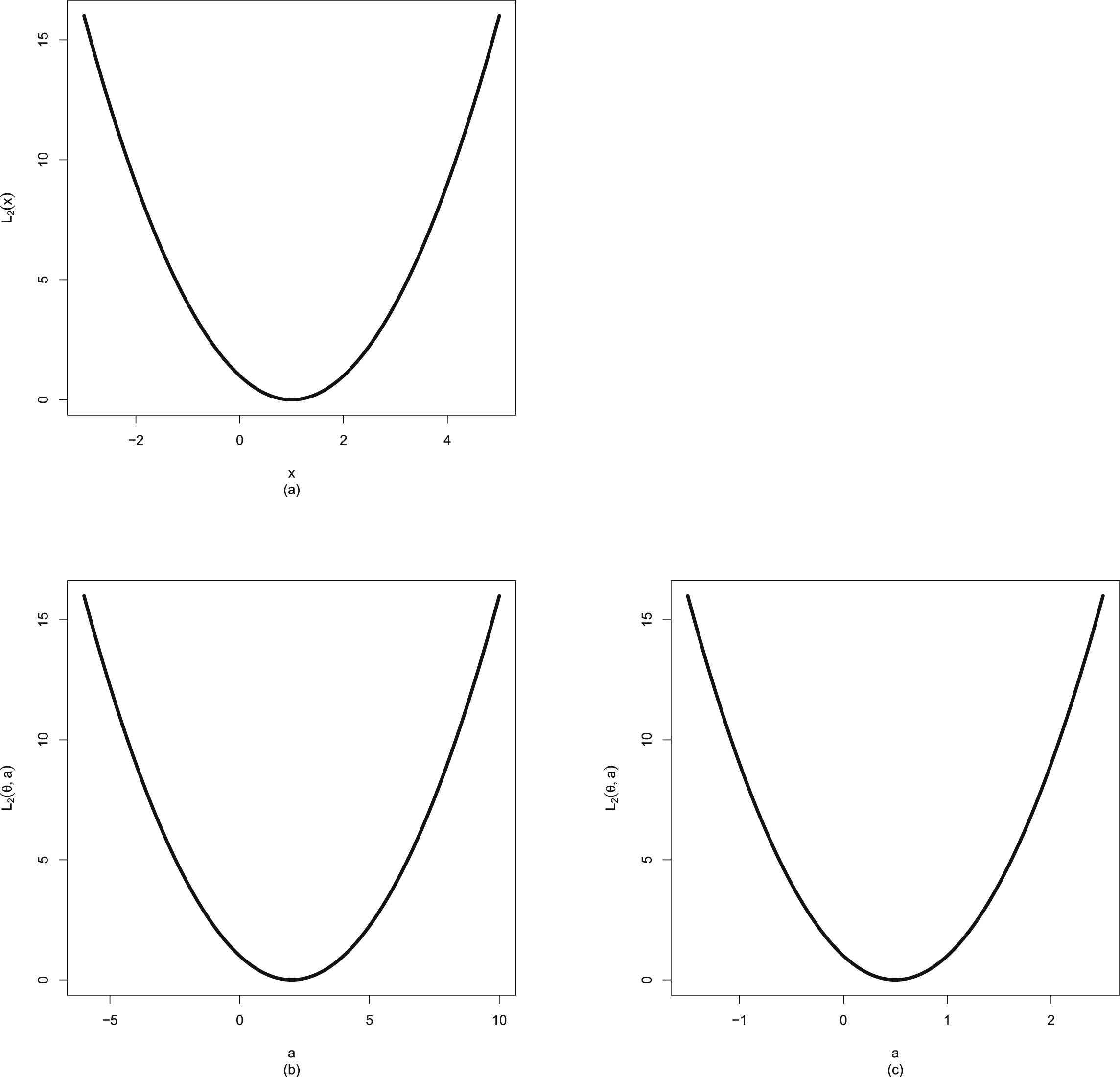

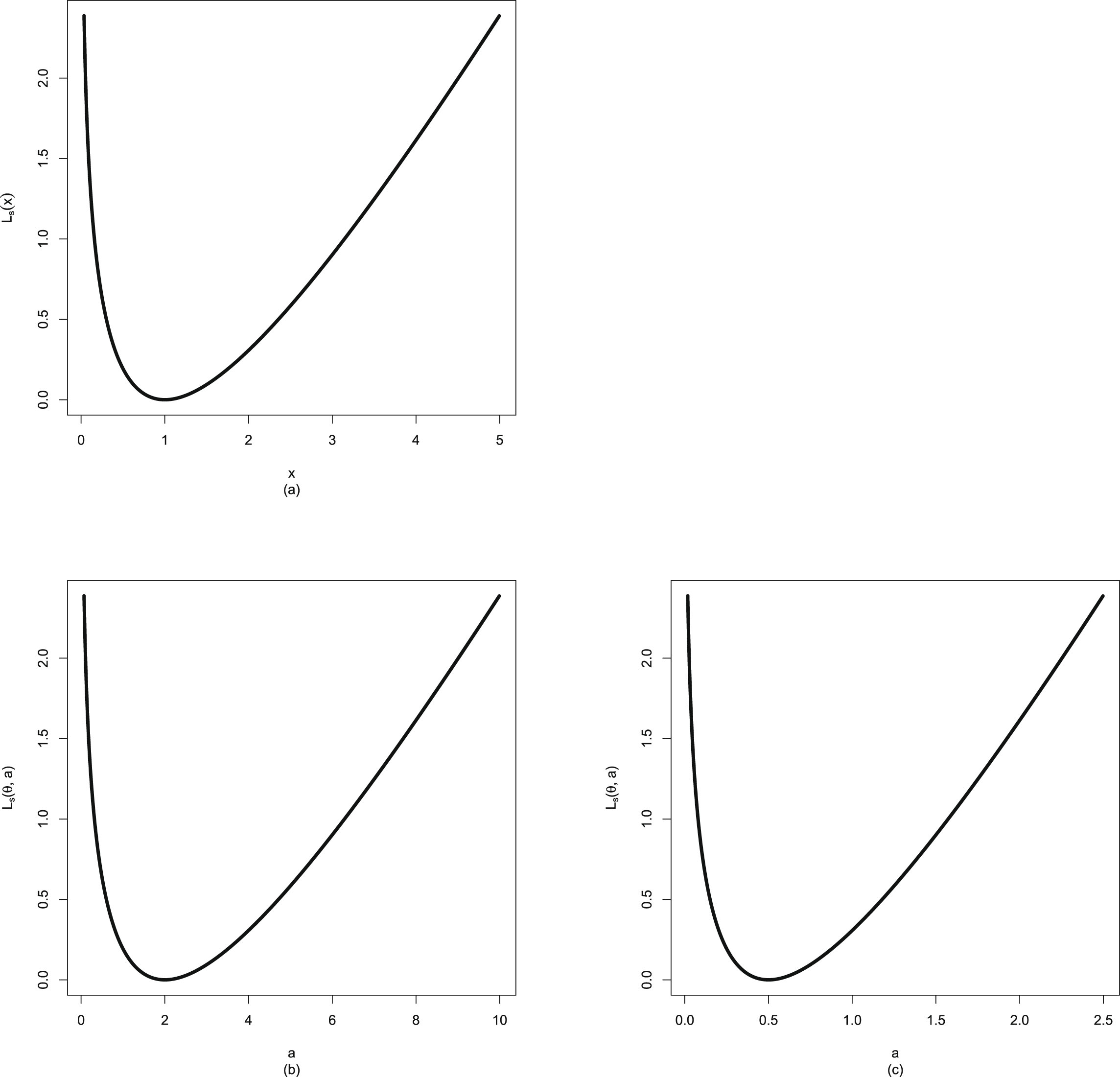

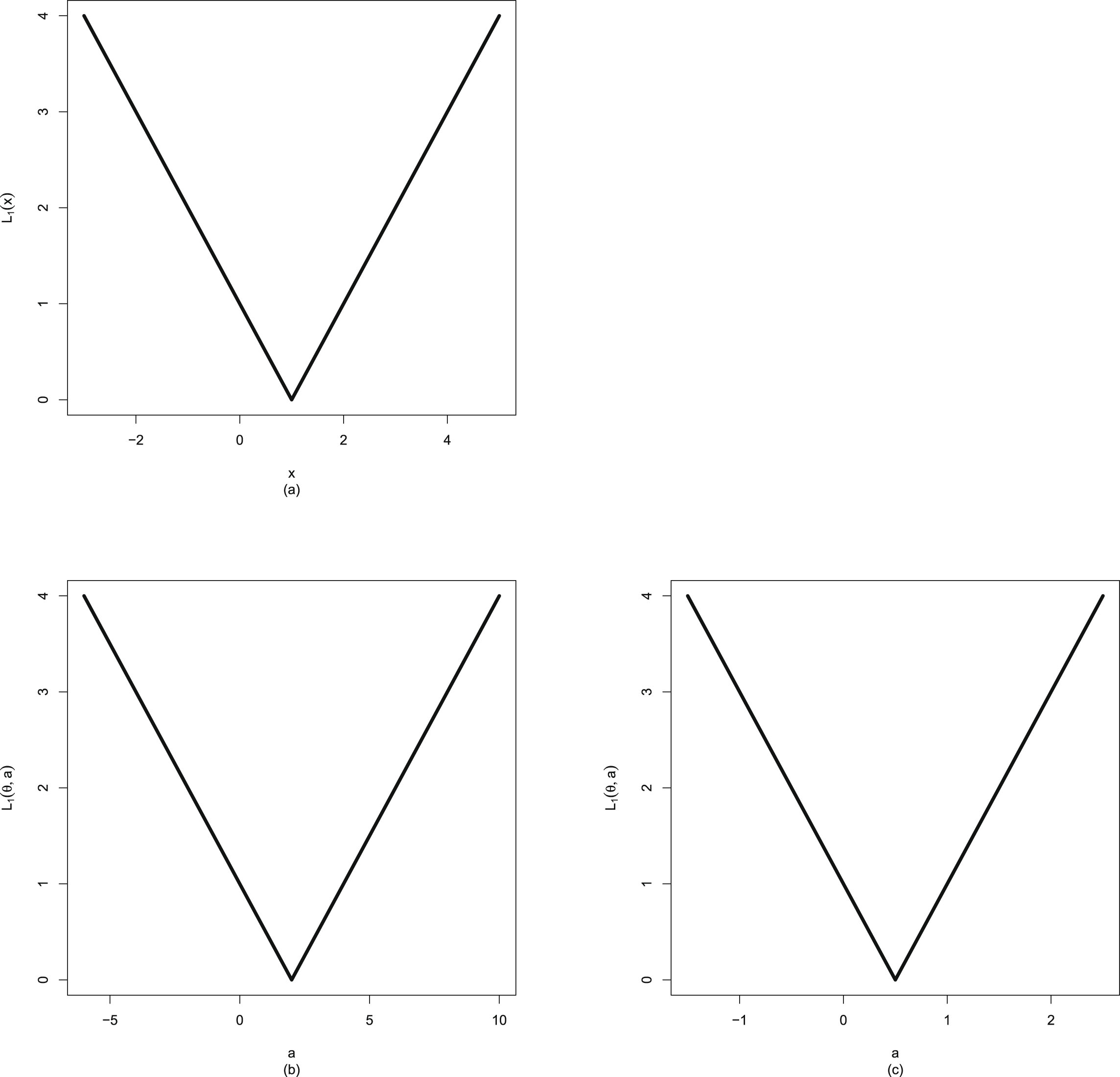

Now, let us give the justifications of why Stein’s loss function is better than the squared error loss function on  . Stein’s loss function is given by

. Stein’s loss function is given by

(1.10)

while the squared error loss function is given by

(1.10)

while the squared error loss function is given by

(1.11)

(1.11)

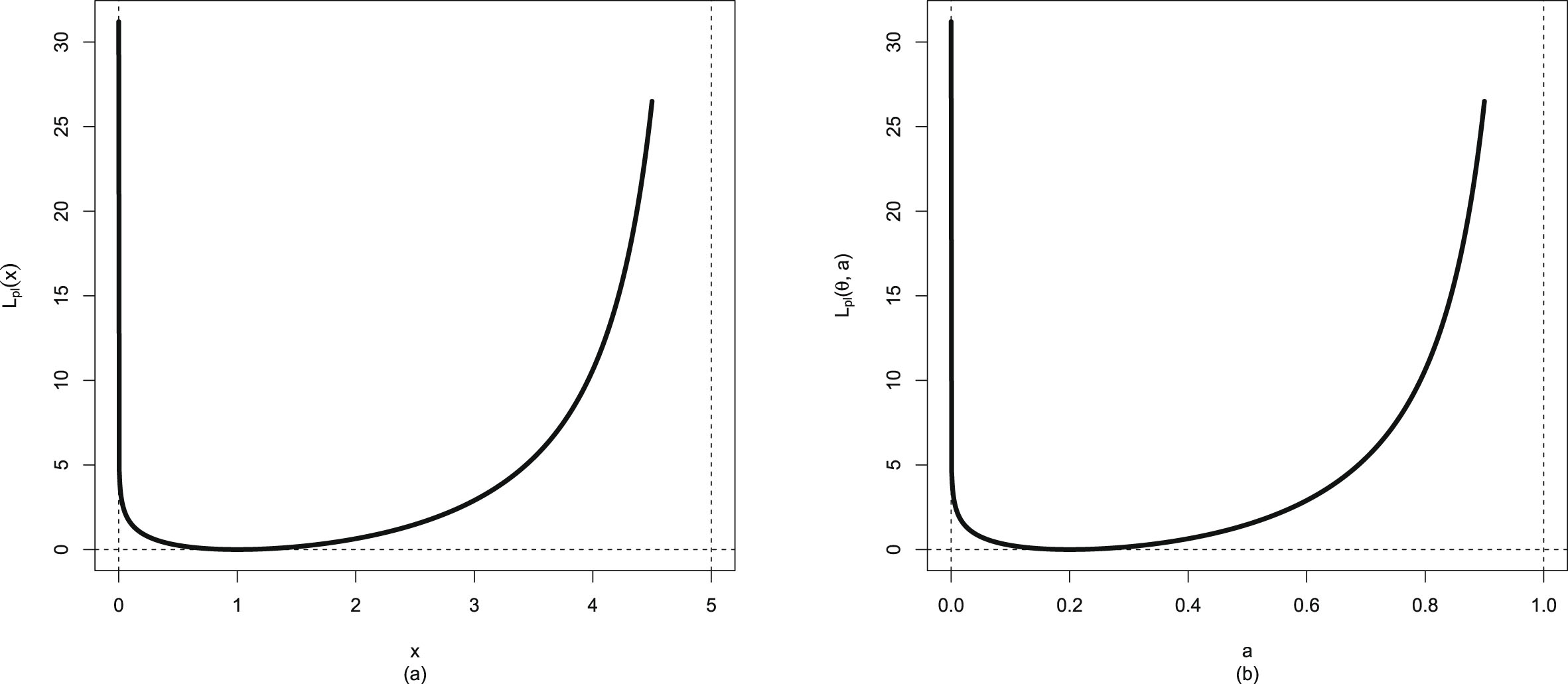

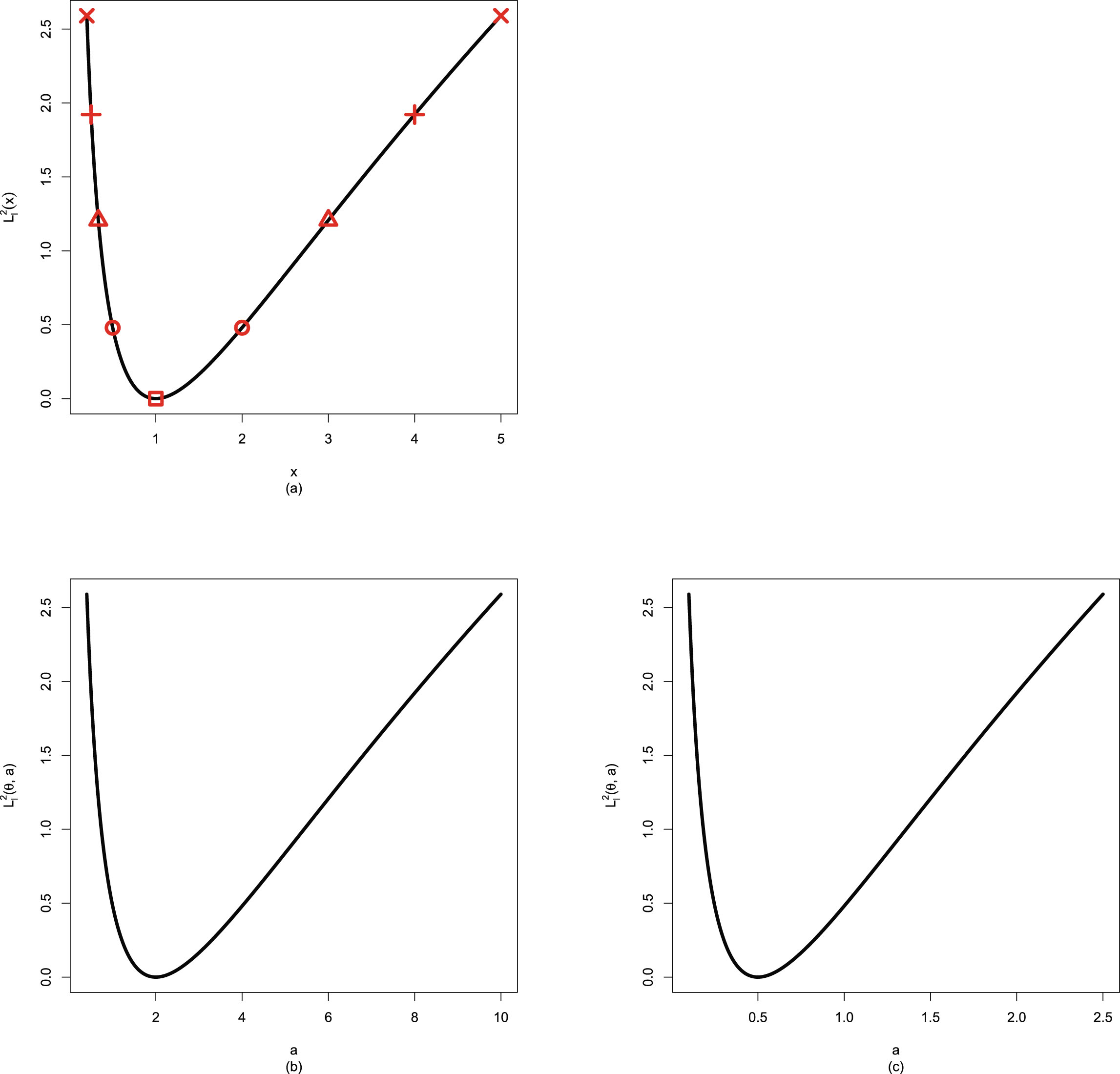

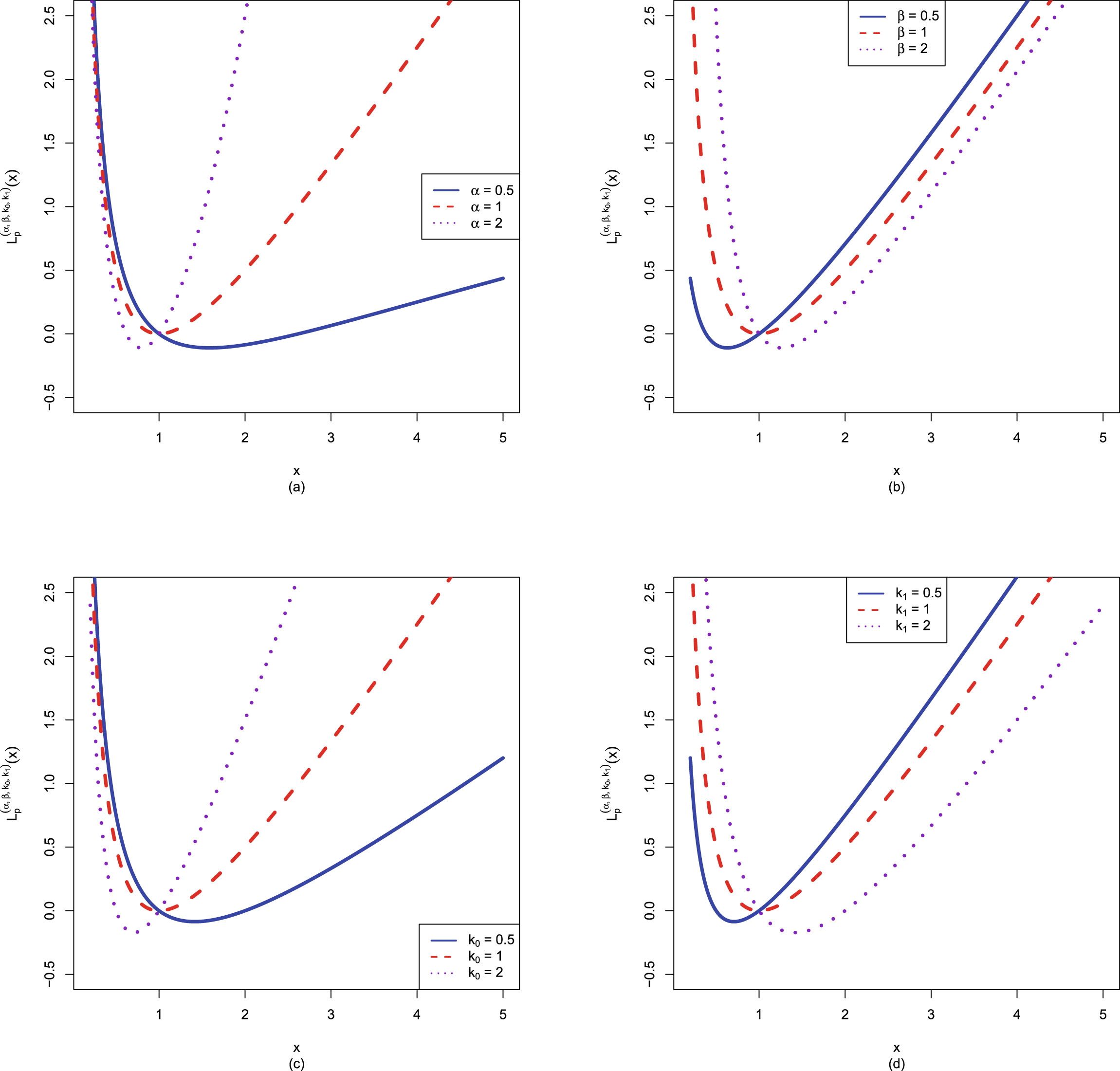

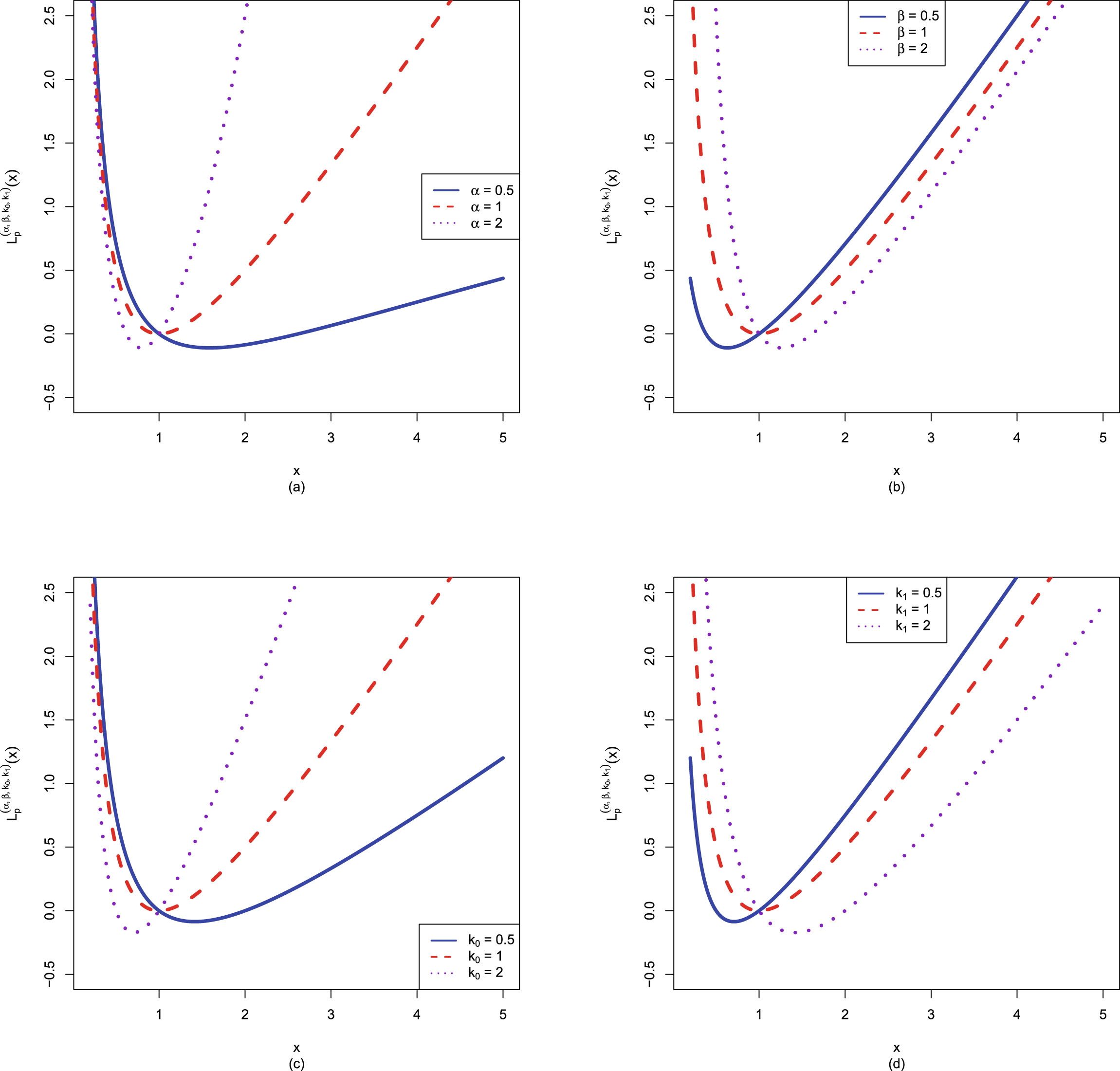

Note that on the positive parameter space  , Stein’s loss function penalizes gross overestimation and gross underestimation equally, that is, an action

, Stein’s loss function penalizes gross overestimation and gross underestimation equally, that is, an action  will incur an infinite loss when it tends to 0 or ∞. However, the squared error loss function does not penalize gross overestimation and gross underestimation equally, as an action

will incur an infinite loss when it tends to 0 or ∞. However, the squared error loss function does not penalize gross overestimation and gross underestimation equally, as an action  will incur a finite loss (in fact

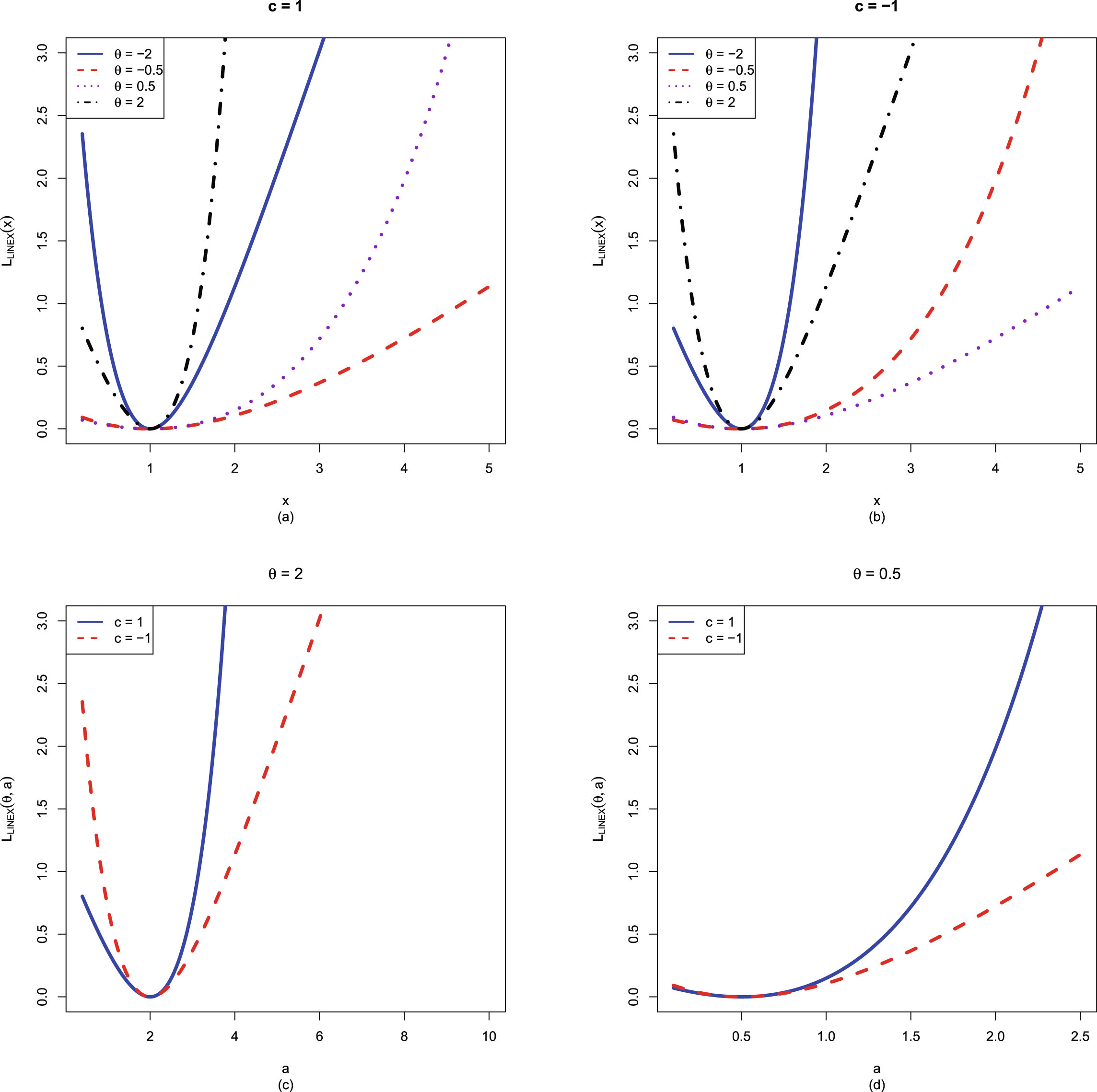

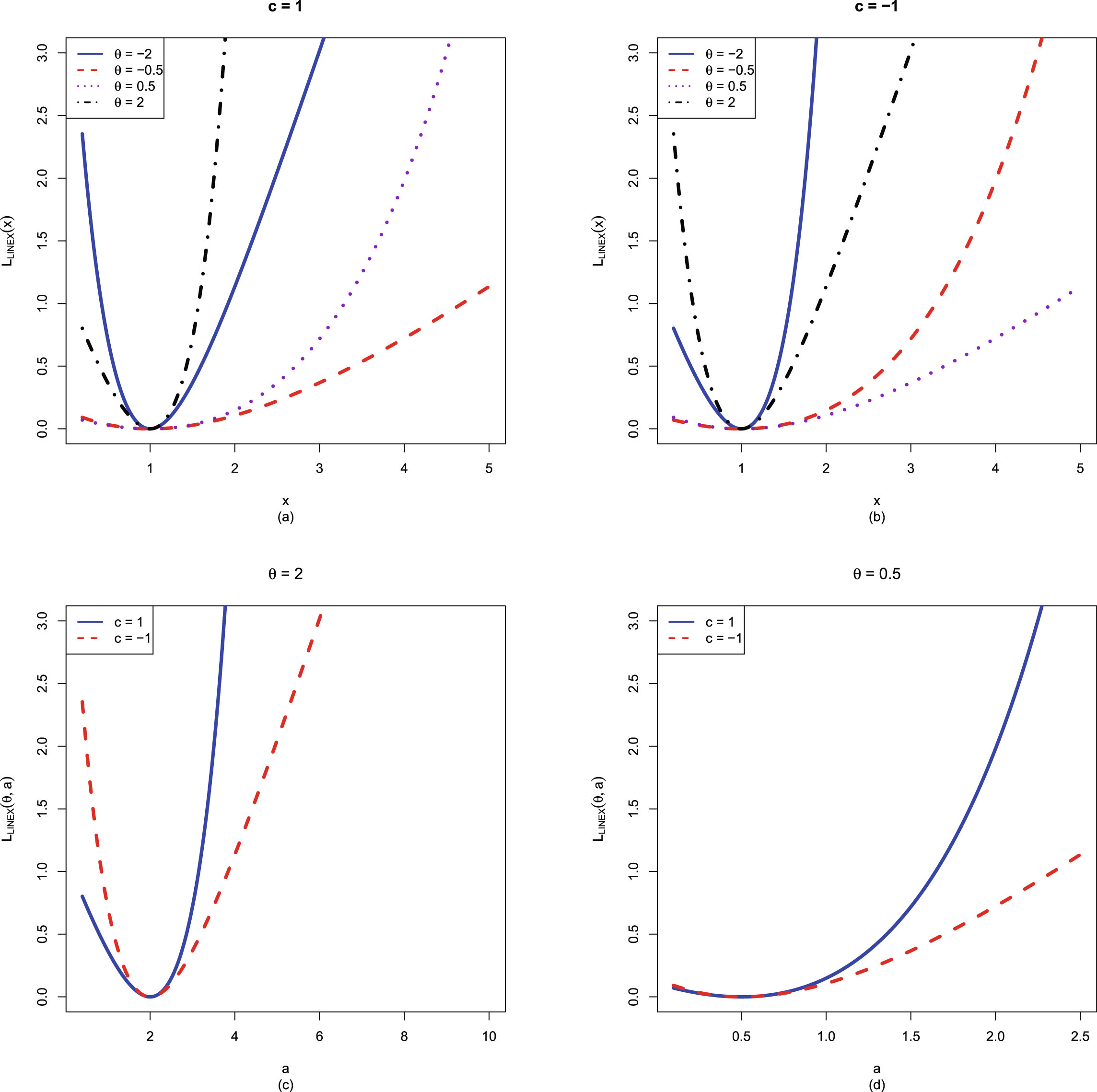

will incur a finite loss (in fact  ) when it tends to 0 and incur an infinite loss when it tends to ∞. Figure 1.1 shows Stein’s loss function and the squared error loss function on

) when it tends to 0 and incur an infinite loss when it tends to ∞. Figure 1.1 shows Stein’s loss function and the squared error loss function on  when

when  .

.

FIG. 1.1 — I: Stein’s loss function and the squared error loss function on  when

when  .

.

For more details of the squared error loss function, the weighted squared error loss function, and Stein’s loss function, we refer readers to relevant subsections in chapter 9.

1.6 The Bayes Estimators and the PESLs

In this section, we will calculate the Bayes estimator of  under Stein’s loss function

under Stein’s loss function  , the Bayes estimator of

, the Bayes estimator of  under the usual squared error loss function

under the usual squared error loss function  , and the Posterior Expected Stein’s Losses (PESLs) at

, and the Posterior Expected Stein’s Losses (PESLs) at  and

and  (

( and

and  ) for any hierarchical model such that the posterior expectations exist.

) for any hierarchical model such that the posterior expectations exist.

Similar to Zhang (2017), the two Bayes estimators and the two PESLs are respectively given by

(1.12)

(1.12)

(1.13)

(1.13)

(1.14)

(1.14)

(1.15)

(1.15)

To calculate the two Bayes estimators and the two PESLs, it remains to calculate

It has been shown in Zhang (2017) that

(1.16)

by exploiting Jensen’s inequality. Moreover,

(1.16)

by exploiting Jensen’s inequality. Moreover,

(1.17)

which is a direct consequence of the general methodology for finding a Bayes estimator. According to construction,

(1.17)

which is a direct consequence of the general methodology for finding a Bayes estimator. According to construction,  minimizes the Posterior Expected Stein’s Loss (PESL). In the simulations section and the real data section, we will exemplify the two inequalities (1.16) and (1.17).

minimizes the Posterior Expected Stein’s Loss (PESL). In the simulations section and the real data section, we will exemplify the two inequalities (1.16) and (1.17).

1.7 Theoretical Comparisons of the Bayes Estimators and the PESLs of Three Methods

In this section, similar to Sun et al. (2021), we will theoretically compare the Bayes estimators and the PESLs of the three methods (the oracle method, the moment method, and the MLE method).

Note that the subscripts 0, 1, and 2 below are for the oracle method, the moment method, and the MLE method, respectively. Similar to the derivations in Sun et al. (2021); Zhang (2017), the PESL functions of the three methods are respectively given by

for

for  .

.

Now we calculate the Bayes estimators  and

and  , and the PESLs

, and the PESLs  and

and

following the route of Sun et al. (2021); Zhang (2017). The Bayes estimators of

following the route of Sun et al. (2021); Zhang (2017). The Bayes estimators of  under Stein’s loss function,

under Stein’s loss function,

, minimize the corresponding PESL, that is,

, minimize the corresponding PESL, that is,

where

where  is the action space,

is the action space,  is an action (estimator),

is an action (estimator),  given by (1.10) is Stein’s loss function, and

given by (1.10) is Stein’s loss function, and  is the unknown parameter of interest. Similar to Sun et al. (2021); Zhang (2017), it is easy to obtain

is the unknown parameter of interest. Similar to Sun et al. (2021); Zhang (2017), it is easy to obtain

(1.18)

(1.18)

The Bayes estimators of  under the squared error loss function are given by

under the squared error loss function are given by

(1.19)

(1.19)

The PESLs evaluated at the Bayes estimators

are given by

are given by

(1.20)

(1.20)

The PESLs evaluated at the Bayes estimators

are given by

are given by

(1.21)

(1.21)

Similar to Sun et al. (2021), our primary objectives are to estimate the Bayes estimators and the PESLs

by the oracle method. However, the hyperparameters are unknown. The oracle method knows the hyperparameters only in simulations. But in reality, the hyperparameters are unknown.

by the oracle method. However, the hyperparameters are unknown. The oracle method knows the hyperparameters only in simulations. But in reality, the hyperparameters are unknown.

The good news is that we can actually obtain the Bayes estimators and the PESLs

by the moment method, and

by the moment method, and

by the MLE method, once the data

by the MLE method, once the data  are given.

are given.

To compare the moment method and the MLE method, we can compare the Bayes estimators and the PESLs by the two methods with those quantities by the oracle method in simulations. The method that produces closer Bayes estimators and PESLs to the ones by the oracle method in simulations, is a better method.

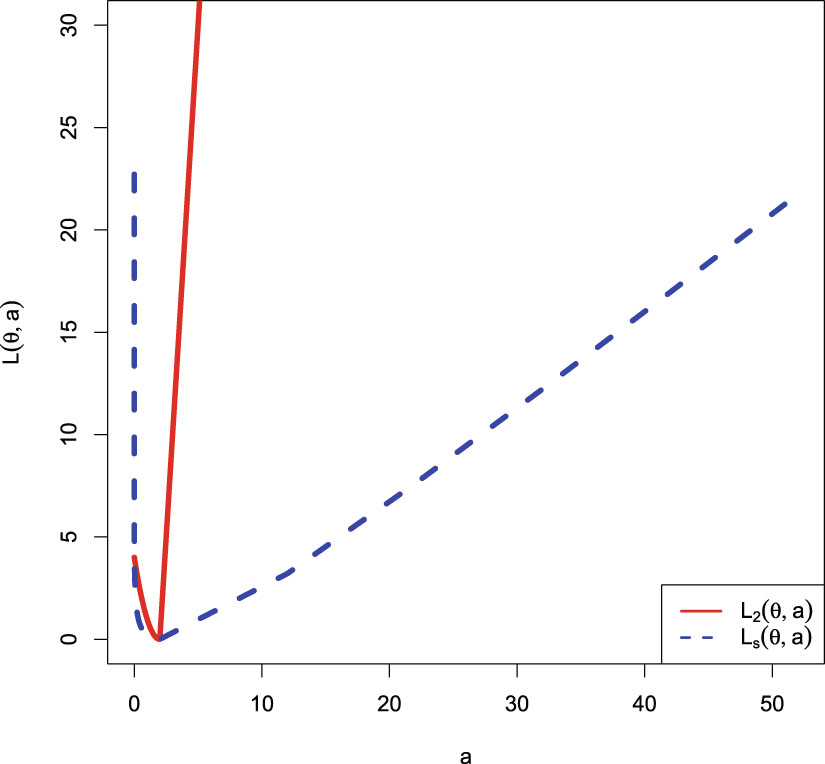

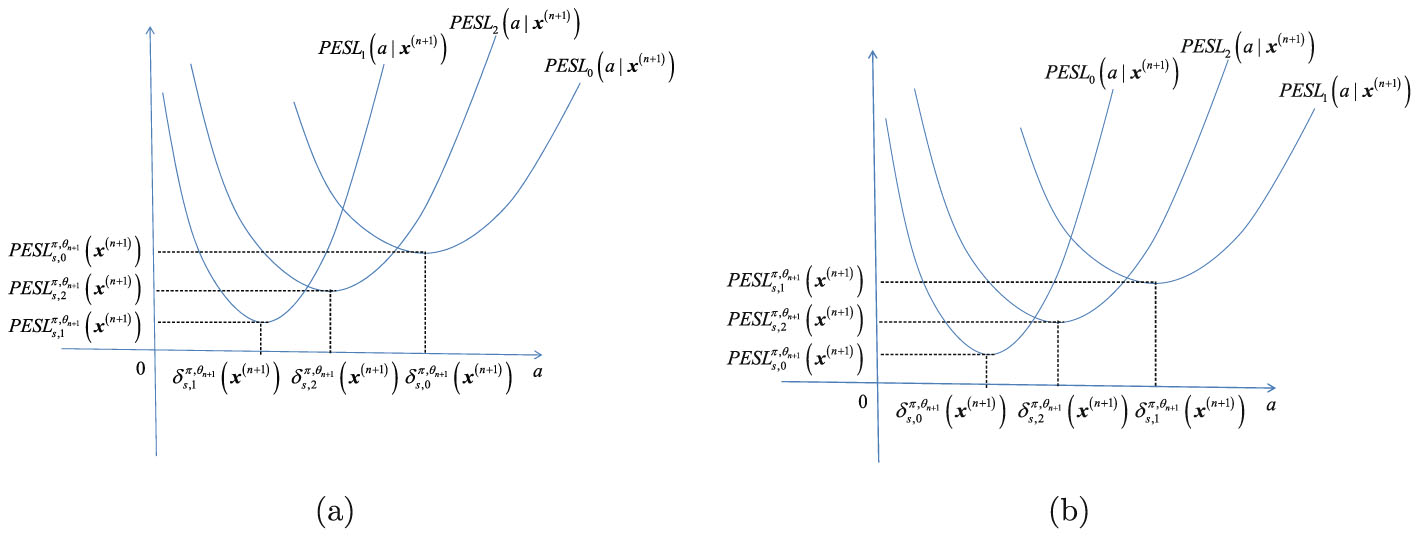

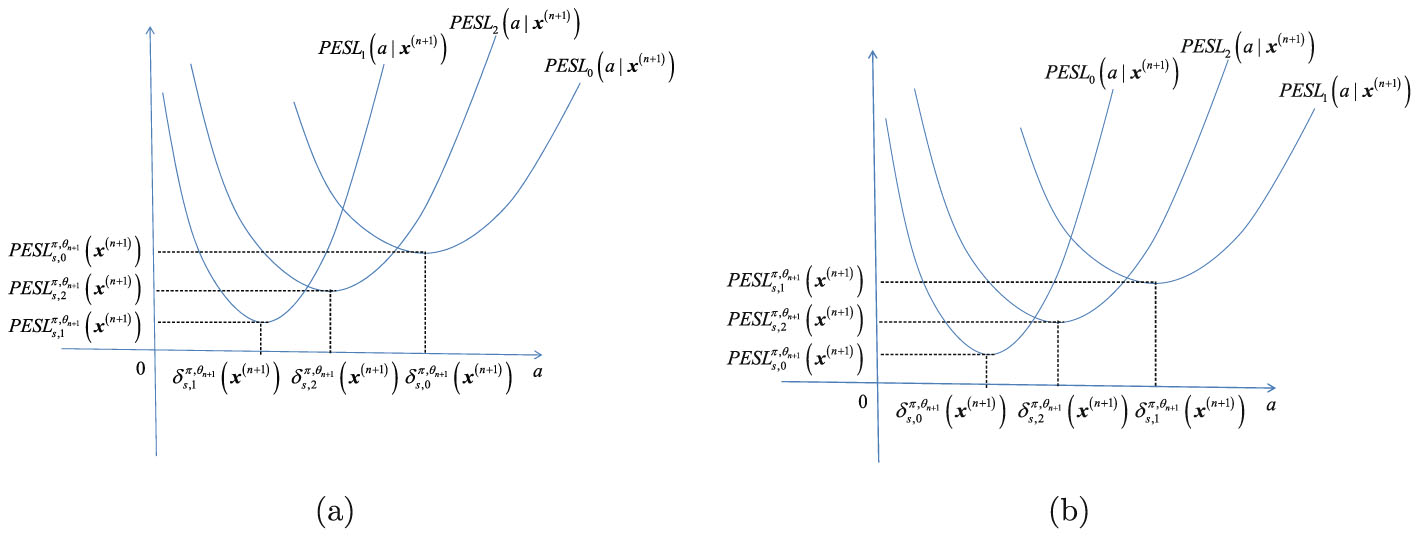

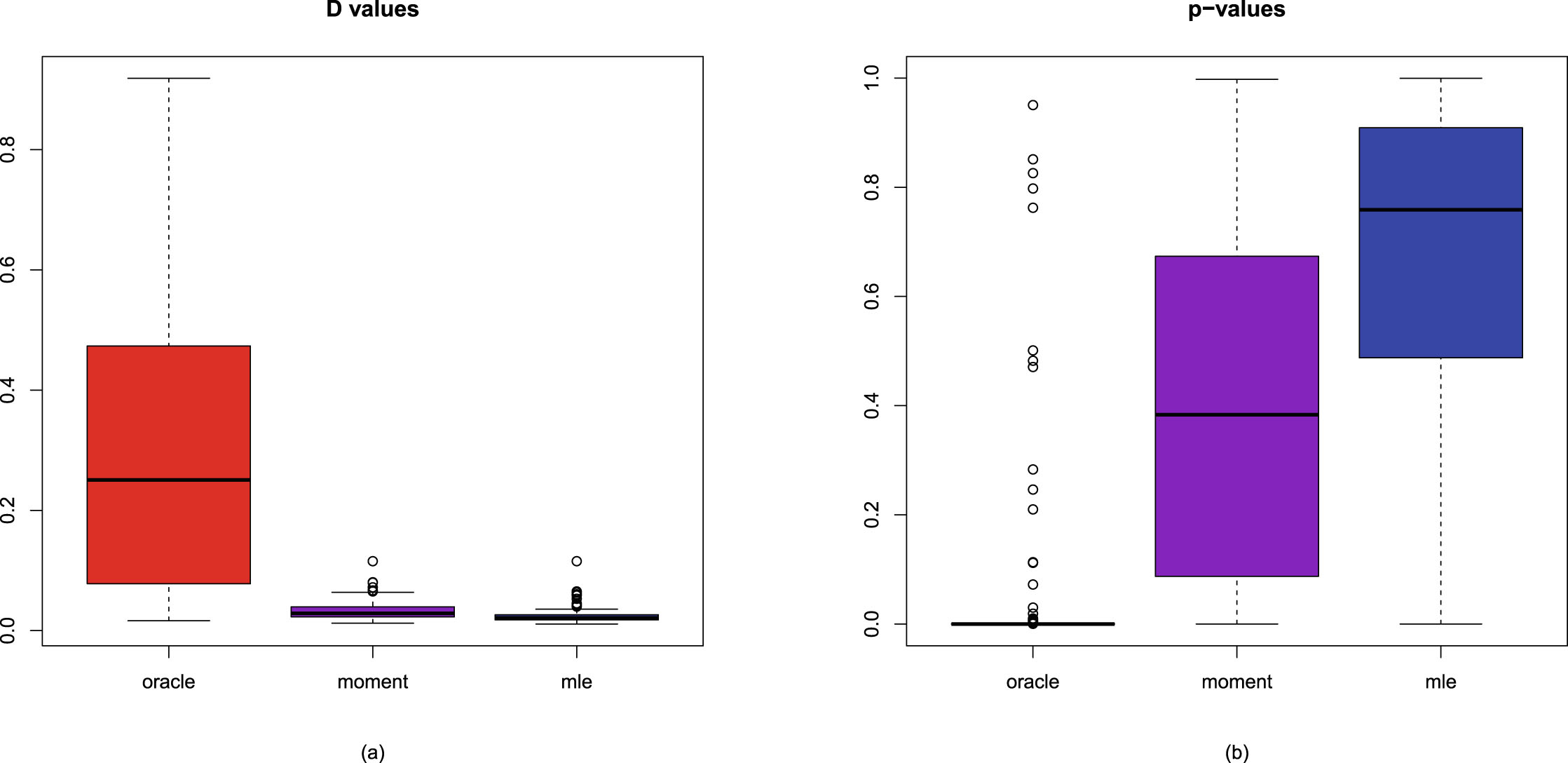

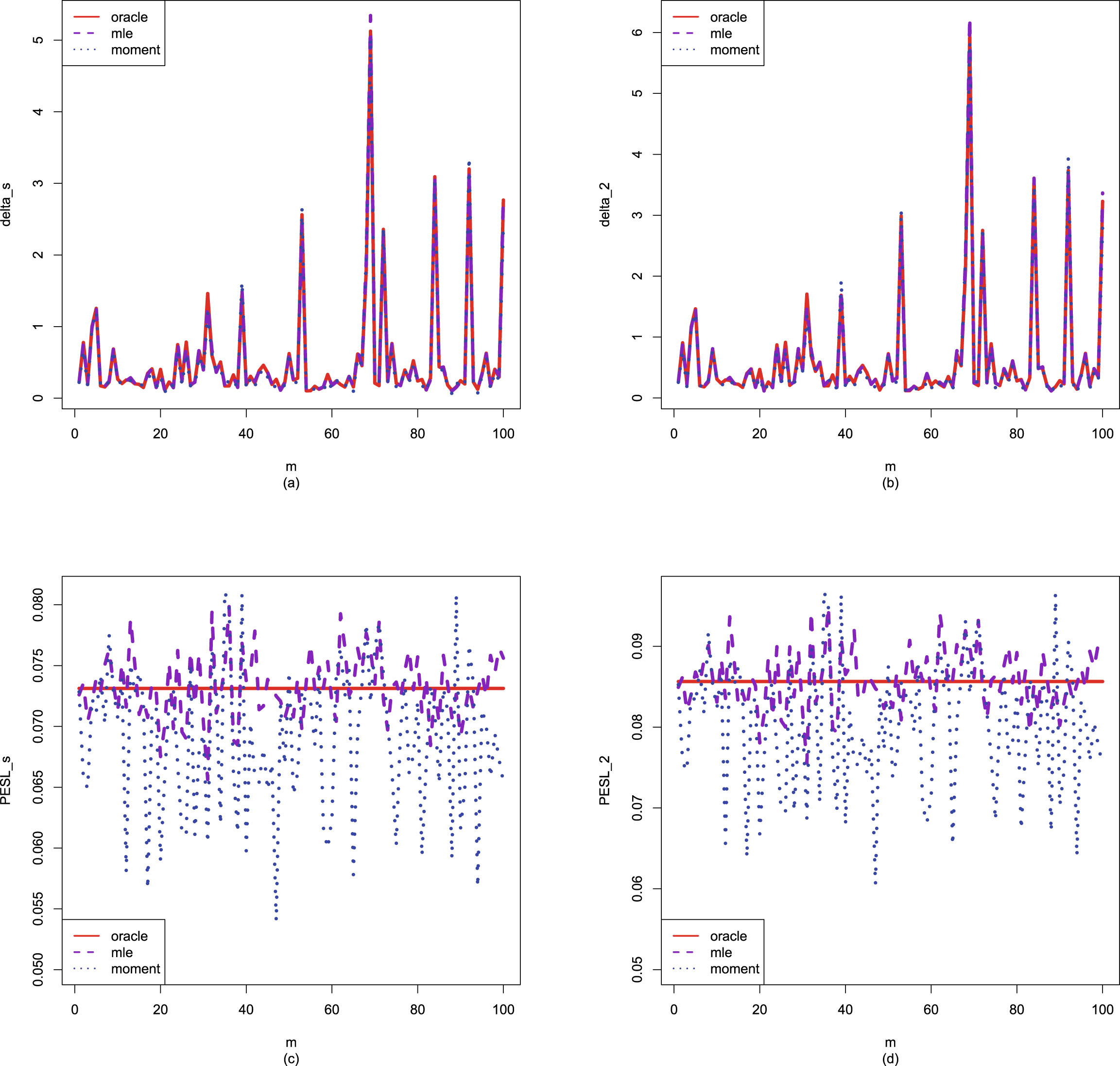

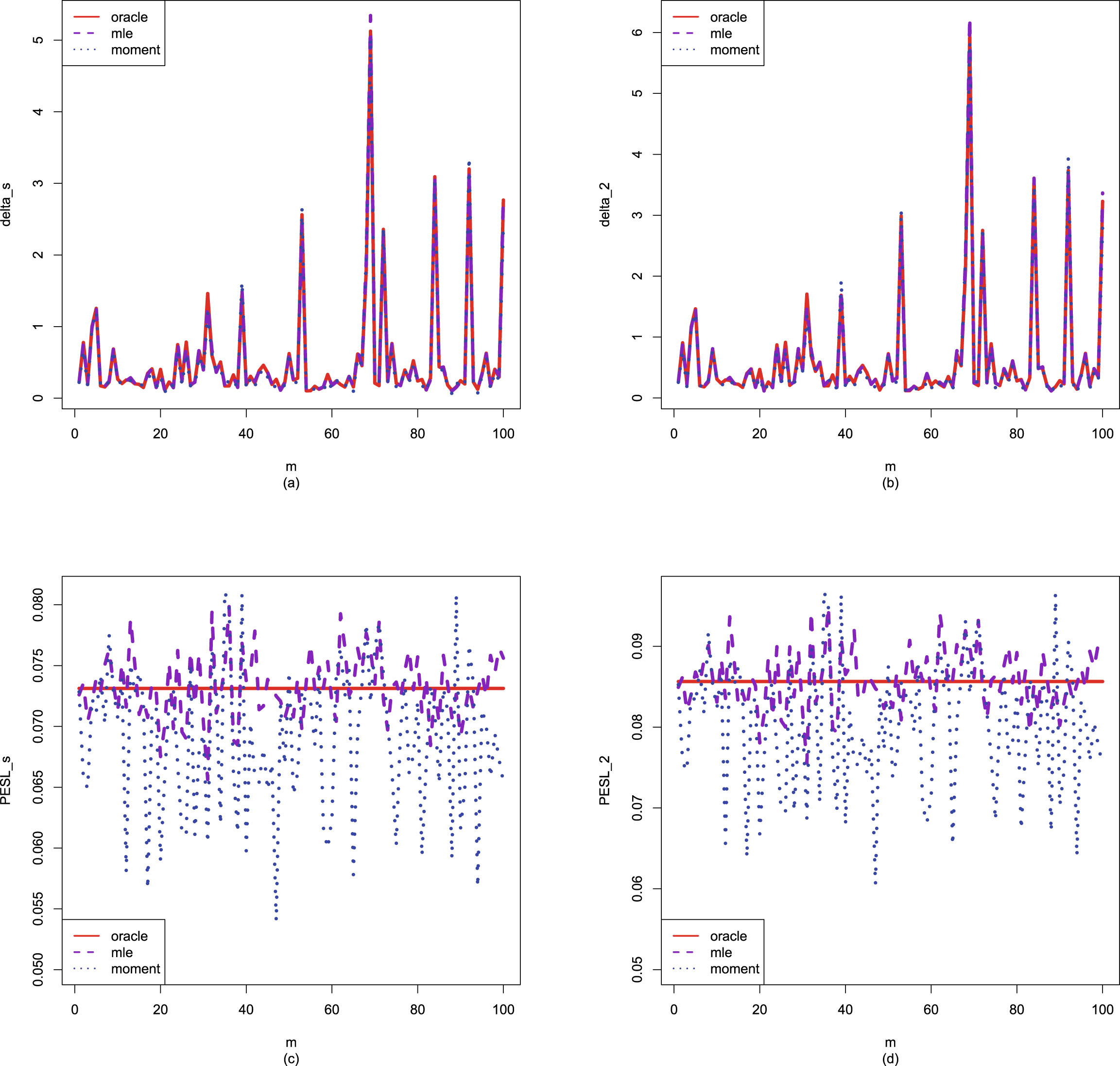

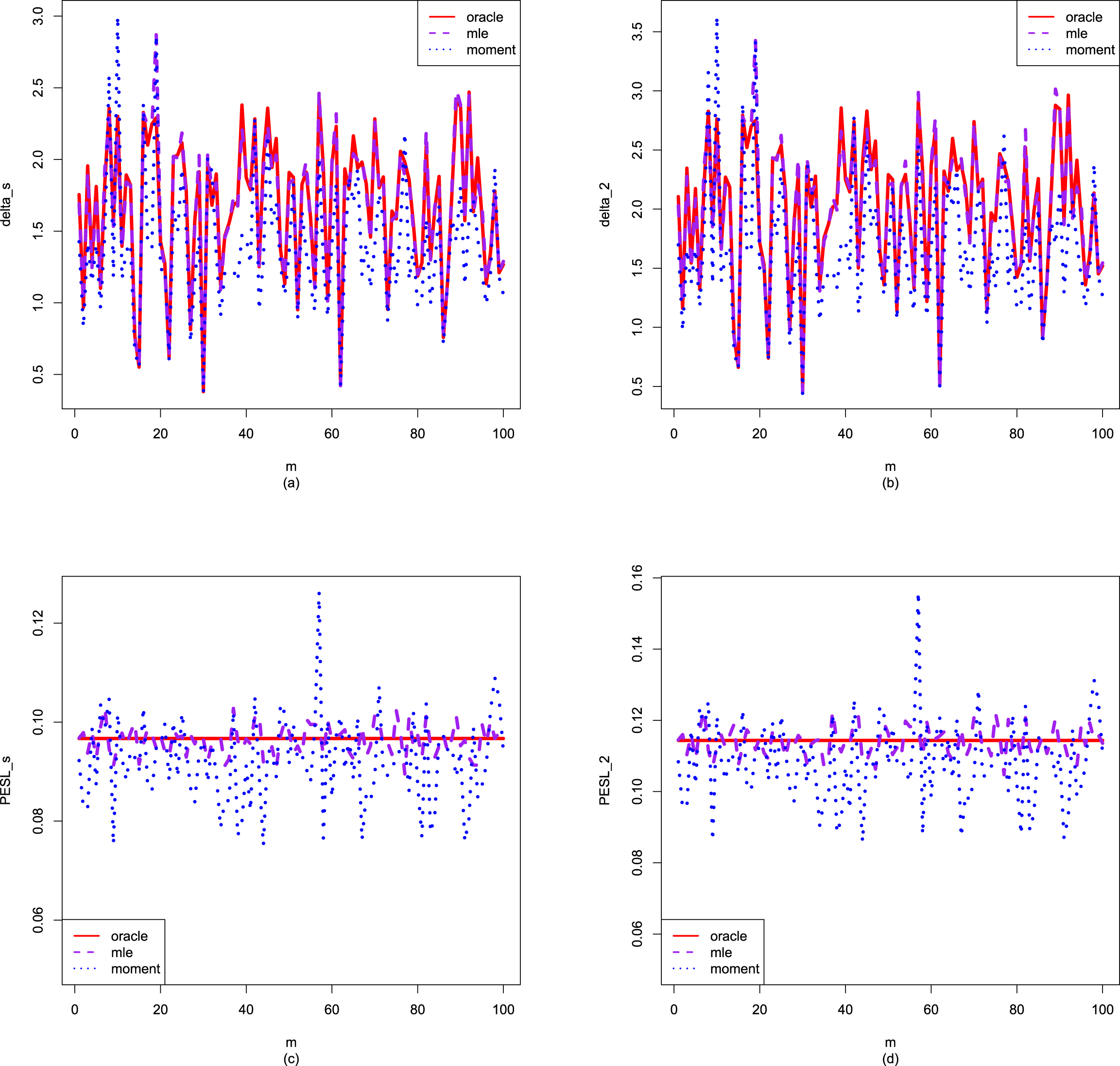

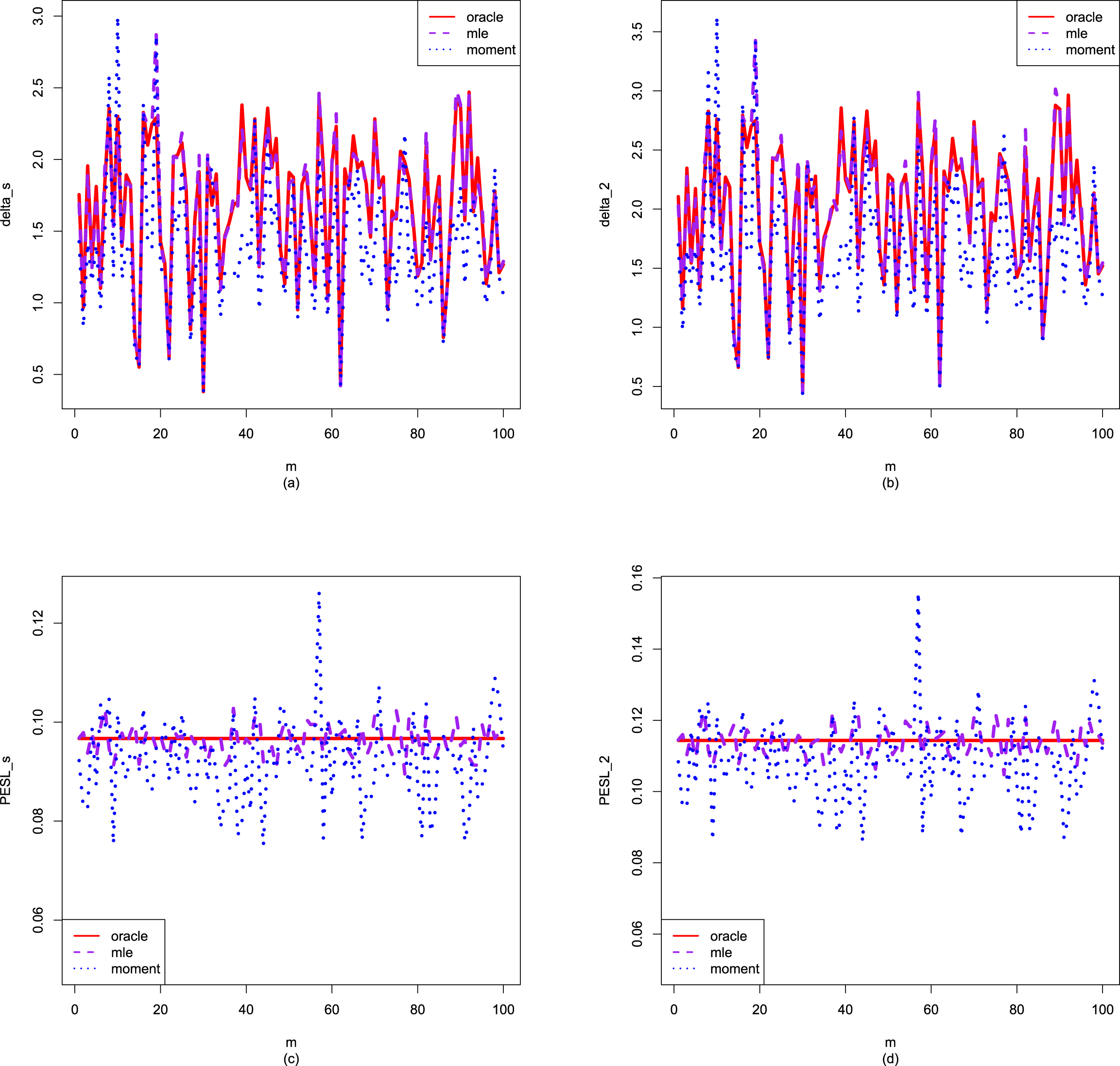

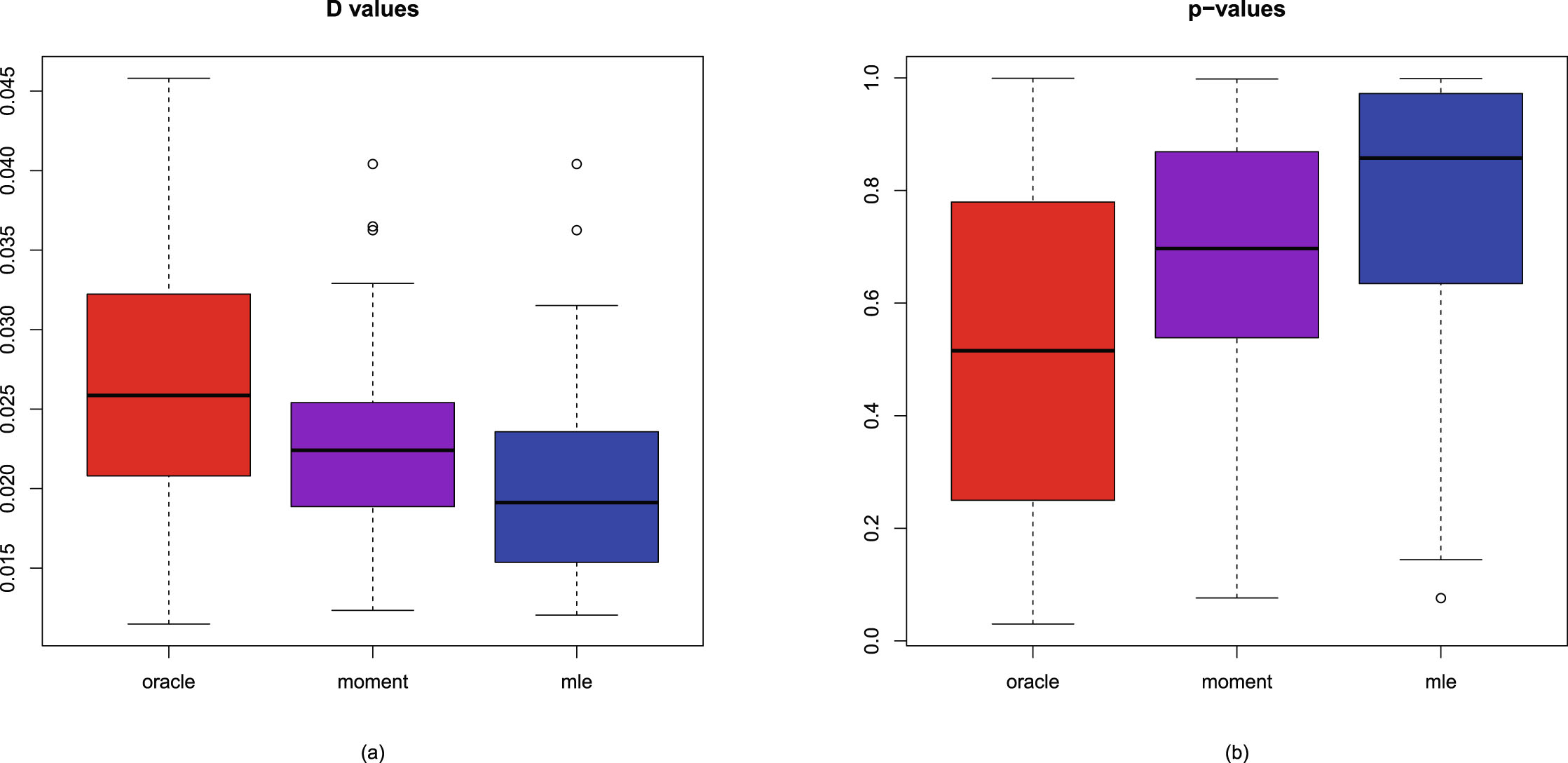

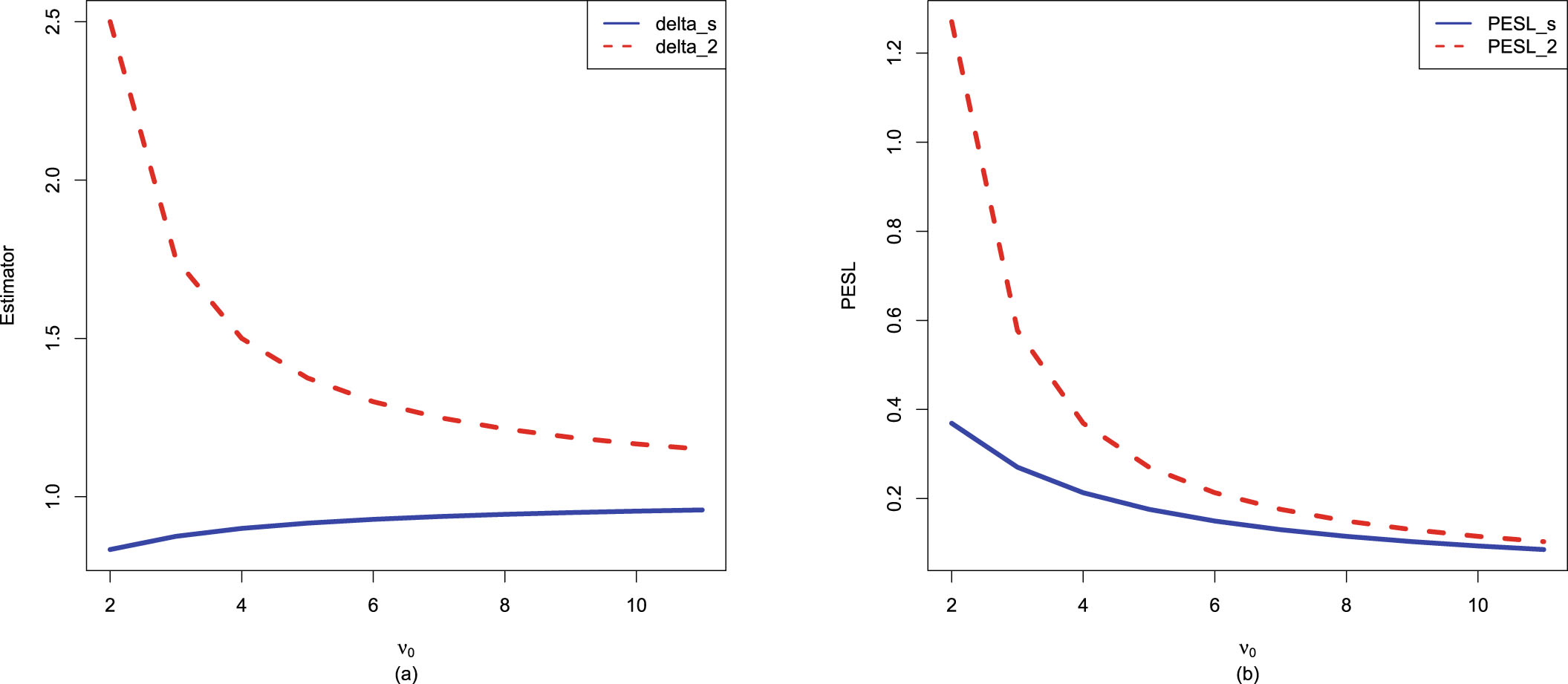

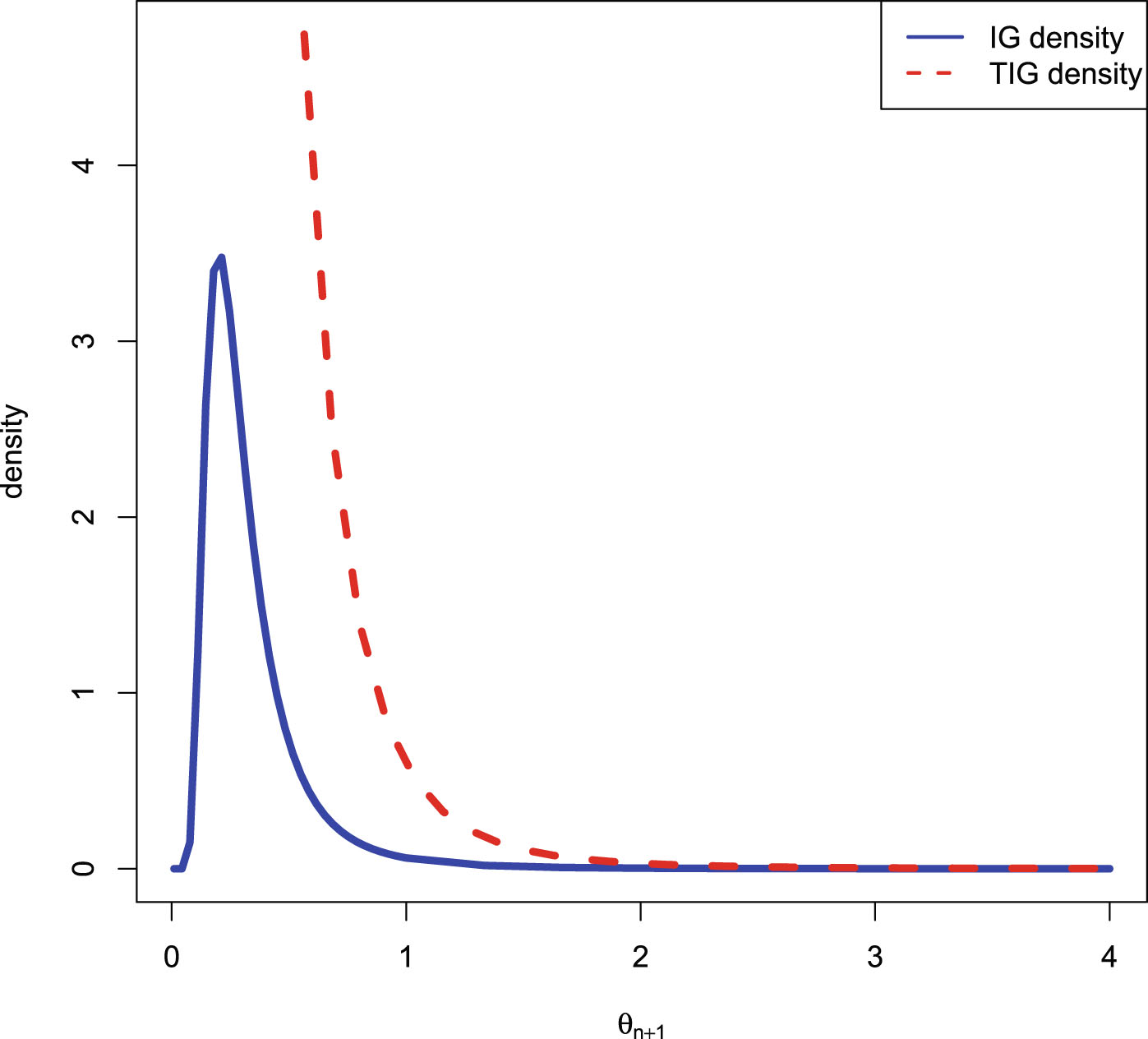

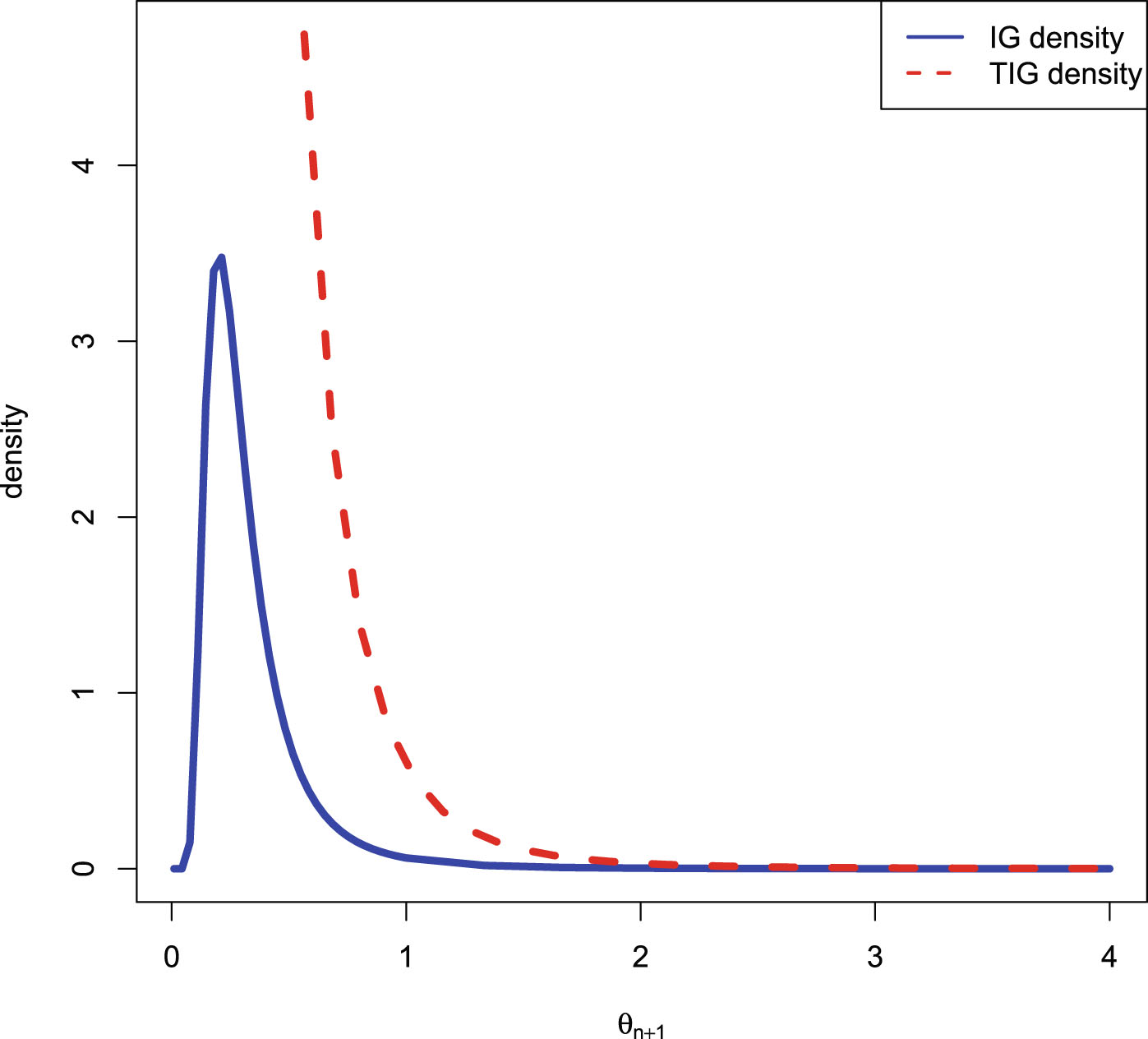

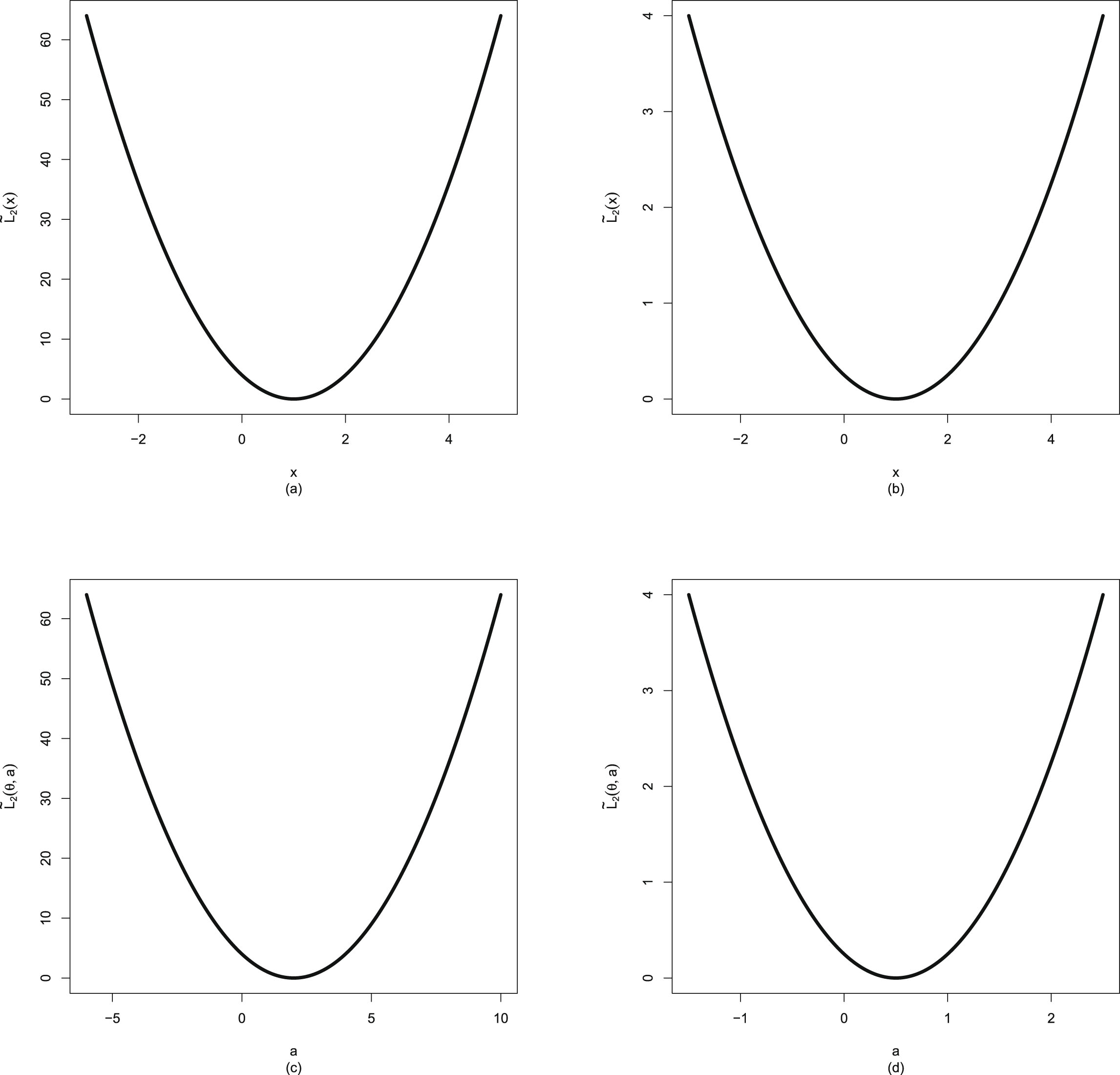

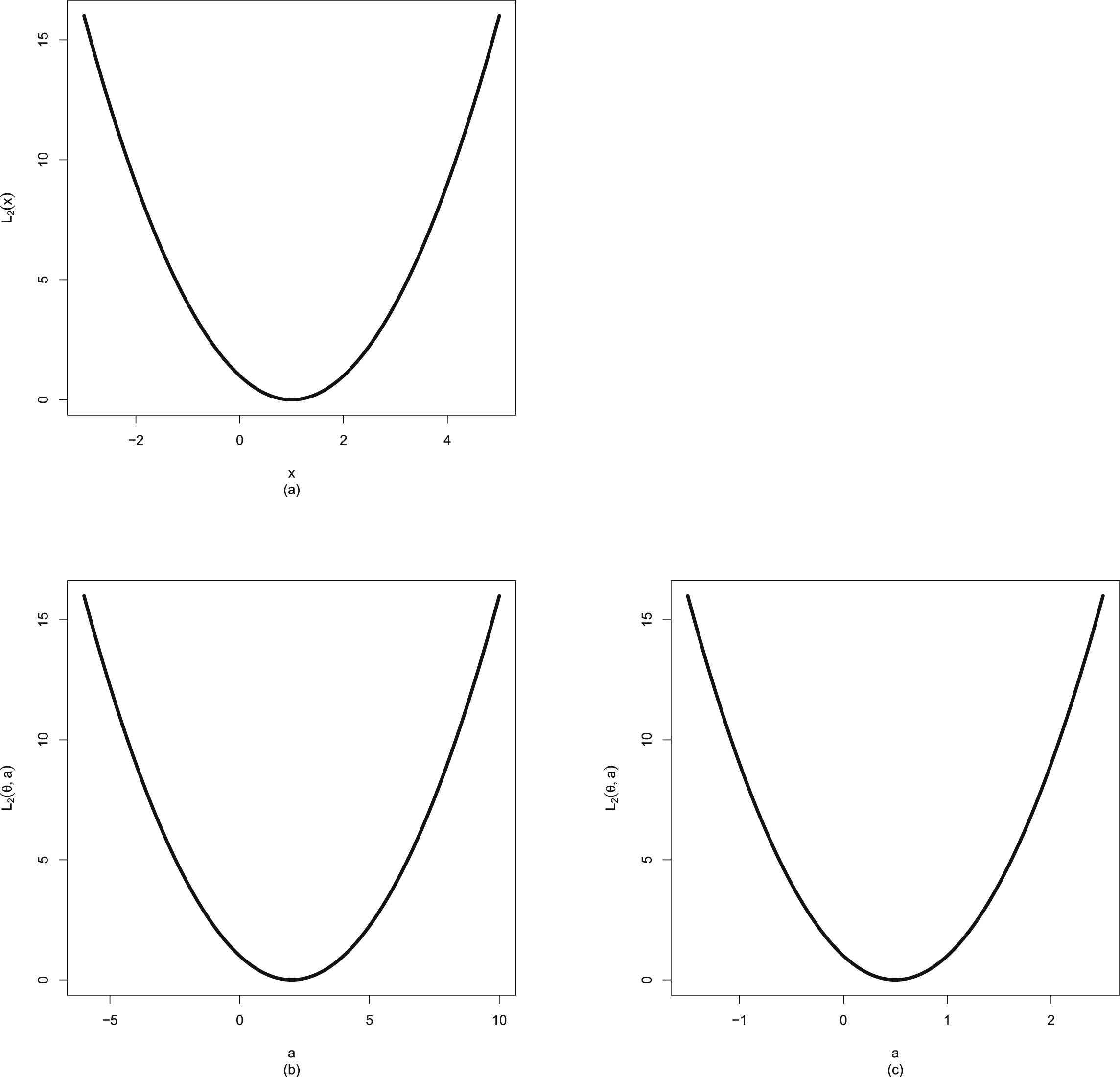

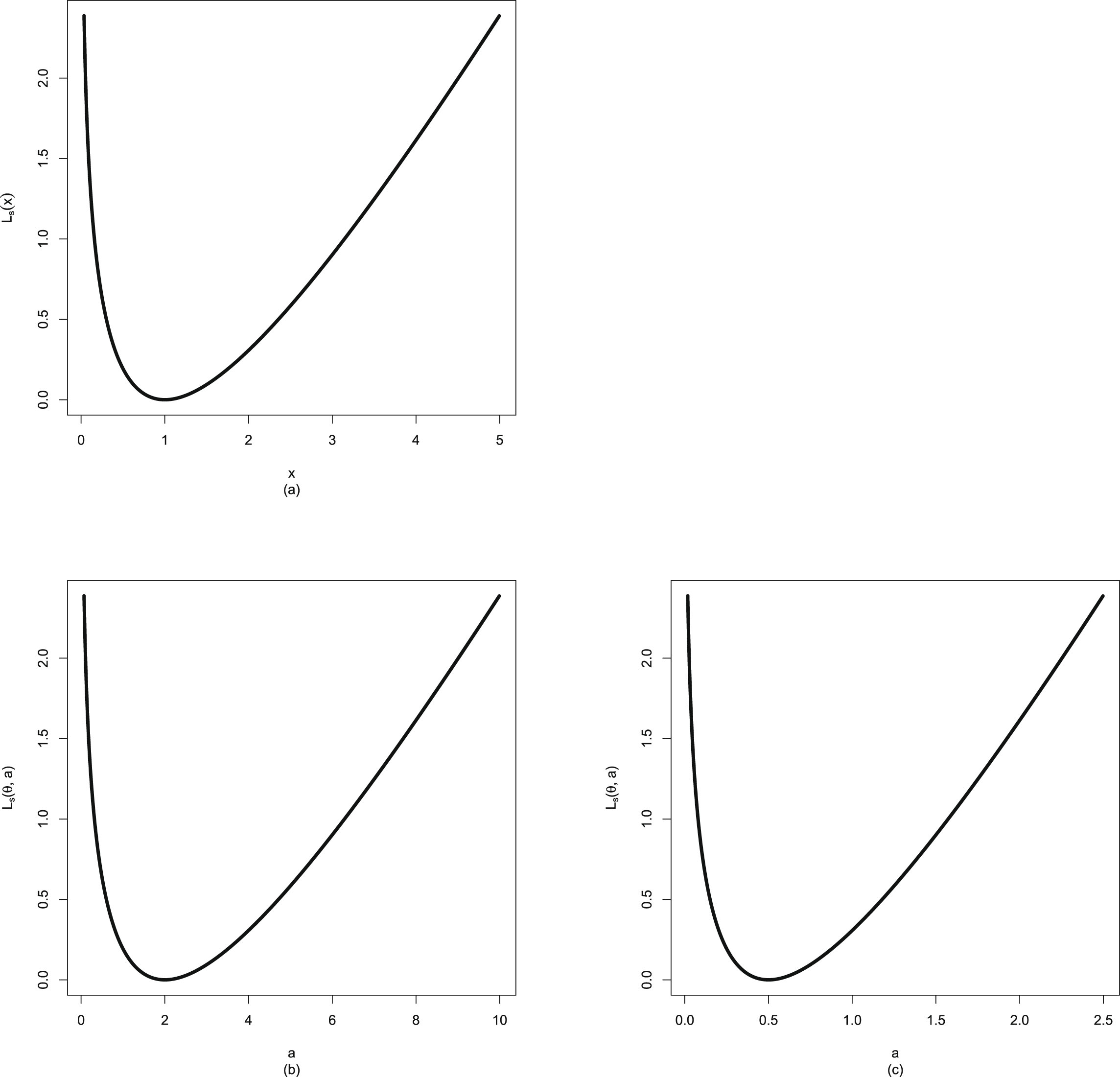

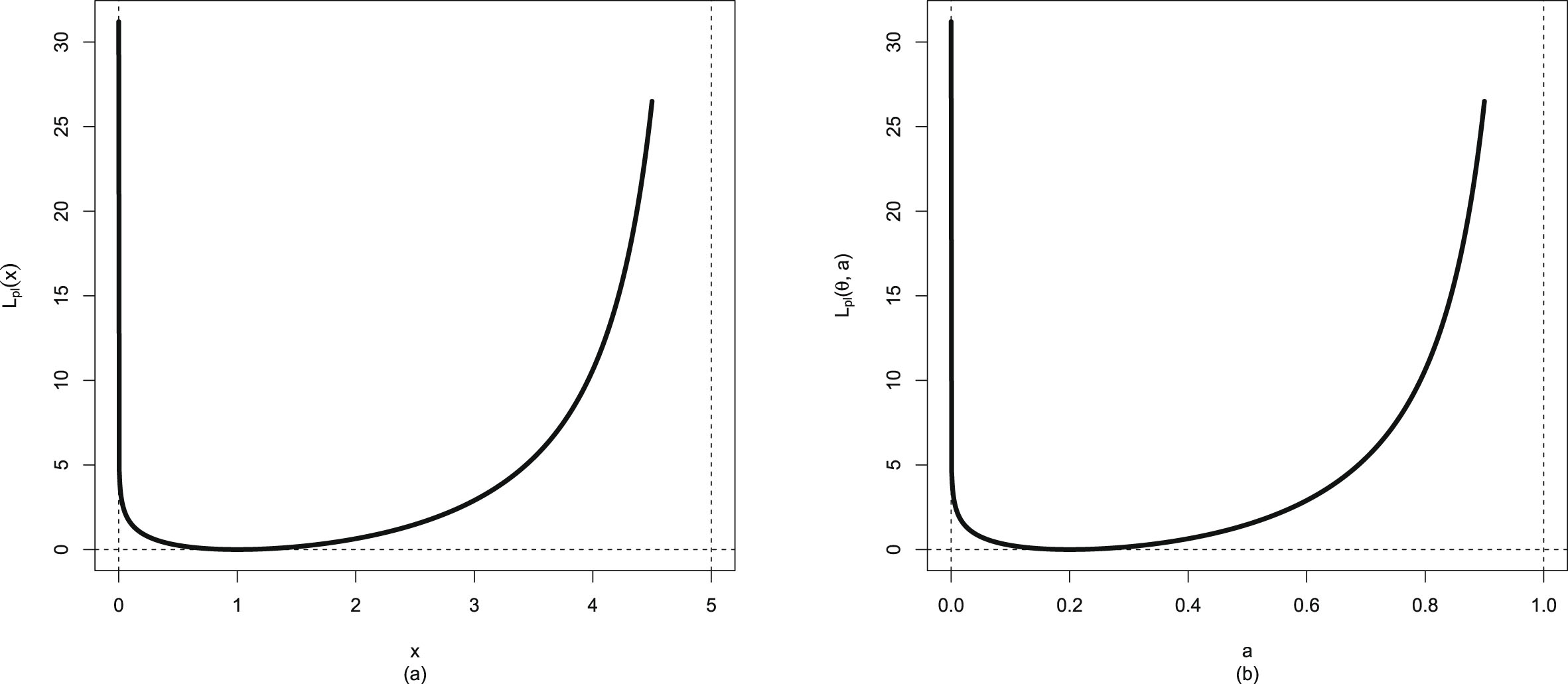

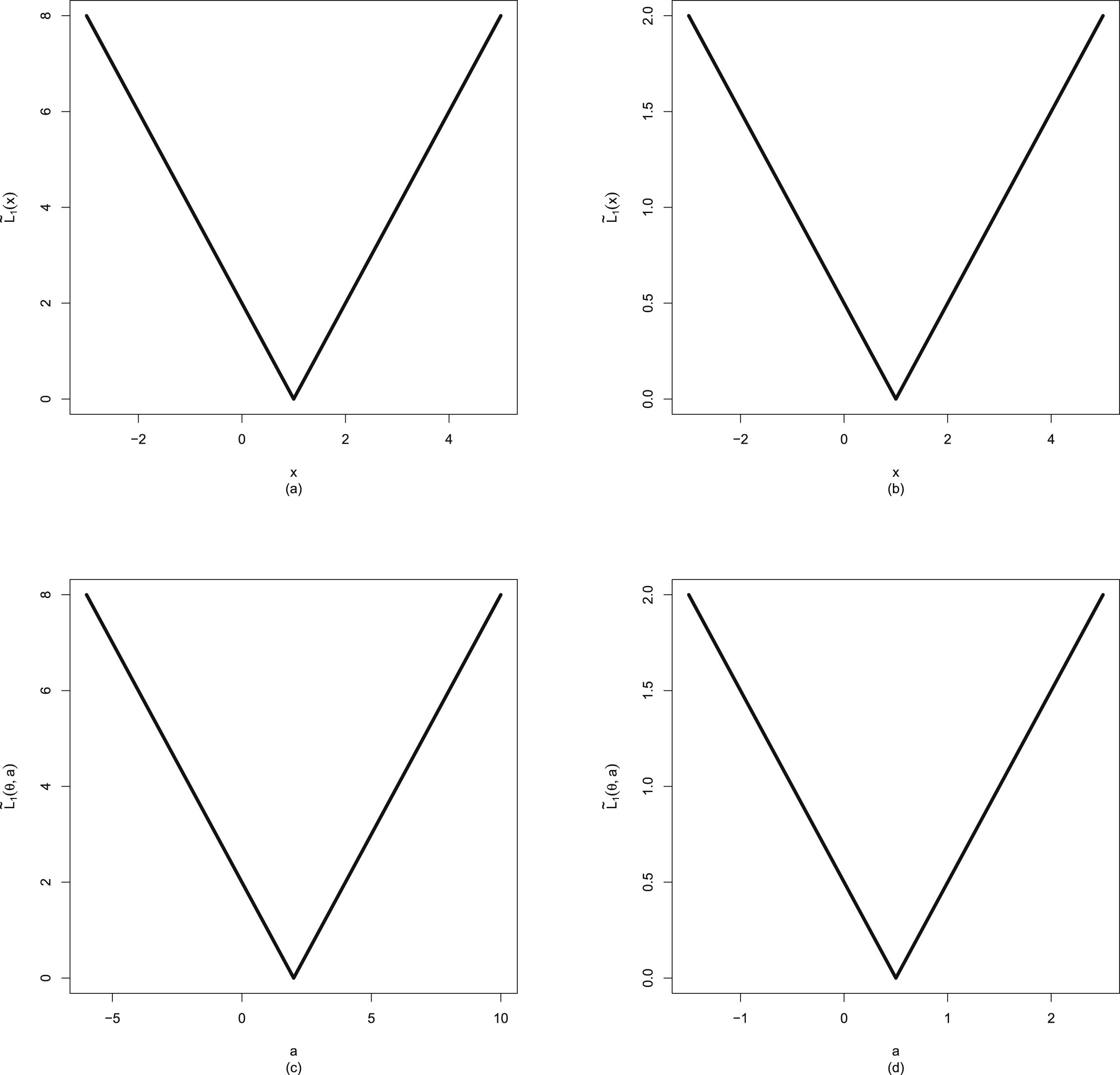

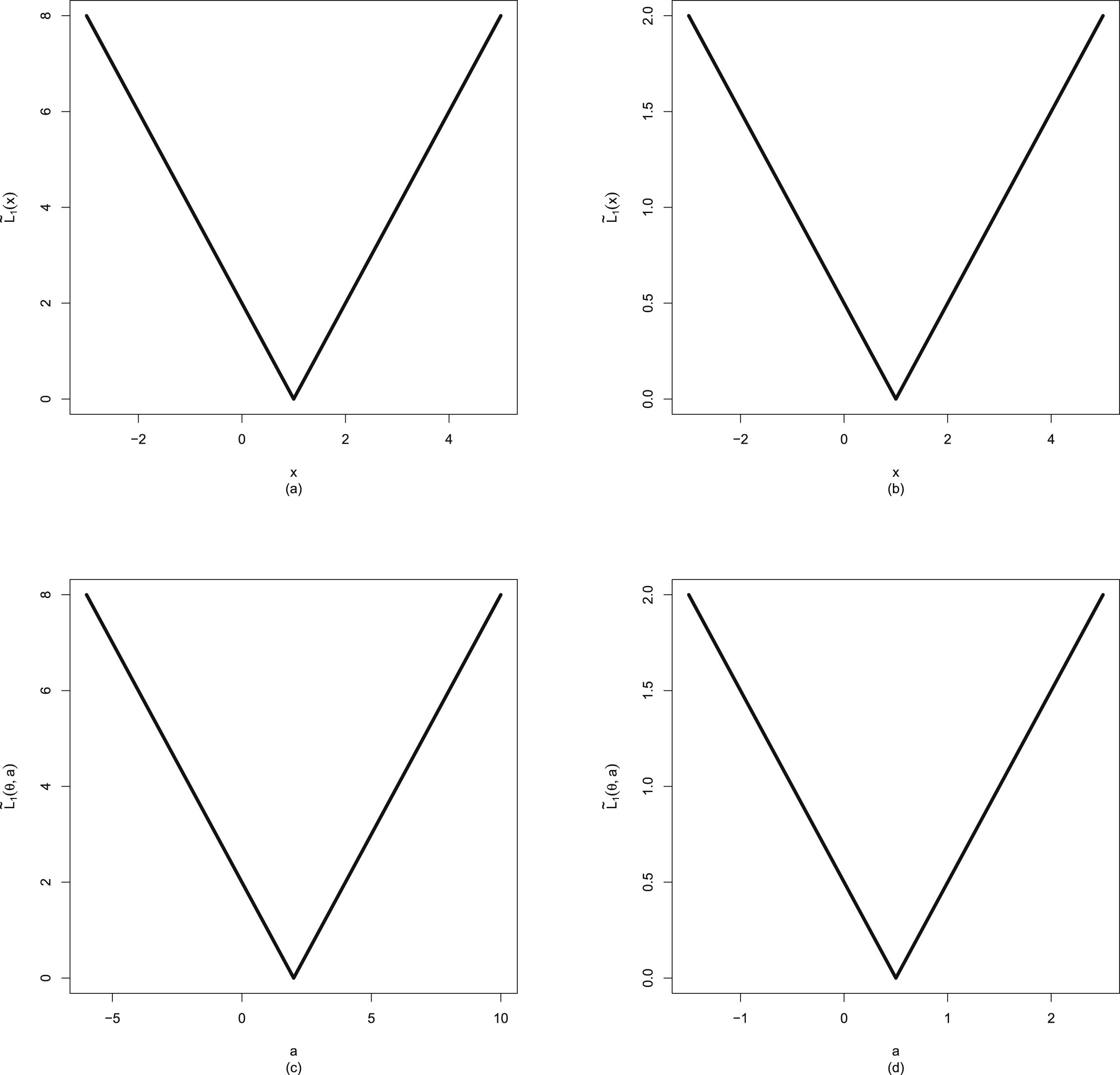

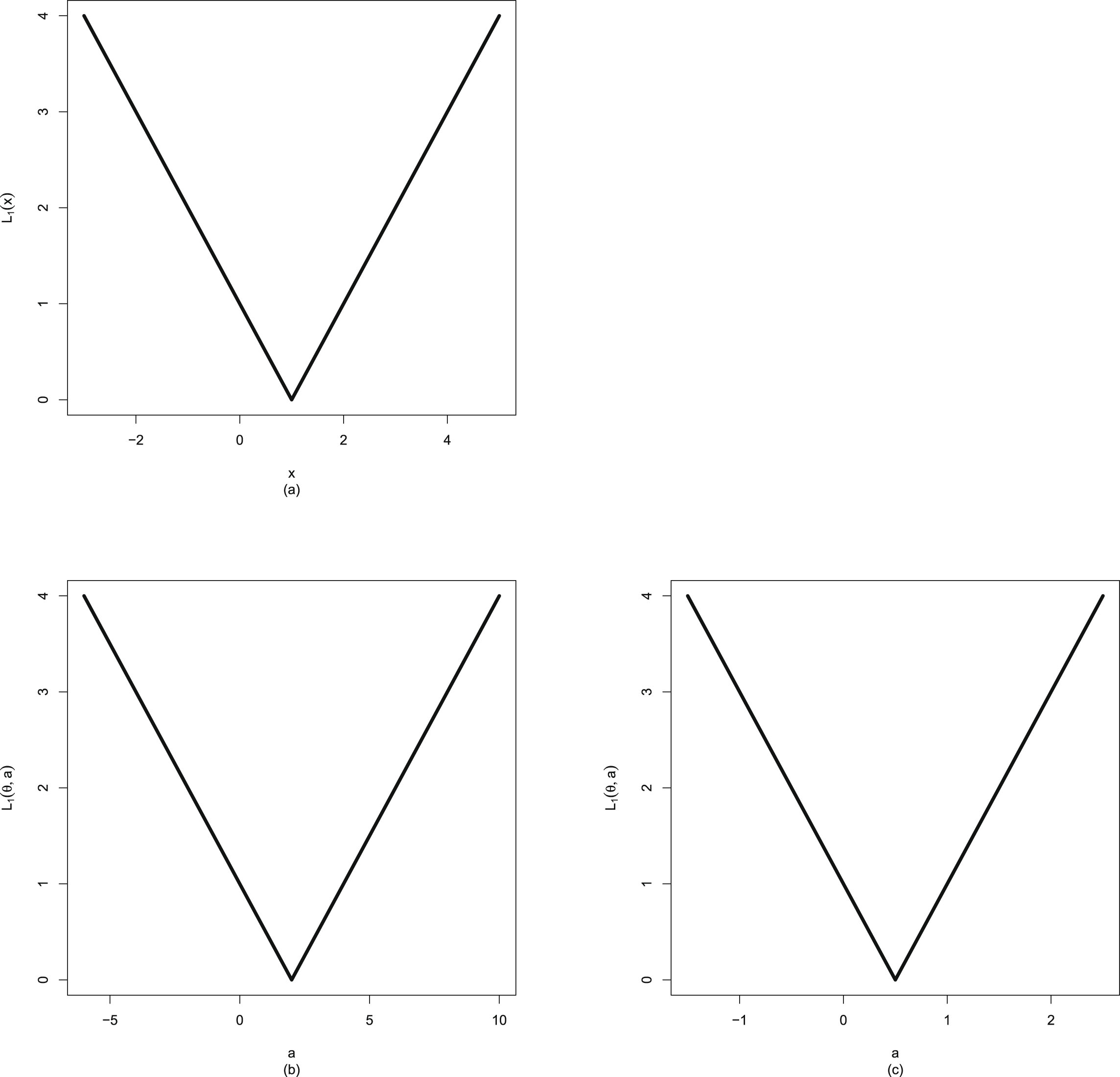

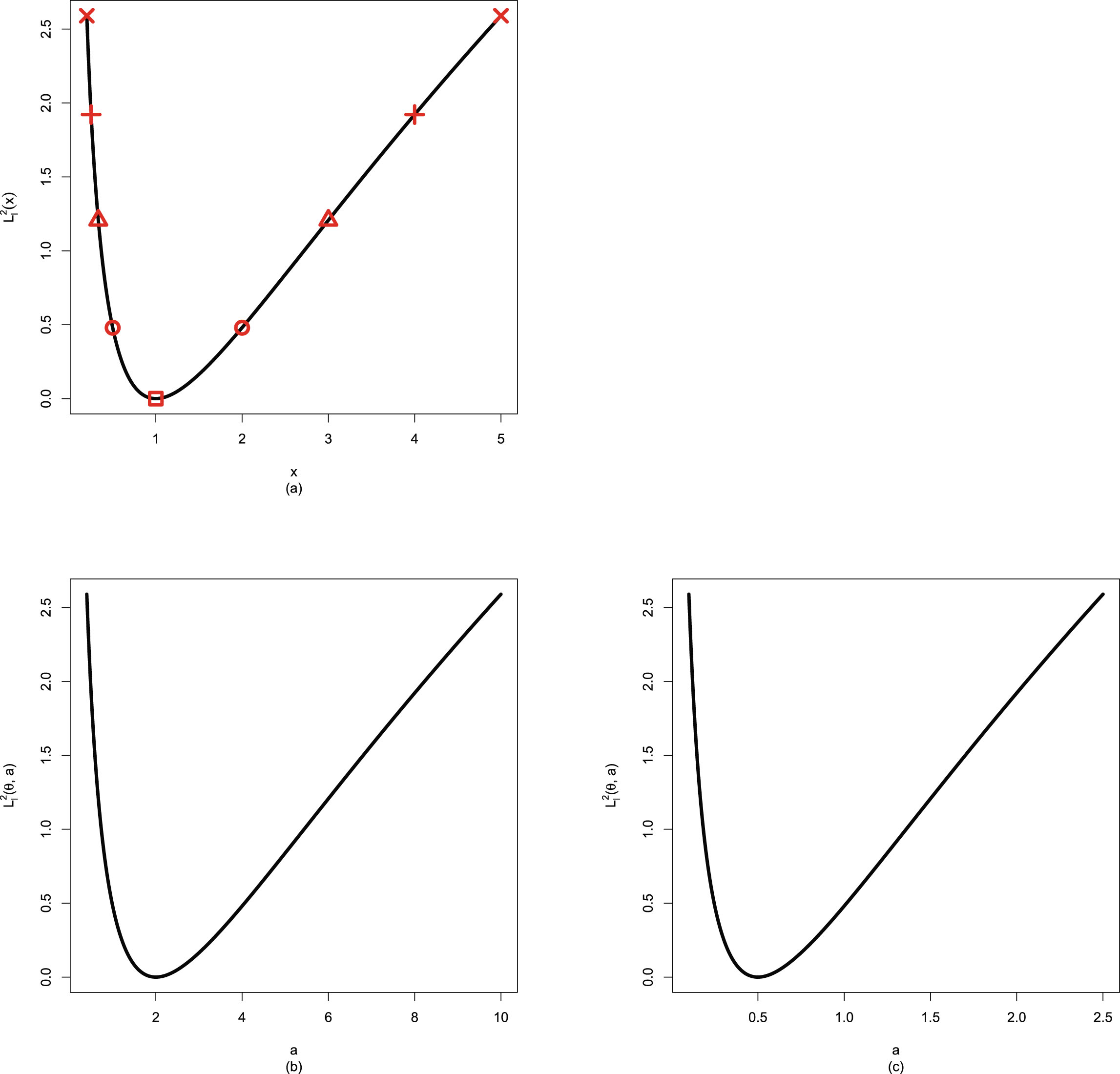

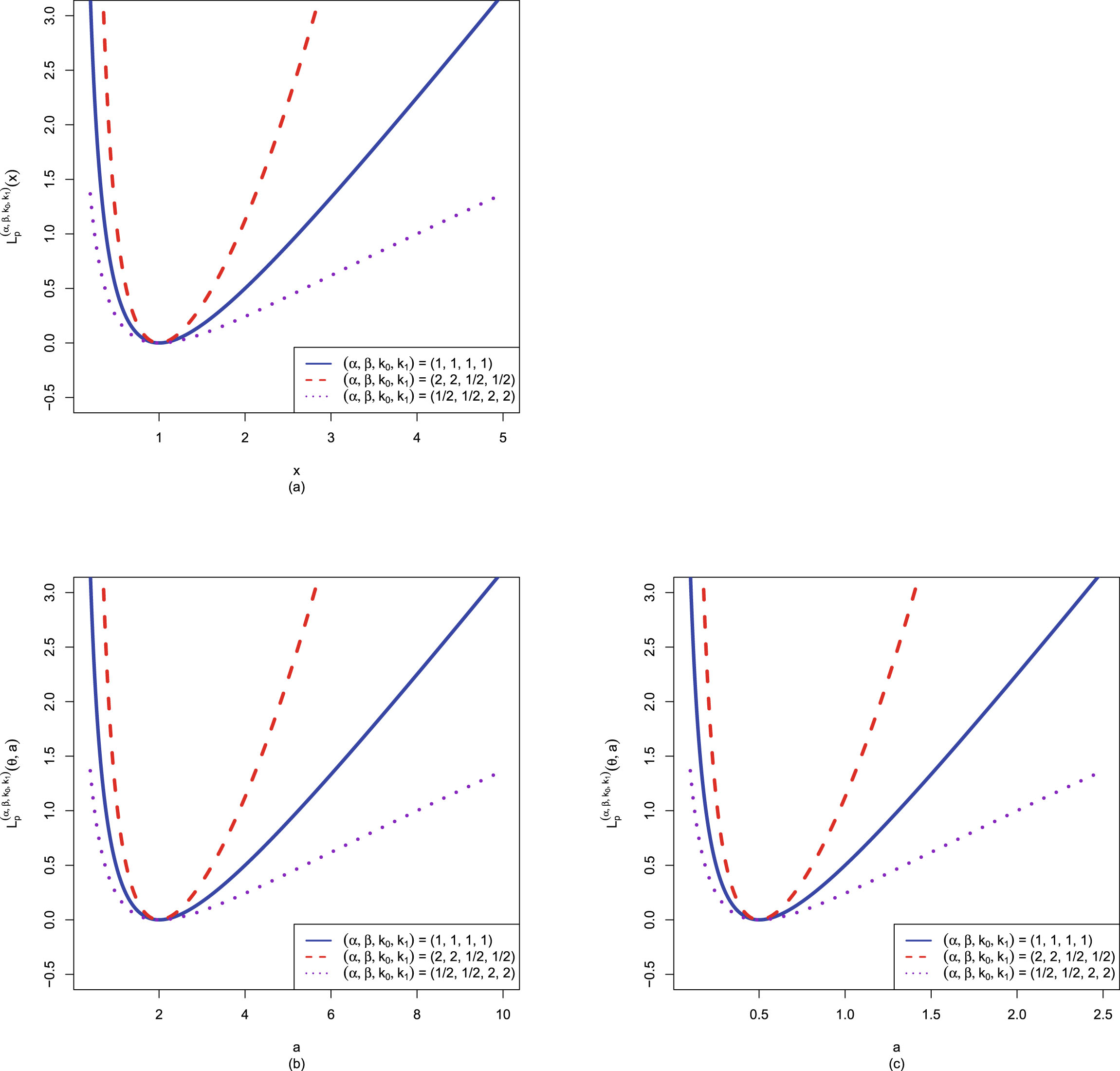

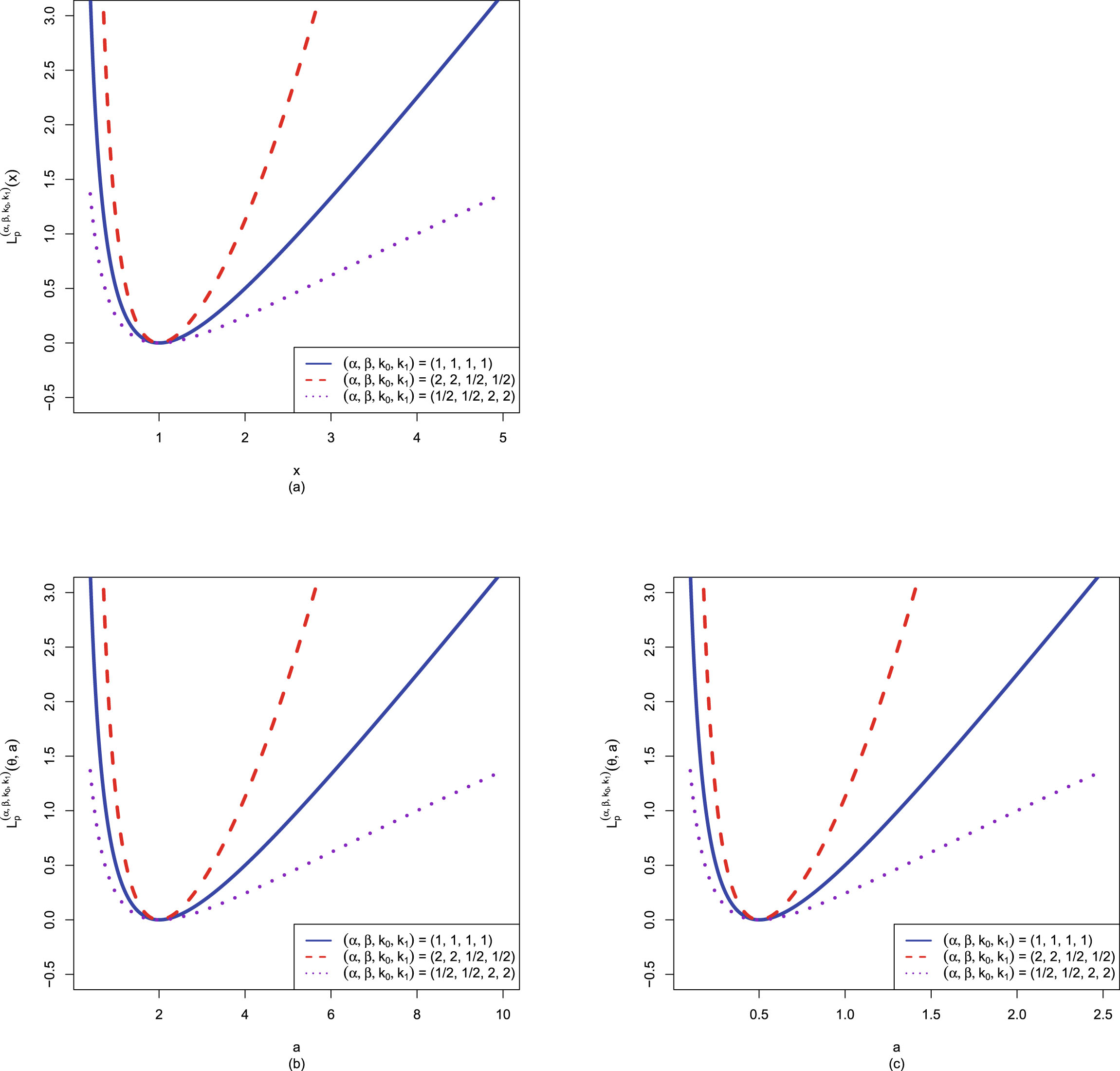

Similar to Sun et al. (2021), the Bayes estimators

, the PESL functions

, the PESL functions  , and the PESLs

, and the PESLs

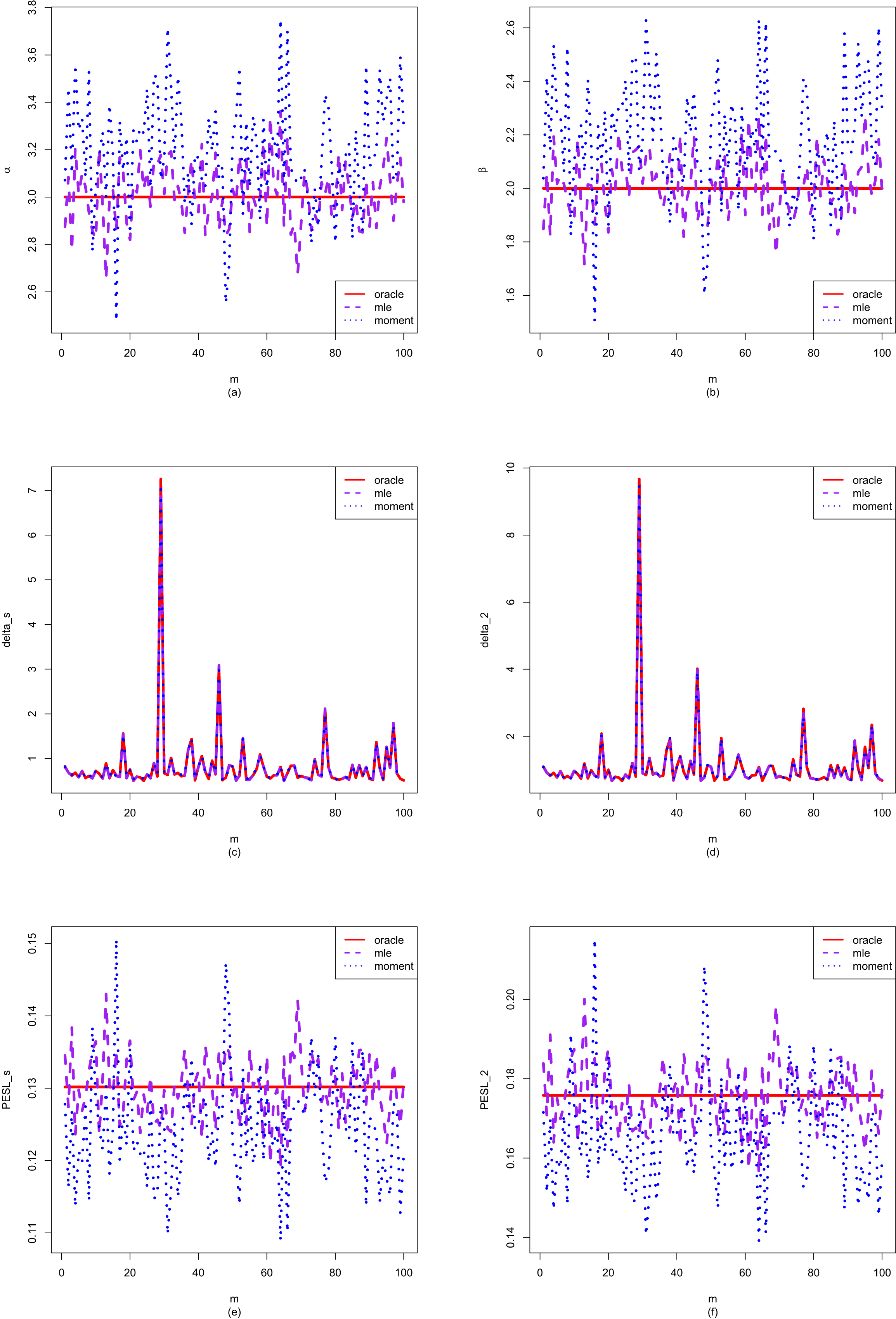

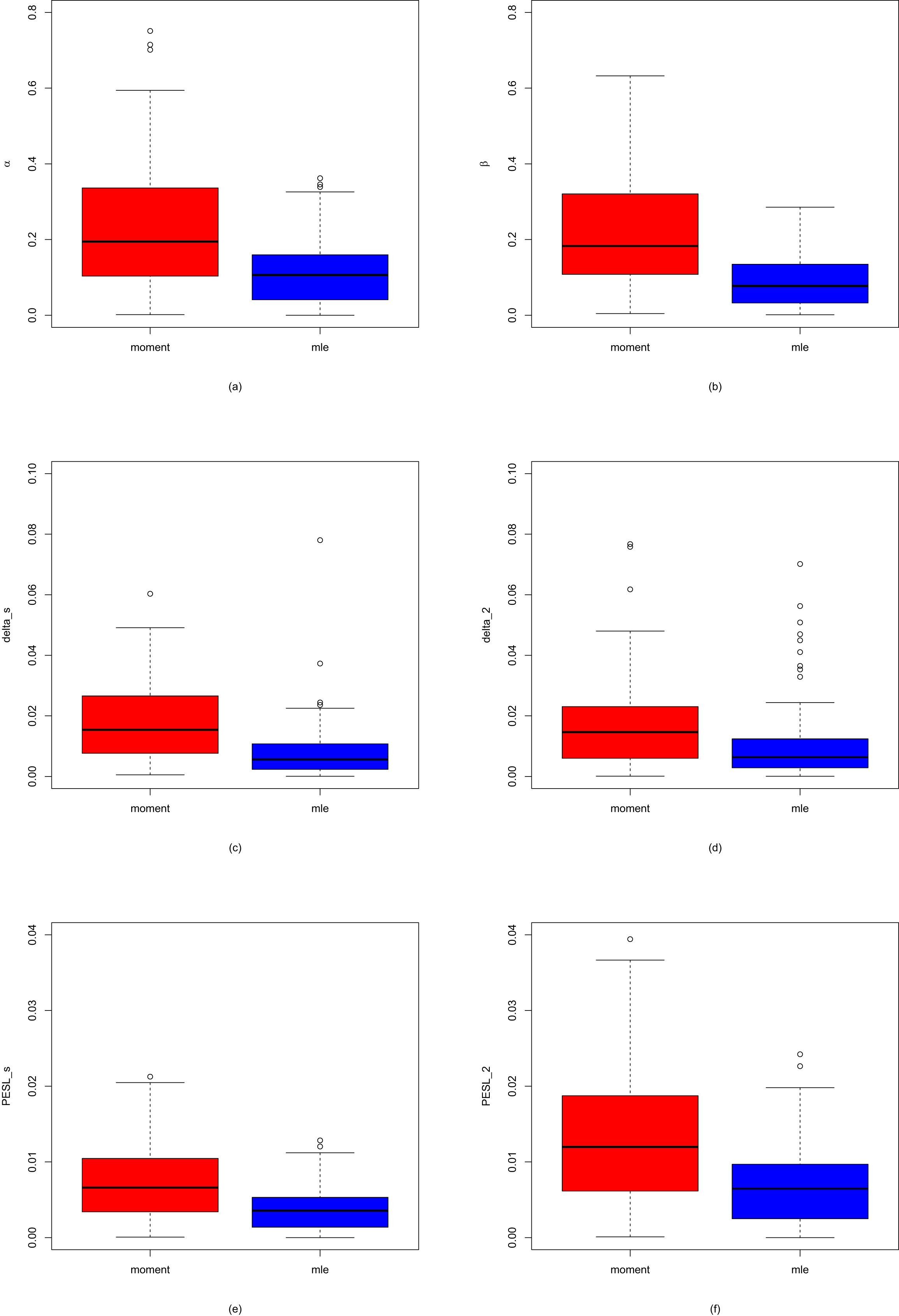

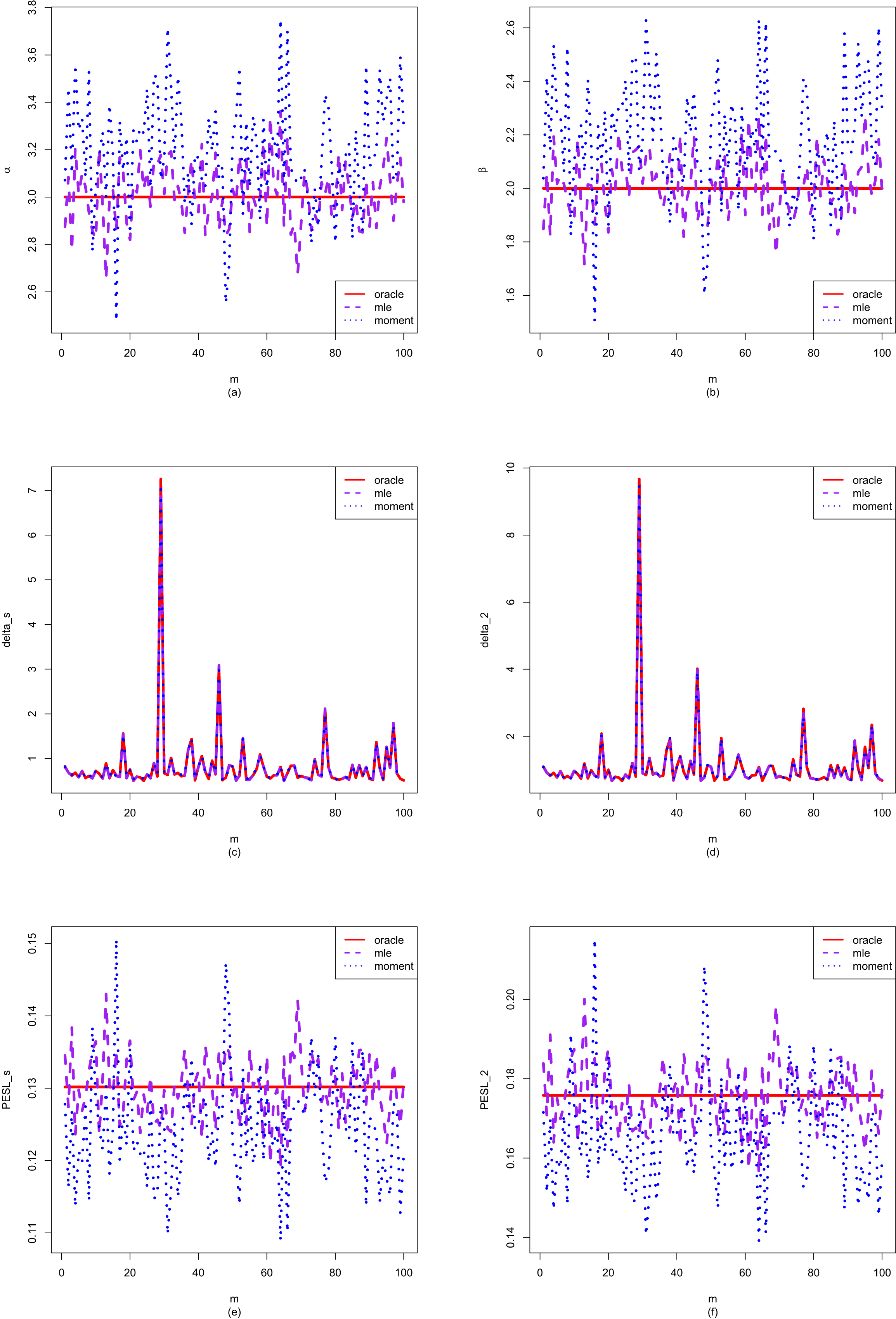

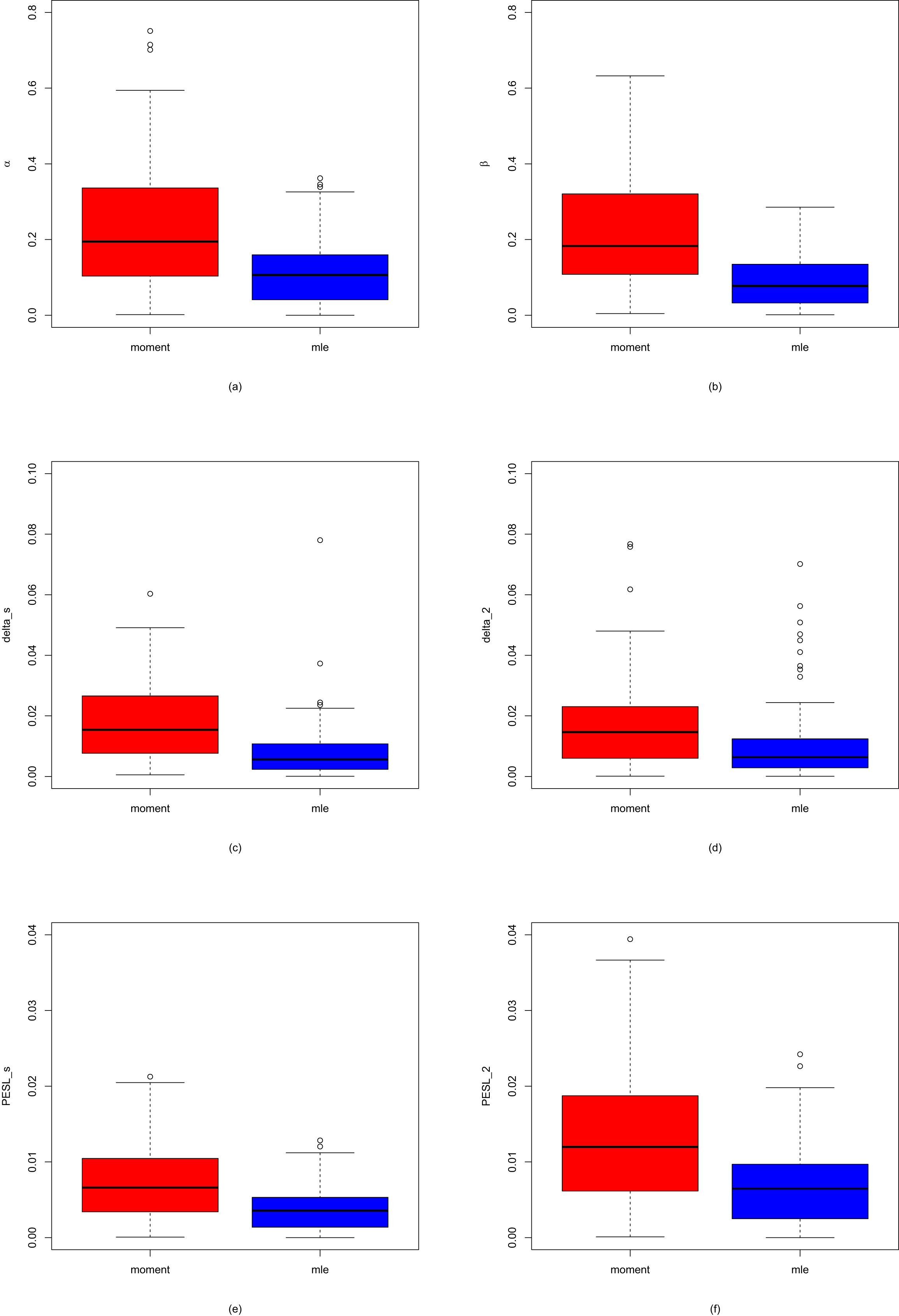

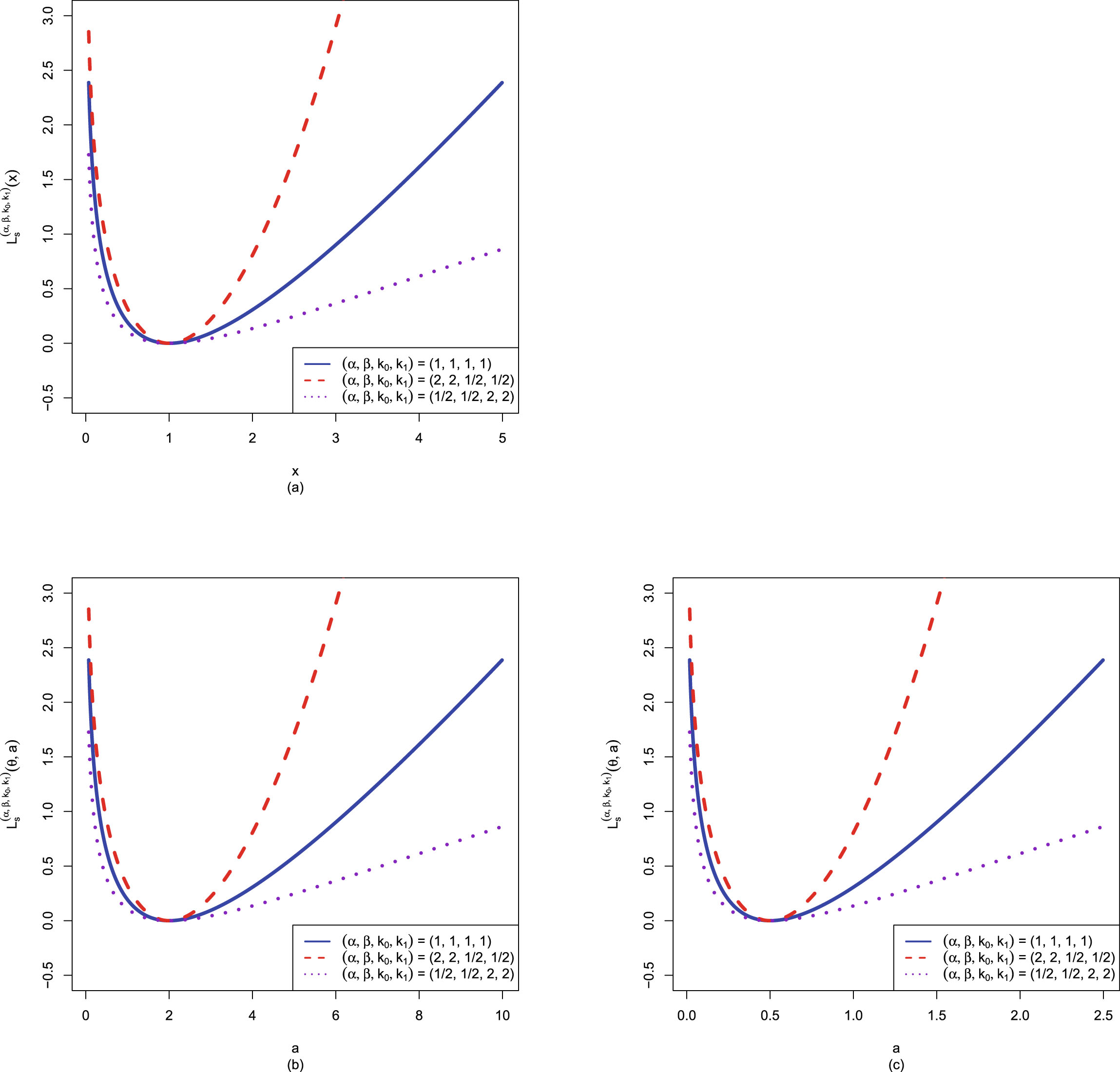

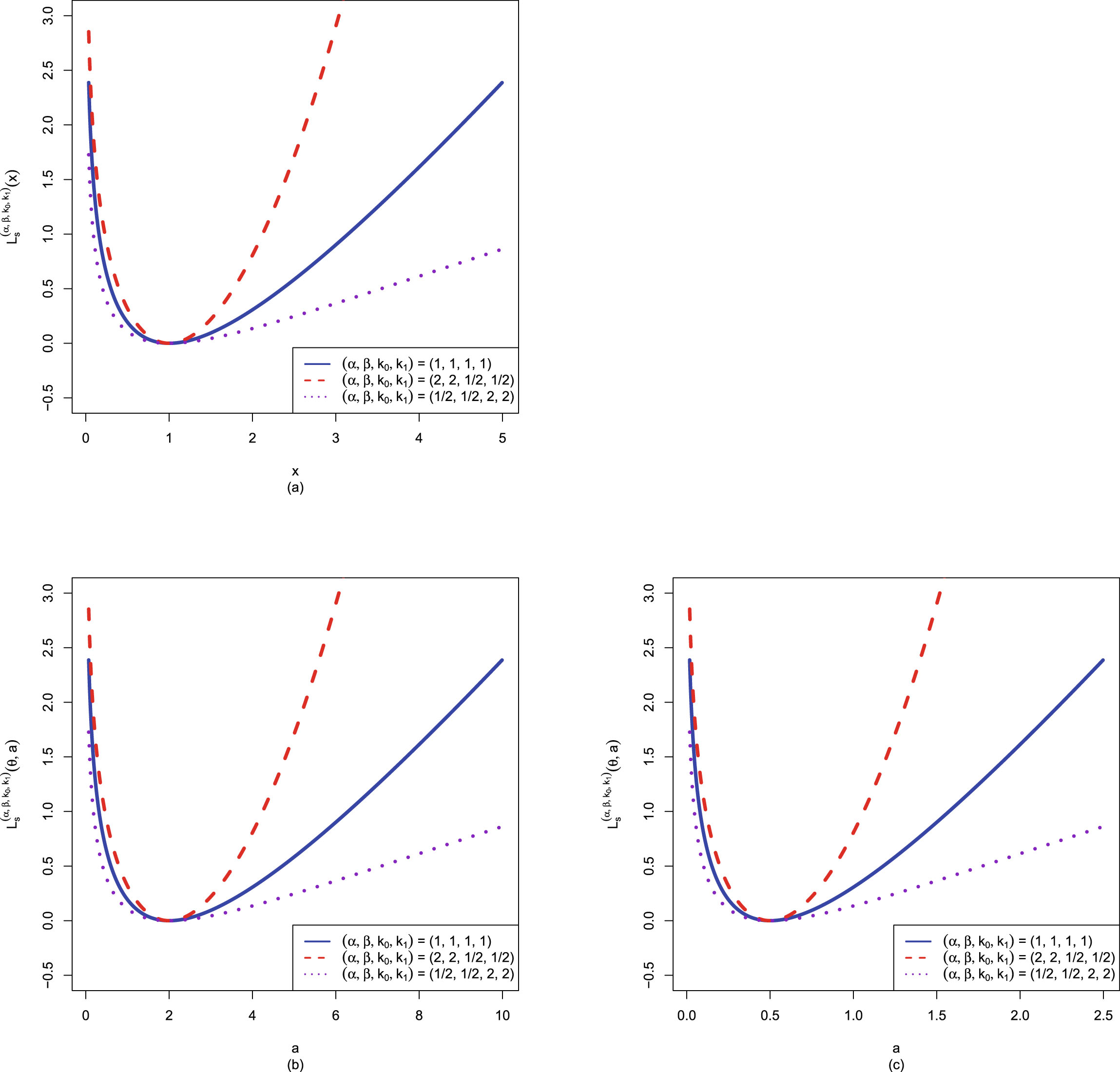

are depicted in figure 1.2. From the figure, we see that the Bayes estimators

are depicted in figure 1.2. From the figure, we see that the Bayes estimators

are the minimizers of the PESL functions

are the minimizers of the PESL functions  , and the PESLs

, and the PESLs

are the PESL functions

are the PESL functions  evaluated at the Bayes estimators

evaluated at the Bayes estimators  . Note that for the Bayes estimators,

. Note that for the Bayes estimators,  and

and  maybe larger or smaller than

maybe larger or smaller than  . Similarly, for the PESLs,

. Similarly, for the PESLs,  and

and  maybe larger or smaller than

maybe larger or smaller than  .

.

FIG. 1.2 — I: The Bayes estimators  , the PESL functions

, the PESL functions  , and the PESLs

, and the PESLs  . (a) The Bayes estimators and the PESLs by the oracle method are larger. (b) The Bayes estimators and the PESLs by the oracle method are smaller.

. (a) The Bayes estimators and the PESLs by the oracle method are larger. (b) The Bayes estimators and the PESLs by the oracle method are smaller.

1.8 Simulation Techniques

1.8.1 Consistencies of the Moment Estimators and the MLEs

In this subsection, taking the hyperparameters from Sun et al. (2021) as an example, we will introduce a simulation technique to numerically exemplify that the moment estimators ( ,

,  , and

, and  ) and the MLEs (

) and the MLEs ( ,

,  , and

, and  ) are consistent estimators of the hyperparameters (

) are consistent estimators of the hyperparameters ( ,

,  , and

, and  ) of the hierarchical inverse gamma and inverse gamma model (2.1). Note that only

) of the hierarchical inverse gamma and inverse gamma model (2.1). Note that only  are used in this subsection.

are used in this subsection.

Let  denote the hyperparameter

denote the hyperparameter  ,

,  , or

, or  . Then the consistency means that

. Then the consistency means that

for

for  , where

, where  is for the moment estimator,

is for the moment estimator,  is for the MLE,

is for the MLE,  means convergence in probability, and

means convergence in probability, and  is the sample size. Alternatively, the consistency means that

is the sample size. Alternatively, the consistency means that

(1.22)

for every

(1.22)

for every  and every

and every  . The probabilities are approximated by the corresponding frequencies:

. The probabilities are approximated by the corresponding frequencies:

for

for  , where

, where  is the indicator function of

is the indicator function of  , which is equal to 1 if

, which is equal to 1 if  is true and

is true and  otherwise, and

otherwise, and  is the number of simulations. Therefore, the frequencies (

is the number of simulations. Therefore, the frequencies ( ,

,  ,

,  , or

, or  ,

,  ) tend to

) tend to  means that the estimators are consistent.

means that the estimators are consistent.

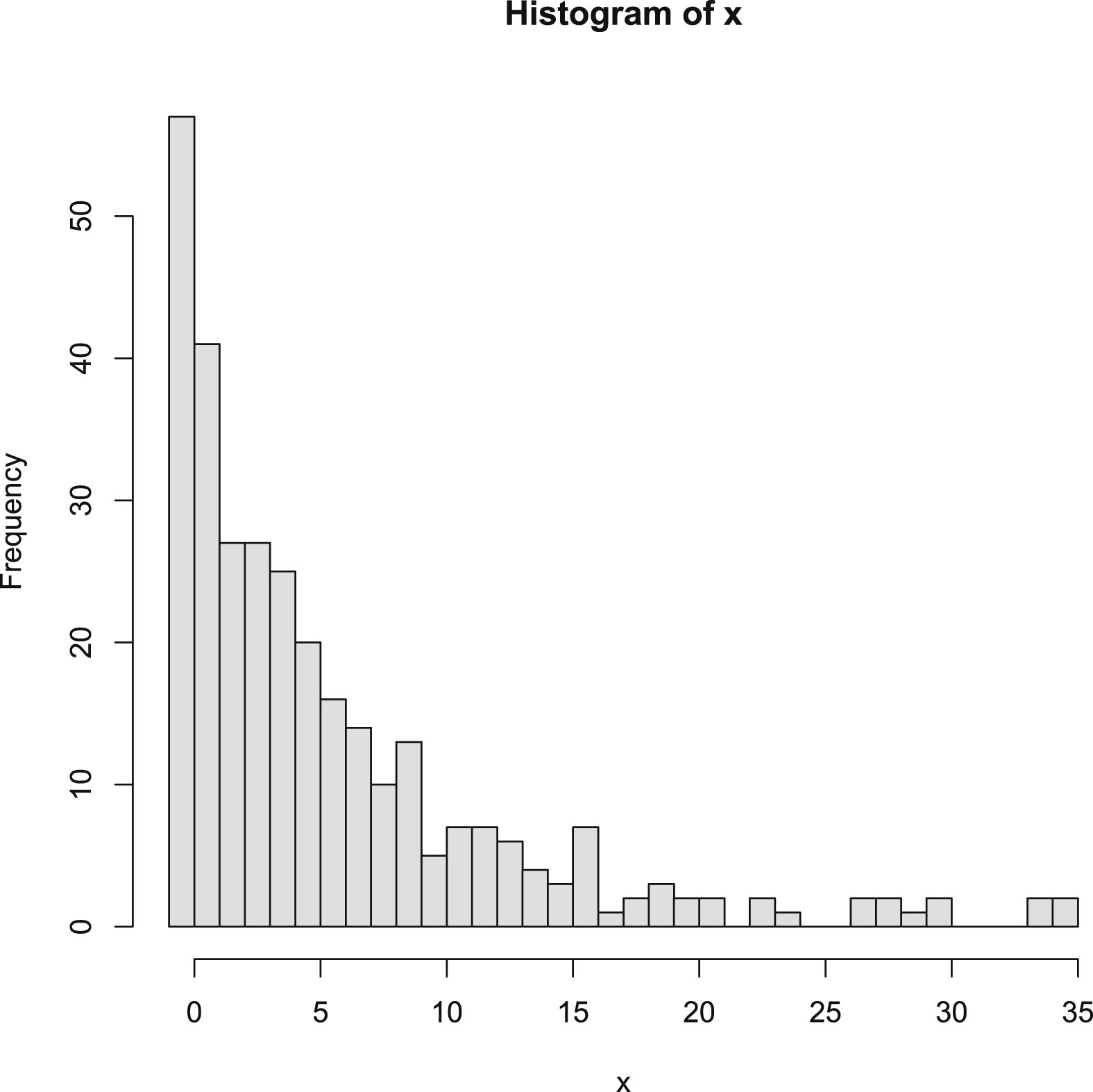

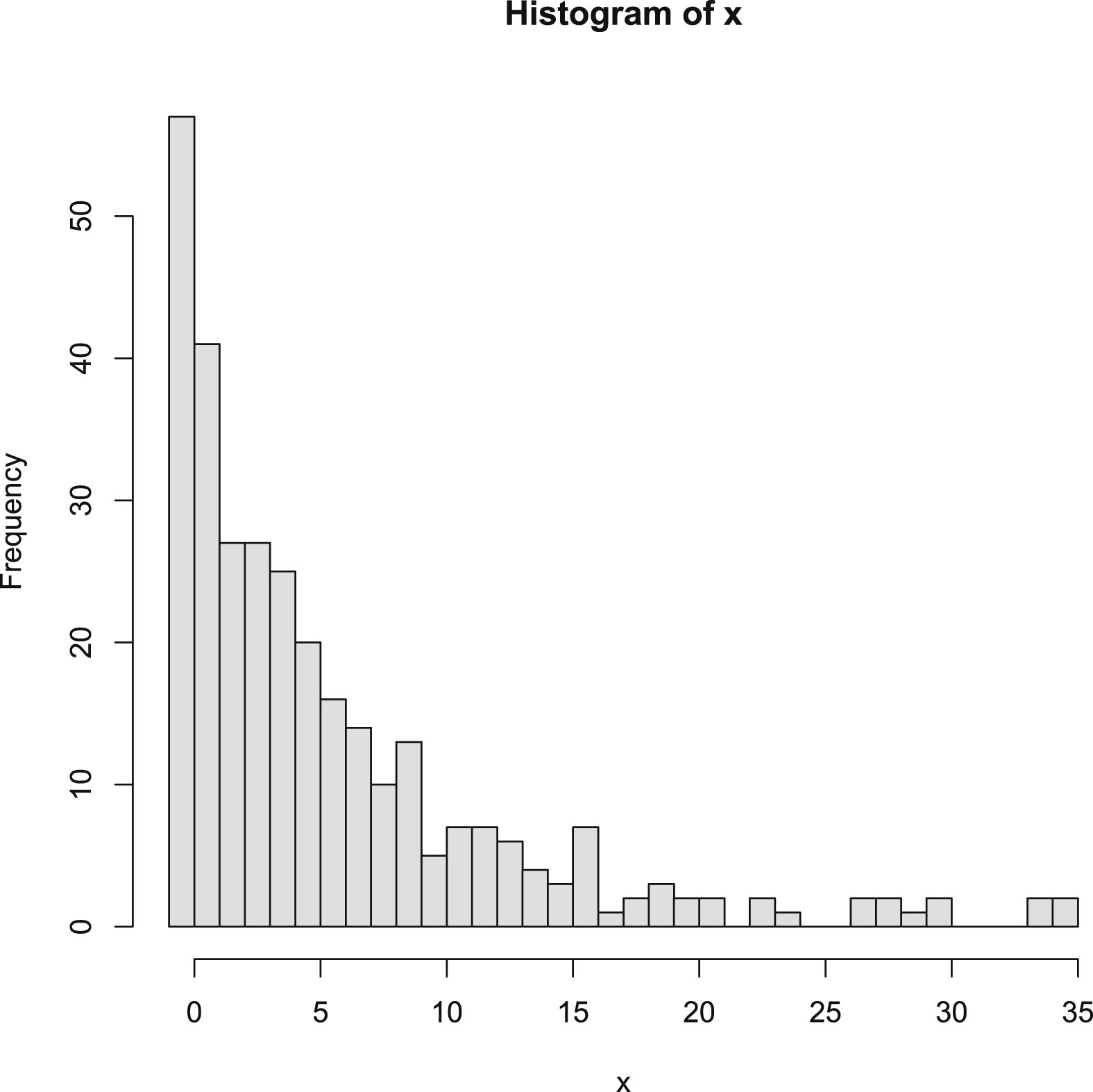

1.8.2 Goodness-of-Fit of the Model

In this subsection, we will introduce two simulation techniques to calculate the goodness-of-fit of the hierarchical model to the simulated data (see Ross (2013); Xue and Chen (2007)). The first simulation technique is the chi-square test, which is mainly used for discrete distributions. The second simulation technique is the Kolmogorov-Smirnov (KS) test, which is mainly used for continuous distributions. Note that only  are used in this subsection.

are used in this subsection.

Chi-square test

Let us take the hierarchical Poisson and gamma model (8.1) as an example to illustrate the process of the chi-square test. Two cases of the goodness-of-fit will be considered. In the first case, the hyperparameters  and

and  are assumed known. In the second case, the hyperparameters

are assumed known. In the second case, the hyperparameters  and

and  are unknown, and this is also the case encountered in real applications.

are unknown, and this is also the case encountered in real applications.

Case 1. The hyperparameters  and

and  are assumed known.

are assumed known.

In this case, the hyperparameters  and

and  are assumed known. For example,

are assumed known. For example,  and

and  . Let the null hypothesis be

. Let the null hypothesis be

where

where  is the marginal distribution of the hierarchical Poisson and gamma model (8.1) with the marginal pmf

is the marginal distribution of the hierarchical Poisson and gamma model (8.1) with the marginal pmf  given by (8.3).

given by (8.3).

The chi-square goodness-of-fit is performed as follows. We first divide the domain of  ,

,  , into

, into  groups:

groups:

Let the theoretical probabilities under  on these subintervals be

on these subintervals be

where

where

and

and  is the probability when

is the probability when  is distributed under

is distributed under  . Let

. Let  , denote the number of

, denote the number of  that lie in the

that lie in the  th subinterval

th subinterval  . Then the chi-square statistics (Ross (2013); Xue and Chen (2007))

. Then the chi-square statistics (Ross (2013); Xue and Chen (2007))

where

where  is convergence in distribution. Moreover, we can compute the p-value, which gives the probability that a value of

is convergence in distribution. Moreover, we can compute the p-value, which gives the probability that a value of  as large as

as large as  would have occurred if the null hypothesis were true. Hence,

would have occurred if the null hypothesis were true. Hence,

where pchisq(), which calculates the cumulative distribution function (cdf) of a chi-square random variable, is an R built-in function (R Core Team (2023)). Note that a large p-value (>0.05 in the usual case) indicates that the model specified by

where pchisq(), which calculates the cumulative distribution function (cdf) of a chi-square random variable, is an R built-in function (R Core Team (2023)). Note that a large p-value (>0.05 in the usual case) indicates that the model specified by  fits the (simulated) data well, while a small p-value (≤0.05 in the usual case) indicates that the model specified by

fits the (simulated) data well, while a small p-value (≤0.05 in the usual case) indicates that the model specified by  does not fit the (simulated) data well. The larger the p-value, the better the model specified by

does not fit the (simulated) data well. The larger the p-value, the better the model specified by  fits the (simulated) data.

fits the (simulated) data.

Case 2. The hyperparameters  and

and  are unknown.

are unknown.

Let the null hypothesis be

where

where  and

and  are unknown. First, the hyperparameters

are unknown. First, the hyperparameters  and

and  need to be estimated by the sample. The estimators could be the moment estimators or the MLEs. Let

need to be estimated by the sample. The estimators could be the moment estimators or the MLEs. Let  and

and  be given in Case 1. The theoretical probabilities under

be given in Case 1. The theoretical probabilities under  on the subintervals are calculated by

on the subintervals are calculated by

that is, the unknown hyperparameters

that is, the unknown hyperparameters  and

and  are estimated by their estimators

are estimated by their estimators  and

and  based on the sample. Then the chi-square statistics (Ross (2013); Xue and Chen (2007))

based on the sample. Then the chi-square statistics (Ross (2013); Xue and Chen (2007))

Note that the degree of freedom is now lost by 2, since two unknown parameters are estimated by the sample. Moreover, the p-value is given by

KS test

Chi-square test is a measure of the goodness-of-fit. However, the chi-square test is very sensitive because of the problem of choosing the number of groups and the problem of finding the cut-points. Therefore, instead of using the chi-square test as a measure of the goodness-of-fit, we may use the Kolmogorov-Smirnov test (or the KS test) as a measure of the goodness-of-fit.

The Kolmogorov-Smirnov statistic is the distance  between the empirical cdf

between the empirical cdf  and the population cdf

and the population cdf  , that is,

, that is,

(1.23)

(1.23)

In R software, the built-in function ks.test() can perform the KS test (see Marsaglia et al. (2003); Durbin (1973); Conover (1971)). Note that the KS test is generally valid for one-dimensional continuous cumulative distribution functions (cdfs). But in literature, KS-type tests have been developed for discrete data too (Santitissadeekorn et al. (2020); Aldirawi et al. (2019); Dimitrova et al. (2020)).

The return value of ks.test() is a list containing the components statistic (the value of the test statistic, or the  value) and p-value (the p-value of the test). It is well known in the literature that a smaller

value) and p-value (the p-value of the test). It is well known in the literature that a smaller  value or a larger p-value indicates a better fit of the model to the simulated data. Inspired by and based on the

value or a larger p-value indicates a better fit of the model to the simulated data. Inspired by and based on the  value and p-value, Sun et al. (2021) propose five indices (

value and p-value, Sun et al. (2021) propose five indices ( ,

,  ,

,  ,

,  , and

, and  ) to compare the three methods, namely, the oracle method, the moment method, and the MLE method in simulations.

) to compare the three methods, namely, the oracle method, the moment method, and the MLE method in simulations.  is the average

is the average  values (1.23) of

values (1.23) of  simulations (the smaller the better).

simulations (the smaller the better).  is the average p-value of

is the average p-value of  simulations (the larger the better).

simulations (the larger the better).  is the percentage of

is the percentage of  simulations that attain the minimum

simulations that attain the minimum  value in the three methods (the larger the better). The three

value in the three methods (the larger the better). The three  values should sum to

values should sum to  .

.  is the percentage of

is the percentage of  simulations which attain the maximum p-value in the three methods (the larger the better). The three

simulations which attain the maximum p-value in the three methods (the larger the better). The three  values should sum to

values should sum to  .

.  is the percentage of accepting

is the percentage of accepting  (defined as p-value >0.05) in

(defined as p-value >0.05) in  simulations for each method (the larger the better). Each

simulations for each method (the larger the better). Each  value should between 0%–100%.

value should between 0%–100%.

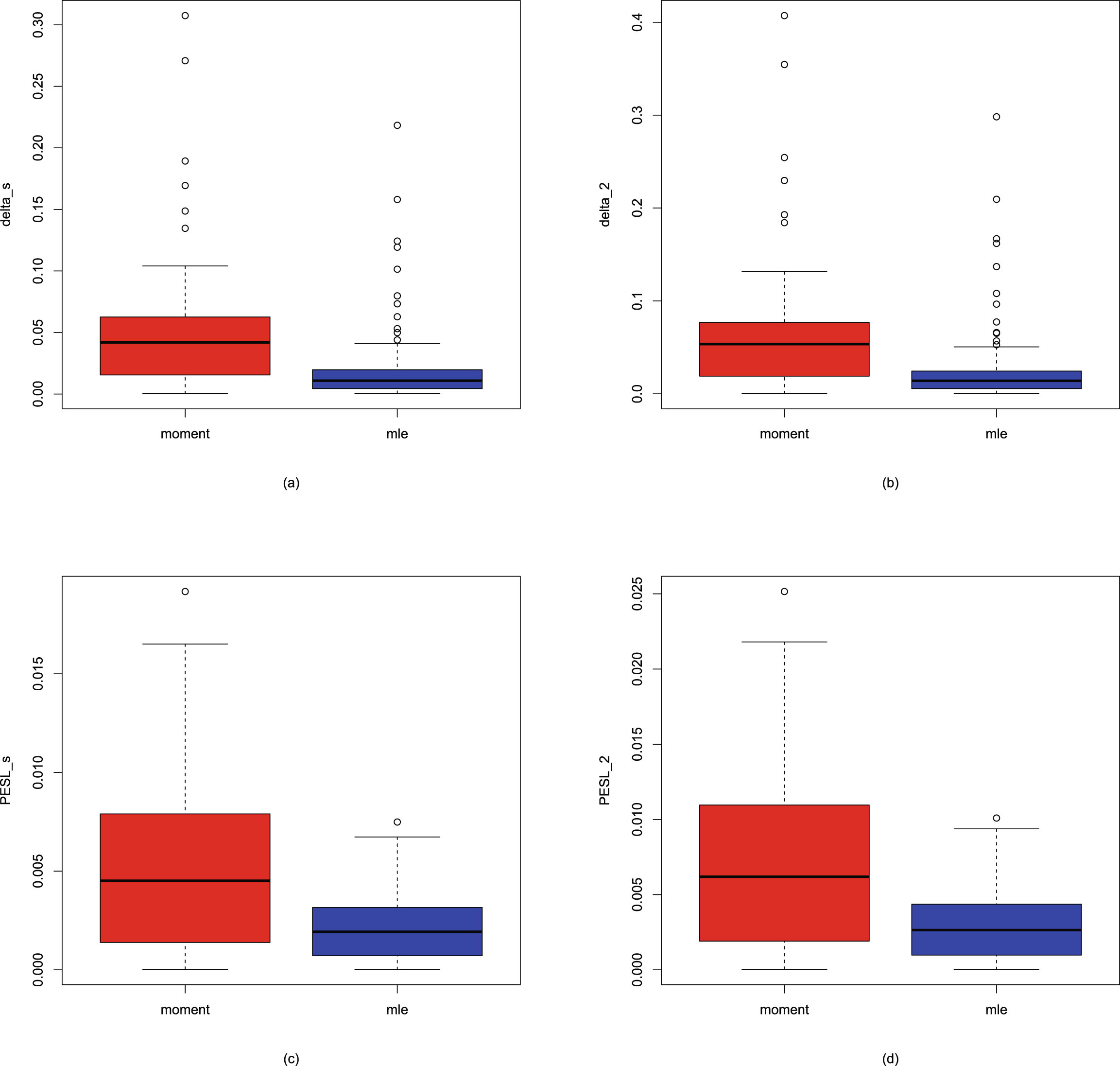

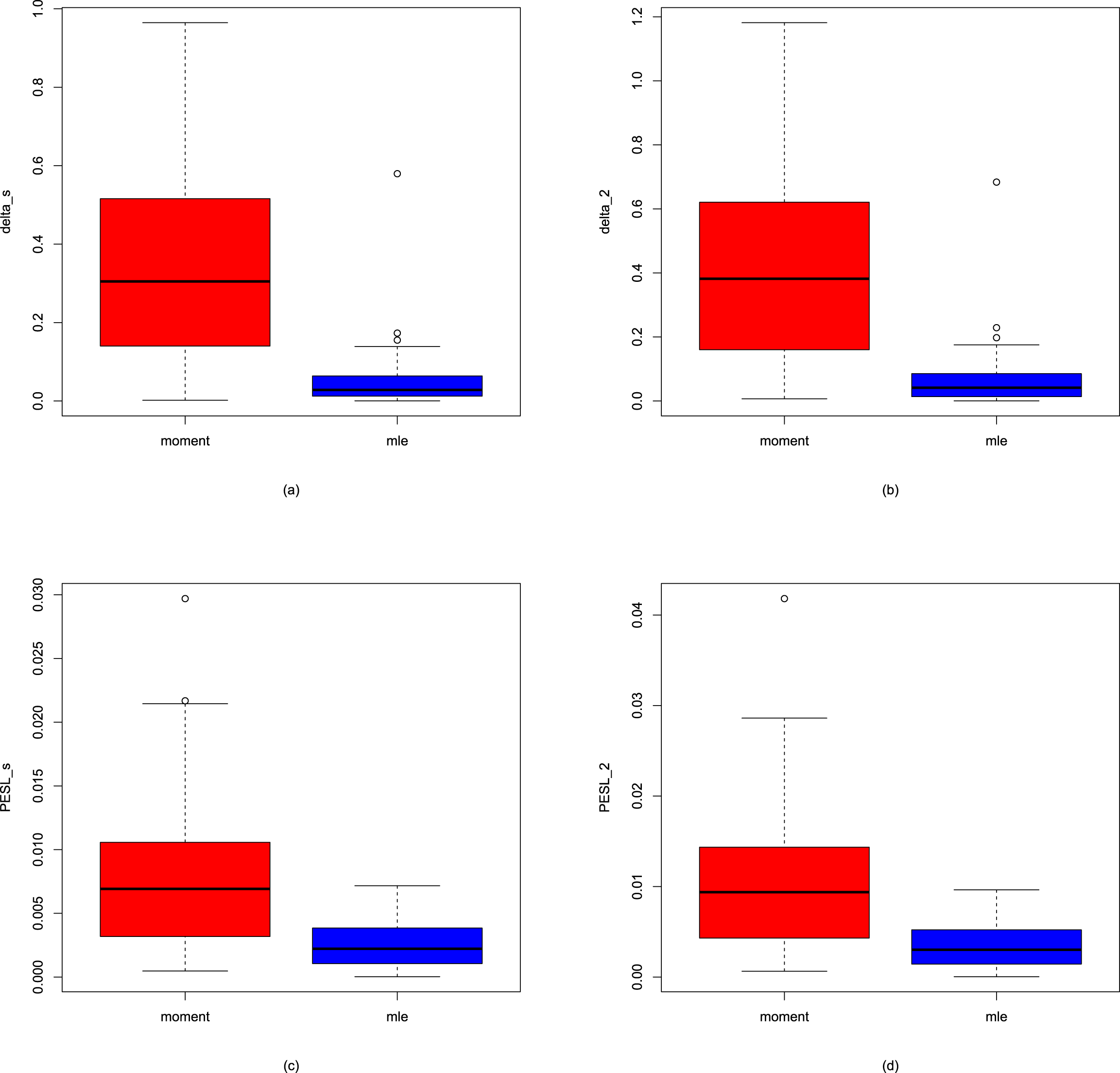

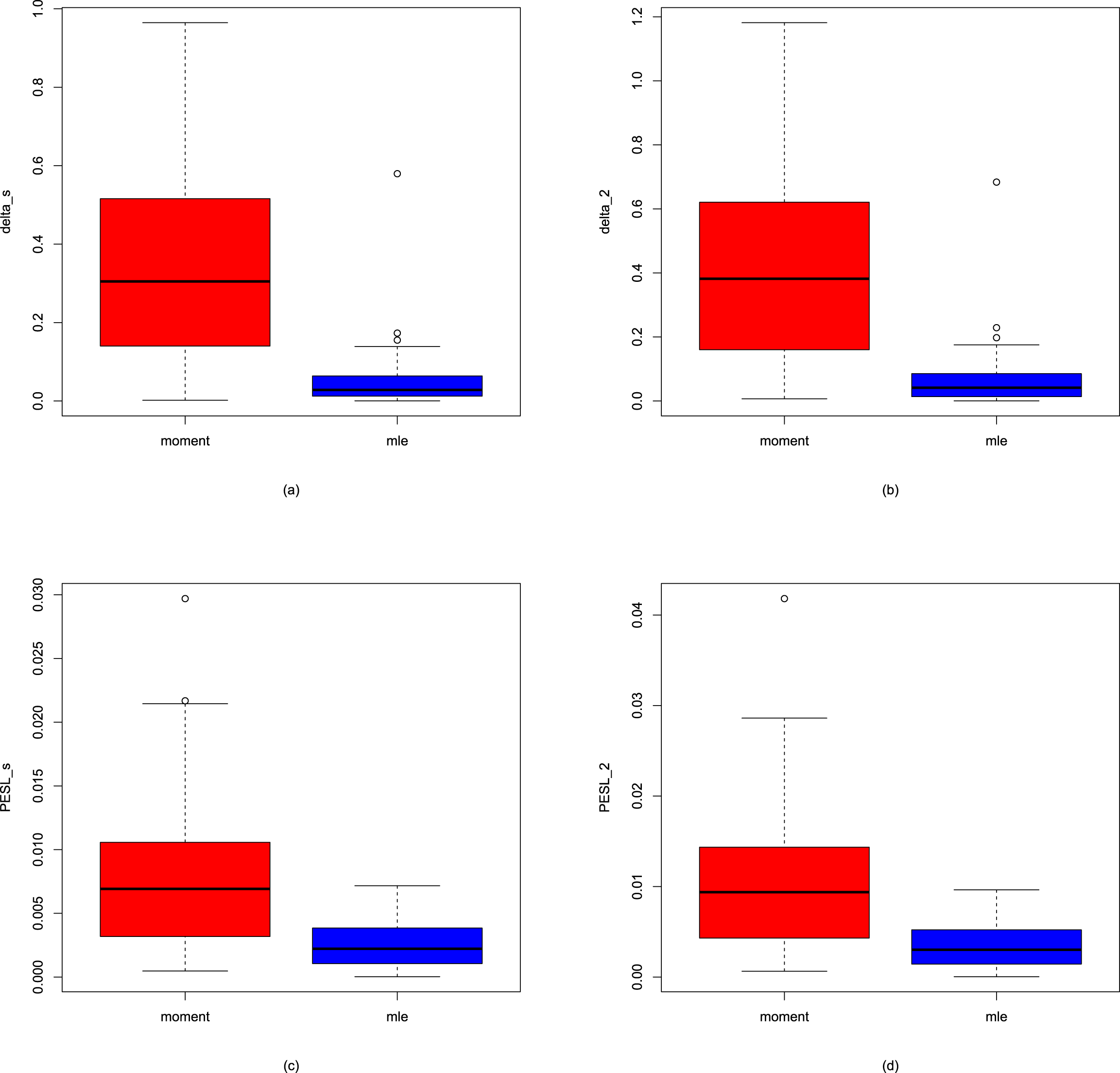

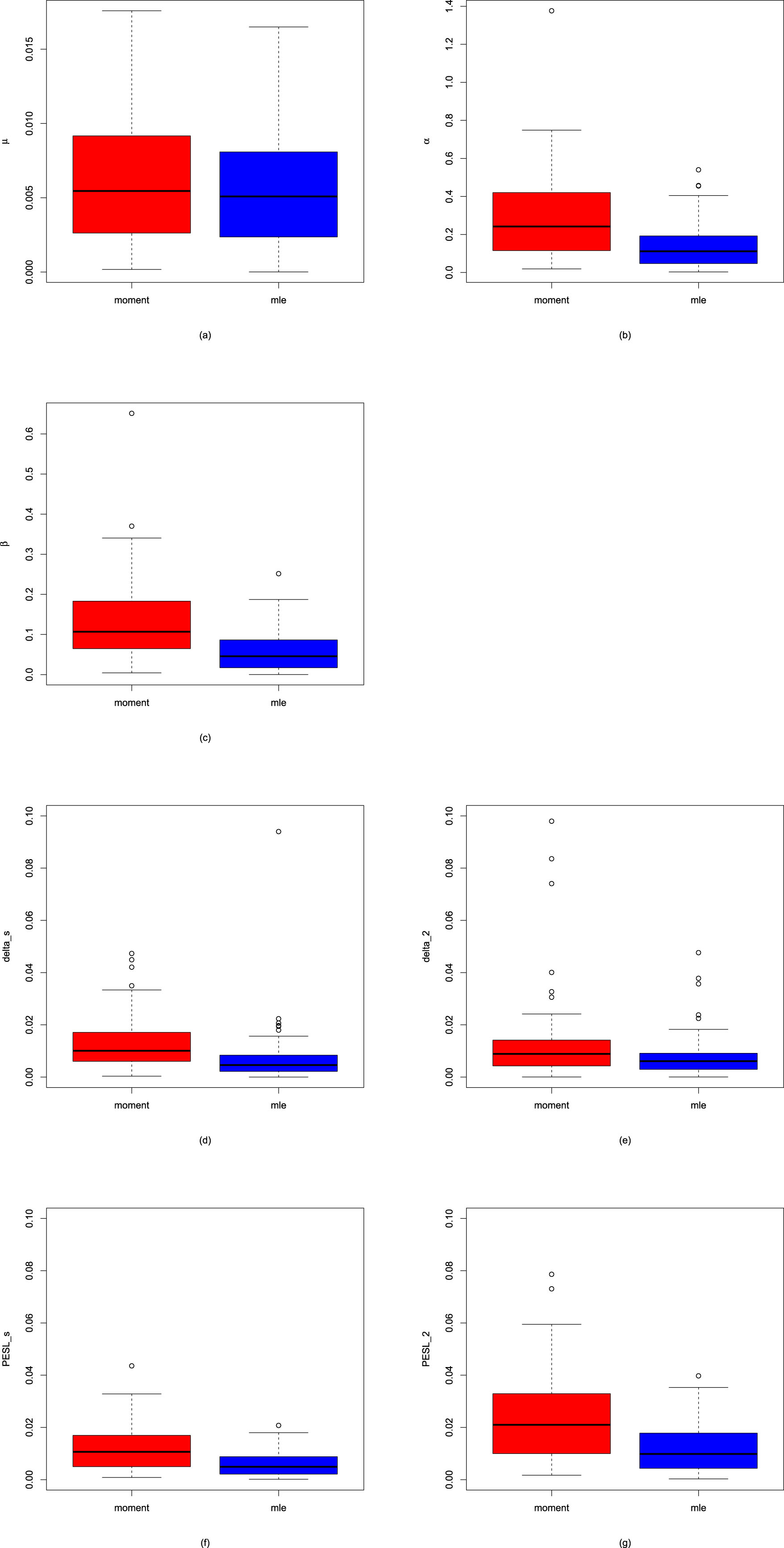

1.8.3 Numerical Comparisons of the Bayes Estimators and the PESLs of Three Methods

In this subsection, we will introduce two simulation techniques to numerically compare the Bayes estimators and the PESLs of the three methods (the oracle method, the moment method, and the MLE method). The first simulation technique is to calculate the averages and proportions of the absolute errors from the oracle method by the moment method and the MLE method, for the estimators of the hyperparameters, the Bayes estimators, and the PESLs. The second simulation technique is to calculate the Mean Square Error (MSE), Mean Absolute Error (MAE), and Mean Entropy Error (MEE) of the estimators of the hyperparameters.

The averages and proportions of the absolute errors

We can calculate the averages and proportions of the absolute errors from the oracle method by the moment method and the MLE method for the estimators of the hyperparameters, the Bayes estimators, and the PESLs. The averages of the absolute errors from the oracle method by the moment method and the MLE method are the sample averages of the absolute error vectors from the oracle method by the moment method and the MLE method; the smaller the better. The proportions of the absolute errors from the oracle method, by the moment method, and the MLE method are the sample proportions of the absolute errors by the method being equal to the minimum of the two absolute errors, the larger the better.

We will only give the mathematical formulas of the averages and proportions of the absolute errors from the oracle method by the moment method and the MLE method for the Bayes estimator under Stein’s loss function  . These quantities for the estimators of the hyperparameters, the Bayes estimator

. These quantities for the estimators of the hyperparameters, the Bayes estimator  , and the PESLs

, and the PESLs  and

and  are similar, and thus they are omitted.

are similar, and thus they are omitted.

To calculate the averages and proportions of the absolute errors from the oracle method by the moment method, and the MLE method for  , we need the absolute error vectors by the moment method and the MLE method for

, we need the absolute error vectors by the moment method and the MLE method for  , which are respectively given by

, which are respectively given by

and

and

The averages of the absolute errors from the oracle method by the moment method, and the MLE method for  are the sample averages of the absolute error vectors

are the sample averages of the absolute error vectors  and

and  , and the averages are respectively given by

, and the averages are respectively given by

and

and

With the two absolute error vectors  and

and  , we can compute a parallel minima vector of the absolute error vectors by

, we can compute a parallel minima vector of the absolute error vectors by

where pmin() is an R built-in function which returns a single vector giving the parallel minima of the argument vectors. Finally, the proportions

where pmin() is an R built-in function which returns a single vector giving the parallel minima of the argument vectors. Finally, the proportions  and

and  of the absolute errors from the oracle method by the moment method, and the MLE method for

of the absolute errors from the oracle method by the moment method, and the MLE method for  are computed by

are computed by

and

and

where

where  is the indicator function of

is the indicator function of  which is equal to

which is equal to  if

if  is true and

is true and  otherwise. To avoid the case of equal absolute errors from the oracle method by the moment method, and the MLE method for

otherwise. To avoid the case of equal absolute errors from the oracle method by the moment method, and the MLE method for  , we could compute

, we could compute

to ensure that the two proportions sum to

to ensure that the two proportions sum to  .

.

MSE, MAE, and MEE

The MSE, MAE, and MEE are criteria to compare two different estimators. The MSE, MAE, and MEE are risk functions (expected loss functions) of the estimators of the hyperparameters, and they could measure the performance of the estimators. The smaller the MSE, MAE, and MEE values, the better the estimator.

Let  be a hyperparameter. Let

be a hyperparameter. Let  be an estimator of θ, where

be an estimator of θ, where  is for the moment estimator and

is for the moment estimator and  is for the MLE. The MSE of the estimator

is for the MLE. The MSE of the estimator  is defined by (see Casella and Berger (2002))

is defined by (see Casella and Berger (2002))

Similarly, the MAE of the estimator  is defined by (see Casella and Berger (2002))

is defined by (see Casella and Berger (2002))

Moreover, the MEE of the estimator  is defined by

is defined by

where the entropy loss function is also known as Stein’s loss function, given by (1.10).

where the entropy loss function is also known as Stein’s loss function, given by (1.10).

1.9 R Codes

The R codes for the hierarchical IG-IG, G-G, Exp-IG, N-IG, N-NIG, U-IG, and P-G models and the figures of Several Common Loss Functions are available at Édition Diffusion Press (EDP) Sciences bookshop website: https://laboutique.edpsciences.fr/produit/1511/9782759839124/empirical-bayes-estimators-of-positive-parameters-in-hierarchical-models-under-stein-s-loss-function. Alternatively, one can send an email to the author at robertzhangyying@qq.com.

Chapter 2 The Empirical Bayes Estimators of the Rate Parameter of the Inverse Gamma Distribution with a Conjugate Inverse Gamma Prior under Stein’s Loss Function

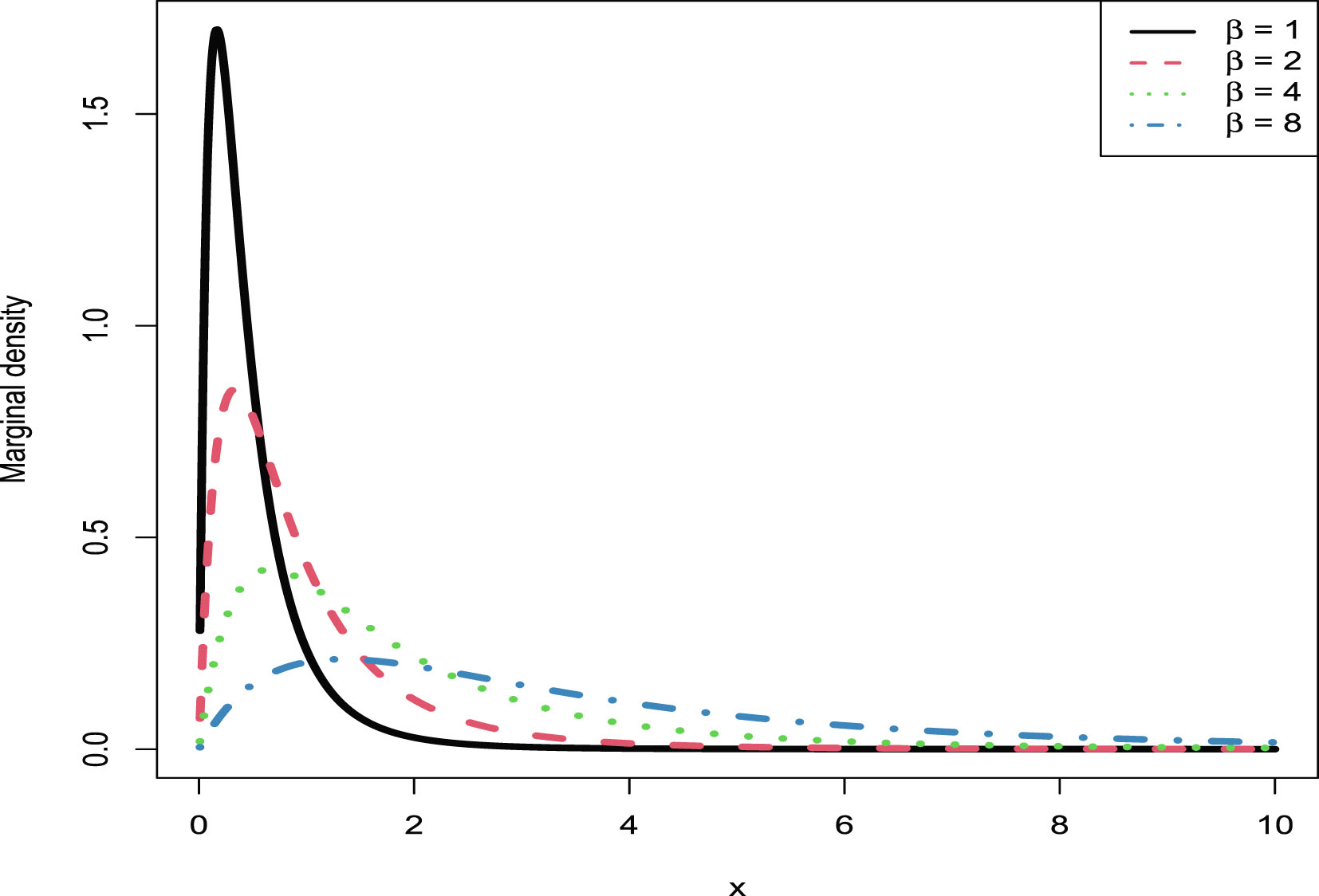

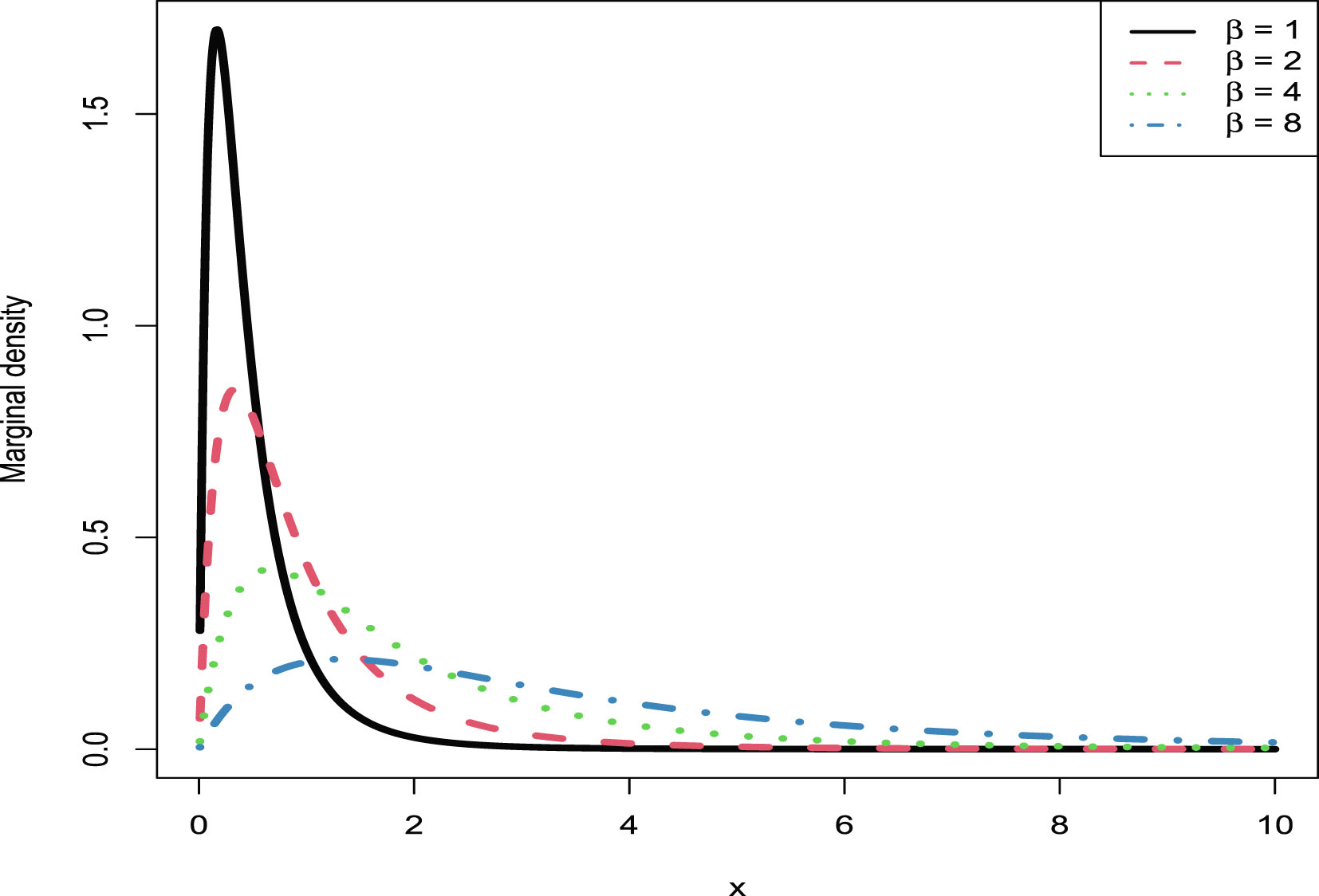

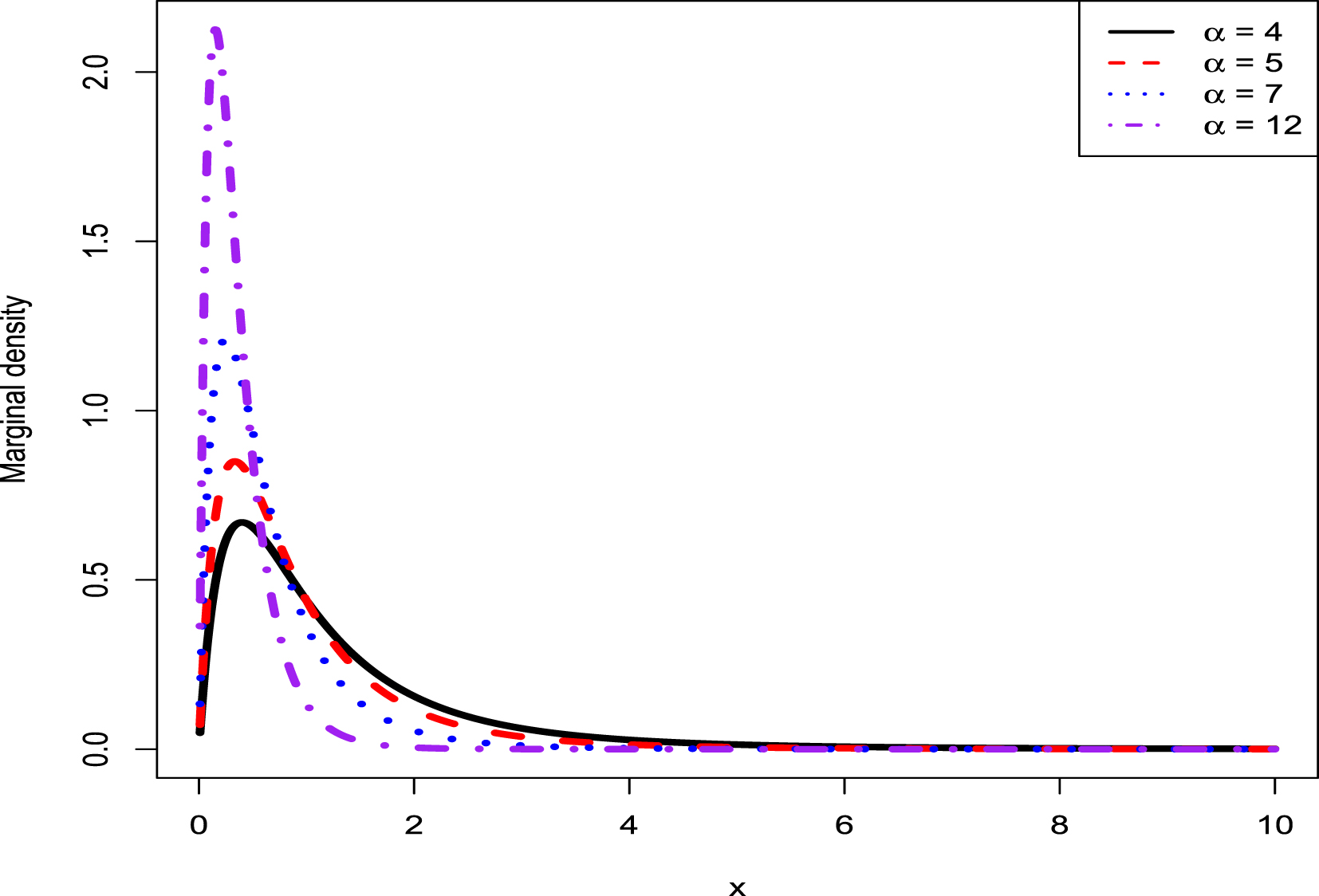

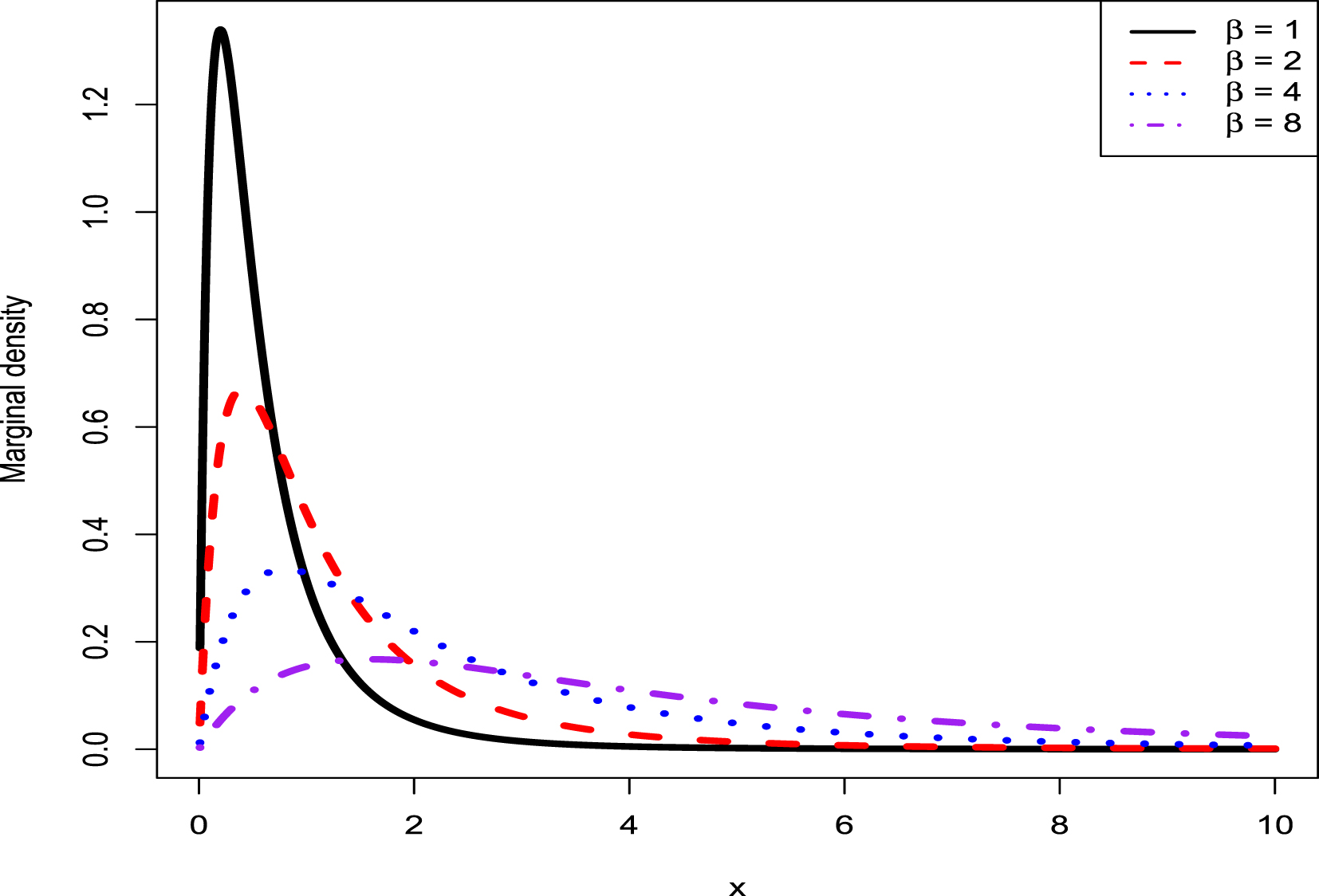

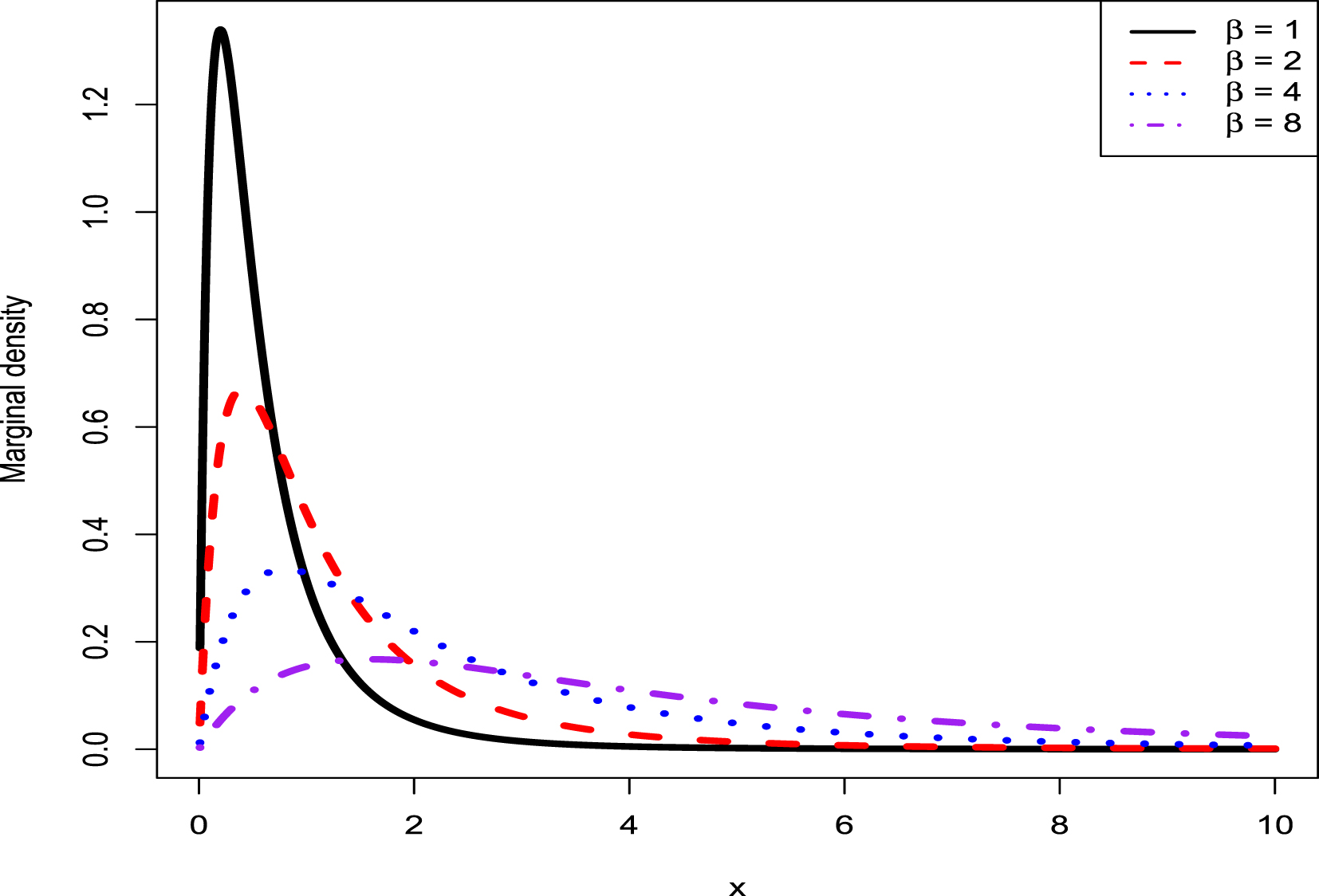

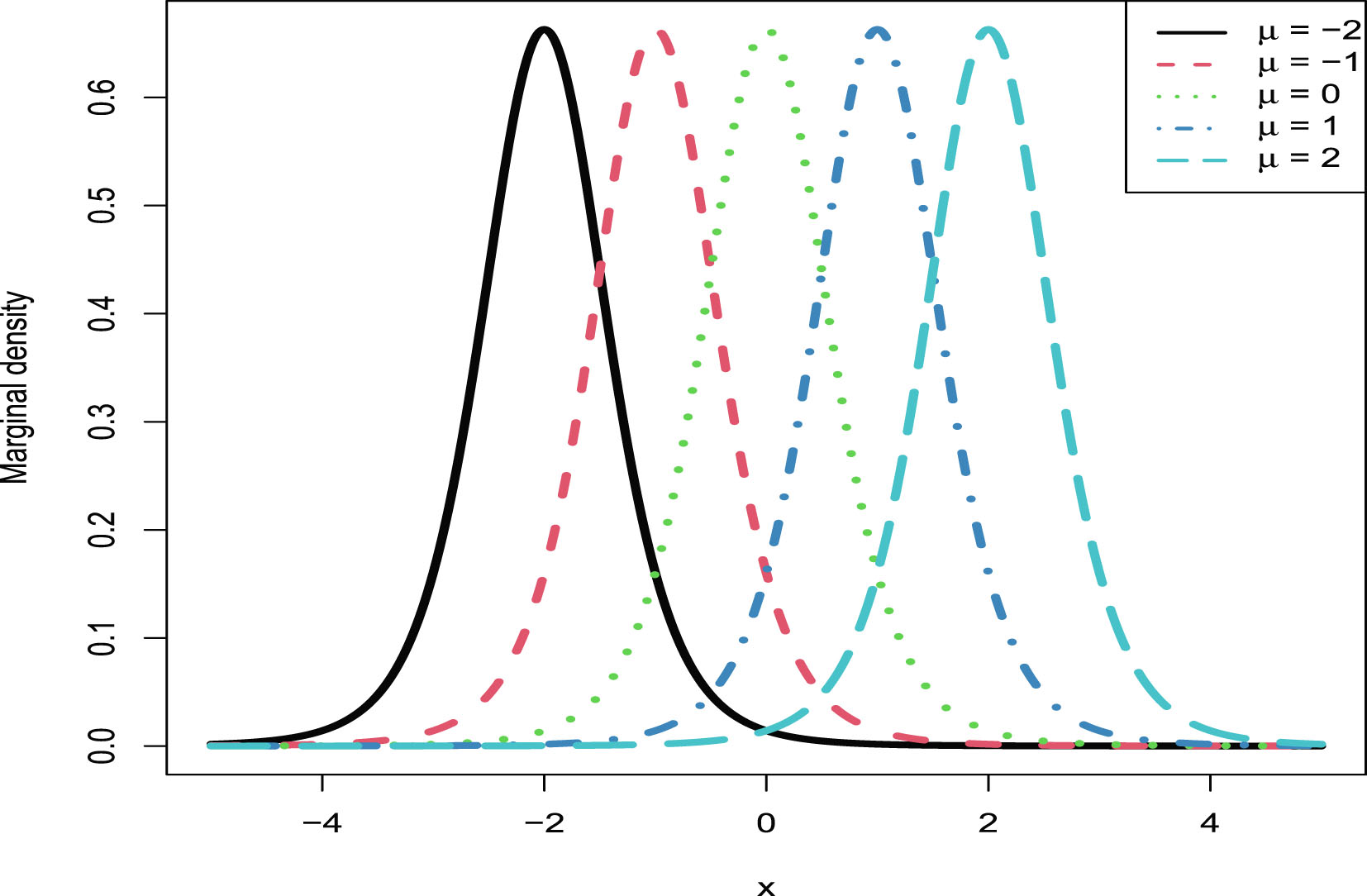

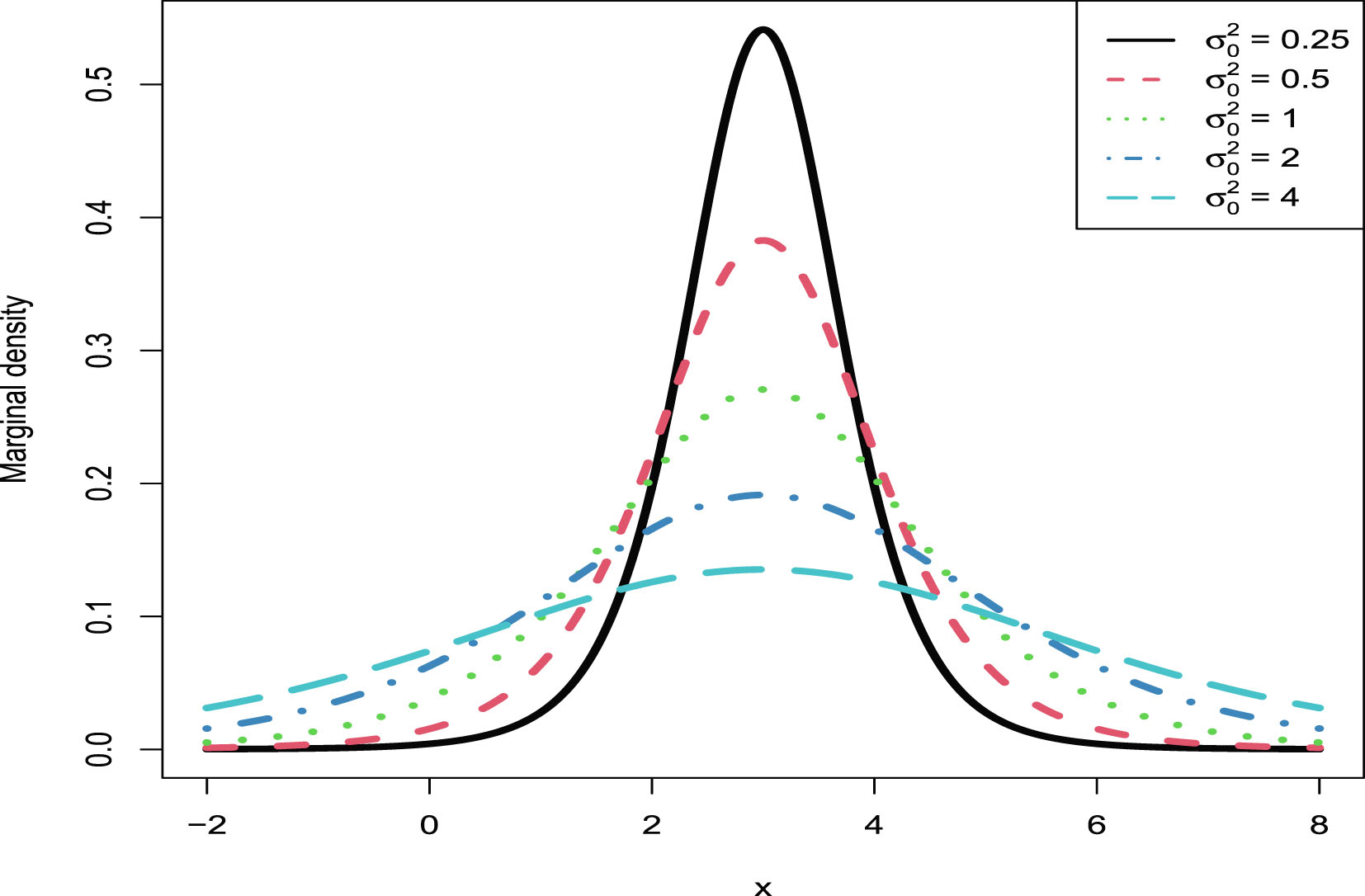

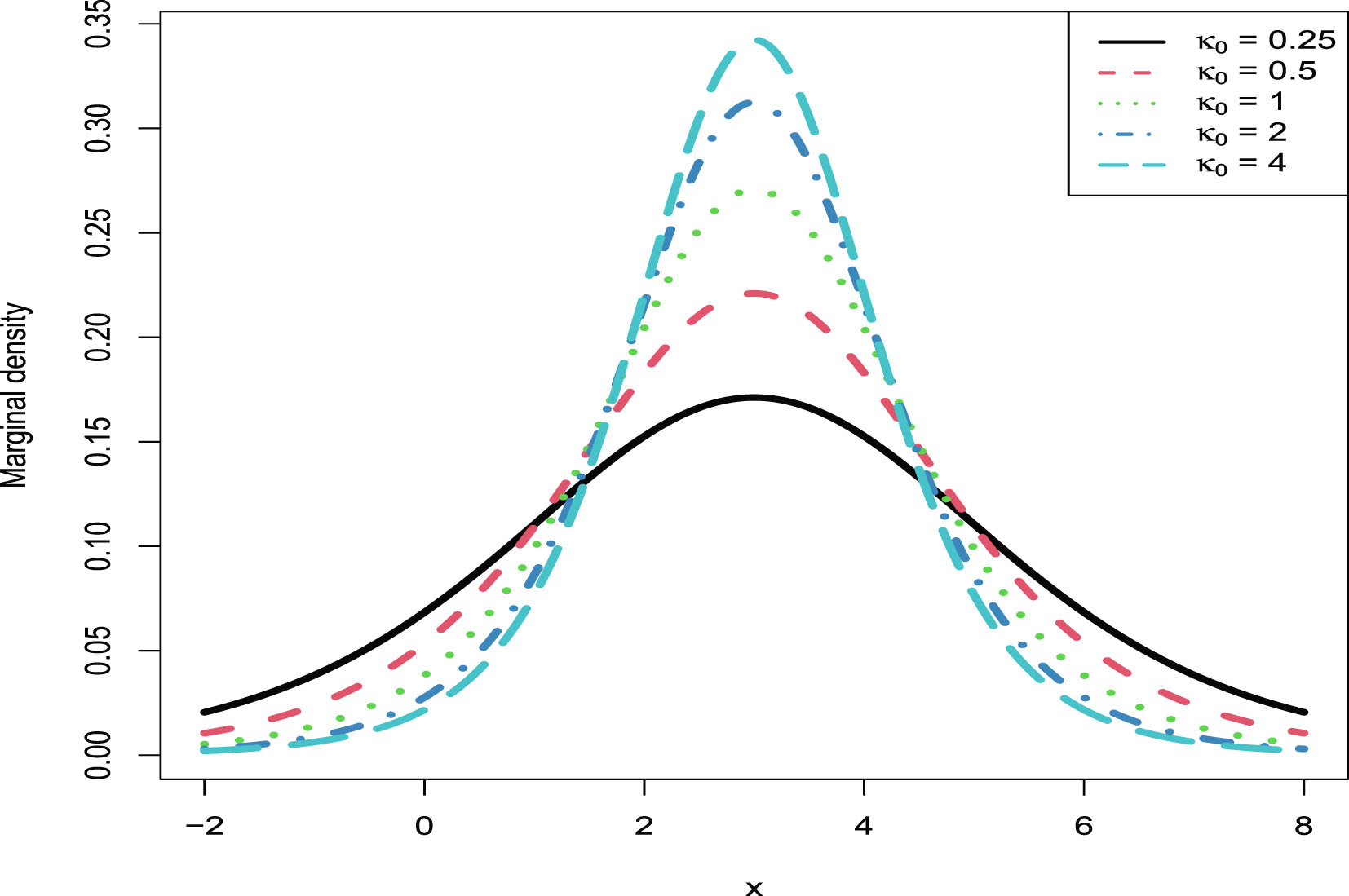

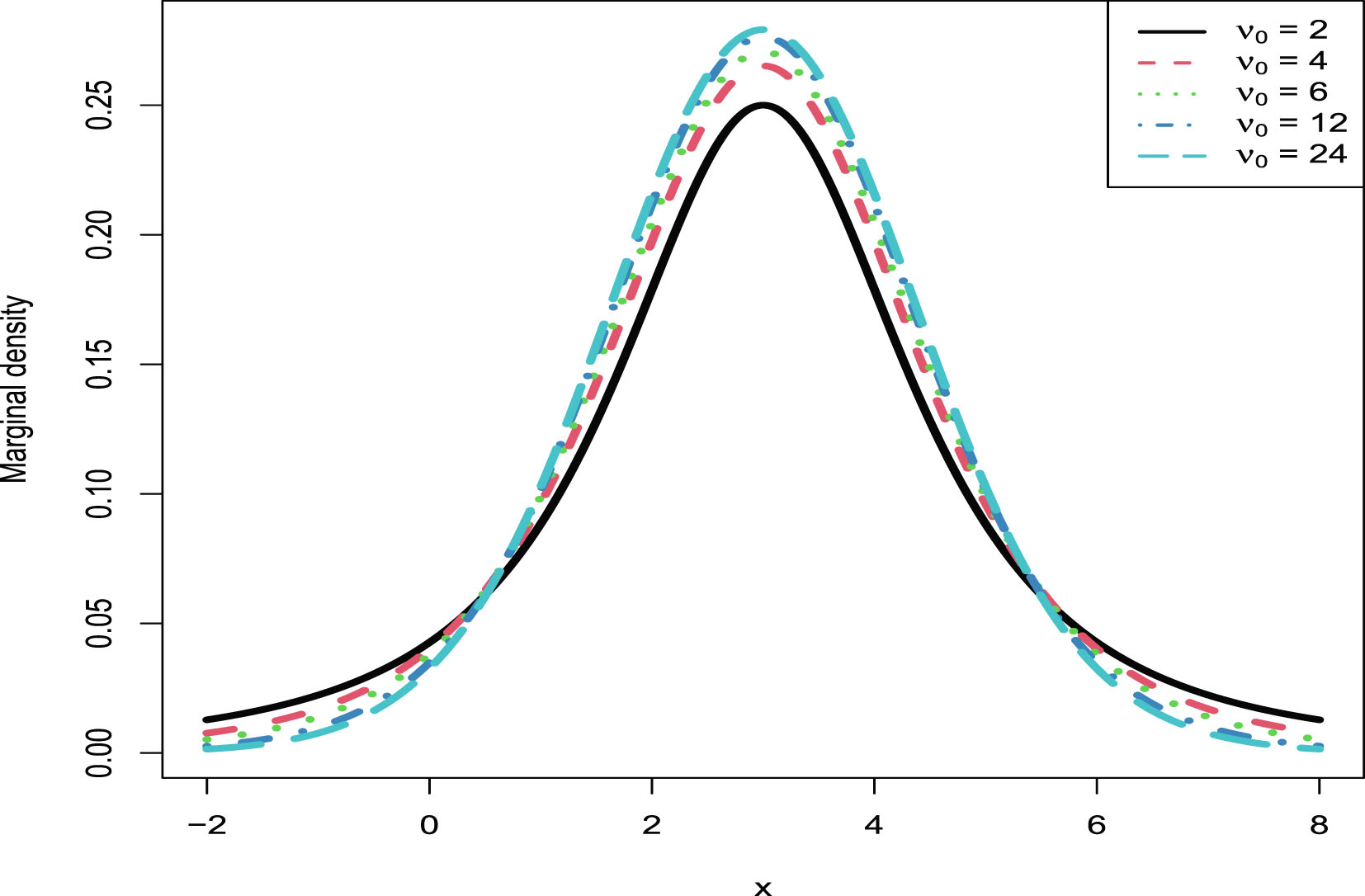

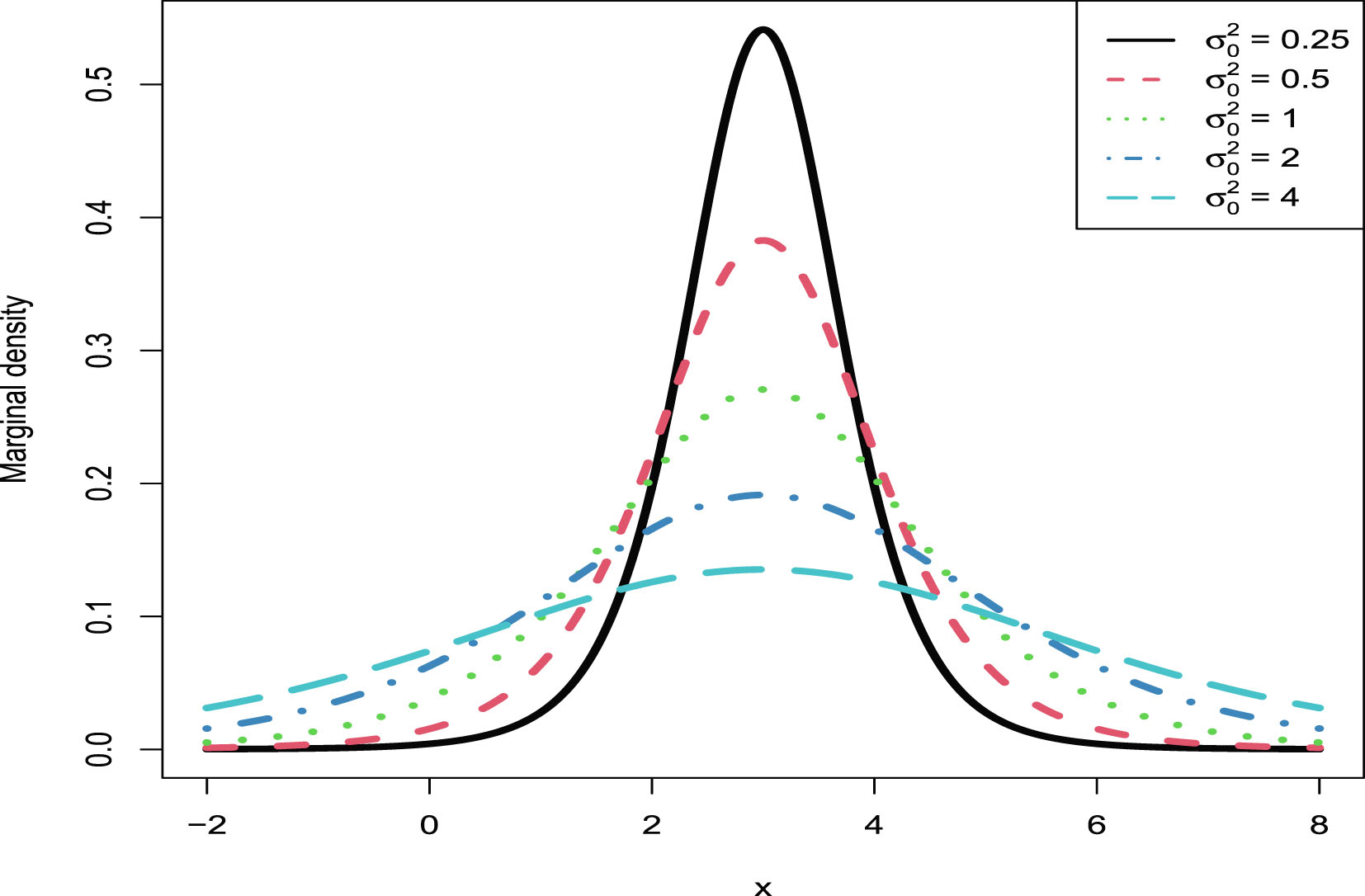

For the hierarchical inverse gamma and inverse gamma model, we calculate the Bayes estimator of the rate parameter of the inverse gamma distribution under Stein’s loss function, which penalizes gross overestimation and gross underestimation equally, and the corresponding PESL. We also obtain the Bayes estimator of the rate parameter under the squared error loss and the corresponding PESL. Moreover, we obtain the empirical Bayes estimators of the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior by the moment and MLE methods under Stein’s loss function. In numerical simulations, we have illustrated five aspects: The two inequalities of the Bayes estimators and the PESLs for the oracle method; the moment estimators and the MLEs are consistent estimators of the hyperparameters; the goodness-of-fit of the model to the simulated data; the comparisons of the Bayes estimators and the PESLs of the oracle, moment, and MLE methods; and the marginal densities of the model for various hyperparameters. The numerical results indicate that the MLEs are better than the moment estimators when estimating the hyperparameters. Finally, the model could potentially be used to fit right-skewed data, not left-skewed data.

Acknowledgement. This chapter is derived in part from an article Sun et al. (2021) published in the Journal of Statistical Computation and Simulation, 12 December 2020 <copyright Taylor & Francis>, available online: http://www.tandfonline.com/10.1080/00949655.2020.1858299.

2.1 Introduction

Since the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior is a positive parameter,  , the Bayes estimator of

, the Bayes estimator of  under the squared error loss function, is not appropriate. In contrast, we should select Stein’s loss function because it penalizes gross overestimation and gross underestimation equally. To determine the unknown hyperparameters, we adopt the empirical Bayes method. In this chapter, we calculate the Bayes estimator of

under the squared error loss function, is not appropriate. In contrast, we should select Stein’s loss function because it penalizes gross overestimation and gross underestimation equally. To determine the unknown hyperparameters, we adopt the empirical Bayes method. In this chapter, we calculate the Bayes estimator of  under Stein’s loss function and the corresponding PESL. We also obtain the Bayes estimator of

under Stein’s loss function and the corresponding PESL. We also obtain the Bayes estimator of  under the squared error loss function and the corresponding PESL. Moreover, we obtain the empirical Bayes estimators of the rate parameter

under the squared error loss function and the corresponding PESL. Moreover, we obtain the empirical Bayes estimators of the rate parameter  of the inverse gamma distribution with a conjugate inverse gamma prior by the moment method and the MLE method under Stein’s loss function.

of the inverse gamma distribution with a conjugate inverse gamma prior by the moment method and the MLE method under Stein’s loss function.

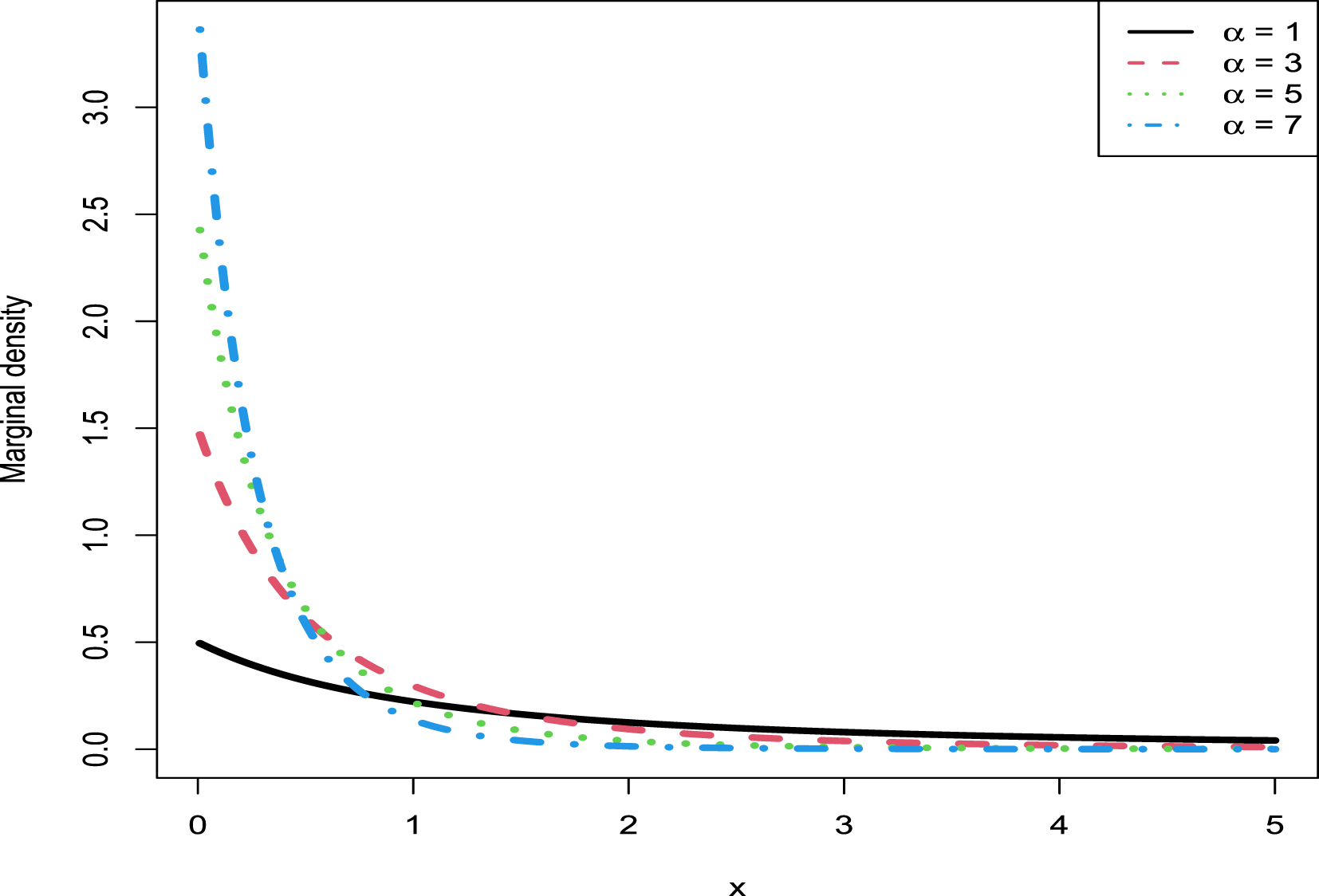

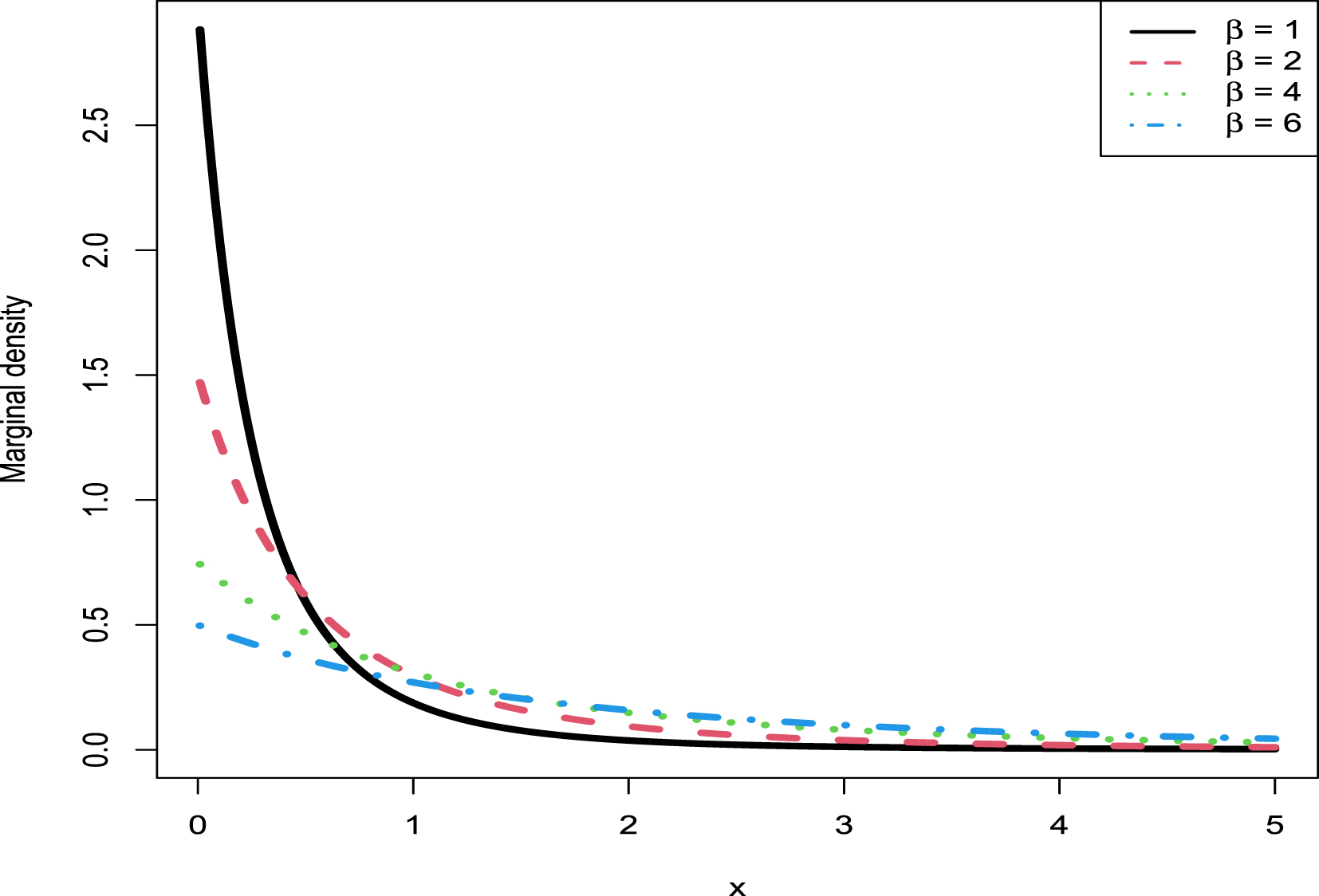

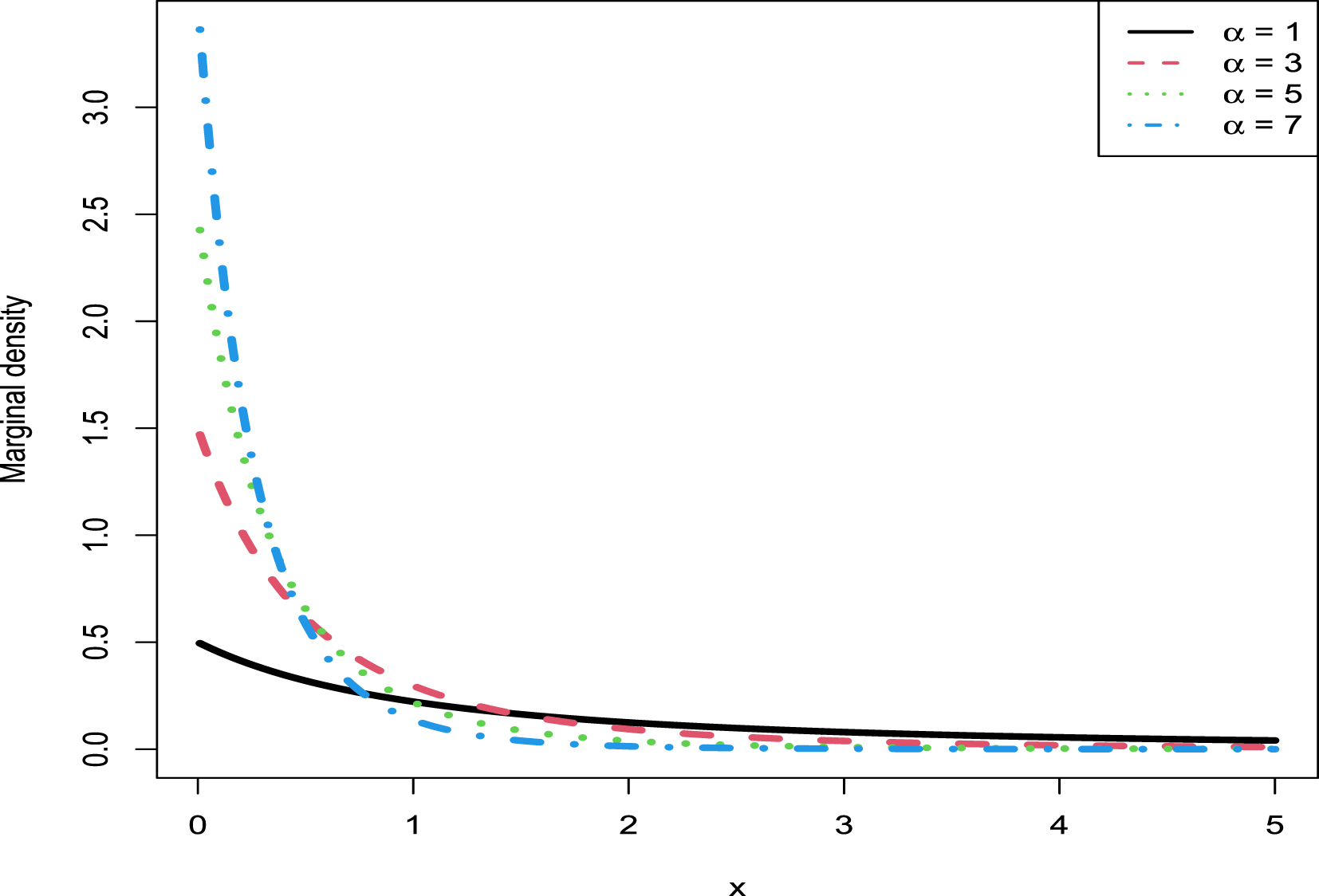

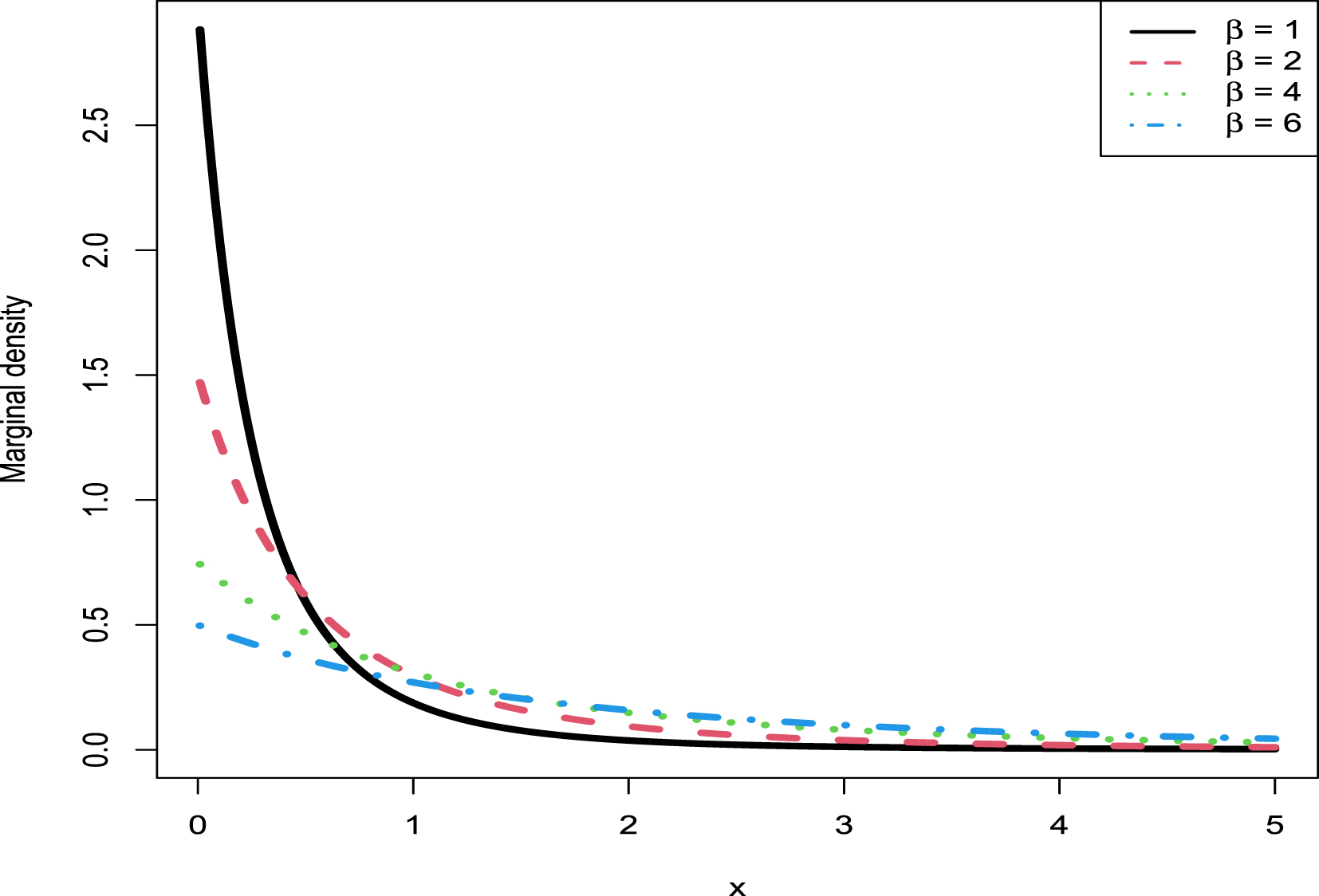

The rest of the chapter is organized as follows. In section 2.2, we calculate the Bayes estimators and the PESLs, and they satisfy two inequalities (2.6) and (2.9). Moreover, we summarize the empirical Bayes estimators of the parameter of the model (2.1) under Stein’s loss function by the moment method and the MLE method in Theorem 2.4. Furthermore, we have theoretically compared the Bayes estimators and the PESLs of the three methods (the oracle method, the moment method, and the MLE method). In section 2.3, we will carry out some numerical simulations, where we will illustrate five aspects. First, we will numerically exemplify the two inequalities of the Bayes estimators and the PESLs for the oracle method. Second, we will illustrate that the moment estimators and the MLEs are consistent estimators of the hyperparameters. Third, we will calculate the goodness-of-fit of the model (2.1) to the simulated data. Fourth, we will numerically compare the Bayes estimators and the PESLs of the three methods. Finally, we will plot the marginal densities of the hierarchical inverse gamma and inverse gamma model (2.1) for various hyperparameters. Some conclusions and discussions are provided in section 2.4.

2.2 Theoretical Results

In this section, we will give some theoretical results for the hierarchical inverse gamma and inverse gamma model (2.1). First, we will calculate the Bayes estimators and the PESLs of the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior. Second, we will obtain the empirical Bayes estimators of the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior. Third, we will theoretically compare the Bayes estimators and the PESLs of three methods (the oracle method, the moment method, and the MLE method).

Suppose that we observe  from the hierarchical inverse gamma and inverse gamma model:

from the hierarchical inverse gamma and inverse gamma model:

(2.1)

where

(2.1)

where  ,

,  , and

, and  are hyperparameters to be estimated,

are hyperparameters to be estimated,  is the unknown parameter of interest,

is the unknown parameter of interest,  is the inverse gamma distribution with shape parameter

is the inverse gamma distribution with shape parameter  and rate parameter

and rate parameter  , and

, and  is the inverse gamma distribution with shape parameter

is the inverse gamma distribution with shape parameter  and rate parameter

and rate parameter  . The pdf of the inverse gamma distribution can be found in section 1.2. As described in Deely and Lindley (1981), the statistician observes data

. The pdf of the inverse gamma distribution can be found in section 1.2. As described in Deely and Lindley (1981), the statistician observes data  and wishes to make an inference about

and wishes to make an inference about  . Therefore,

. Therefore,  provides direct information about the parameter

provides direct information about the parameter  , while supplementary information

, while supplementary information  is also available. The connection between the prime data

is also available. The connection between the prime data  and the supplementary information

and the supplementary information  is provided by the common distributions

is provided by the common distributions  and

and  .

.

Now we give the justifications of why  is the only parameter of interest. The pdf of

is the only parameter of interest. The pdf of  is

is

for

for  and

and  . We can only handle the case of unknown rate parameter

. We can only handle the case of unknown rate parameter  , letting the shape parameter

, letting the shape parameter  be a hyperparameter to be determined. If the shape parameter

be a hyperparameter to be determined. If the shape parameter  is also an unknown parameter of interest, then we have to deal with the

is also an unknown parameter of interest, then we have to deal with the  part in the posterior distribution, which is very complicated and has no analytical solutions. It seems that the Bayesian community avoids dealing with such a situation by letting

part in the posterior distribution, which is very complicated and has no analytical solutions. It seems that the Bayesian community avoids dealing with such a situation by letting  be a known constant or assuming

be a known constant or assuming  be a hyperparameter to be determined.

be a hyperparameter to be determined.

2.2.1 The Bayes Estimators and the PESLs

In this subsection, we will calculate the Bayes estimators and the PESLs of the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior.

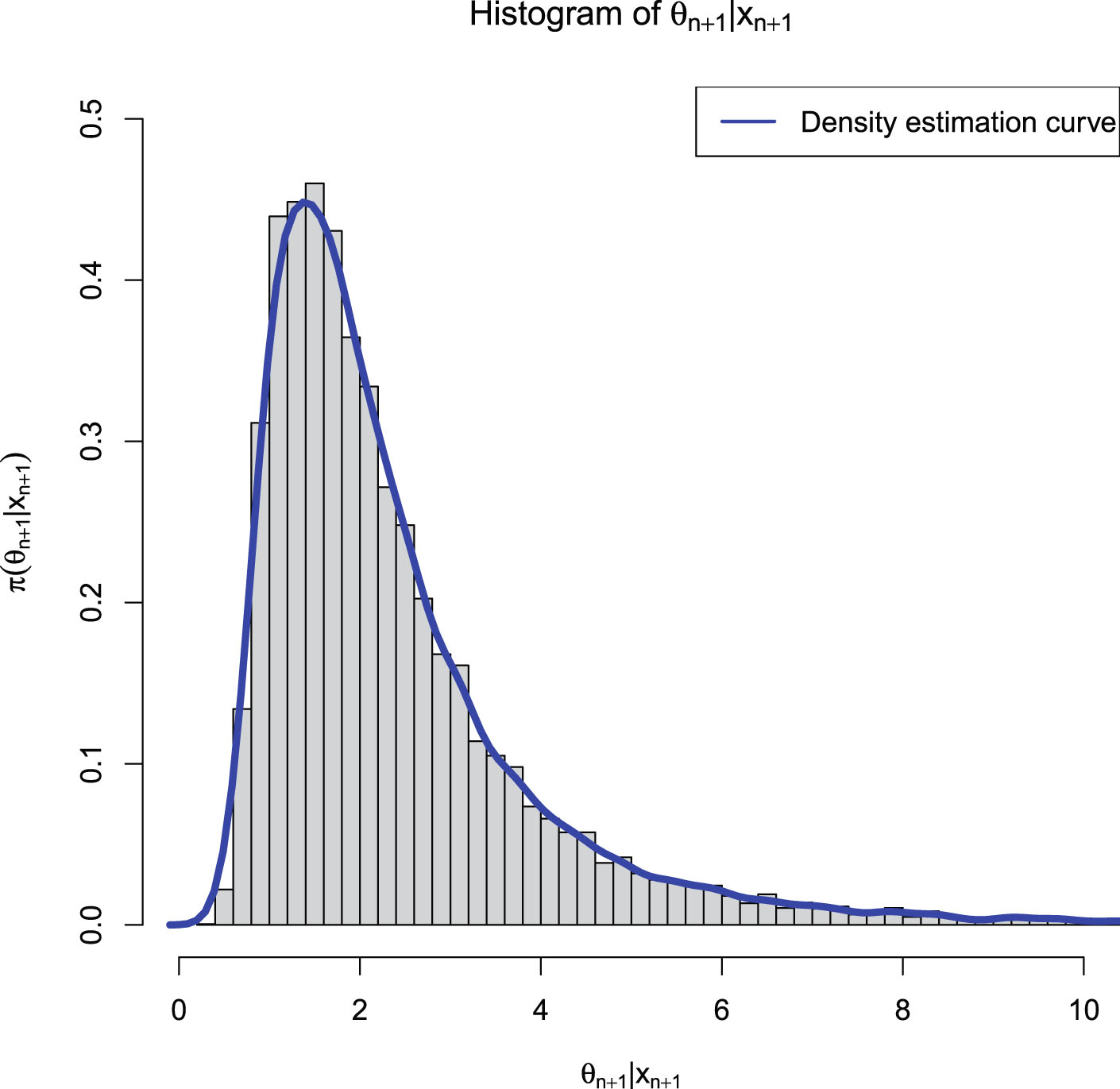

For the hierarchical inverse gamma and inverse gamma model (2.1), the posterior density of  and the marginal density of

and the marginal density of  are given by the following theorem, whose proof can be found in appendix A.1.

are given by the following theorem, whose proof can be found in appendix A.1.

Theorem 2.1. For the hierarchical inverse gamma and inverse gamma model (2.1), the posterior density of  is

is

where

where

(2.2)

(2.2)

Moreover, the marginal density of  is

is

(2.3)

for

(2.3)

for  and

and  .

.

From Theorem 2.1, we have

Since  is a rate parameter of the inverse gamma distribution, the Bayes estimator of

is a rate parameter of the inverse gamma distribution, the Bayes estimator of  under the squared error loss function is not appropriate. In contrast, we should choose Stein’s loss function (James and Stein (1961); see also Brown (1990)) because it penalizes gross overestimation and gross underestimation equally. The Bayes estimator of

under the squared error loss function is not appropriate. In contrast, we should choose Stein’s loss function (James and Stein (1961); see also Brown (1990)) because it penalizes gross overestimation and gross underestimation equally. The Bayes estimator of  under Stein’s loss function is given by (see Zhang (2017))

under Stein’s loss function is given by (see Zhang (2017))

(2.4)

where

(2.4)

where  and

and  are given by (2.2). Moreover, we also calculate the Bayes estimator of

are given by (2.2). Moreover, we also calculate the Bayes estimator of  under the usual squared error loss function,

under the usual squared error loss function,

(2.5)

(2.5)

It is easy to show that

(2.6)

which exemplifies the theoretical study of (1.16). Furthermore, from Zhang (2017), the PESLs at

(2.6)

which exemplifies the theoretical study of (1.16). Furthermore, from Zhang (2017), the PESLs at  and

and  are respectively given by

are respectively given by

(2.7)

and

(2.7)

and

(2.8)

where

(2.8)

where

is the digamma function. It is easy to show that

is the digamma function. It is easy to show that

(2.9)

which exemplifies the theoretical study of (1.17). The numerical simulations will exemplify (2.6) and (2.9).

(2.9)

which exemplifies the theoretical study of (1.17). The numerical simulations will exemplify (2.6) and (2.9).

It is worth noting that the Bayes estimators ( and

and  ) and the PESLs

) and the PESLs  and

and  in this subsection assume that the hyperparameters

in this subsection assume that the hyperparameters  ,

,  , and

, and  are known. In other words, the Bayes estimators and the PESLs in this subsection are calculated by the oracle method, which will be further discussed in subsection 2.2.3.

are known. In other words, the Bayes estimators and the PESLs in this subsection are calculated by the oracle method, which will be further discussed in subsection 2.2.3.

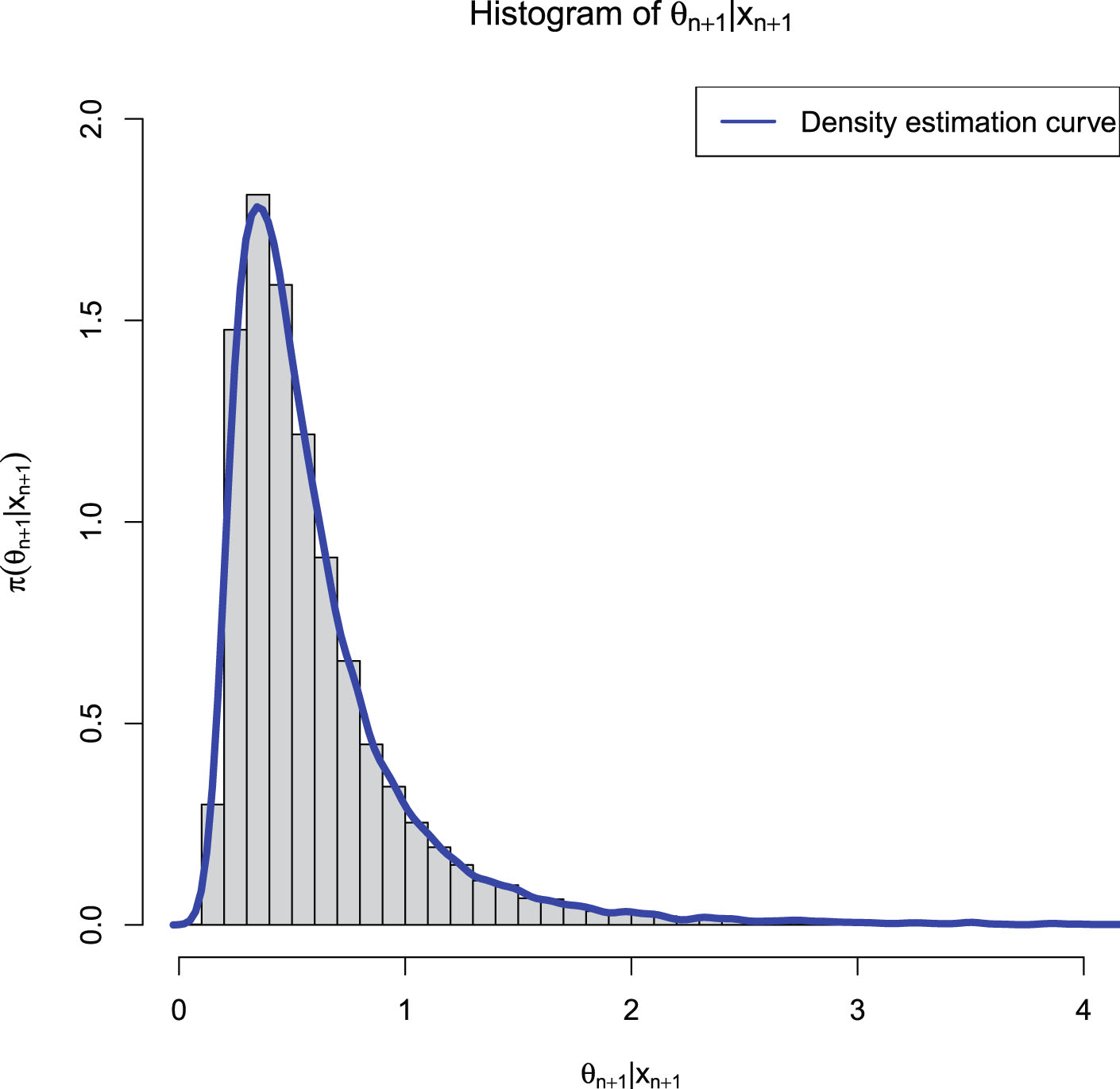

2.2.2 The Empirical Bayes Estimators of θn+1

In this subsection, we will obtain the empirical Bayes estimators of the rate parameter of the inverse gamma distribution with a conjugate inverse gamma prior.

To obtain the empirical Bayes estimators of  , we need to estimate the hyperparameters from the supplementary information

, we need to estimate the hyperparameters from the supplementary information  . There are two common methods to estimate the hyperparameters: the moment method and the MLE method.

. There are two common methods to estimate the hyperparameters: the moment method and the MLE method.

The estimators of the hyperparameters of the model (2.1) by the moment method  ,

,  , and

, and  and their consistencies are summarized in the following theorem, whose proof can be found in appendix A.2.

and their consistencies are summarized in the following theorem, whose proof can be found in appendix A.2.

Theorem 2.2. The estimators of the hyperparameters of the model (2.1) by the moment method are

(2.10)

(2.10)

(2.11)

(2.11)

(2.12)

where

(2.12)

where  ,

,  , is the sample kth moment of

, is the sample kth moment of  . Moreover, the moment estimators are consistent estimators of the hyperparameters.

. Moreover, the moment estimators are consistent estimators of the hyperparameters.

The estimators of the hyperparameters of the model (2.1) by the MLE method  ,

,  , and

, and  and their consistencies are summarized in the following theorem whose proof can be found in appendix A.3.

and their consistencies are summarized in the following theorem whose proof can be found in appendix A.3.

Theorem 2.3. The estimators of the hyperparameters of the model (2.1) by the MLE method  ,

,  , and

, and  are the solutions to the following equations:

are the solutions to the following equations:

(2.13)

(2.13)

(2.14)

(2.14)

(2.15)

(2.15)

Moreover, the MLEs are consistent estimators of the hyperparameters.

The analytical calculations of the MLEs of  ,

,  , and

, and  by solving the equations (2.13)–(2.15) are impossible, and thus we have to resort to numerical solutions. We can exploit Newton’s method to solve the equations equations (2.13)–(2.15) and to obtain the MLEs of

by solving the equations (2.13)–(2.15) are impossible, and thus we have to resort to numerical solutions. We can exploit Newton’s method to solve the equations equations (2.13)–(2.15) and to obtain the MLEs of  ,

,  , and

, and  . Note that the MLEs of

. Note that the MLEs of  ,

,  , and

, and  are very sensitive to the initial estimators, and the moment estimators are usually proved to be good initial estimators.

are very sensitive to the initial estimators, and the moment estimators are usually proved to be good initial estimators.

Finally, the empirical Bayes estimators of the parameter of the model (2.1) under Stein’s loss function by the moment method and the MLE method are summarized in the following theorem.

Theorem 2.4. The empirical Bayes estimator of the parameter of the model (2.1) under Stein’s loss function by the moment method is given by (2.4) with the hyperparameters estimated by  in Theorem 2.2. Alternatively, the empirical Bayes estimator of the parameter of the model (2.1) under Stein’s loss function by the MLE method is given by (2.4) with the hyperparameters estimated by

in Theorem 2.2. Alternatively, the empirical Bayes estimator of the parameter of the model (2.1) under Stein’s loss function by the MLE method is given by (2.4) with the hyperparameters estimated by  numerically determined in Theorem 2.3.

numerically determined in Theorem 2.3.

2.2.3 Theoretical Comparisons of the Bayes Estimators and the PESLs of Three Methods

In this subsection, similar to section 1.7, we will theoretically compare the Bayes estimators and the PESLs of three methods (the oracle method, the moment method, and the MLE method) for the hierarchical inverse gamma and inverse gamma model (2.1). Note that the numerical comparisons of the Bayes estimators and the PESLs of the three methods can be found in subsection 2.3.4.

Note that the subscripts 0, 1, and 2 below are for the oracle method, the moment method, and the MLE method, respectively. The PESLs of the three methods are respectively given by

where

where

,

,  , and

, and  are unknown hyperparameters,

are unknown hyperparameters,  ,

,  , and

, and  are the moment estimators of the hyperparameters given in Theorem 2.2, and

are the moment estimators of the hyperparameters given in Theorem 2.2, and  ,

,  , and

, and  are the MLEs of the hyperparameters numerically determined in Theorem 2.3.

are the MLEs of the hyperparameters numerically determined in Theorem 2.3.

The Bayes estimators of  under Stein’s loss function, are given by

under Stein’s loss function, are given by

and

and

The Bayes estimators of  under the squared error loss function are given by

under the squared error loss function are given by

for

for  .

.

The PESLs evaluated at the Bayes estimators

are given by

are given by

The PESLs evaluated at the Bayes estimators

are given by

are given by

2.3 Simulations

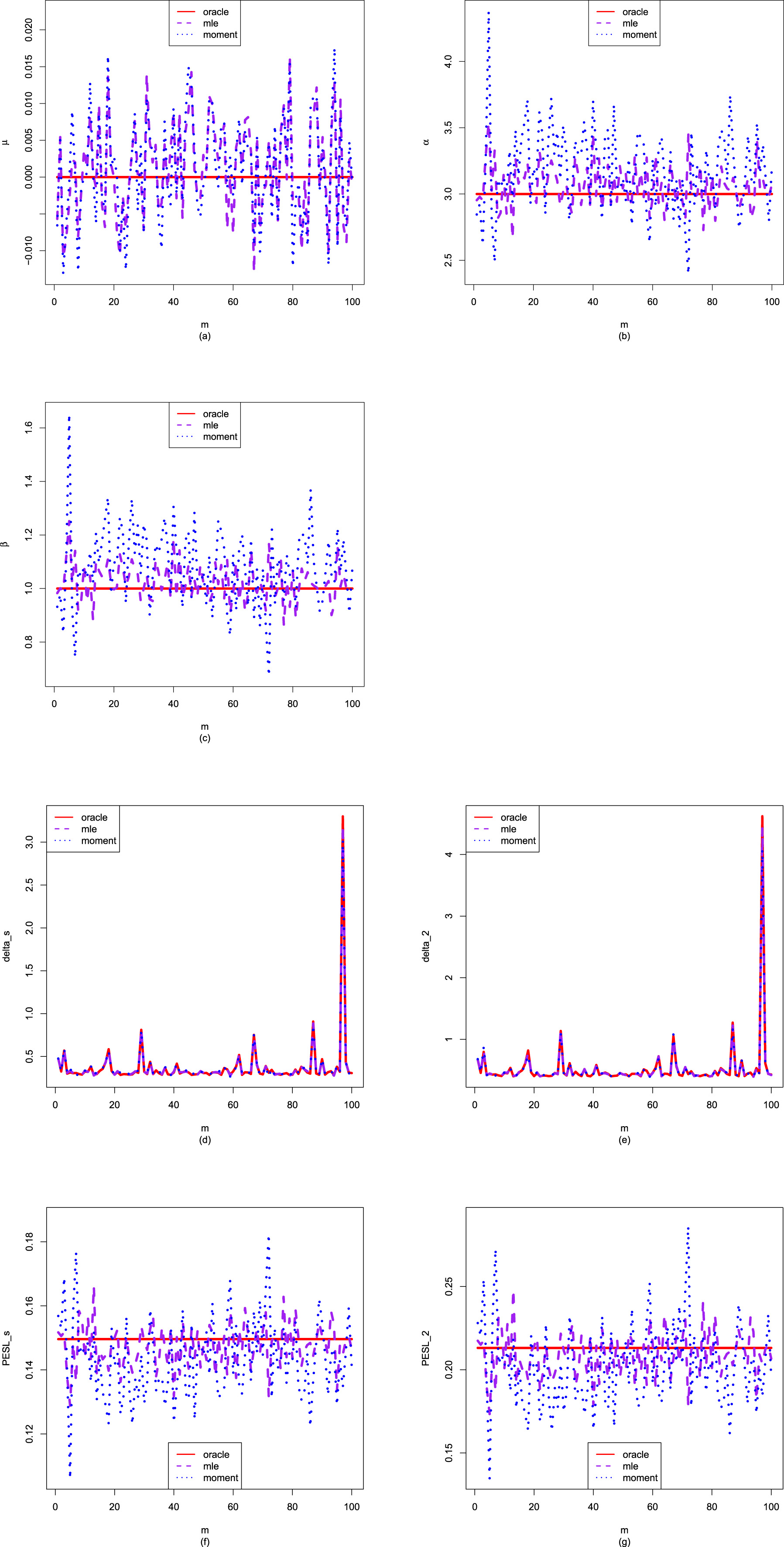

In this section, we will carry out the numerical simulations for the hierarchical inverse gamma and inverse gamma model (2.1). We will illustrate five aspects. First, we will numerically exemplify (2.6) and (2.9) for the oracle method. Second, we will illustrate that the moment estimators and the MLEs are consistent estimators of the hyperparameters. Third, we will calculate the goodness-of-fit of the model (2.1) to the simulated data. Fourth, we will numerically compare the Bayes estimators and the PESLs of the three methods (the oracle method, the moment method, and the MLE method). Finally, we will plot the marginal densities of the model (2.1) for various hyperparameters.

The simulated data are generated according to model (2.1) with the hyperparameters specified by  ,

,  , and

, and  . The reason why we choose these values is that

. The reason why we choose these values is that  ,

,  , and

, and  are required in the moment estimations of the hyperparameters. Other numerical values of the hyperparameters can also be specified.

are required in the moment estimations of the hyperparameters. Other numerical values of the hyperparameters can also be specified.

2.3.1 Two Inequalities of the Bayes Estimators and the PESLs

In this subsection, we will numerically exemplify the two inequalities of the Bayes estimators and the PESLs (2.6) and (2.9) for the oracle method. The motivation of this subsection is that theoretically we have the two inequalities (2.6) and (2.9).

First, we fix  ,

,  , and

, and  . Then we set a seed number 1 in R software and draw

. Then we set a seed number 1 in R software and draw  from

from  . After that, we draw

. After that, we draw  from

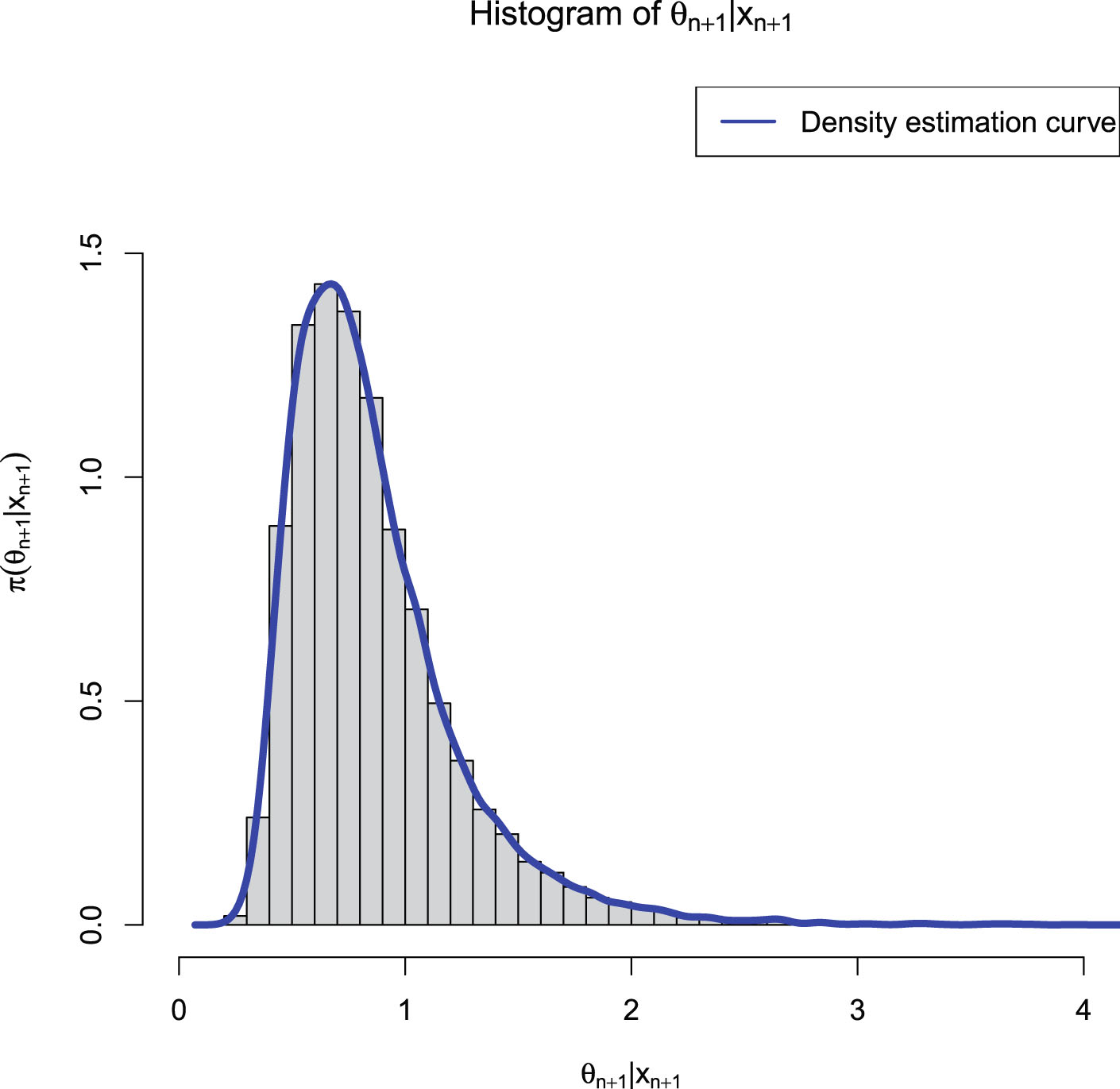

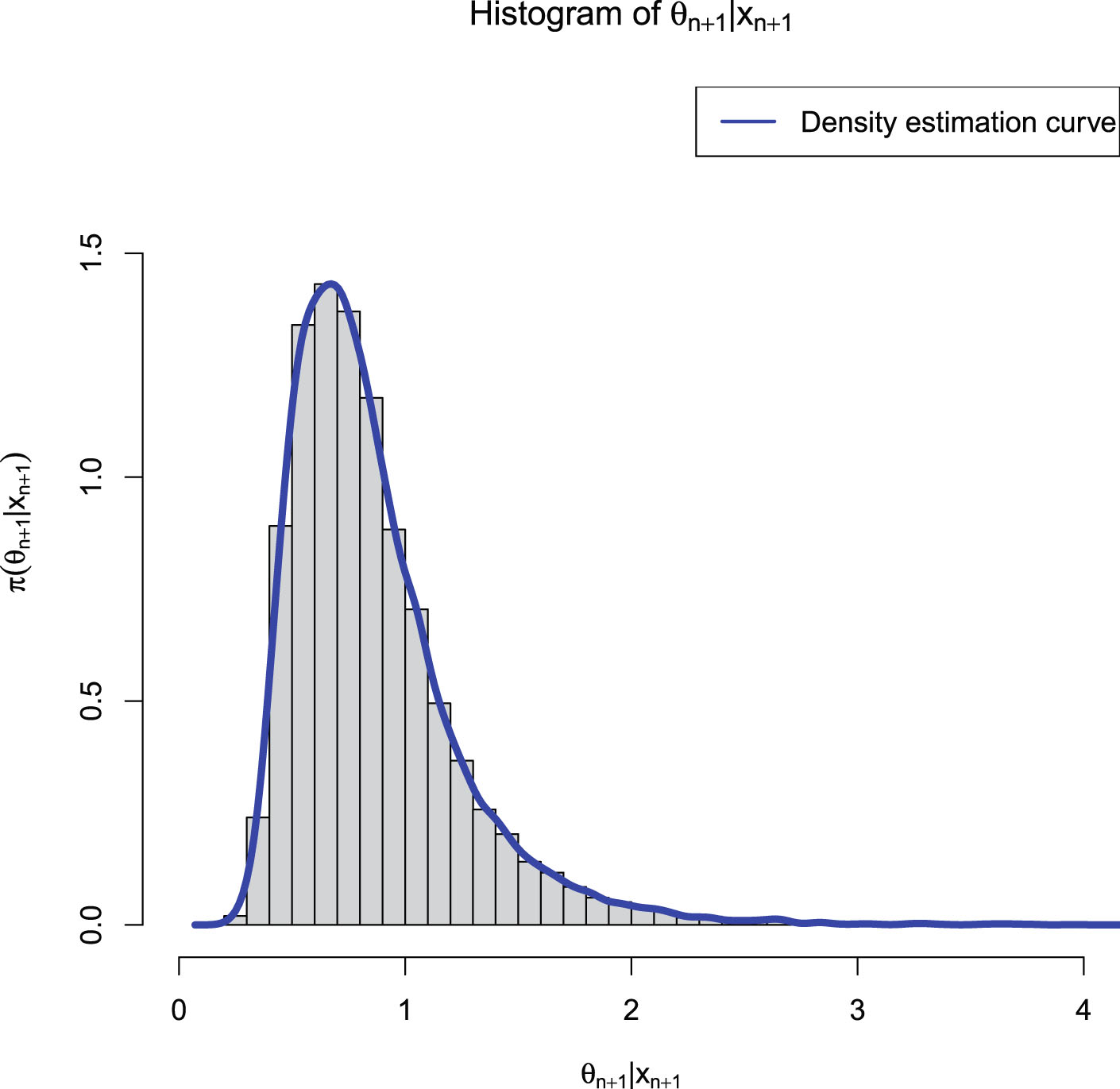

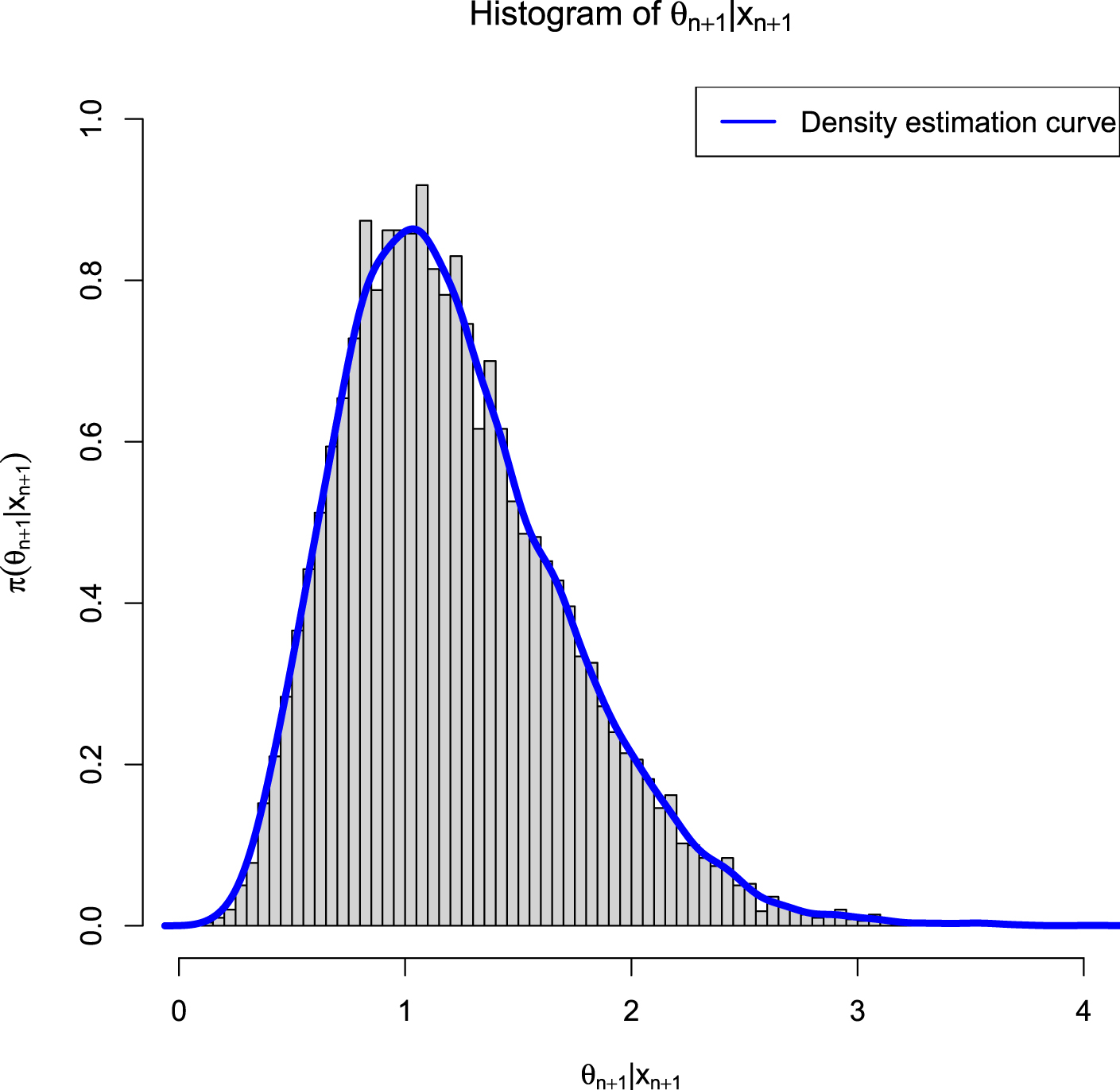

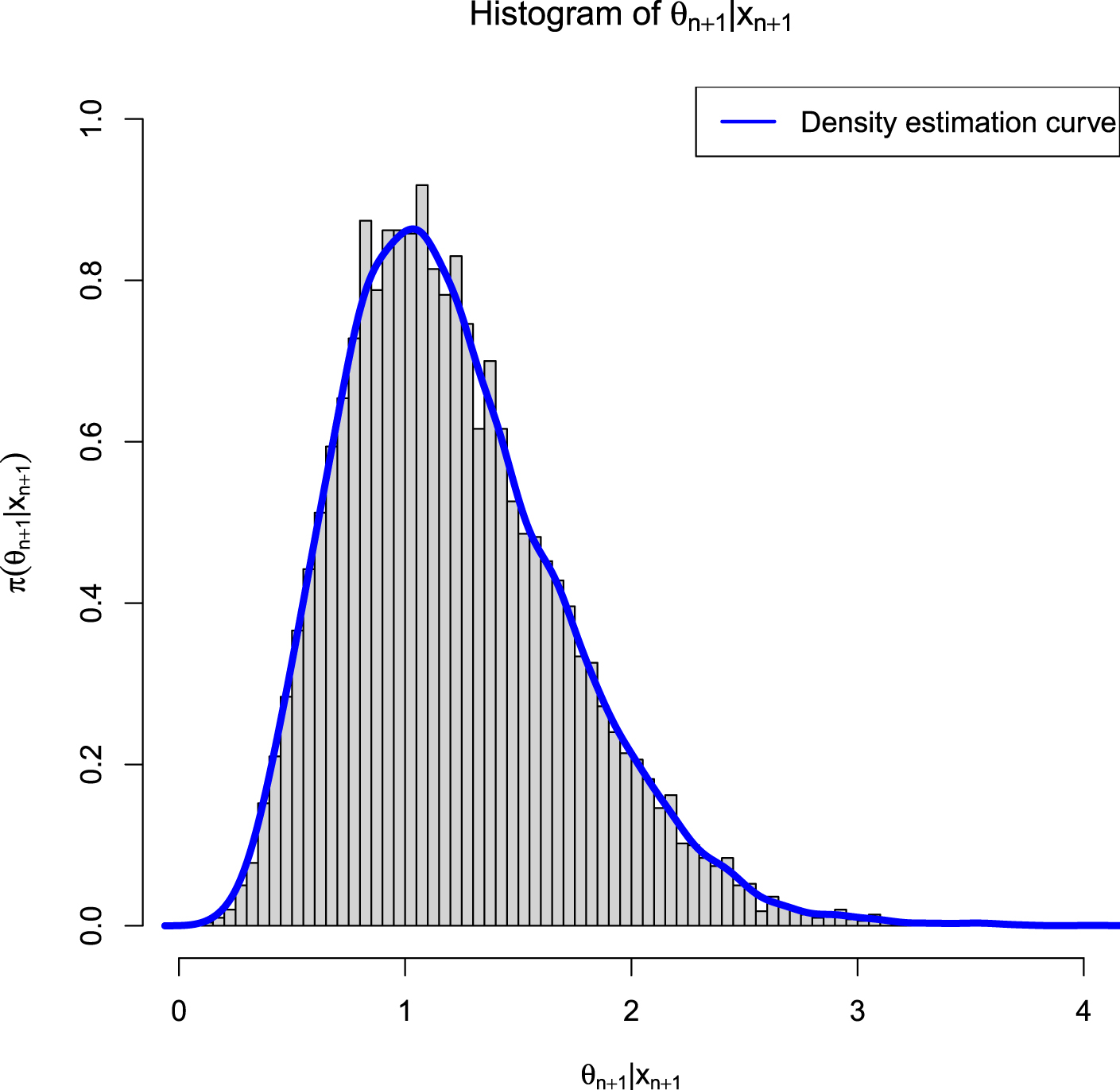

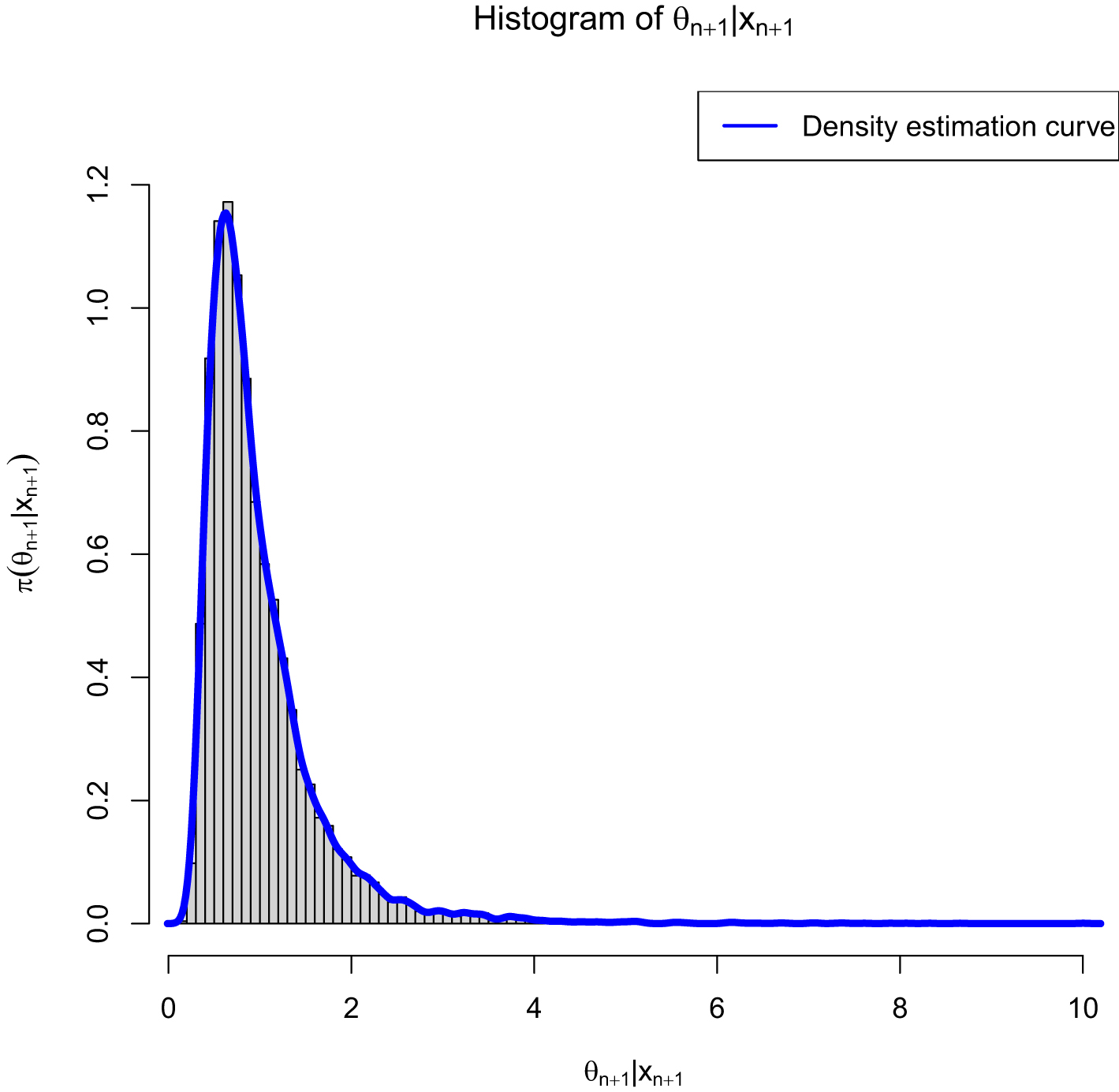

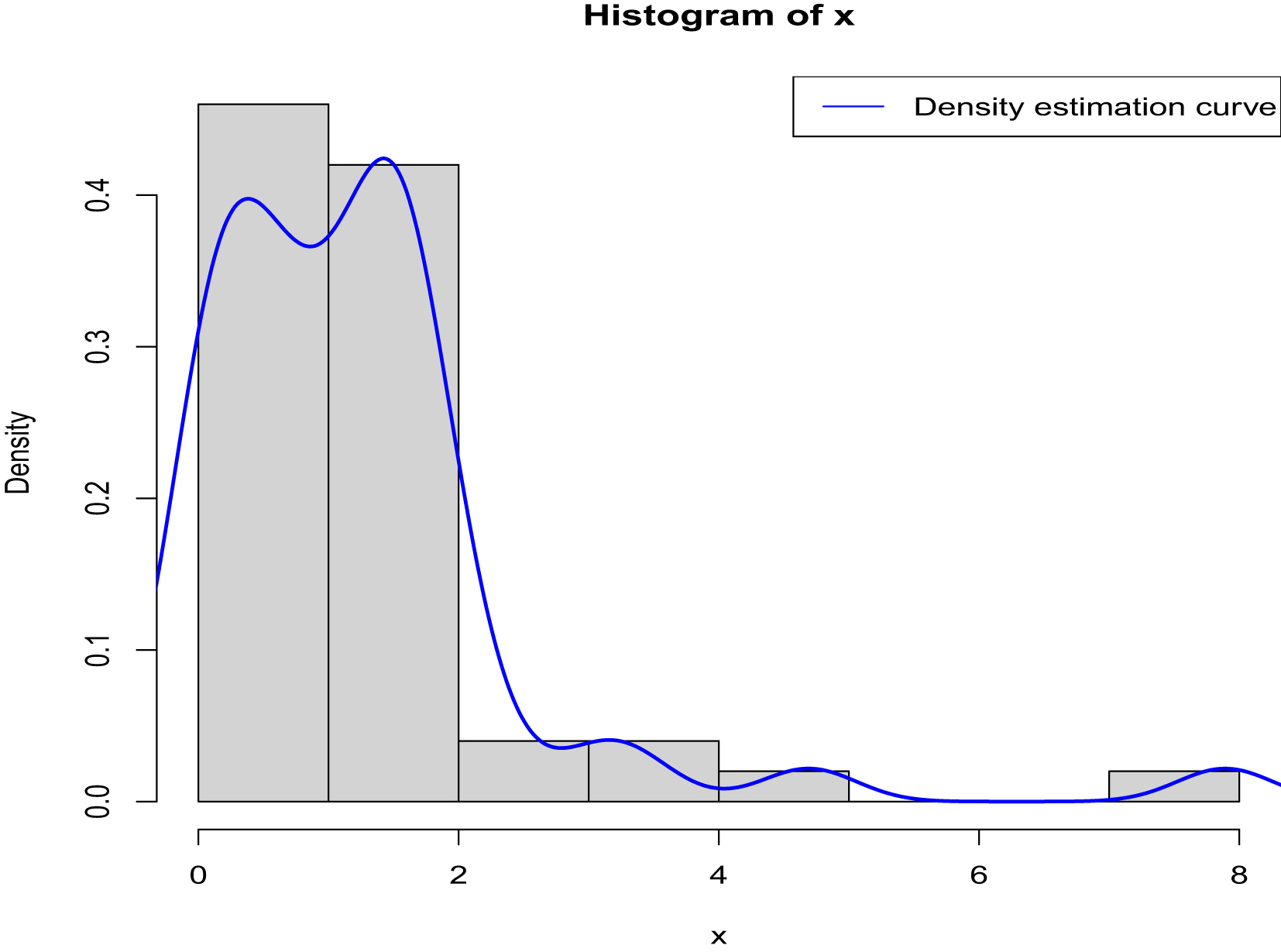

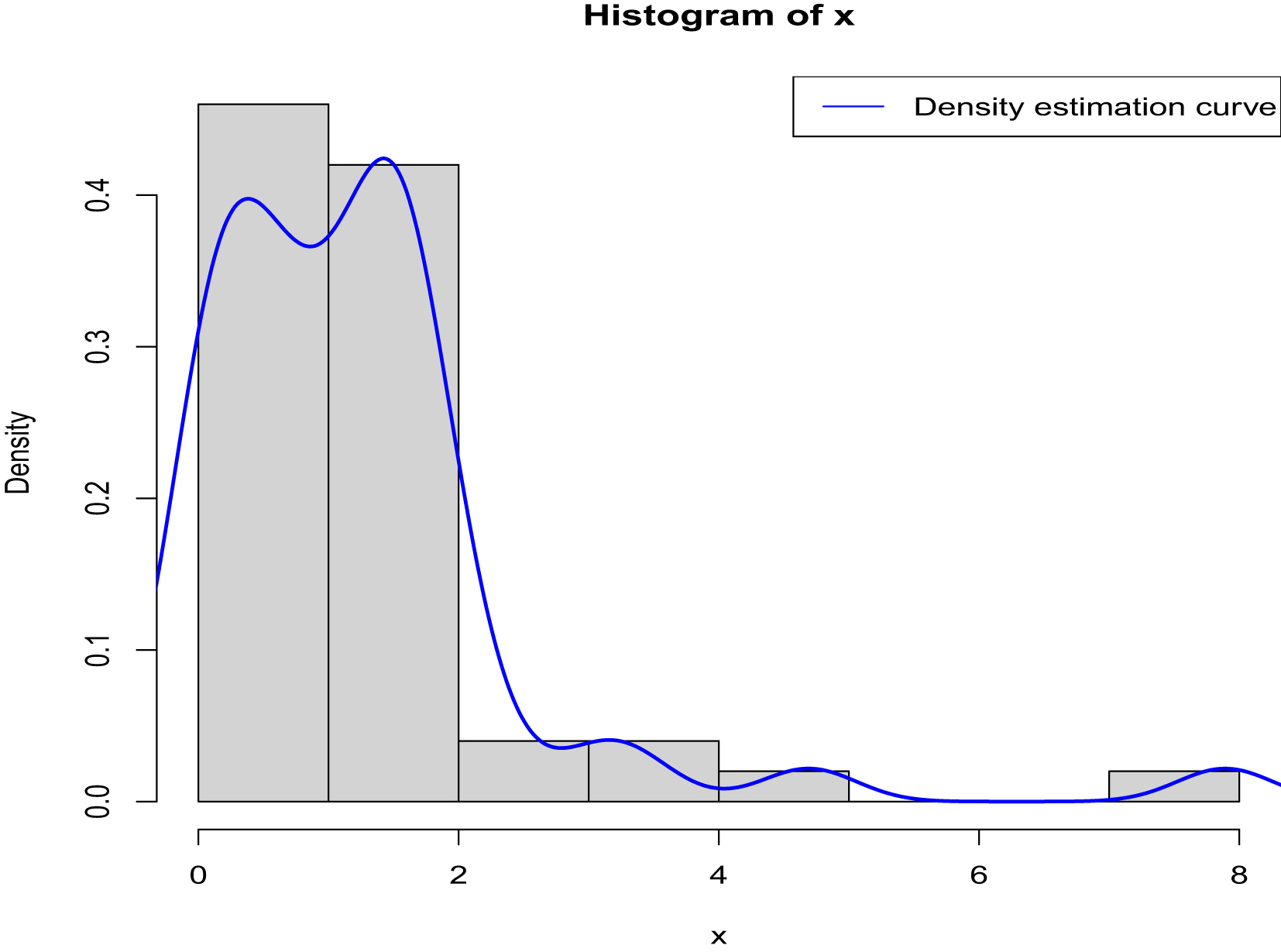

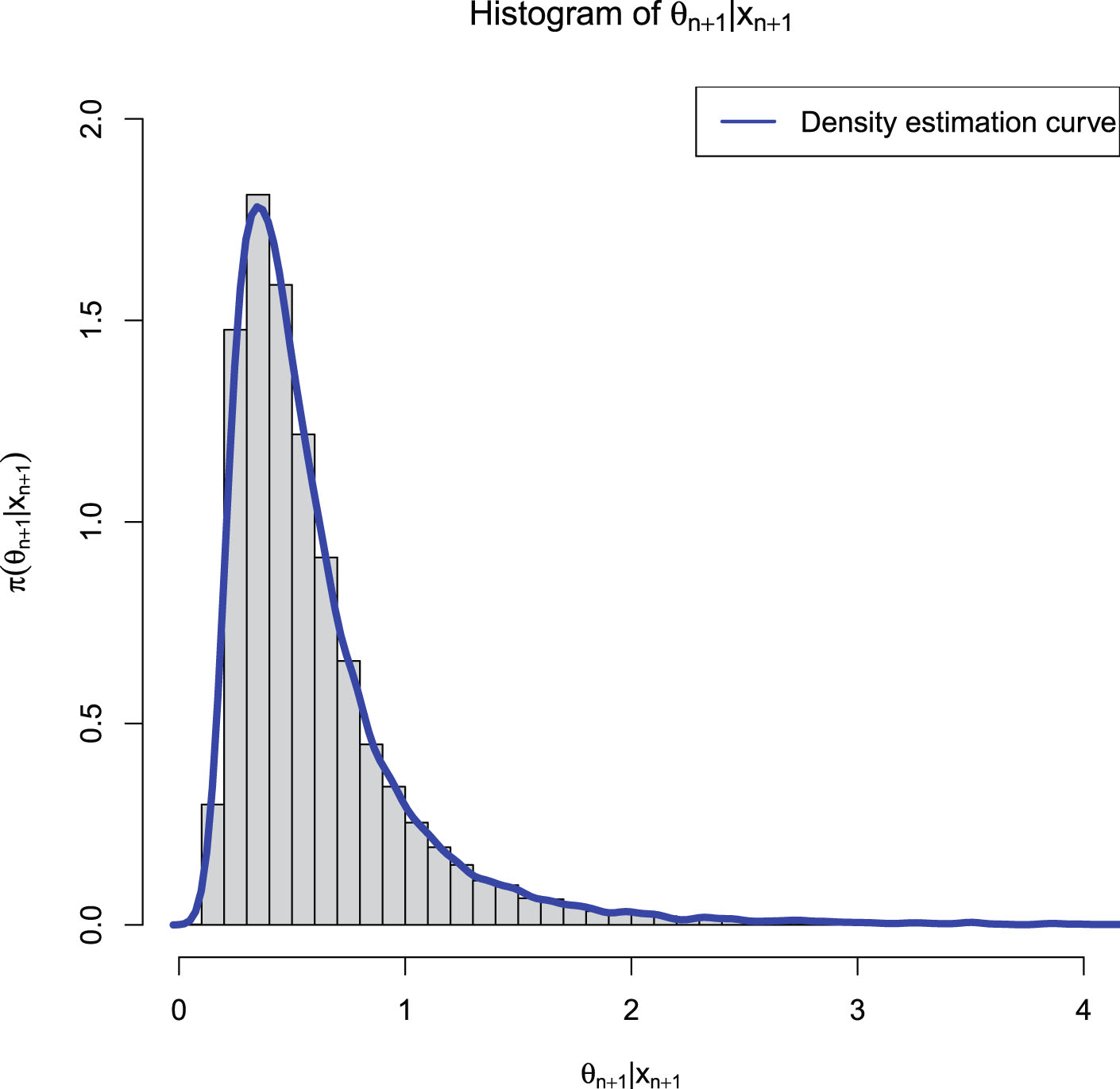

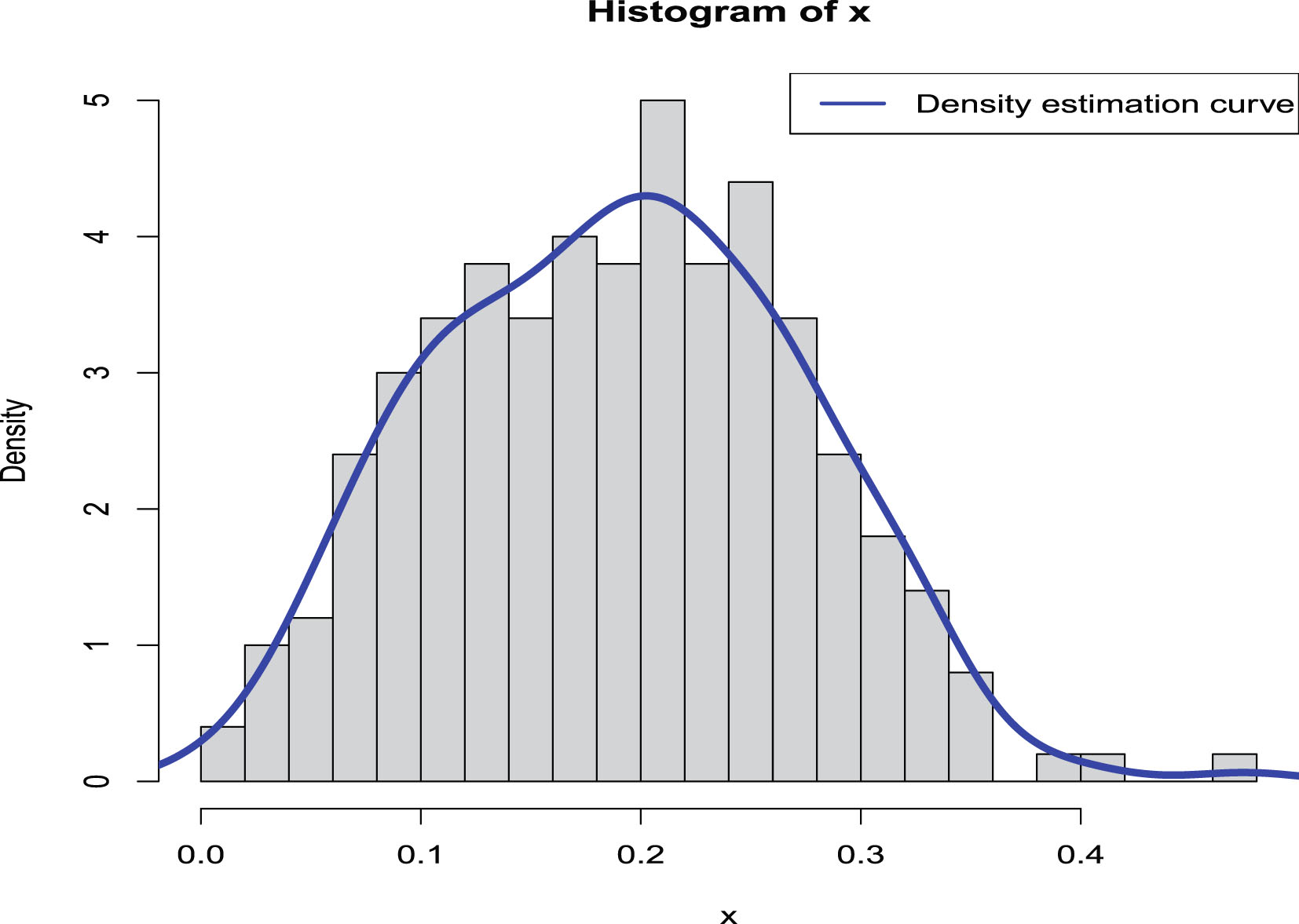

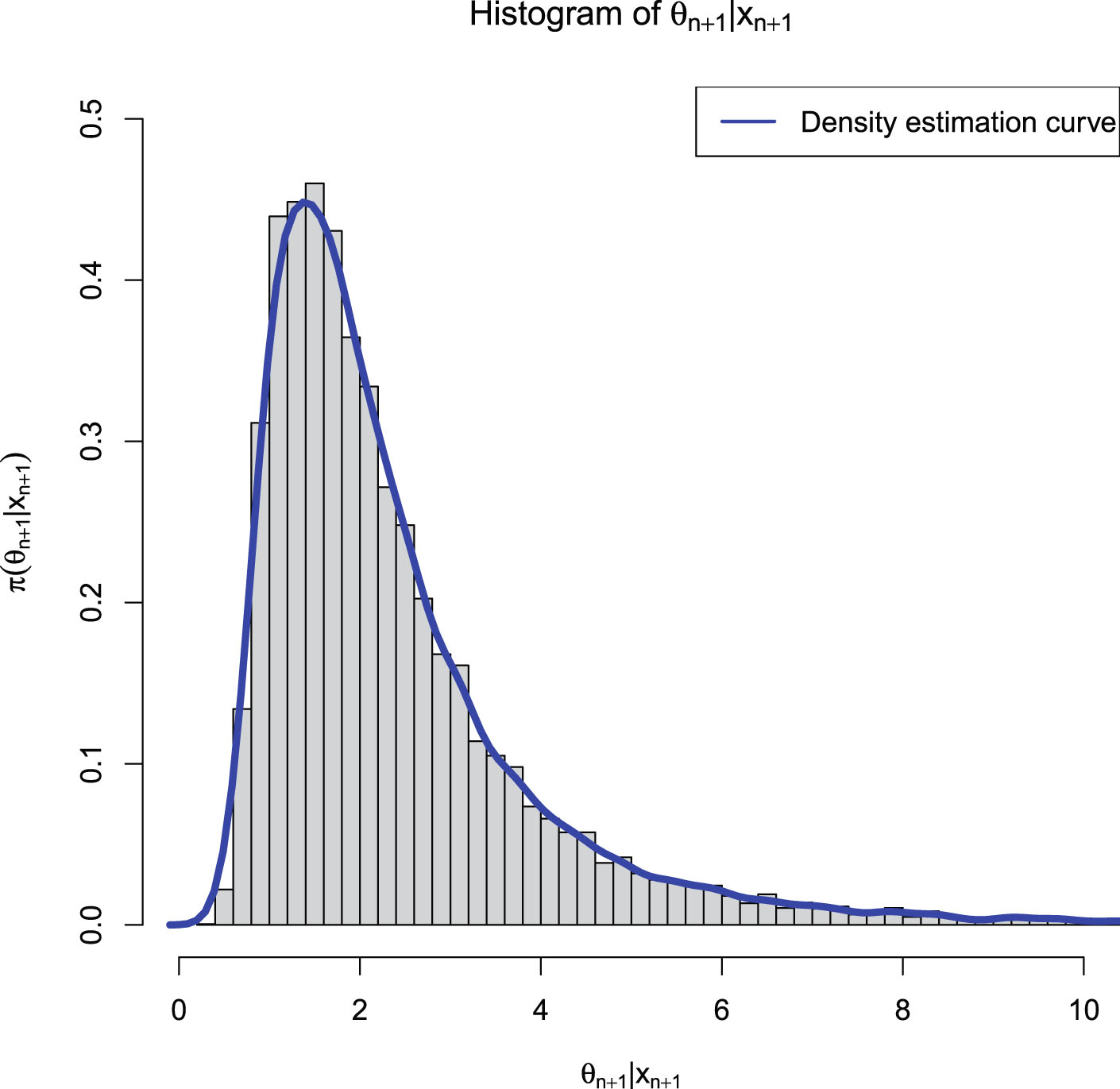

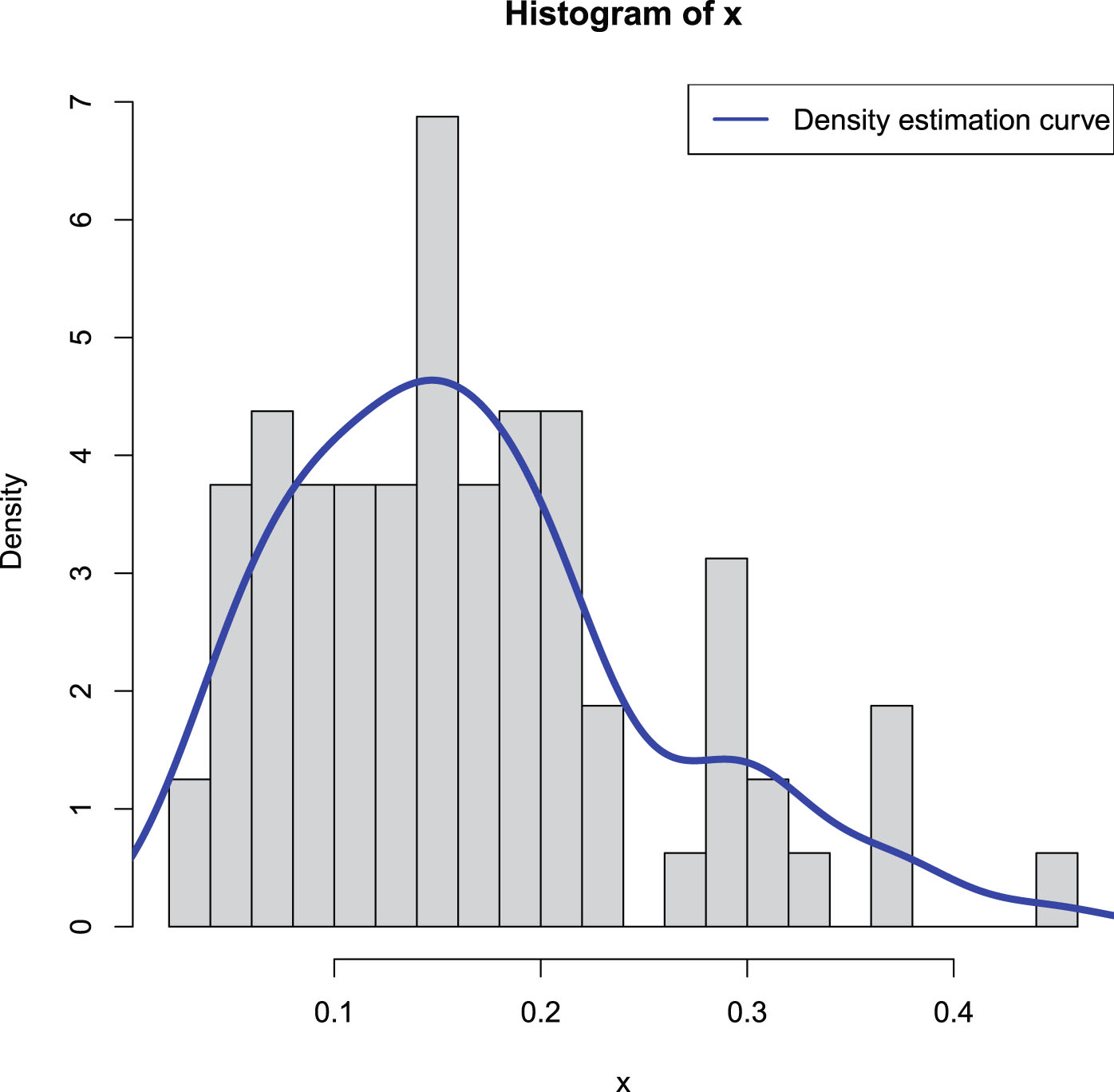

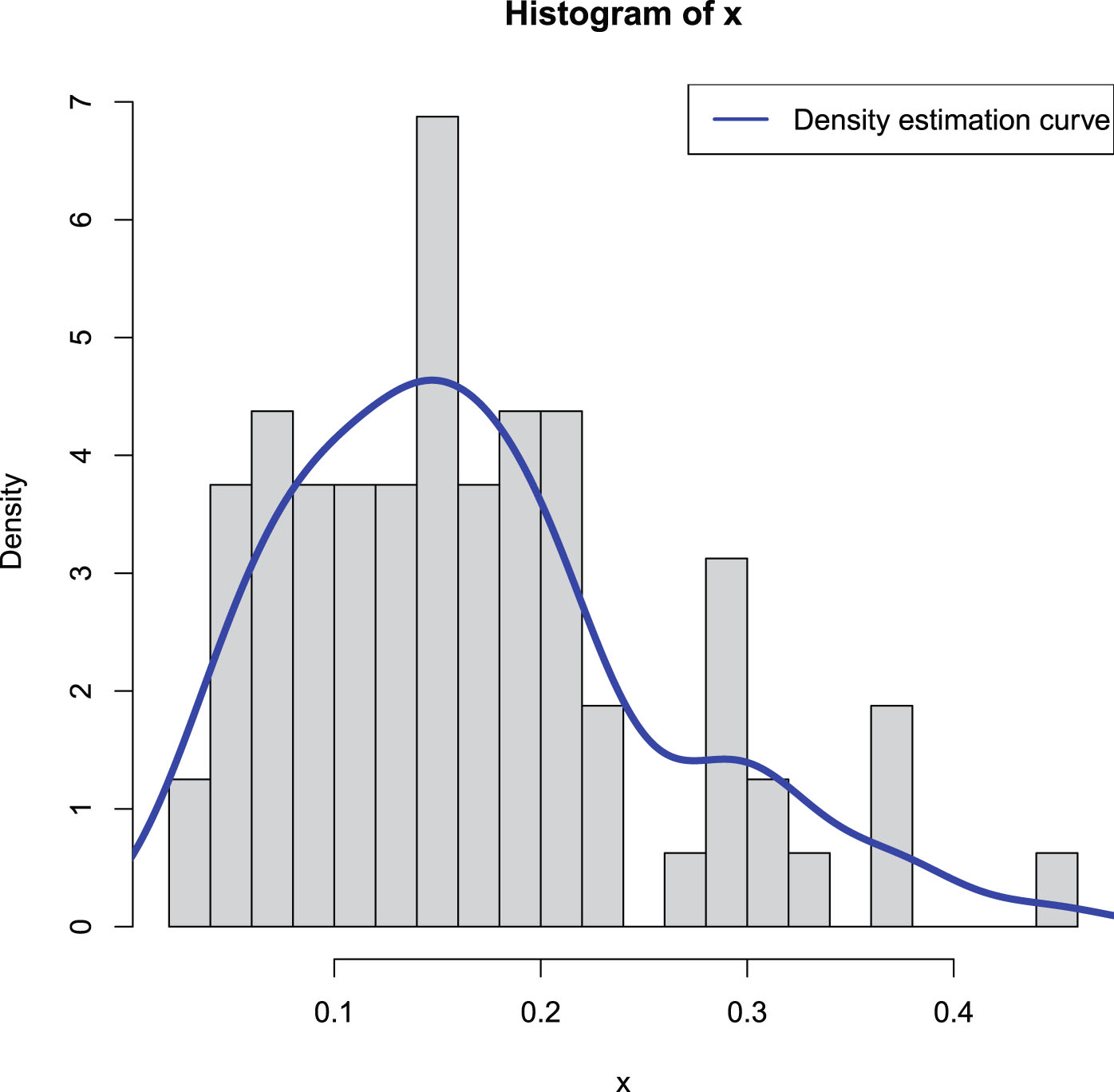

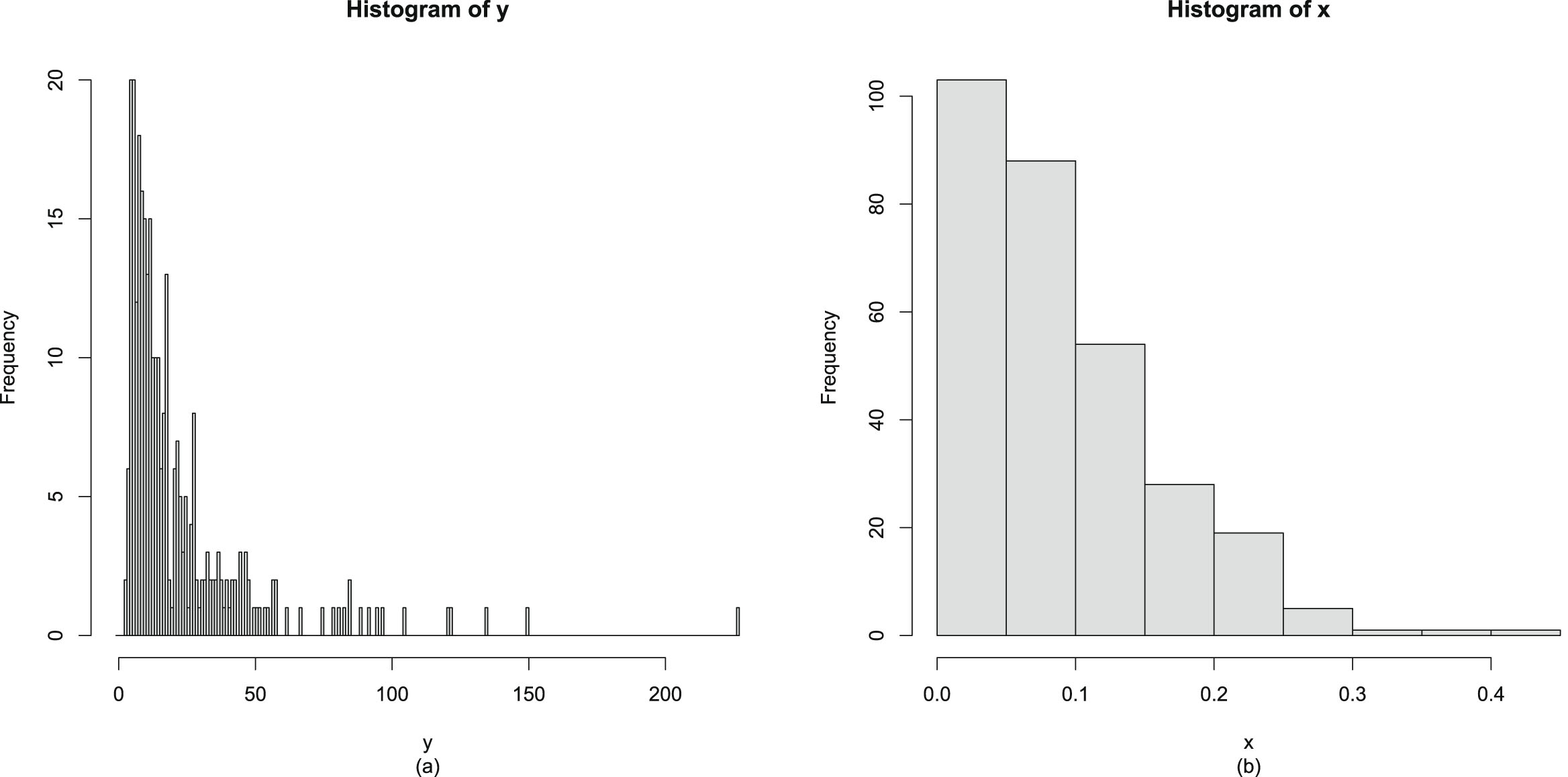

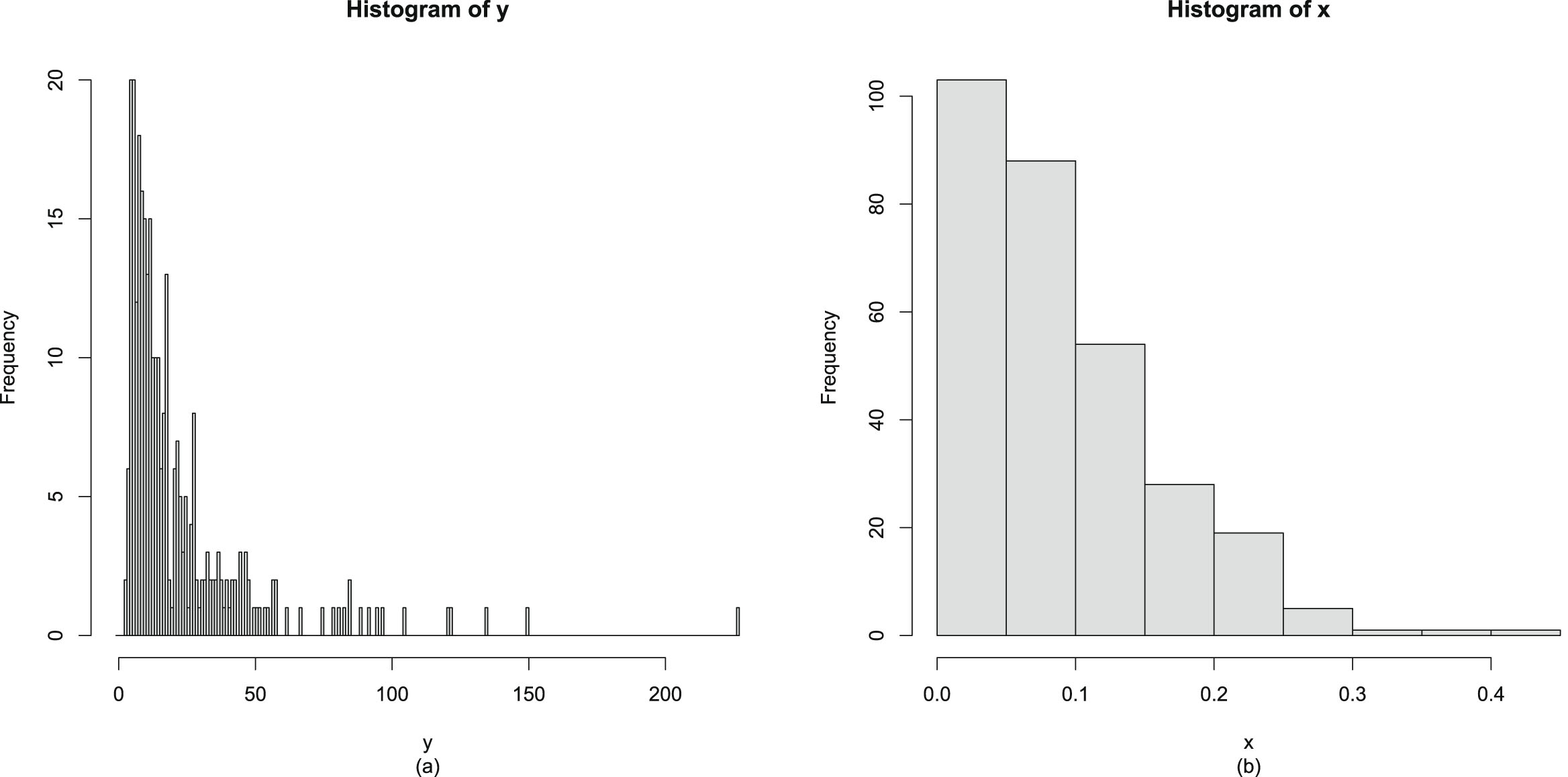

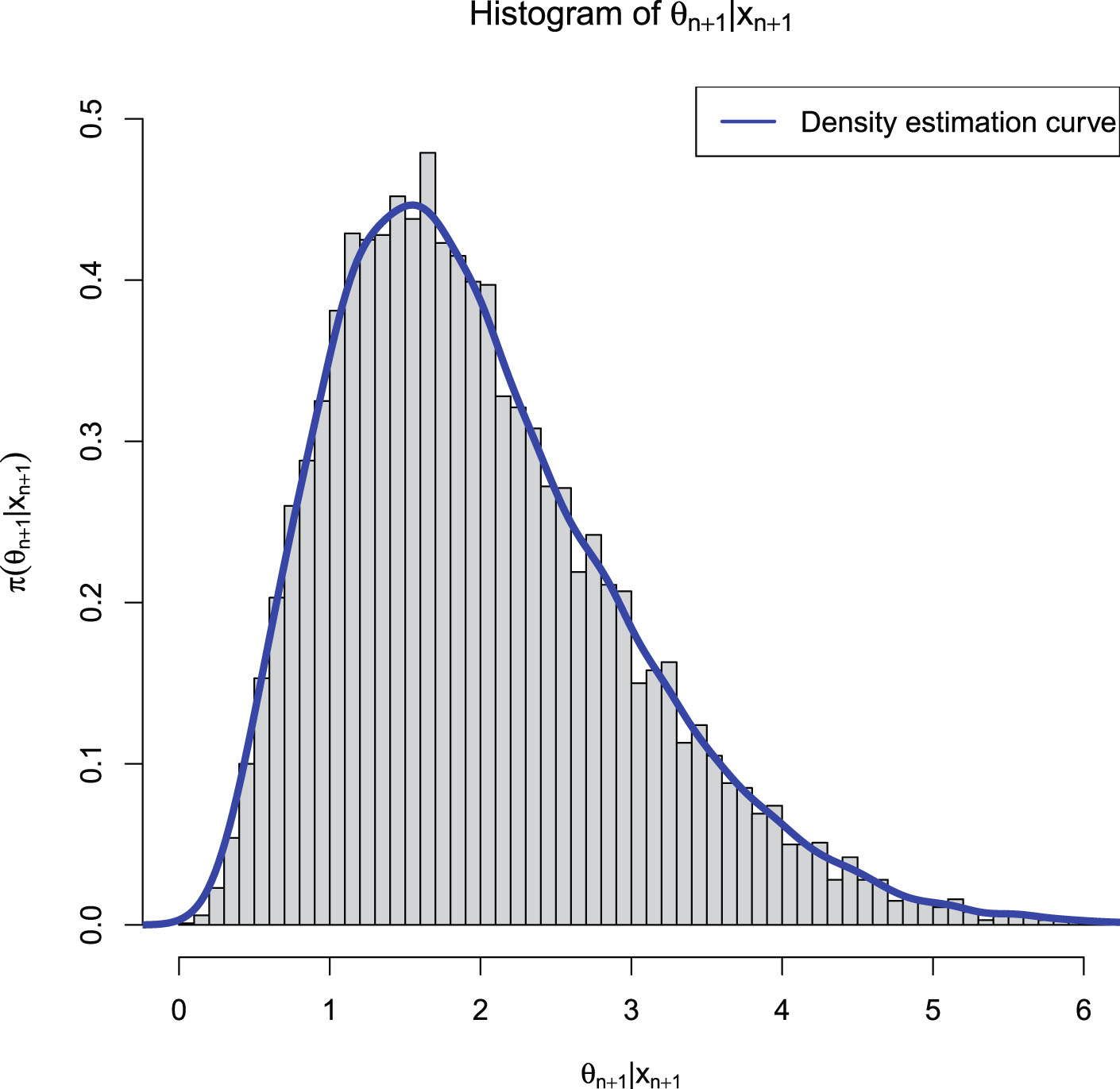

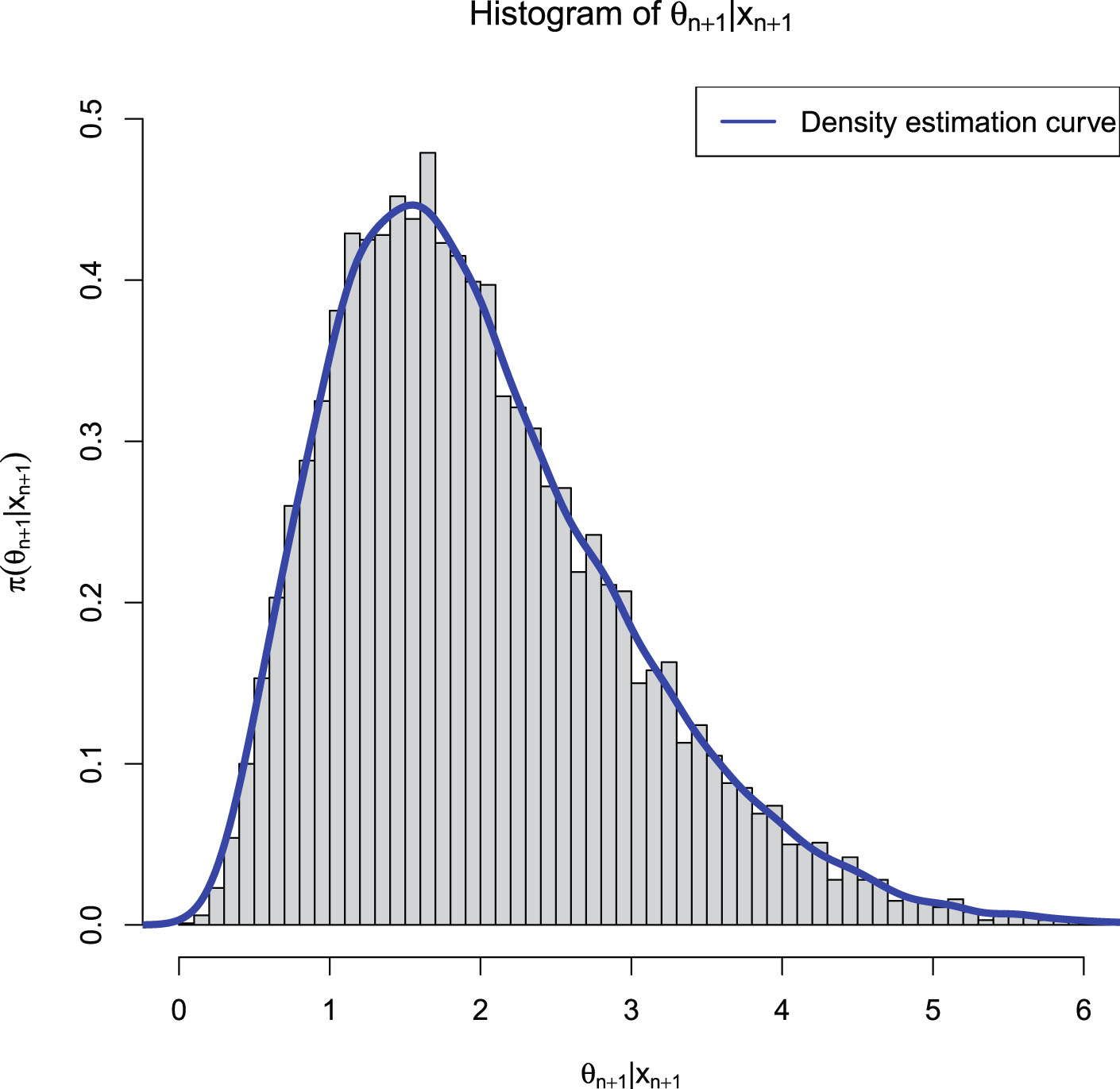

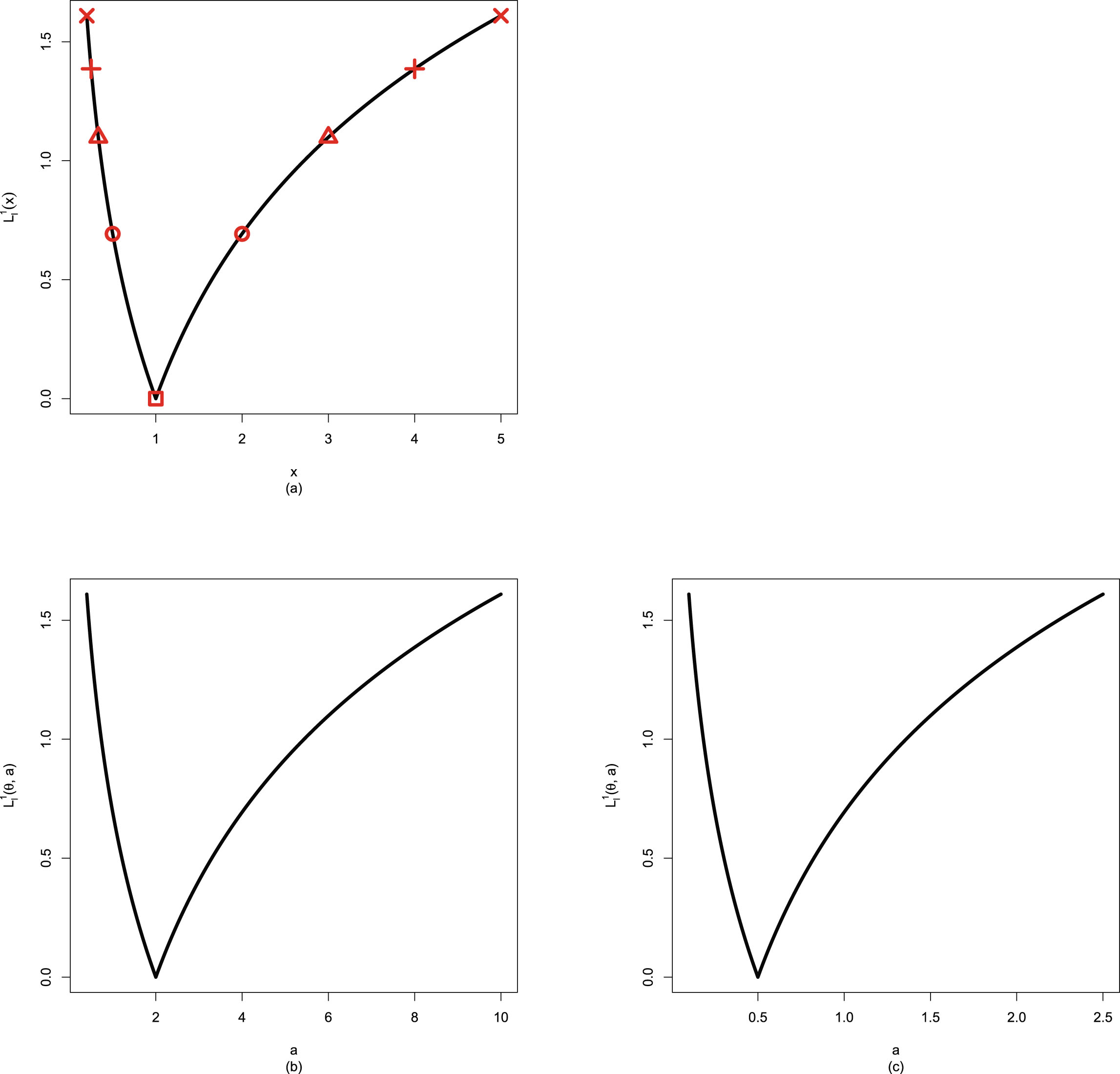

from  . Figure 2.1 shows the histogram of

. Figure 2.1 shows the histogram of  and the density estimation curve of

and the density estimation curve of  . It is

. It is  that we find

that we find  to minimize the PESL. Numerical results show that

to minimize the PESL. Numerical results show that

and

and

which exemplify the theoretical studies of (2.6) and (2.9).

which exemplify the theoretical studies of (2.6) and (2.9).

FIG. 2.1 — IG-IG: The histogram of  and the density estimation curve of

and the density estimation curve of  .

.

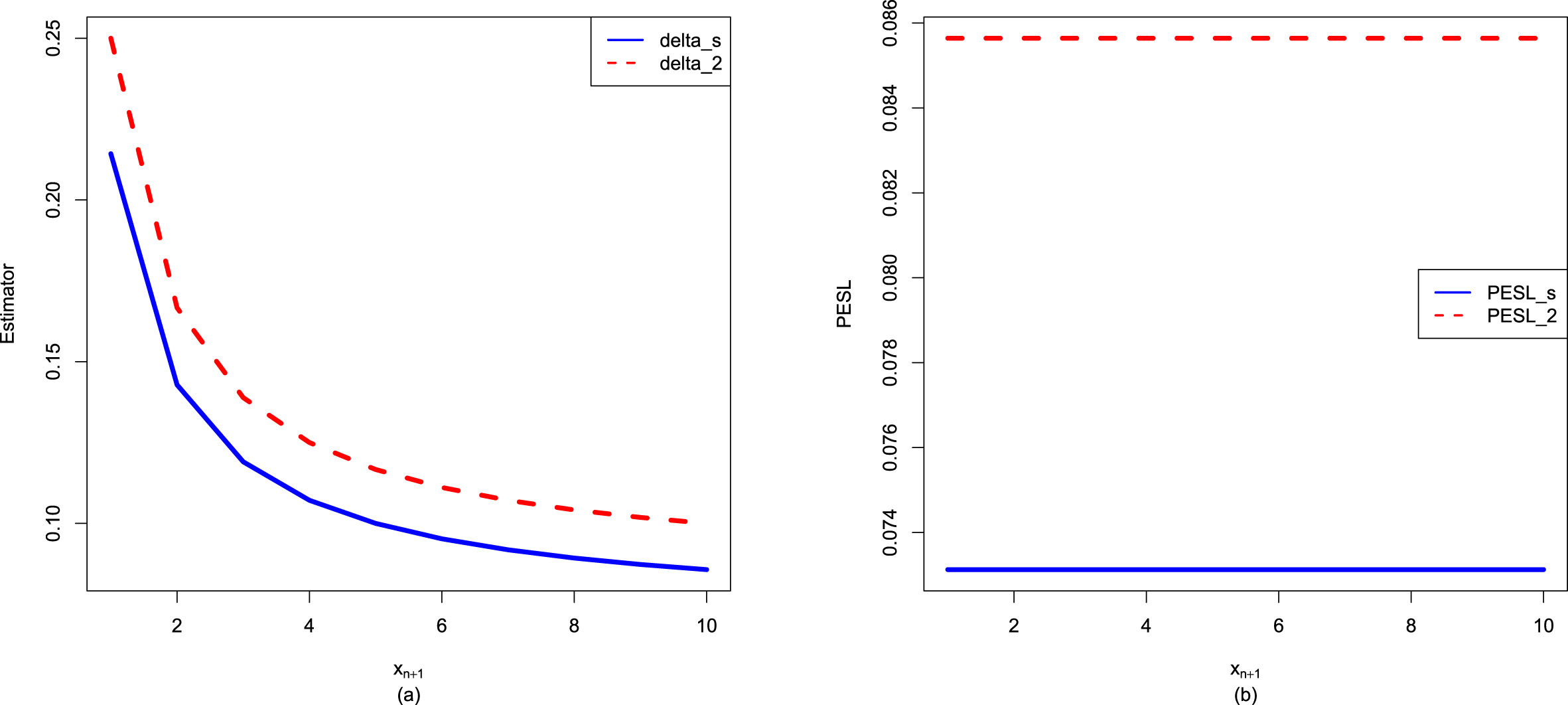

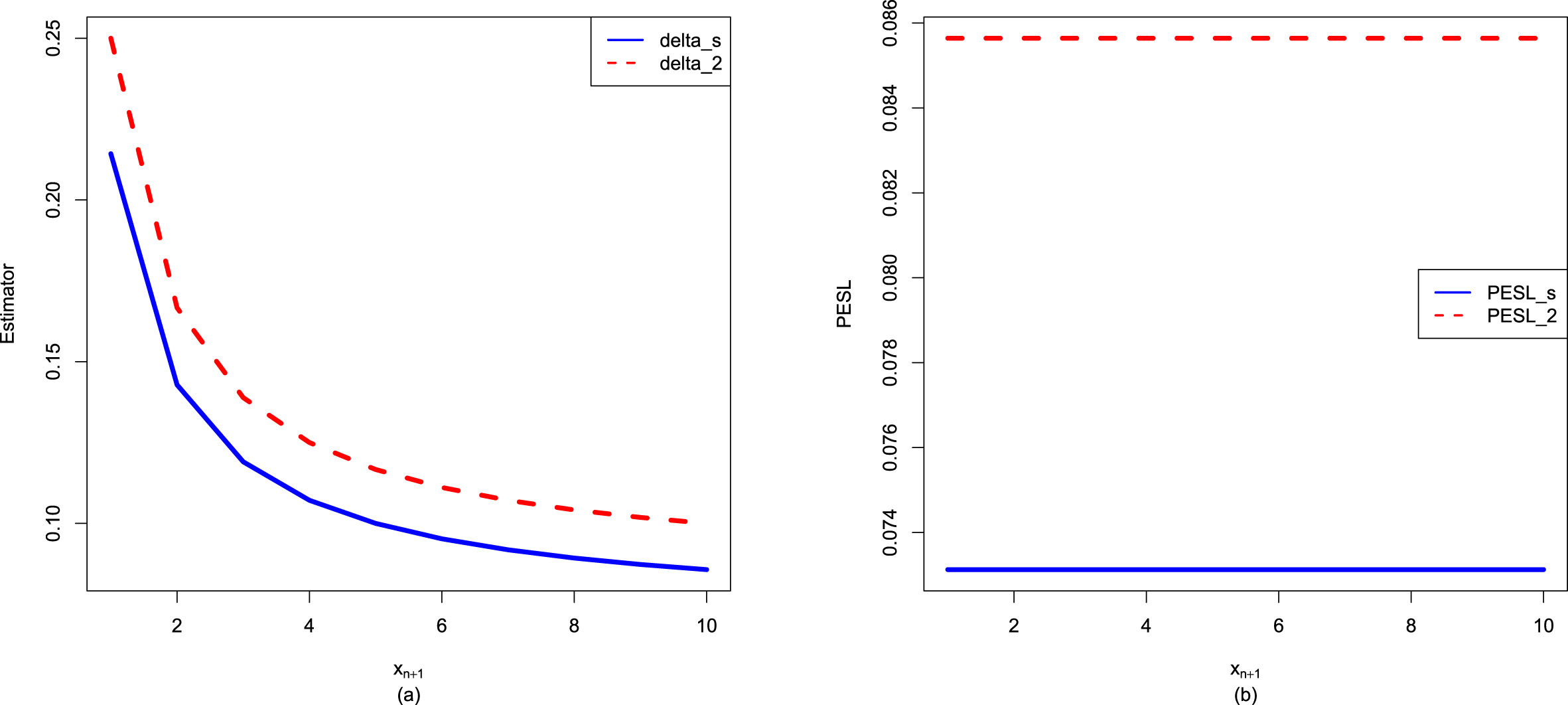

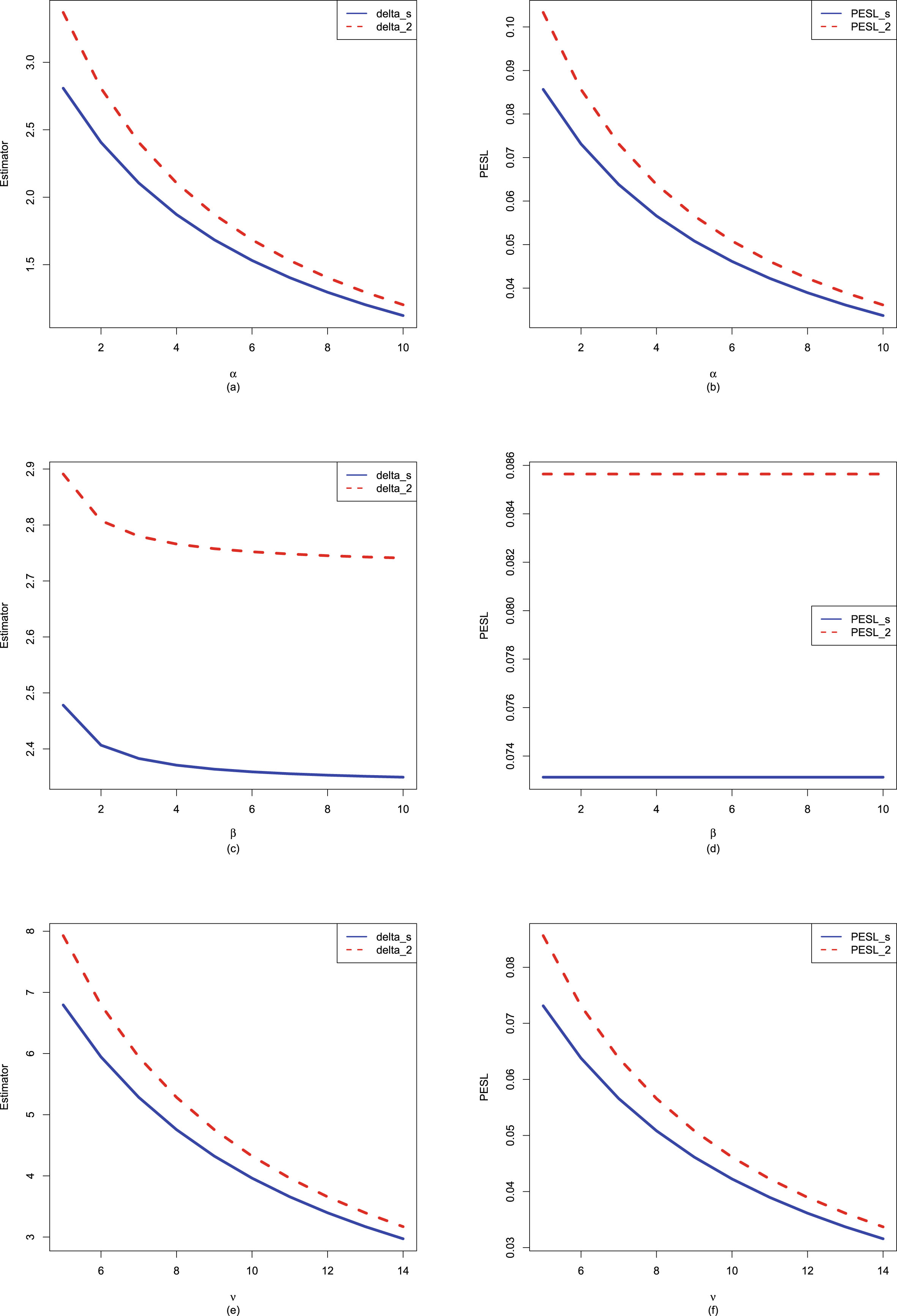

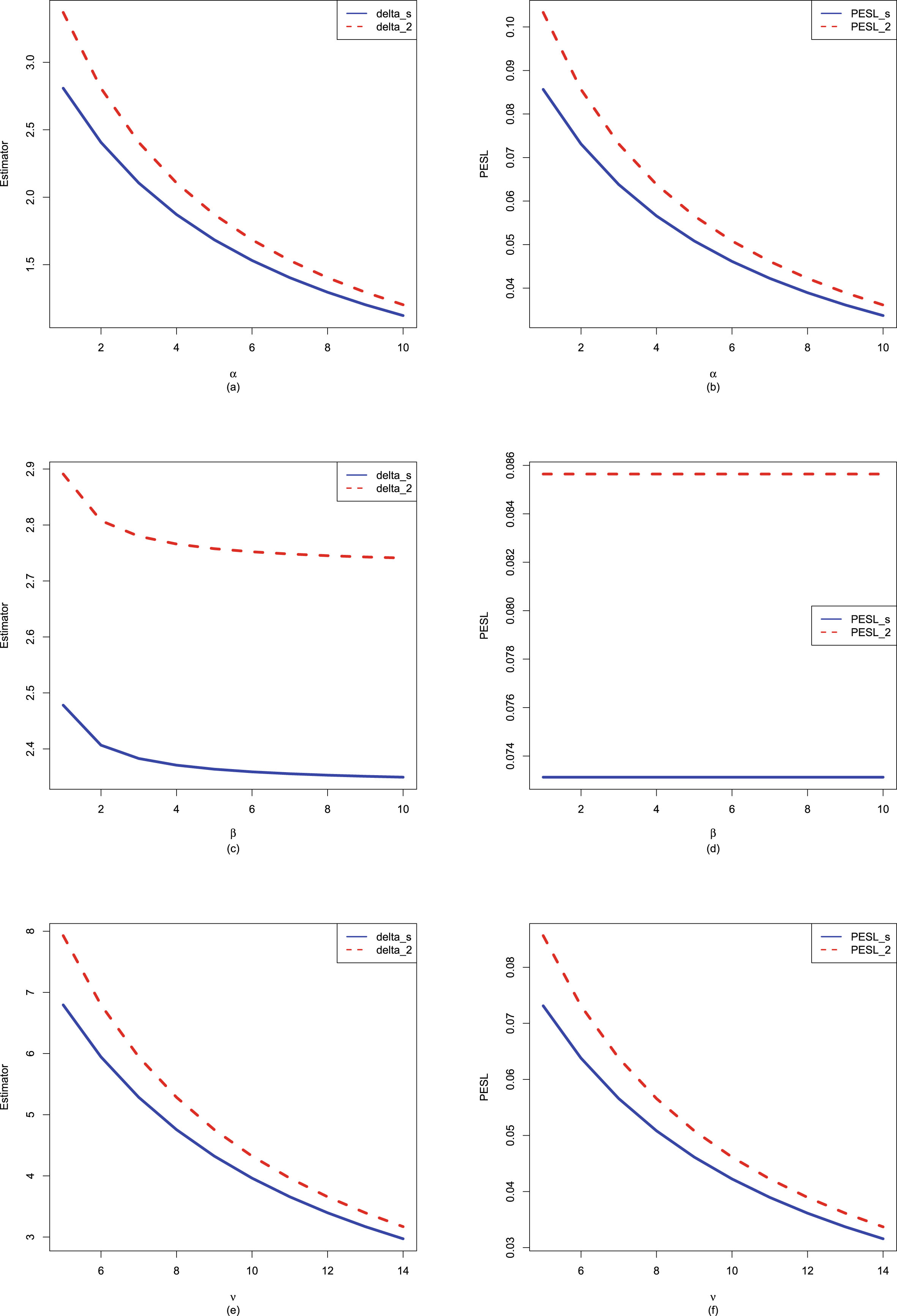

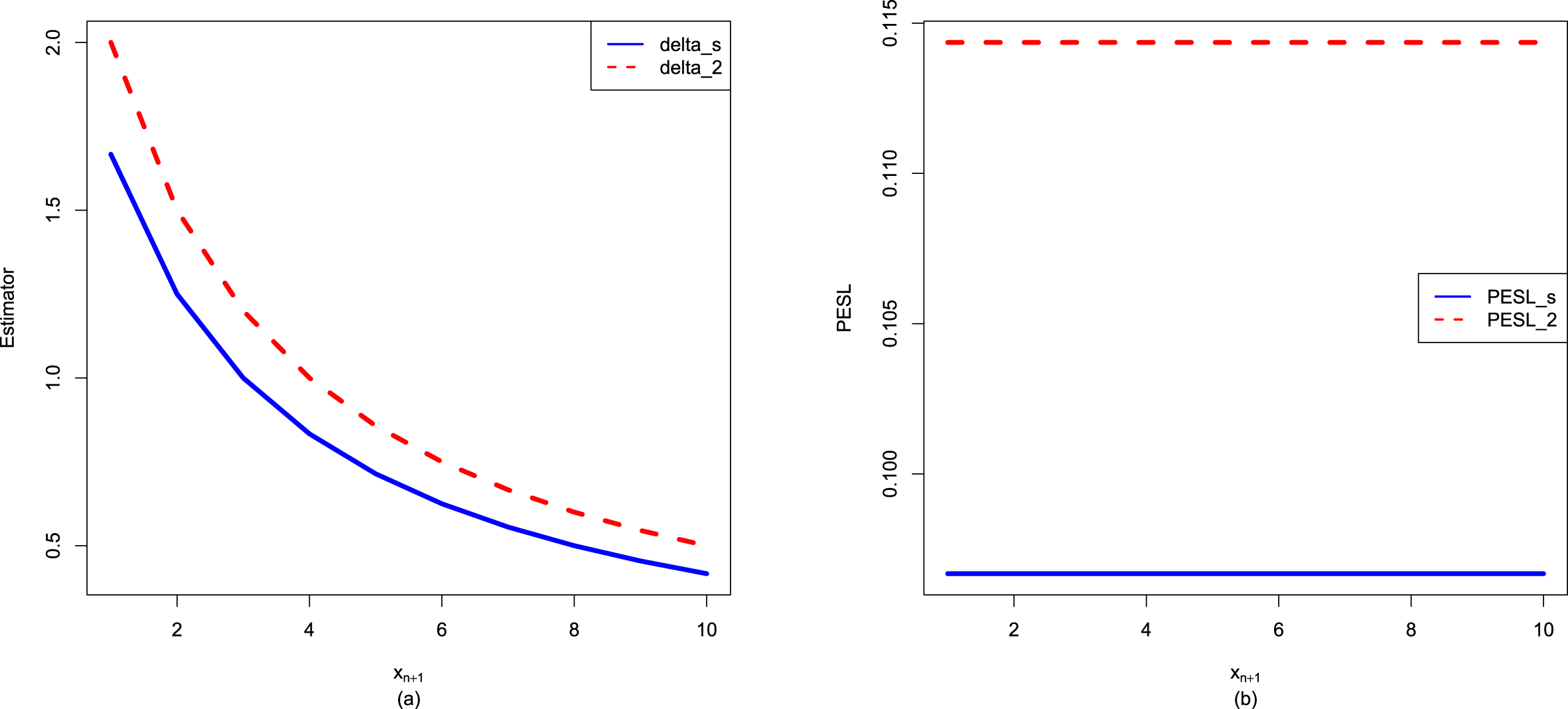

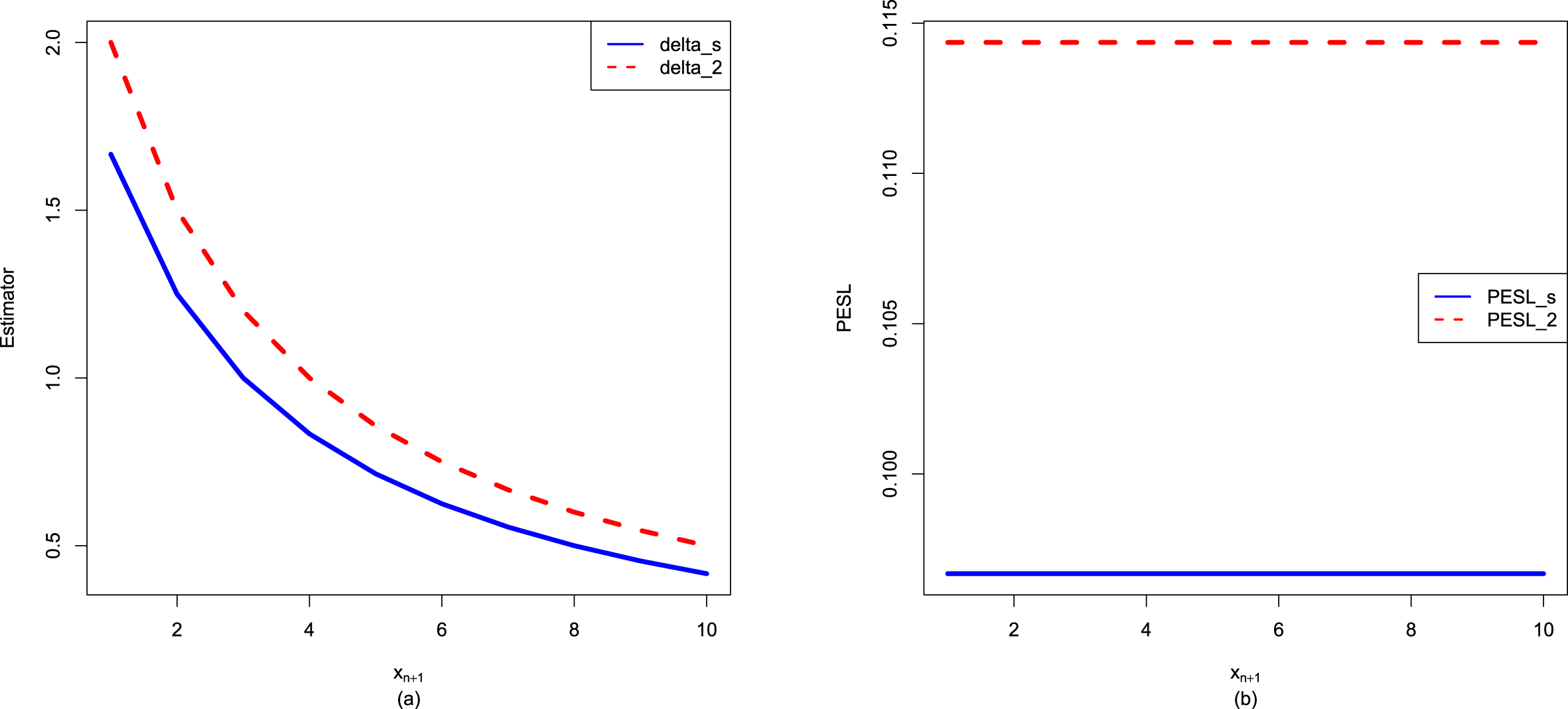

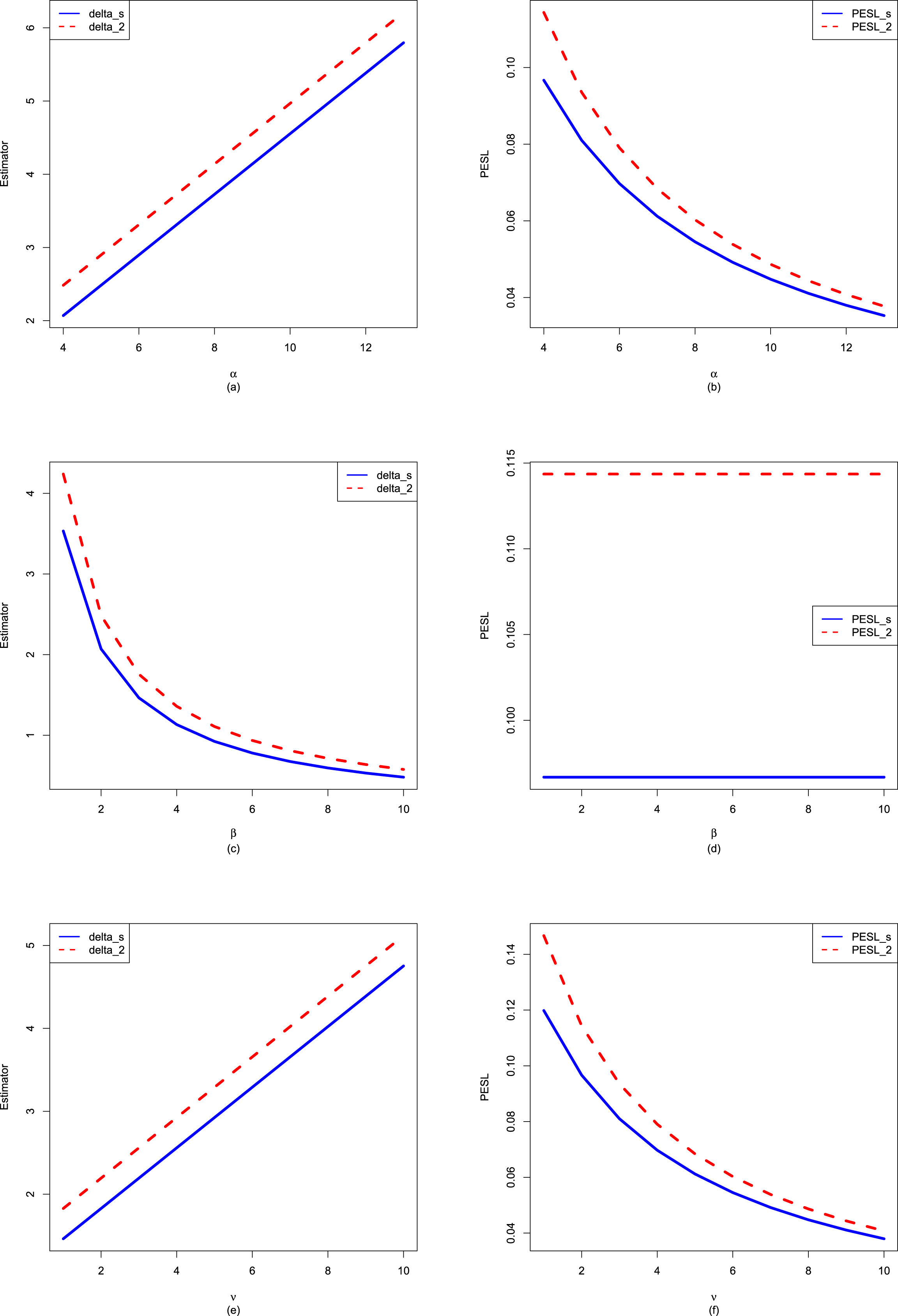

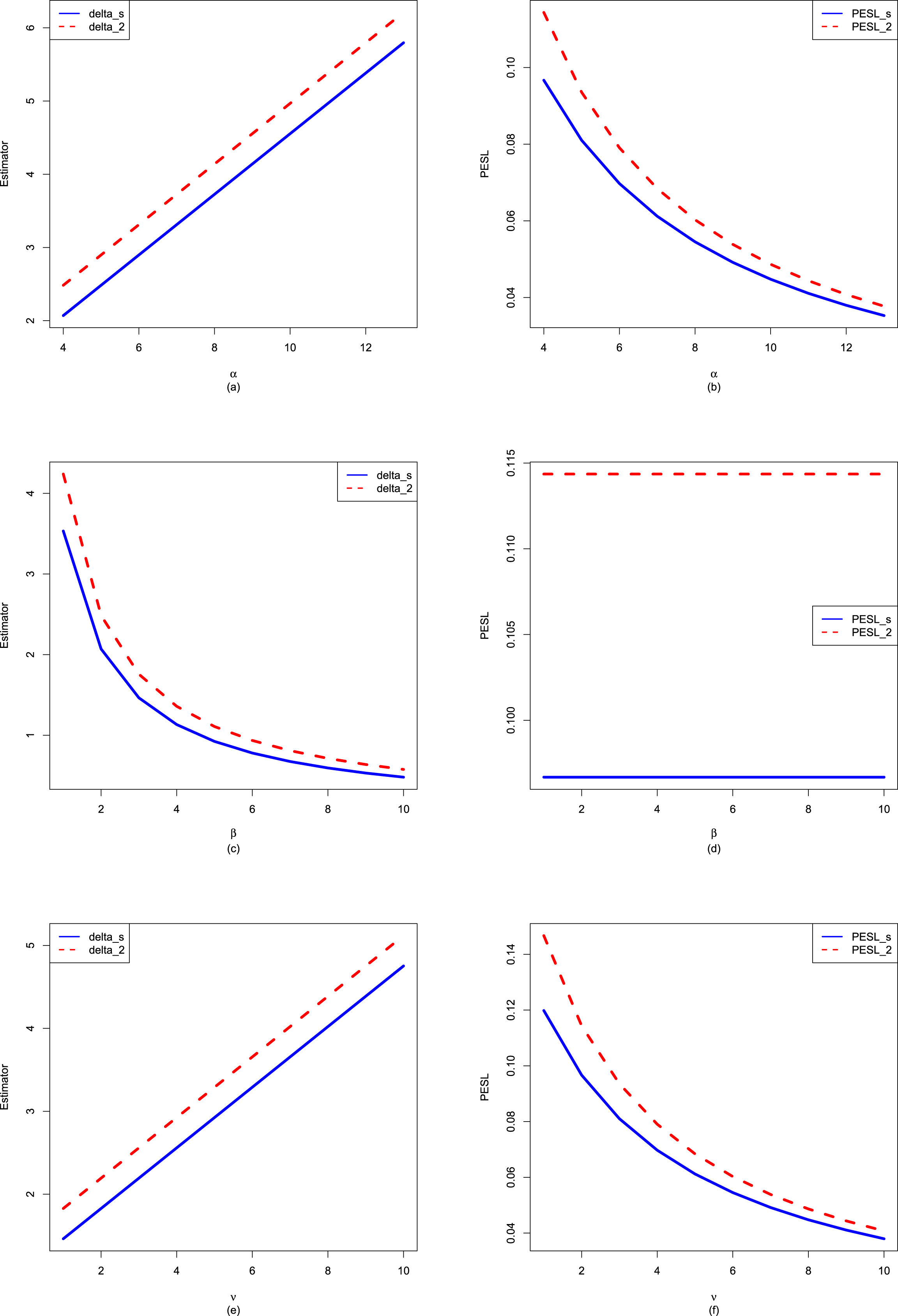

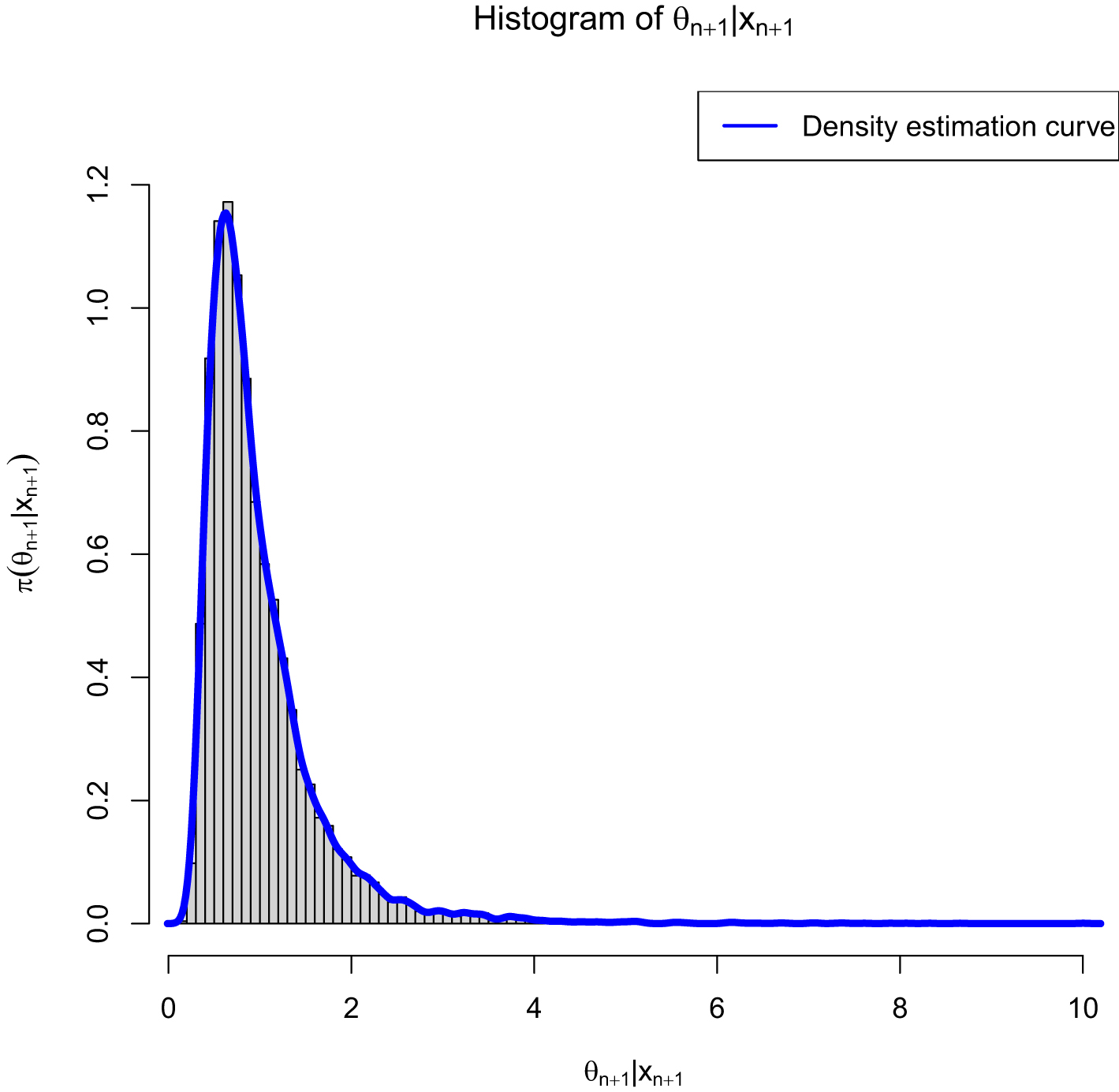

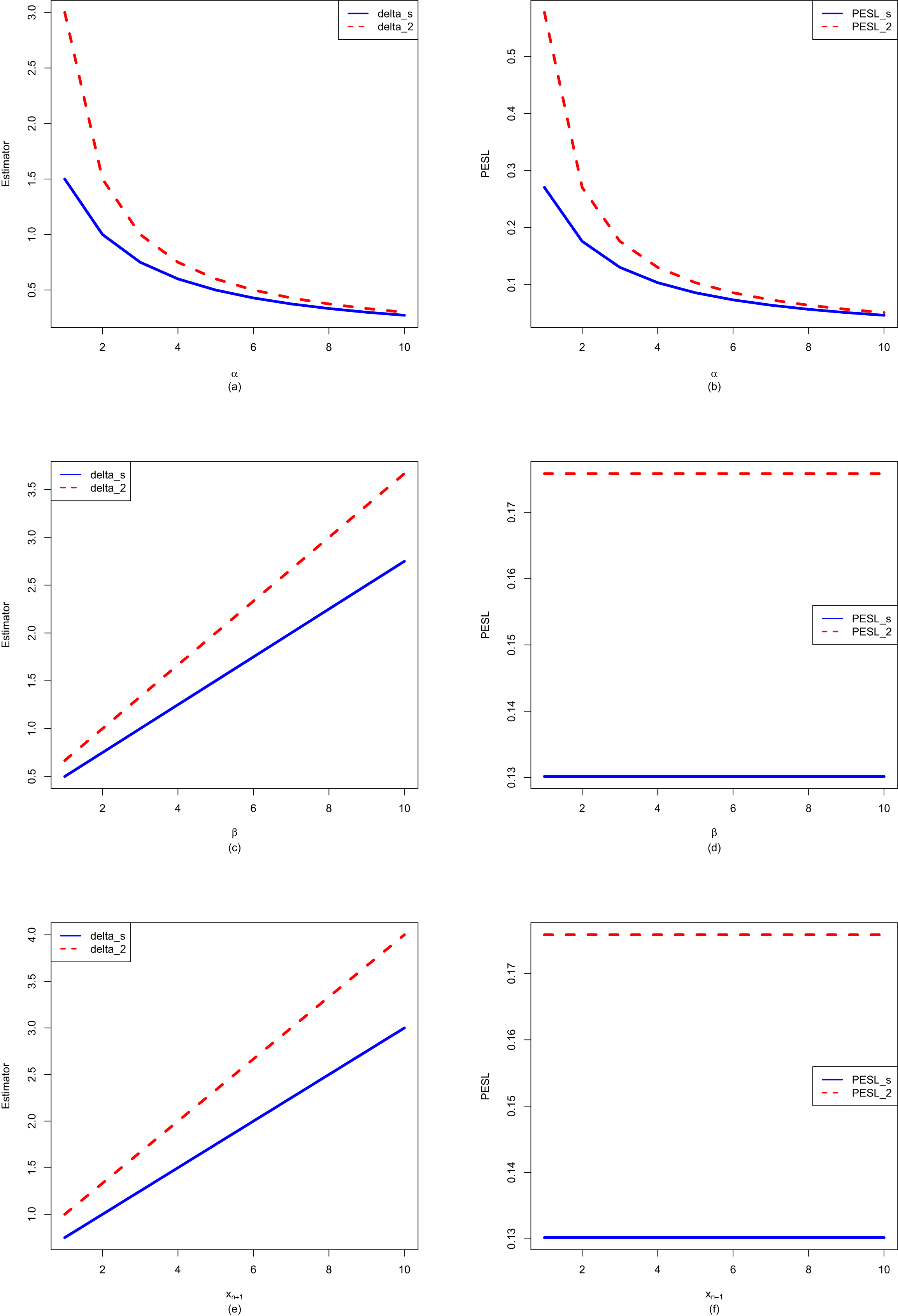

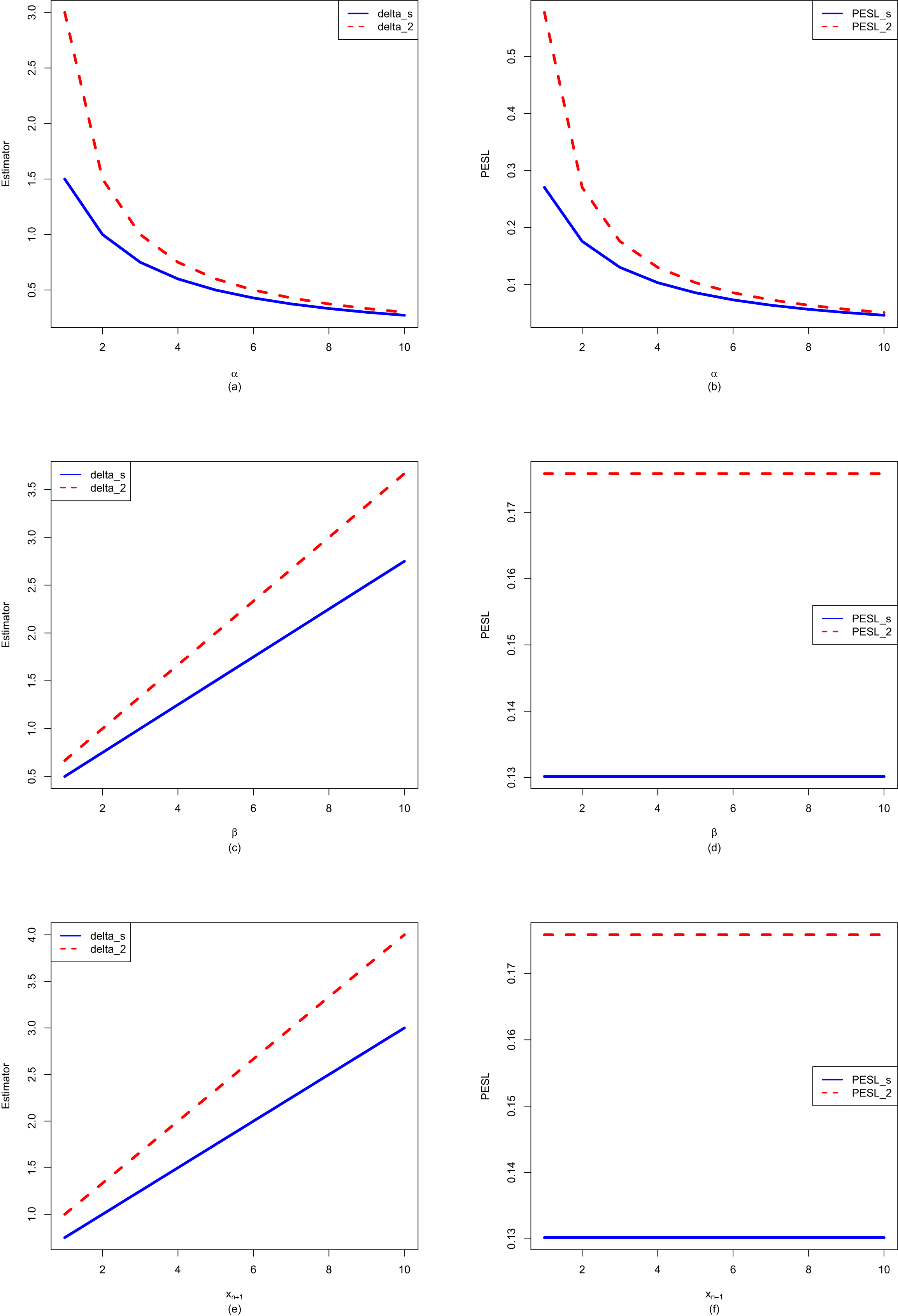

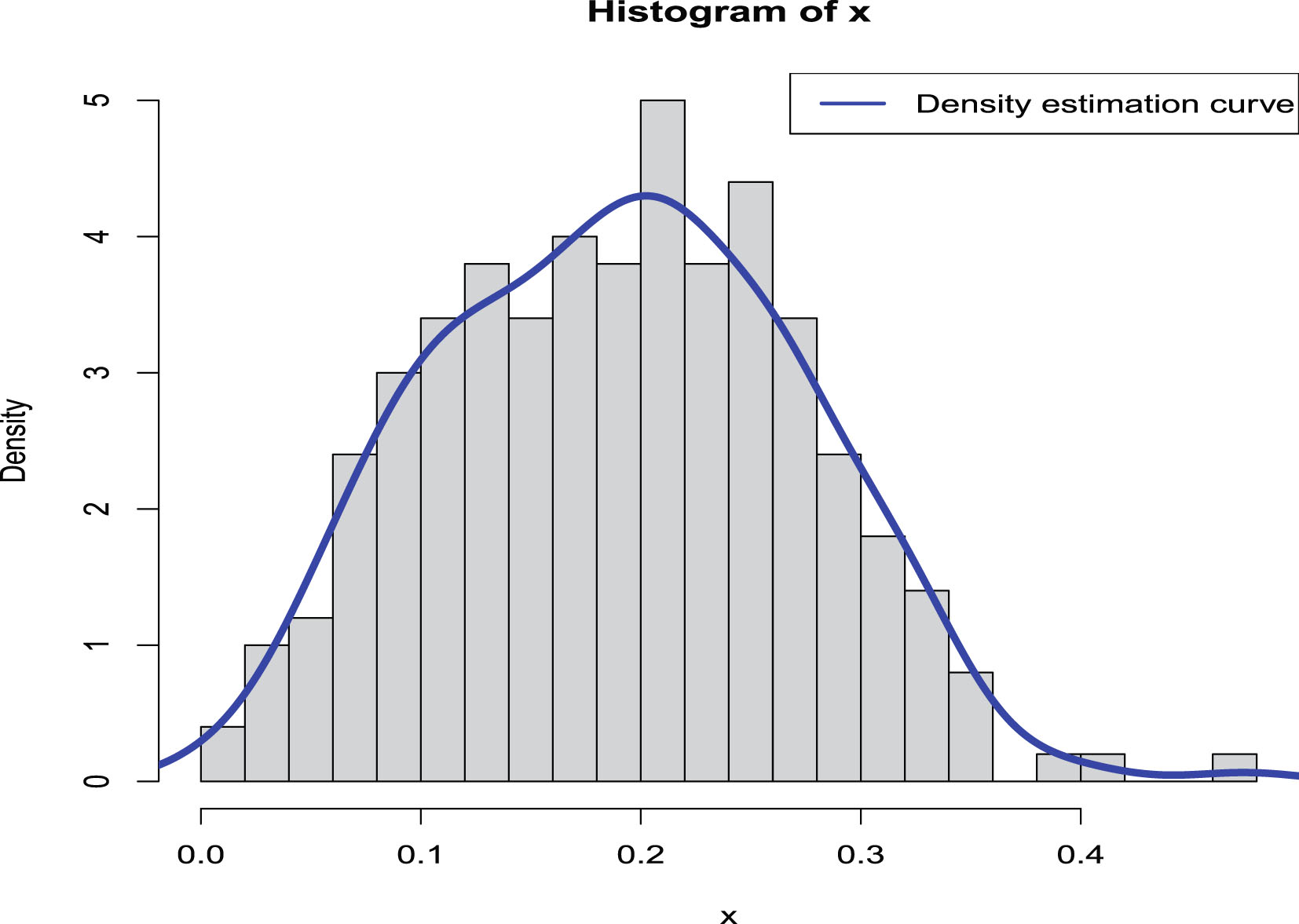

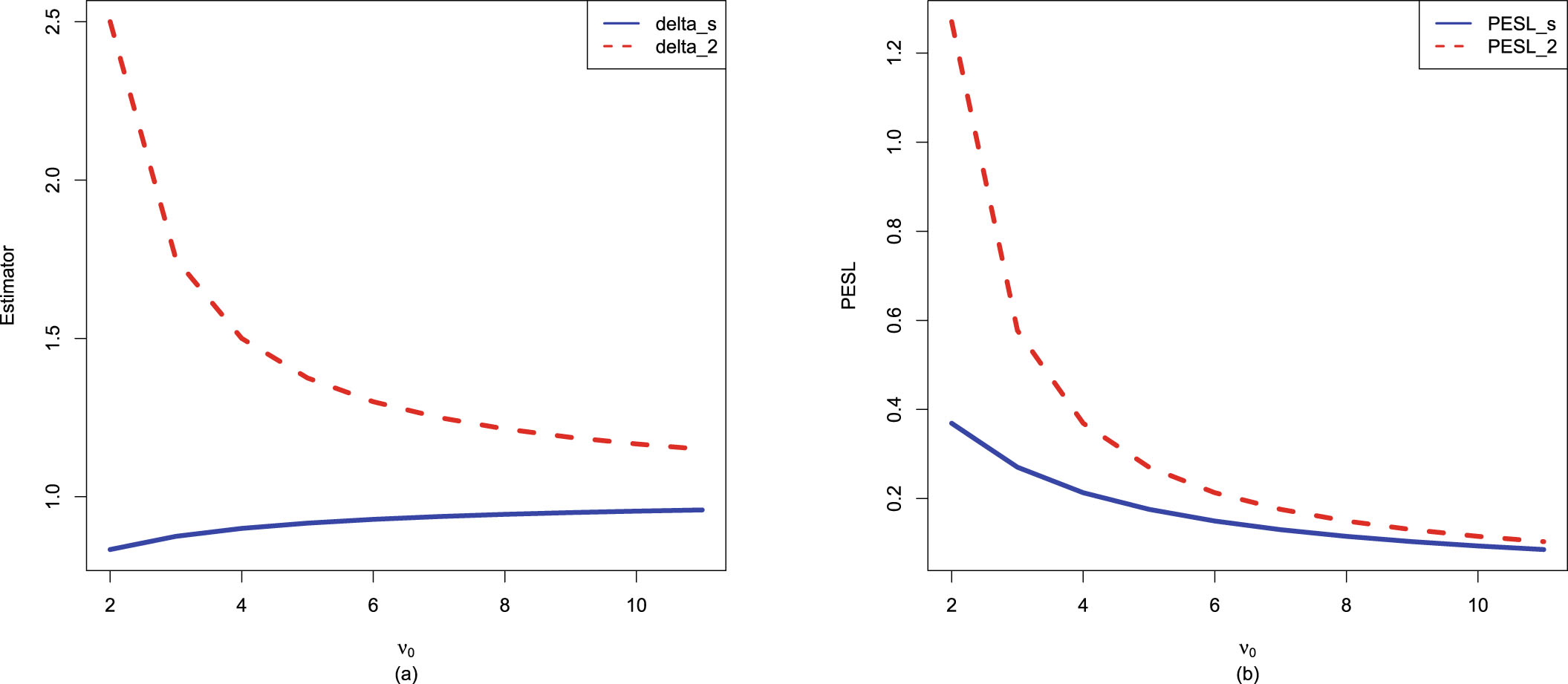

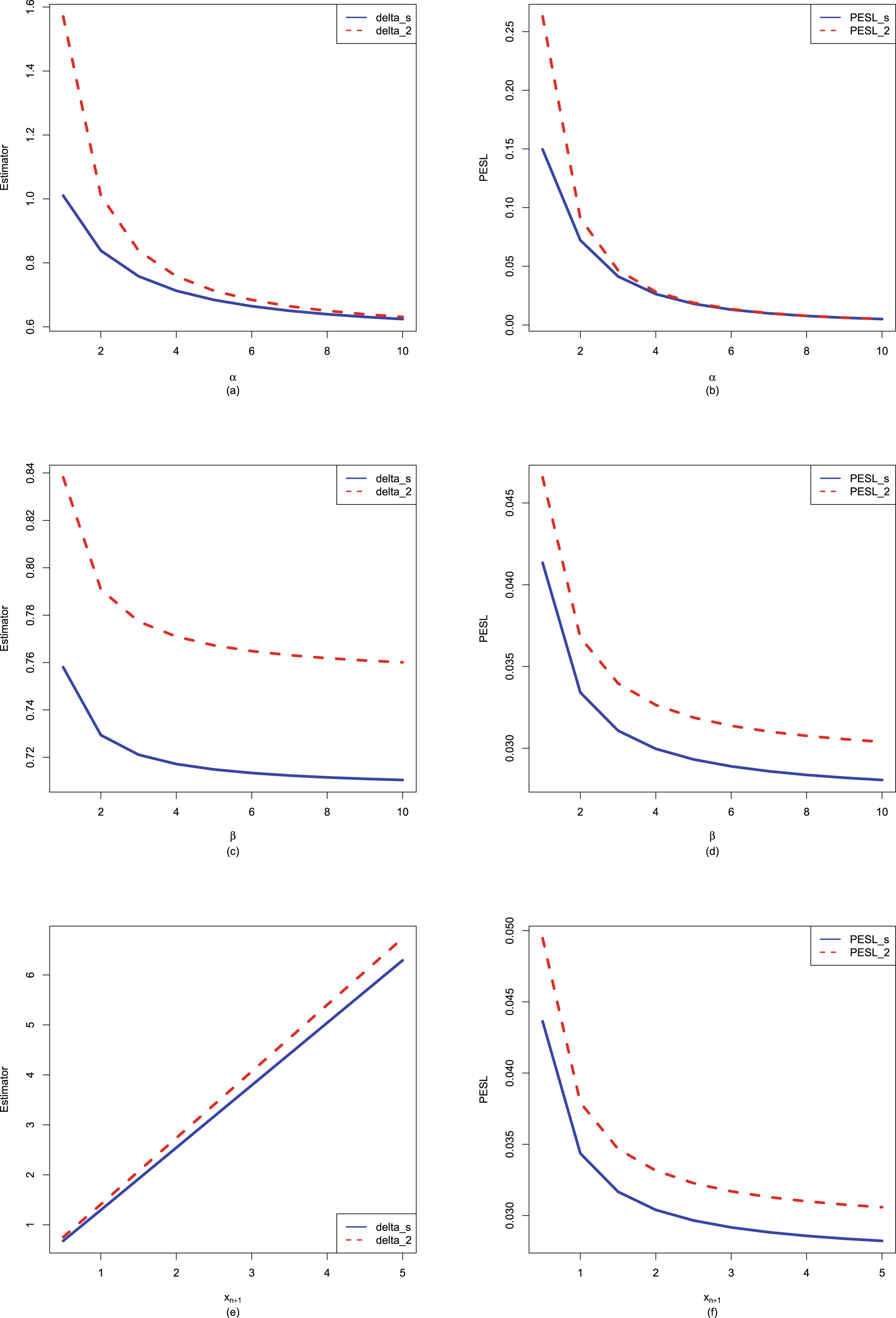

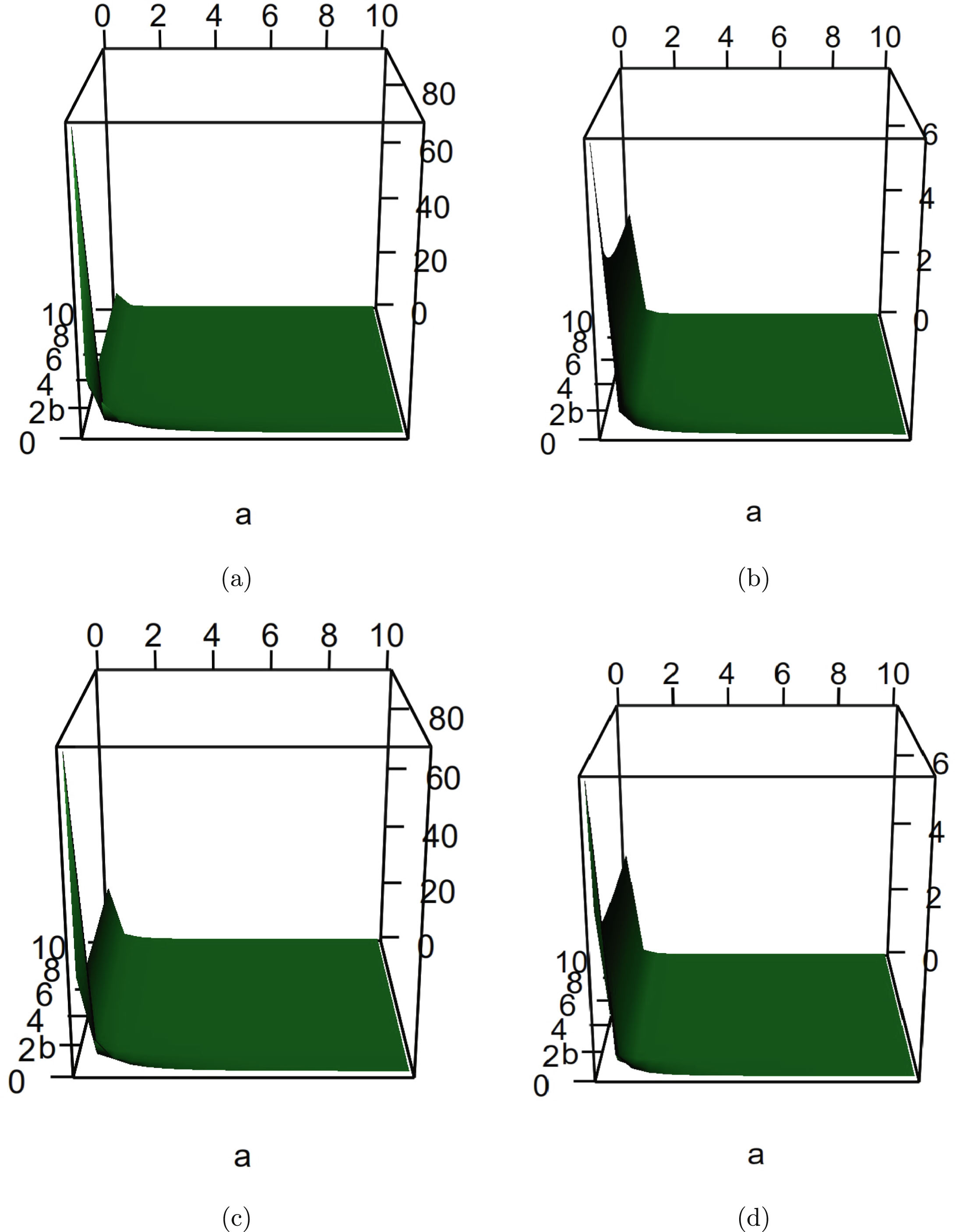

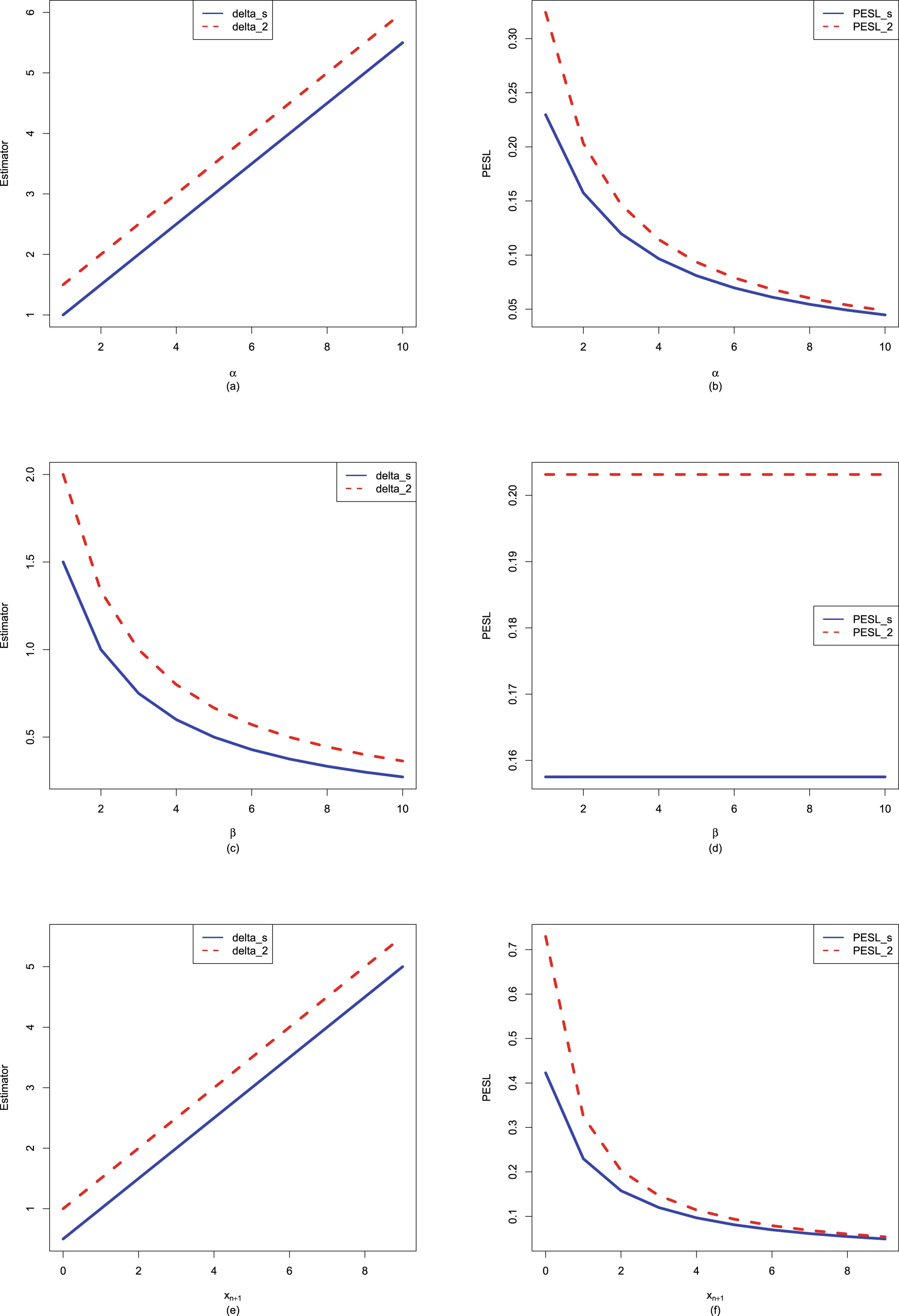

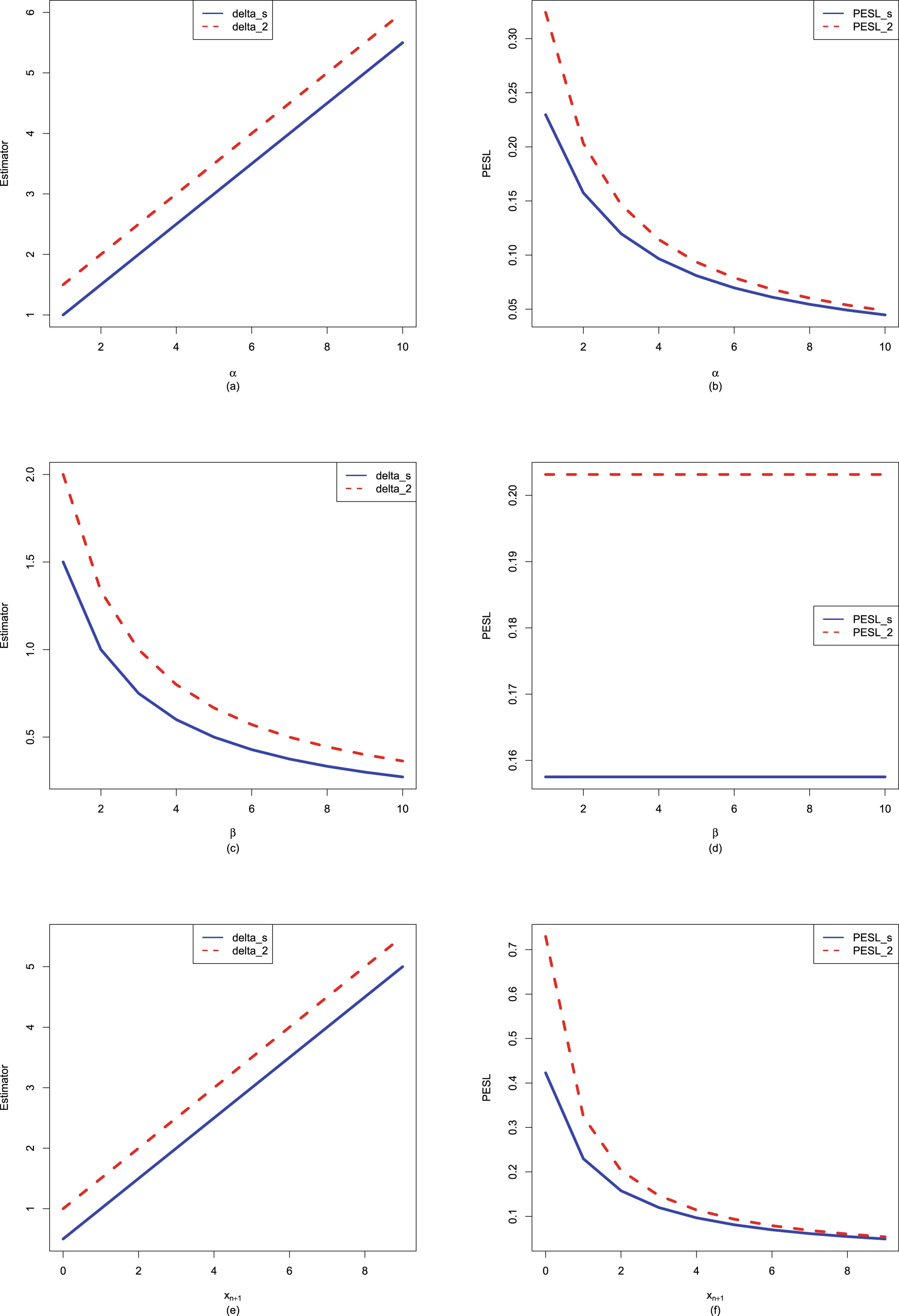

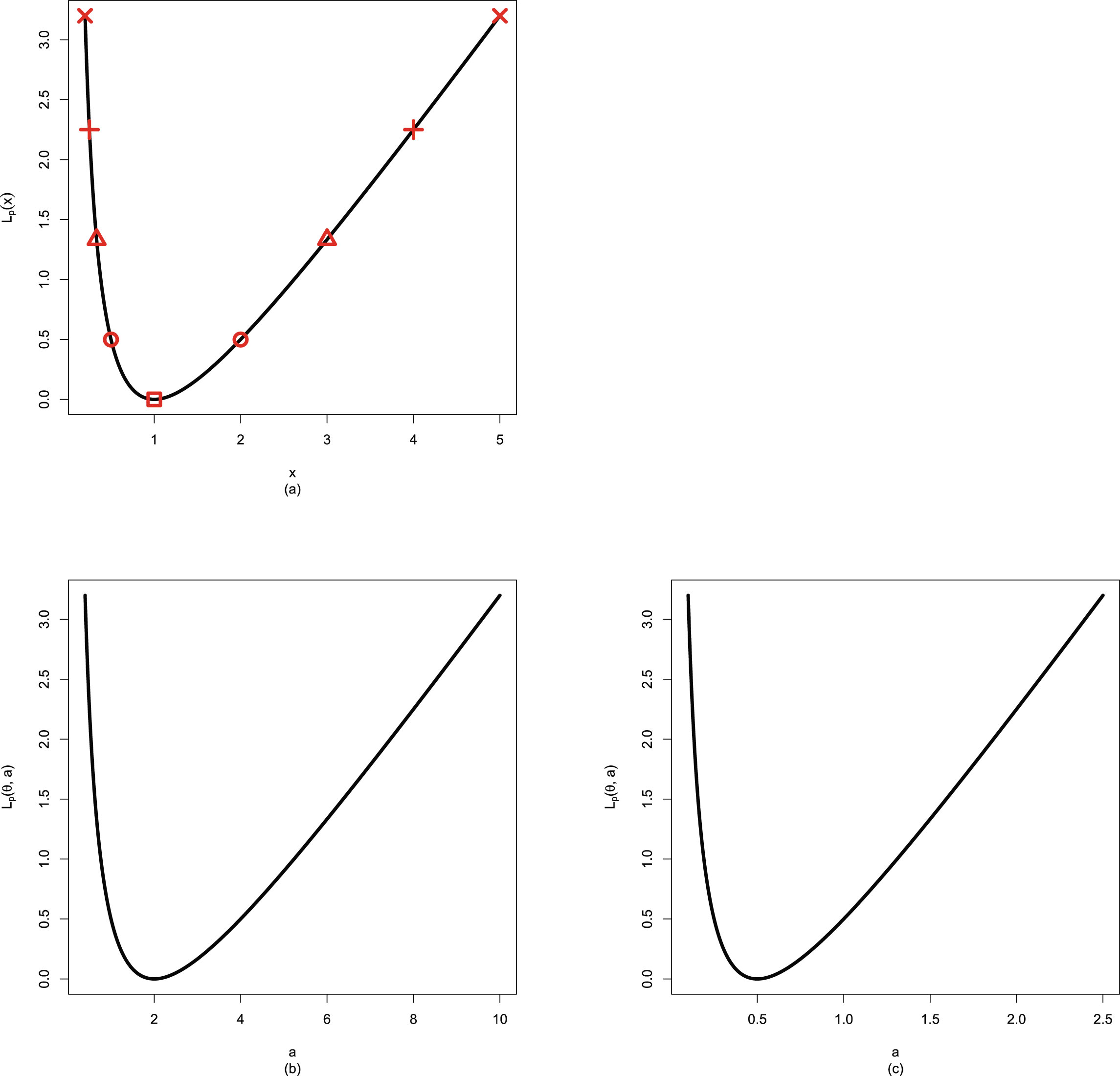

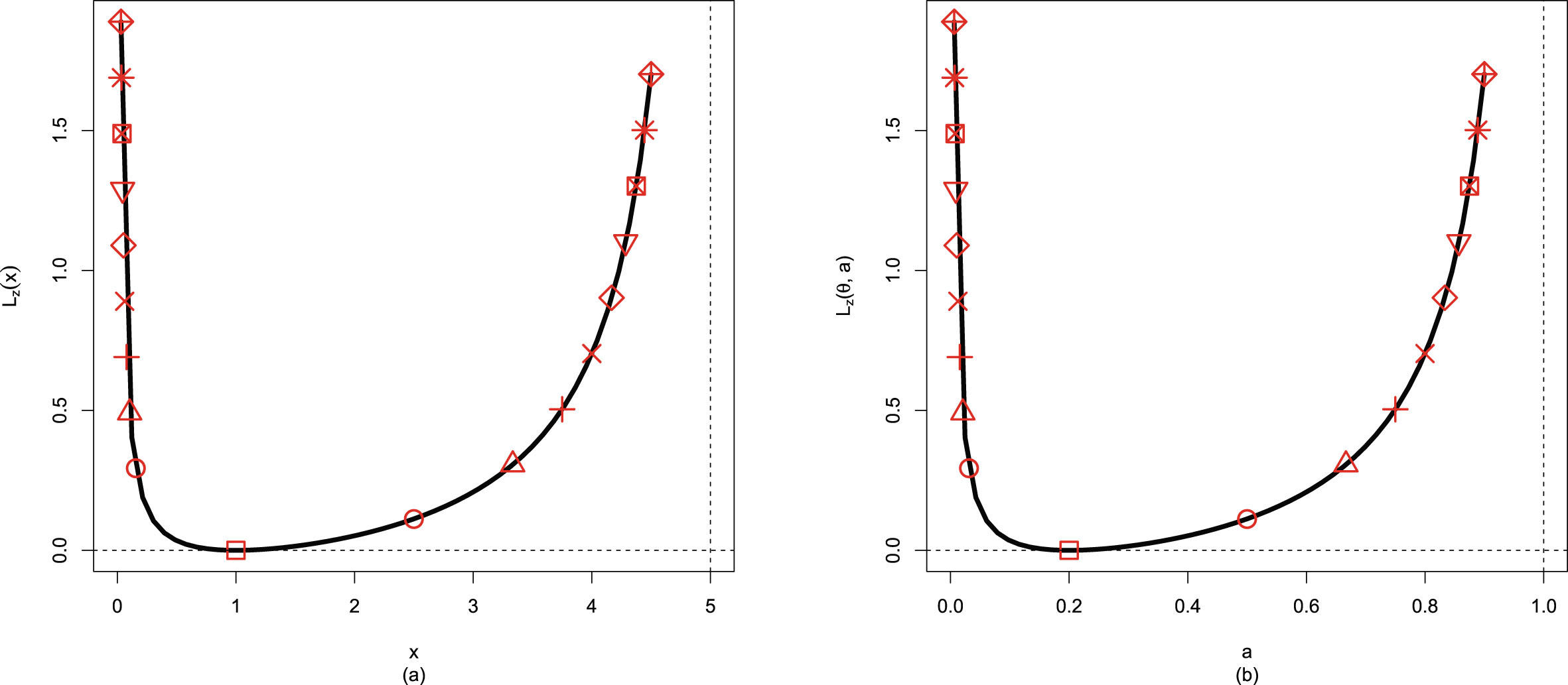

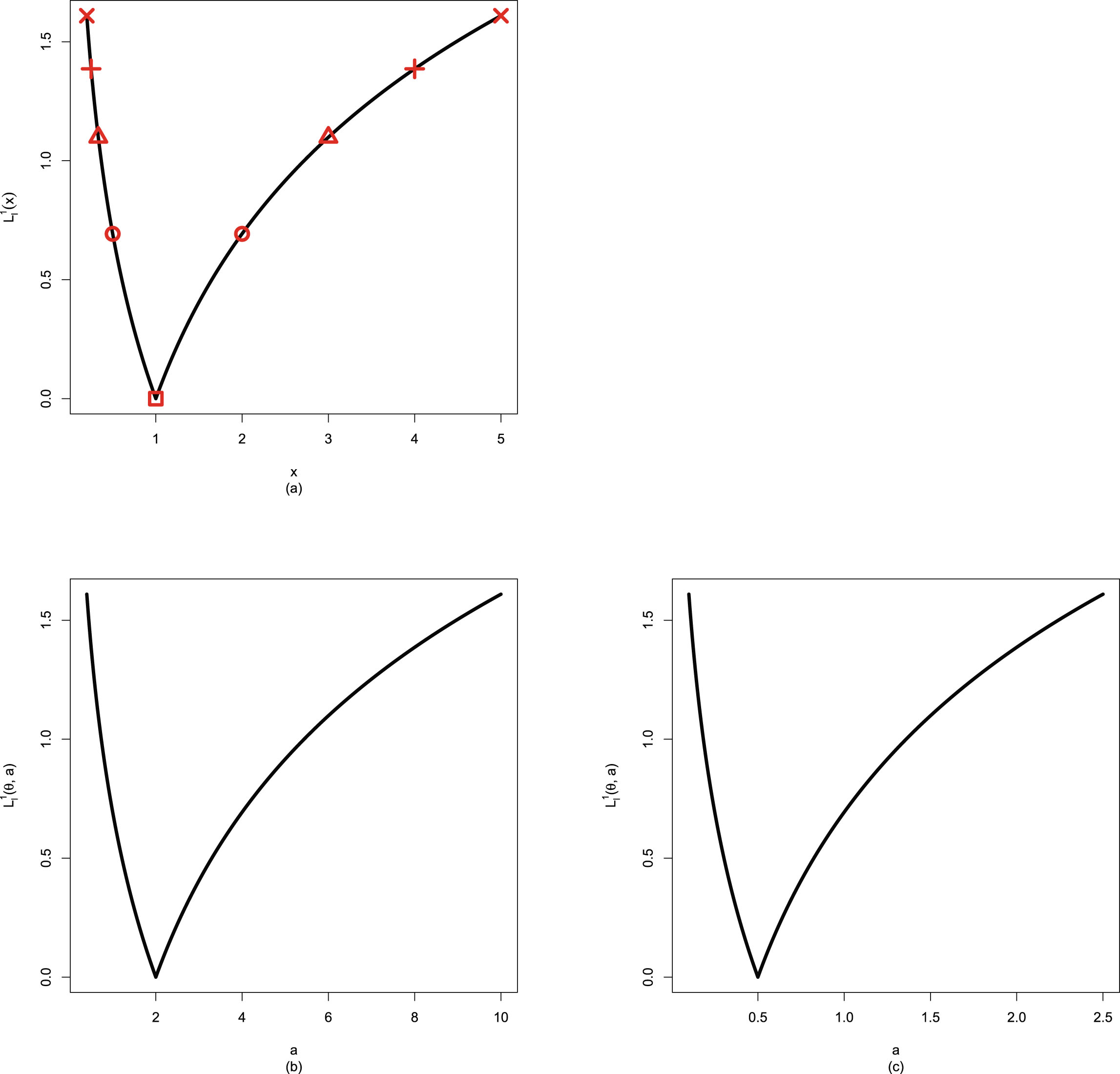

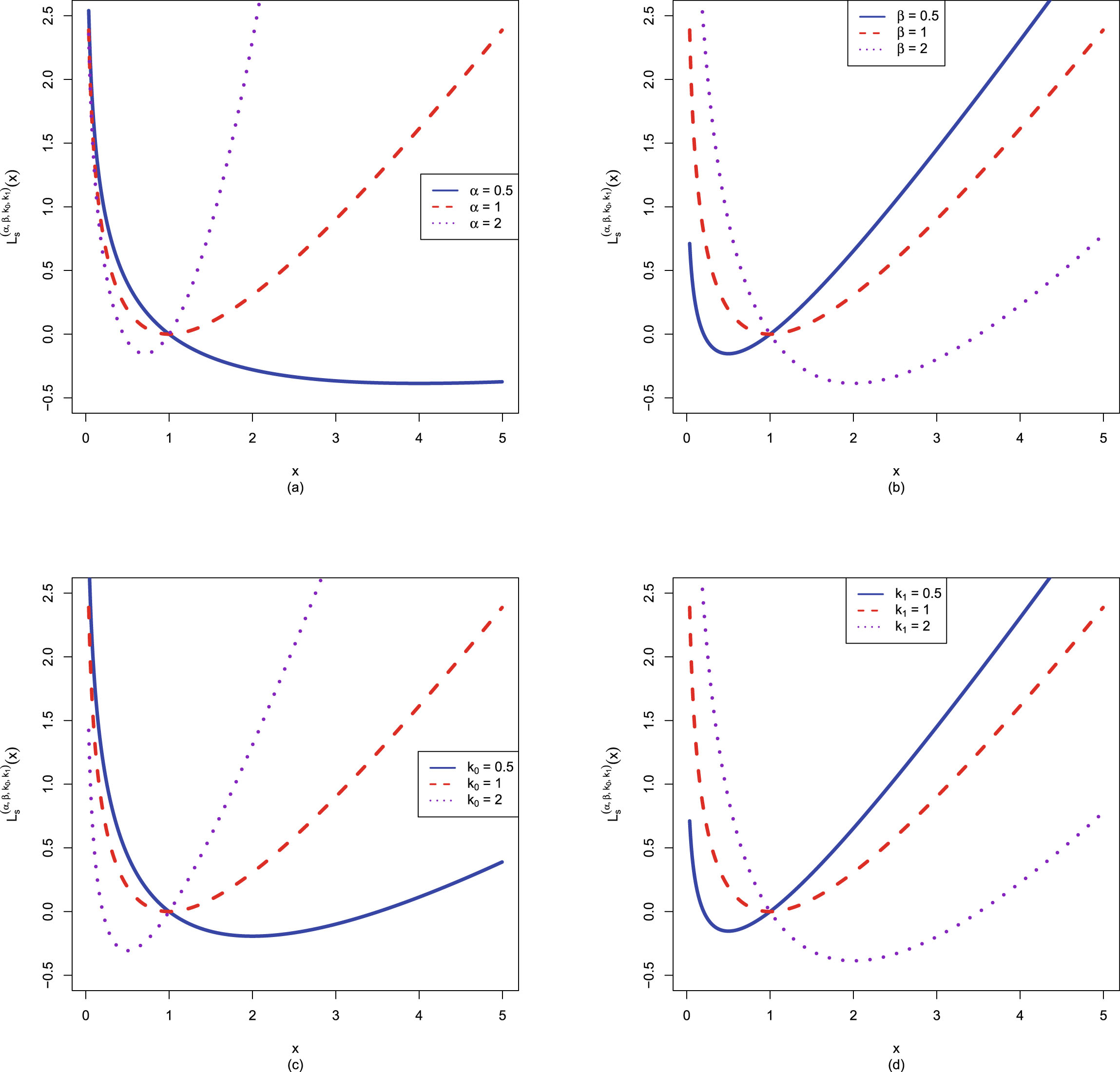

In figure 2.2, we fix  ,

,  , and

, and  , but allow

, but allow  to change from 1 to 10. From the figure, we see that the Bayes estimators and PESLs are functions of

to change from 1 to 10. From the figure, we see that the Bayes estimators and PESLs are functions of  . The numerical values of the Bayes estimators and the PESLs in the figure are displayed in table 2.1. We see from plot (a) or the first two lines of table 2.1 that the Bayes estimators are decreasing functions of

. The numerical values of the Bayes estimators and the PESLs in the figure are displayed in table 2.1. We see from plot (a) or the first two lines of table 2.1 that the Bayes estimators are decreasing functions of  , and

, and  are unanimously smaller than

are unanimously smaller than  , and thus (2.6) is exemplified. Plot (b) or the last two lines of table 2.1 exhibit that the PESLs do not depend on

, and thus (2.6) is exemplified. Plot (b) or the last two lines of table 2.1 exhibit that the PESLs do not depend on  , and

, and  are unanimously smaller than

are unanimously smaller than  , and thus (2.9) is exemplified.

, and thus (2.9) is exemplified.

FIG. 2.2 — IG-IG: The Bayes estimators and the PESLs as functions of  . (a) Bayes estimators. (b) PESLs.

. (a) Bayes estimators. (b) PESLs.

TAB. 2.1 — IG-IG: The numerical values of the Bayes estimators and the PESLs in figure 2.2:  changes.

changes.

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | |

| 0.2143 | 0.1429 | 0.1190 | 0.1071 | 0.1000 | 0.0952 | 0.0918 | 0.0893 | 0.0873 | 0.0857 | |

| 0.2500 | 0.1667 | 0.1389 | 0.1250 | 0.1167 | 0.1111 | 0.1071 | 0.1042 | 0.1019 | 0.1000 | |

| 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | |

| 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 |

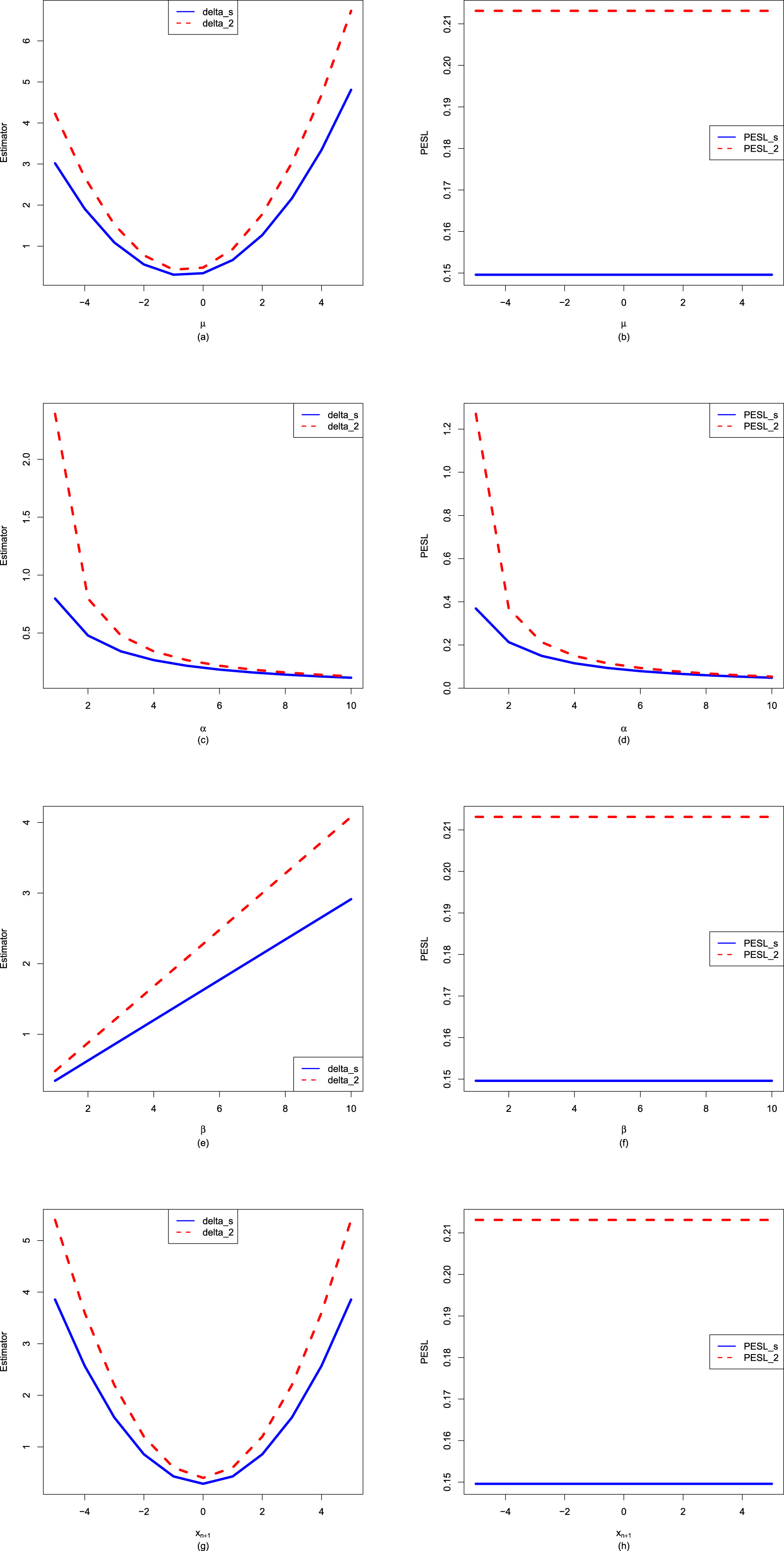

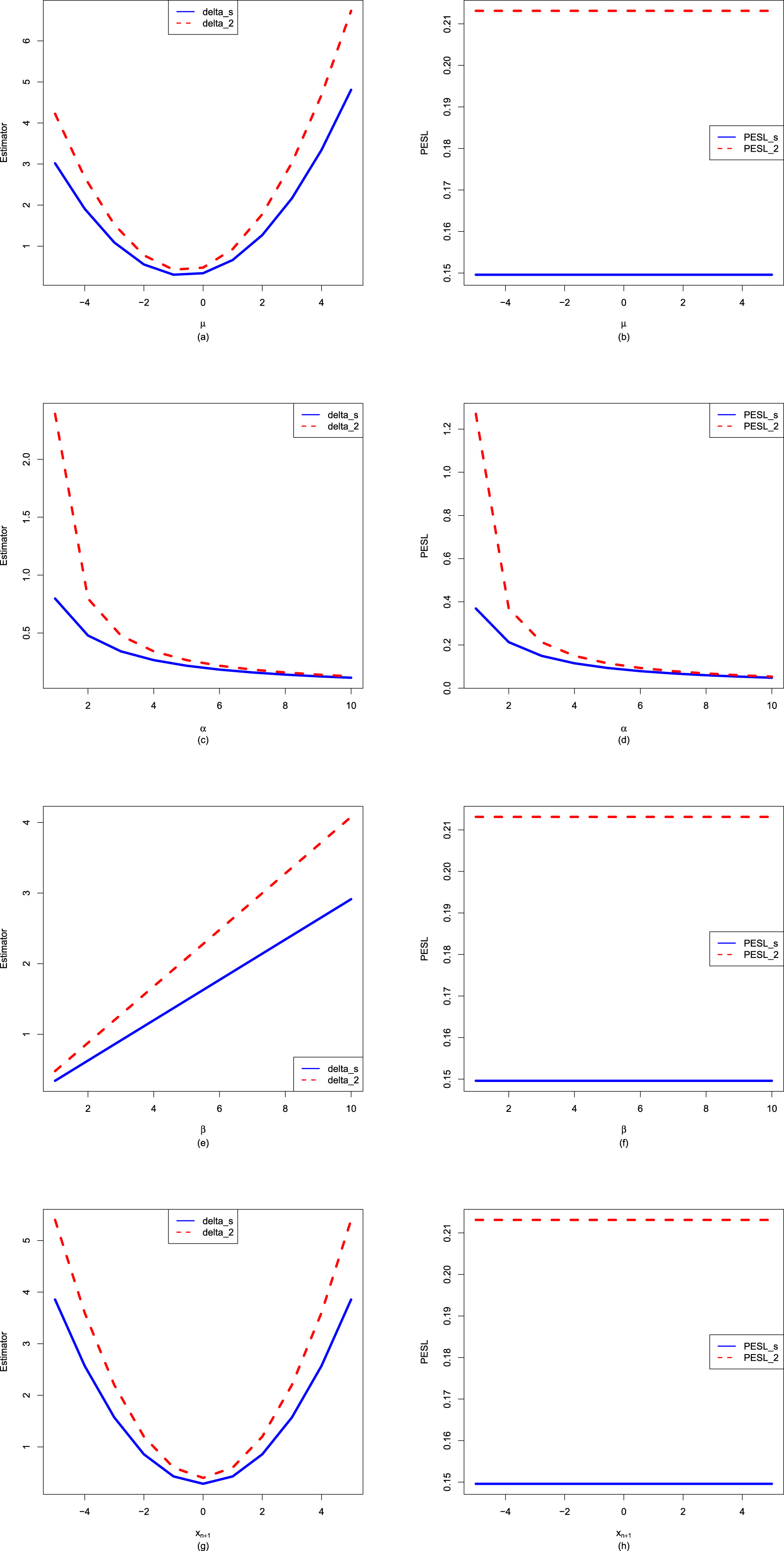

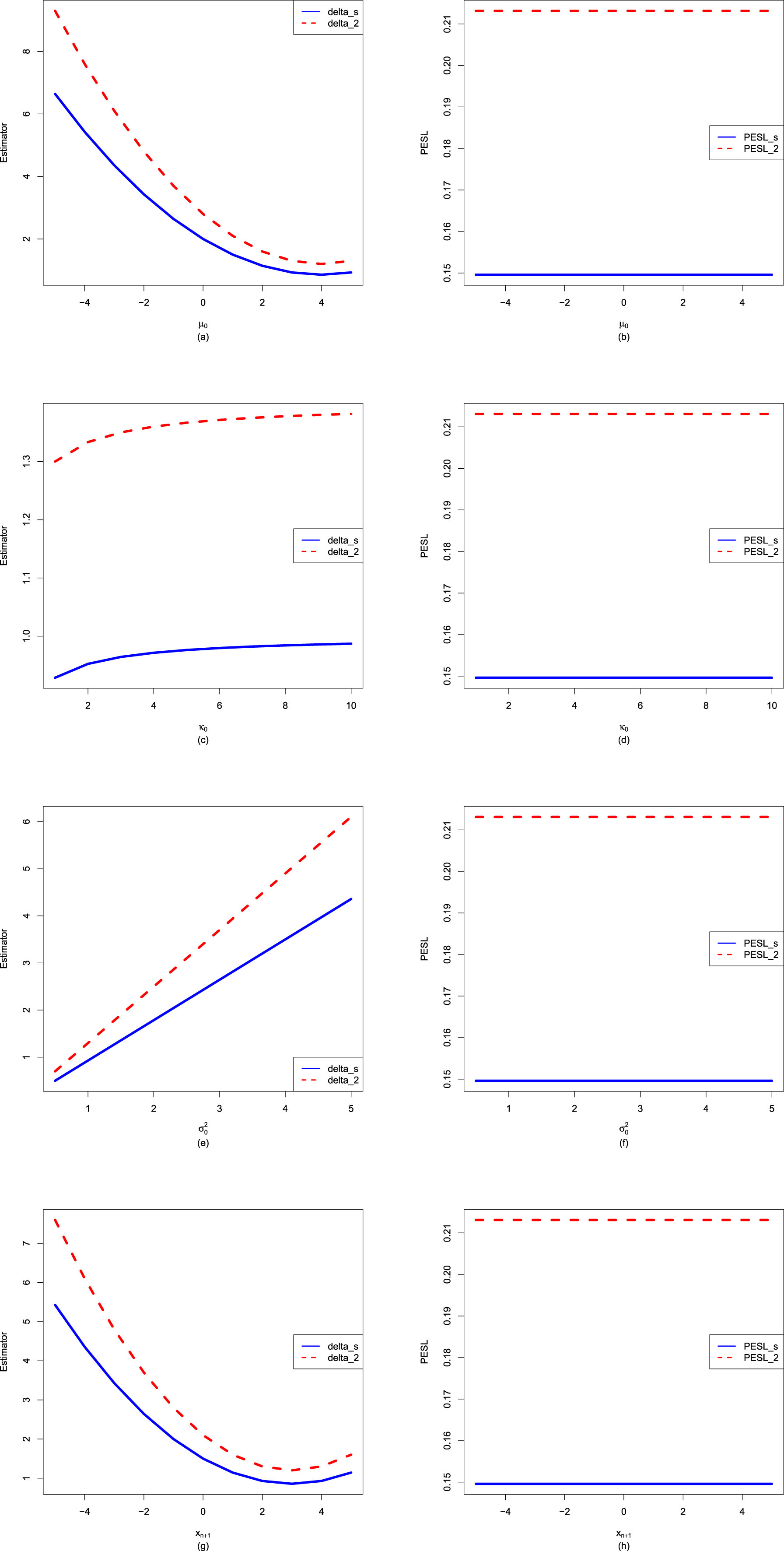

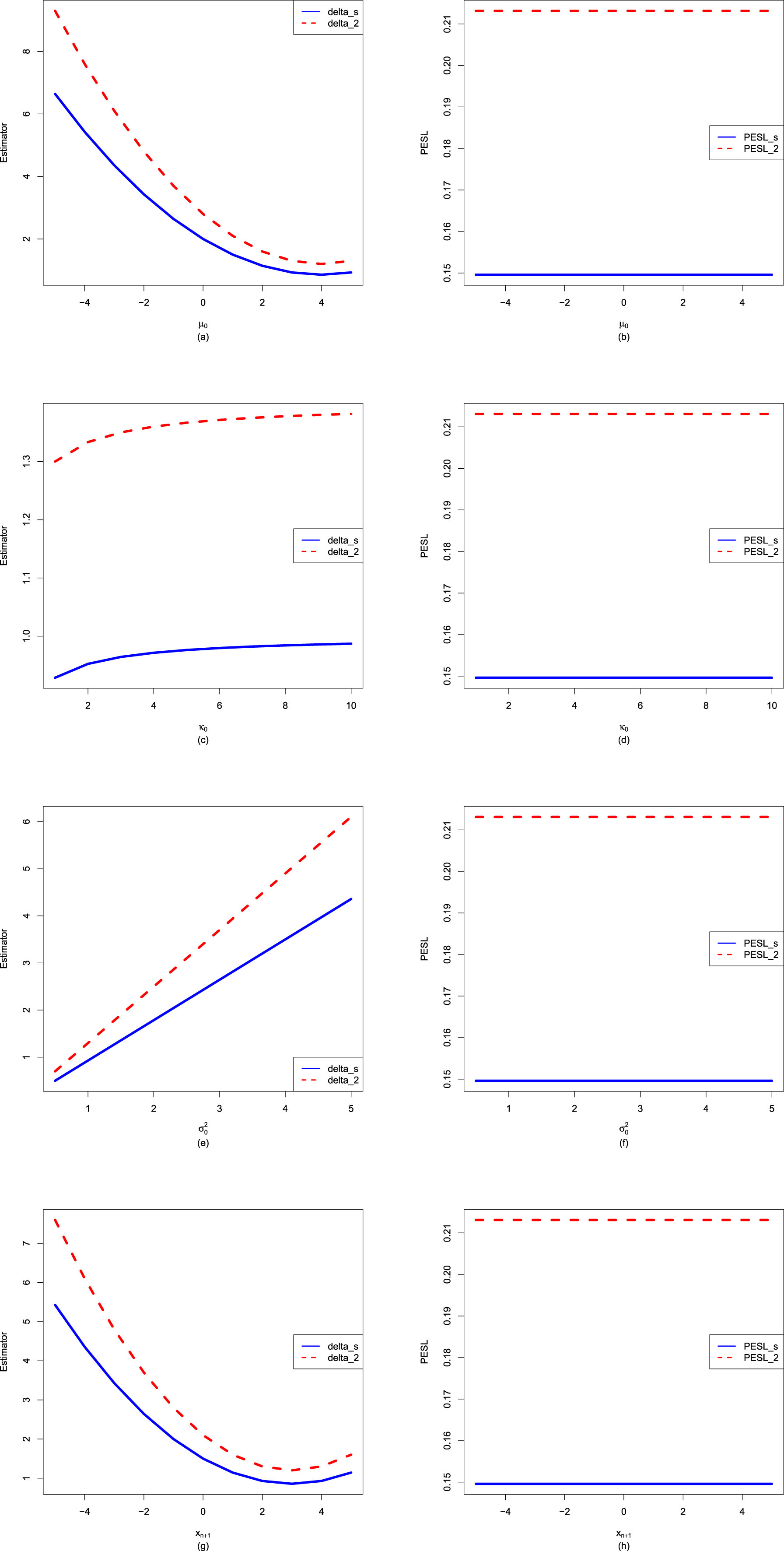

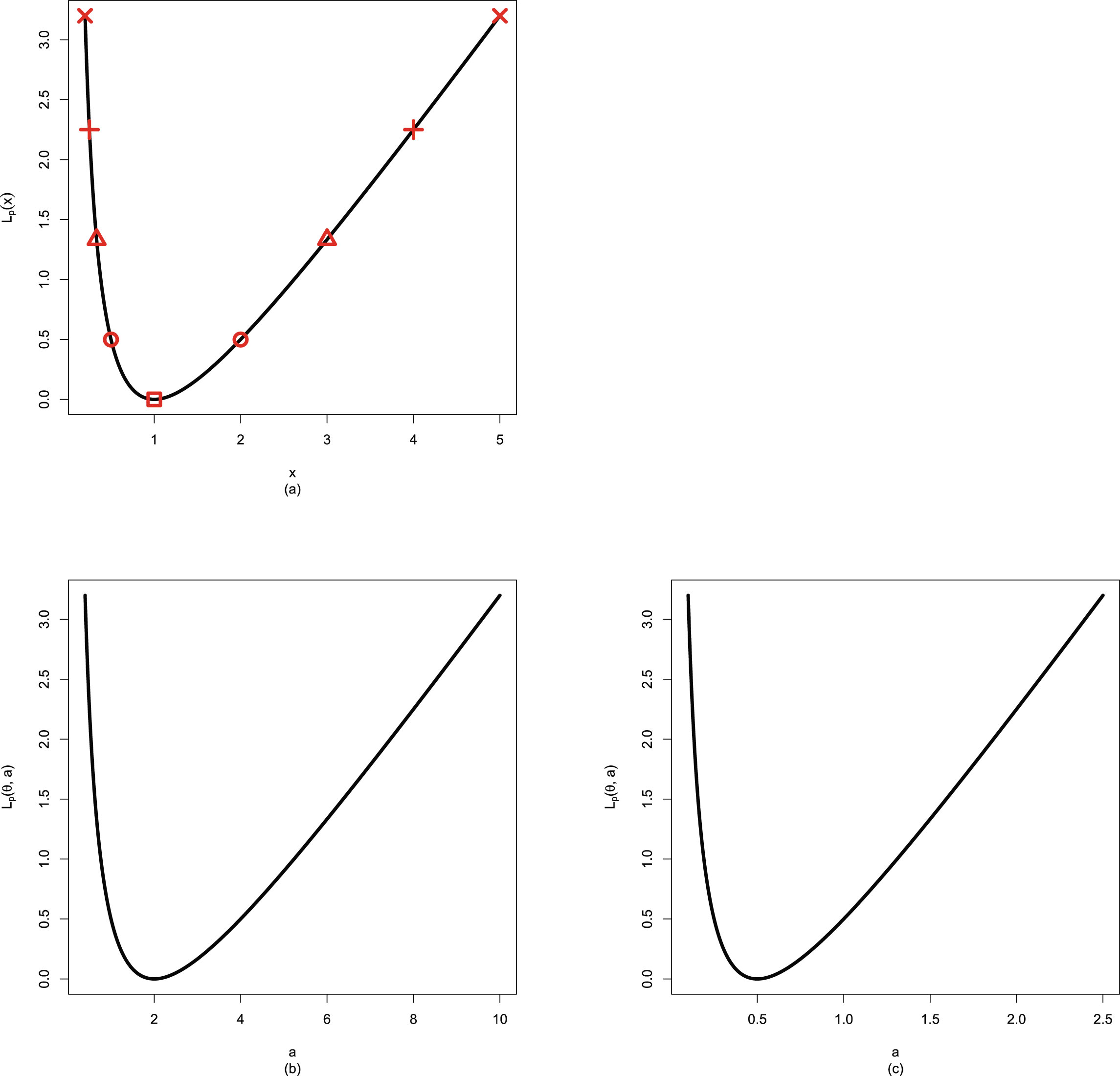

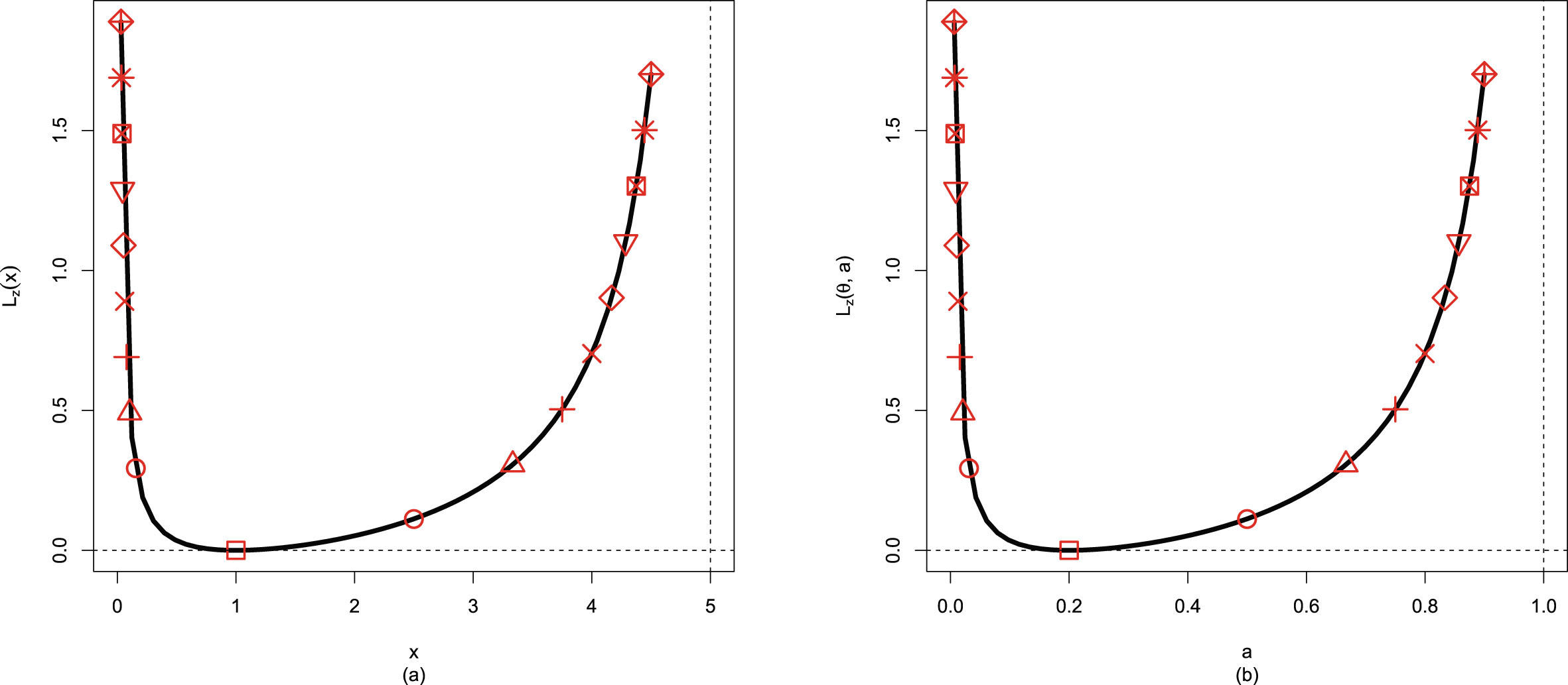

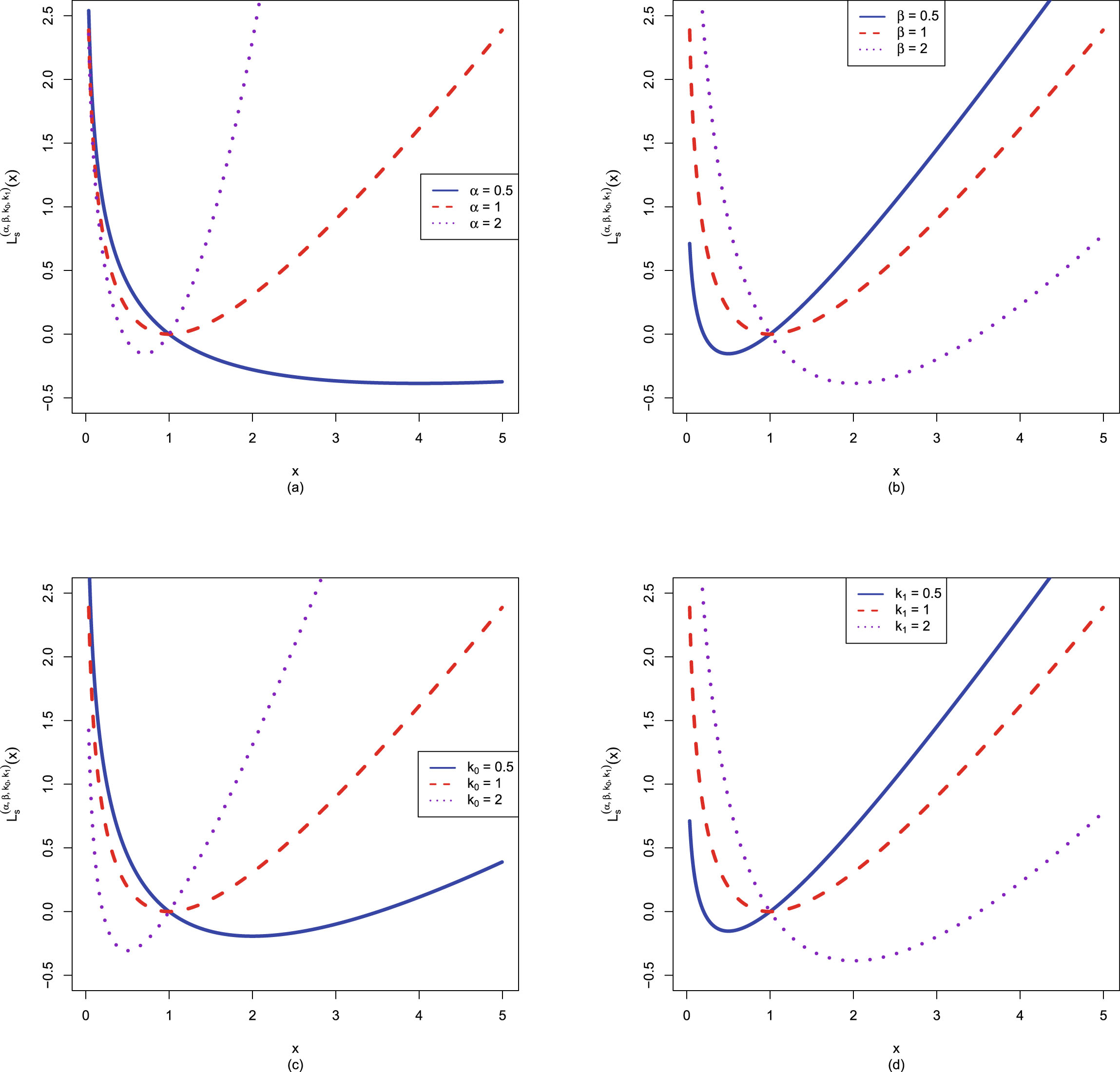

Now we allow one of the three parameters  ,

,  , and

, and  to change, holding other parameters fixed. Moreover, we also assume that the datum

to change, holding other parameters fixed. Moreover, we also assume that the datum  is fixed, as is the case for the real data. Figure 2.3 shows the Bayes estimators and the PESLs as functions of

is fixed, as is the case for the real data. Figure 2.3 shows the Bayes estimators and the PESLs as functions of  ,

,  , and

, and  . We see from the left plots of the figure that the Bayes estimators depend on

. We see from the left plots of the figure that the Bayes estimators depend on  ,

,  , and

, and  , and (2.6) is exemplified. The right plots of the figure exhibit that the PESLs depend on

, and (2.6) is exemplified. The right plots of the figure exhibit that the PESLs depend on  and

and  , but not on

, but not on  , and (2.9) is exemplified. Furthermore, tables 2.2–2.4 display the numerical values of the Bayes estimators and the PESLs in figure 2.3. In summary, the results of figure 2.3 and tables 2.2–2.4 exemplify the theoretical studies of (2.6) and (2.9).

, and (2.9) is exemplified. Furthermore, tables 2.2–2.4 display the numerical values of the Bayes estimators and the PESLs in figure 2.3. In summary, the results of figure 2.3 and tables 2.2–2.4 exemplify the theoretical studies of (2.6) and (2.9).

FIG. 2.3 — IG-IG: The Bayes estimators and the PESLs as functions of  ,

,  , and

, and  . (a) Bayes estimators vs.

. (a) Bayes estimators vs.  . (b) PESLs vs.

. (b) PESLs vs.  . (c) Bayes estimators vs.

. (c) Bayes estimators vs.  . (d) PESLs vs.

. (d) PESLs vs.  . (e) Bayes estimators vs.

. (e) Bayes estimators vs.  . (f) PESLs vs.

. (f) PESLs vs.  .

.

TAB. 2.2 — IIG-IG: The numerical values of the Bayes estimators and the PESLs in figure 2.3:  changes.

changes.

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | |

| 2.8077 | 2.4066 | 2.1058 | 1.8718 | 1.6846 | 1.5315 | 1.4038 | 1.2958 | 1.2033 | 1.1231 | |

| 3.3692 | 2.8077 | 2.4066 | 2.1058 | 1.8718 | 1.6846 | 1.5315 | 1.4038 | 1.2958 | 1.2033 | |

| 0.0856 | 0.0731 | 0.0638 | 0.0566 | 0.0508 | 0.0461 | 0.0422 | 0.0390 | 0.0361 | 0.0337 | |

| 0.1033 | 0.0856 | 0.0731 | 0.0638 | 0.0566 | 0.0508 | 0.0461 | 0.0422 | 0.0390 | 0.0361 |

TAB. 2.3 — IIG-IG: The numerical values of the Bayes estimators and the PESLs in figure 2.3:  changes.

changes.

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | |

| 2.4780 | 2.4066 | 2.3828 | 2.3709 | 2.3637 | 2.3590 | 2.3556 | 2.3530 | 2.3510 | 2.3494 | |

| 2.8910 | 2.8077 | 2.7799 | 2.7660 | 2.7577 | 2.7521 | 2.7481 | 2.7452 | 2.7429 | 2.7410 | |

| 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | 0.0731 | |

| 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 | 0.0856 |

TAB. 2.4 — IIG-IG: The numerical values of the Bayes estimators and the PESLs in figure 2.3:  changes.

changes.

| 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | |

| 6.7943 | 5.9450 | 5.2844 | 4.7560 | 4.3236 | 3.9633 | 3.6585 | 3.3971 | 3.1707 | 2.9725 | |

| 7.9267 | 6.7943 | 5.9450 | 5.2844 | 4.7560 | 4.3236 | 3.9633 | 3.6585 | 3.3971 | 3.1707 | |

| 0.0731 | 0.0638 | 0.0566 | 0.0508 | 0.0461 | 0.0422 | 0.0390 | 0.0361 | 0.0337 | 0.0316 | |

| 0.0856 | 0.0731 | 0.0638 | 0.0566 | 0.0508 | 0.0461 | 0.0422 | 0.0390 | 0.0361 | 0.0337 |

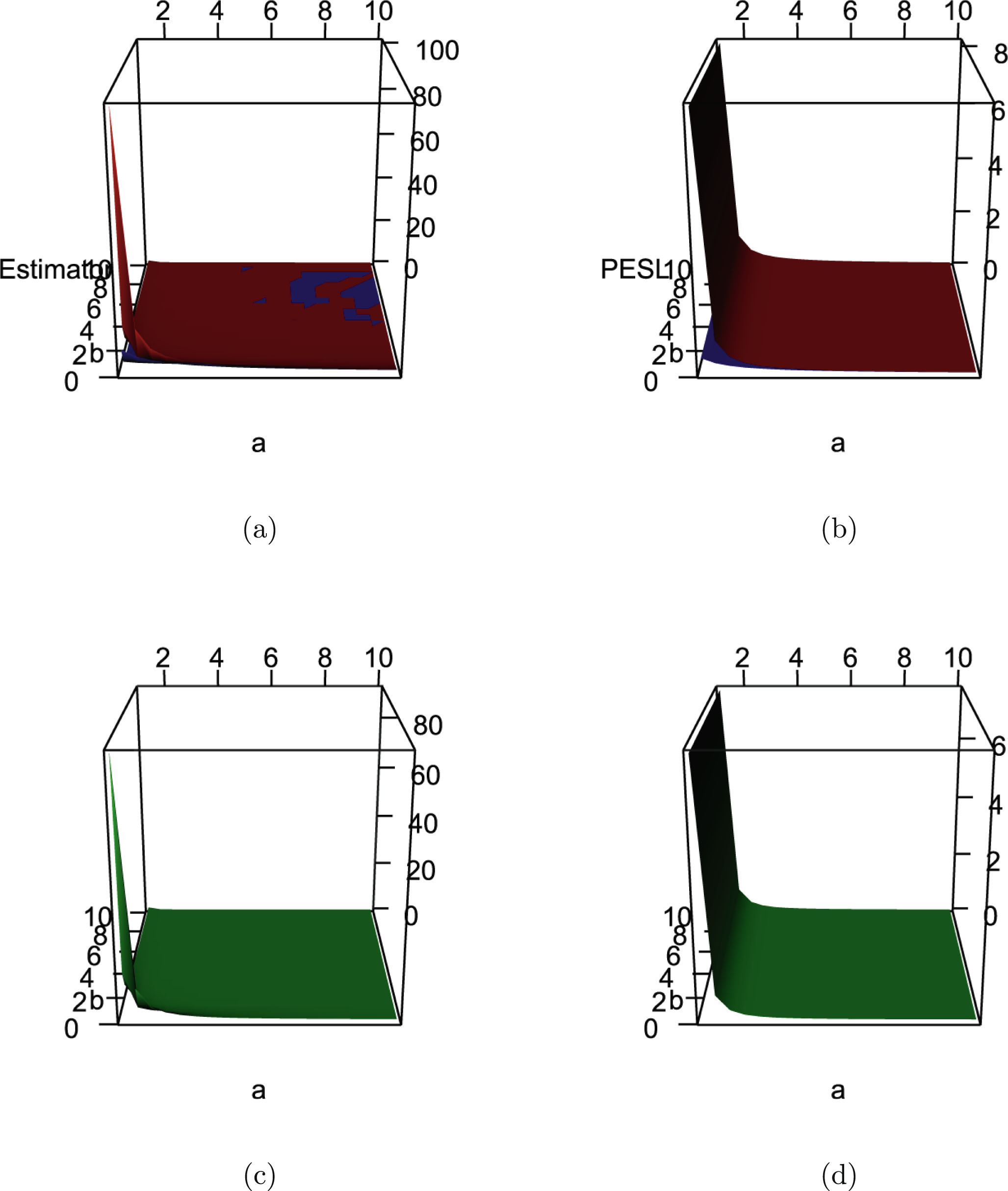

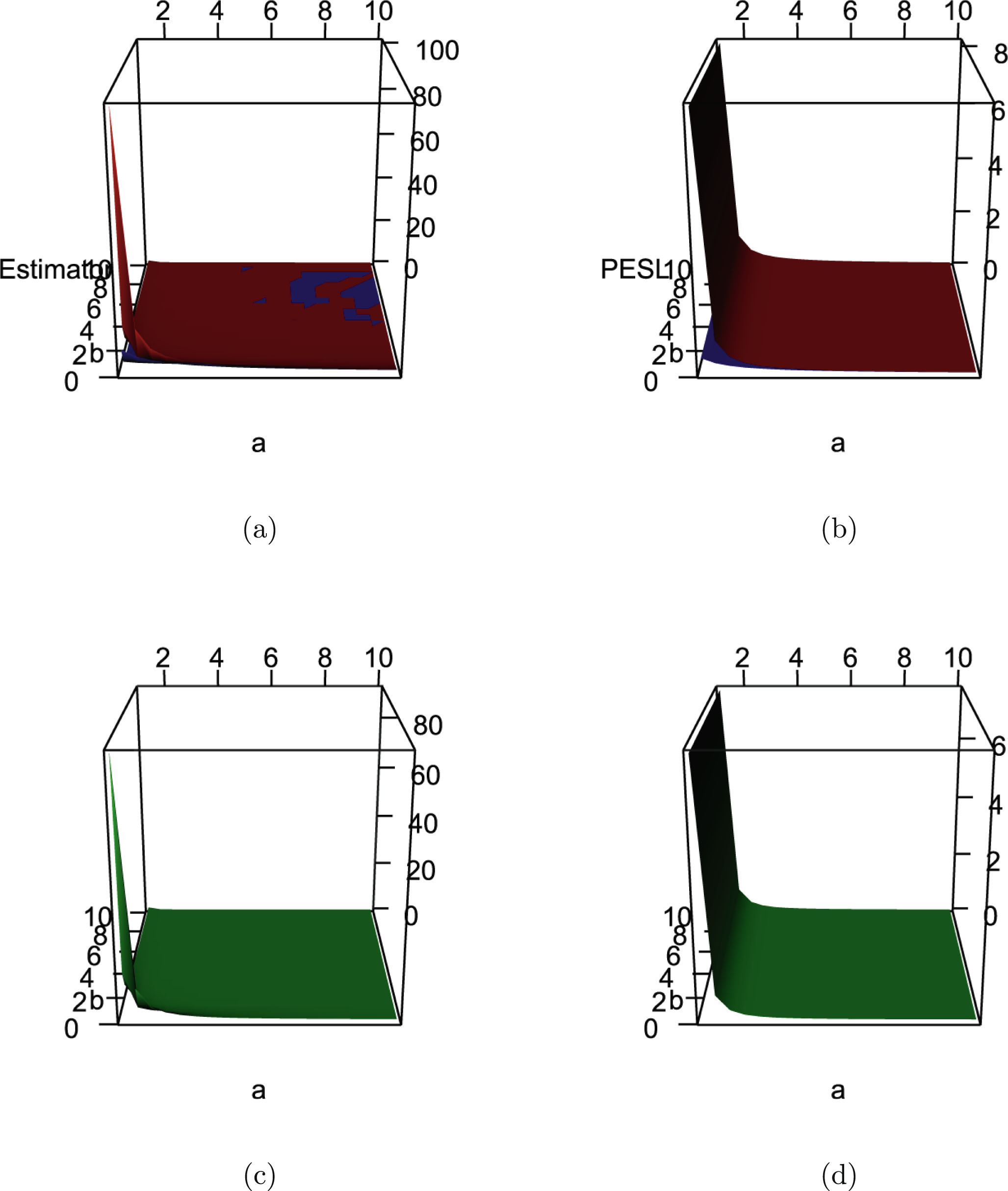

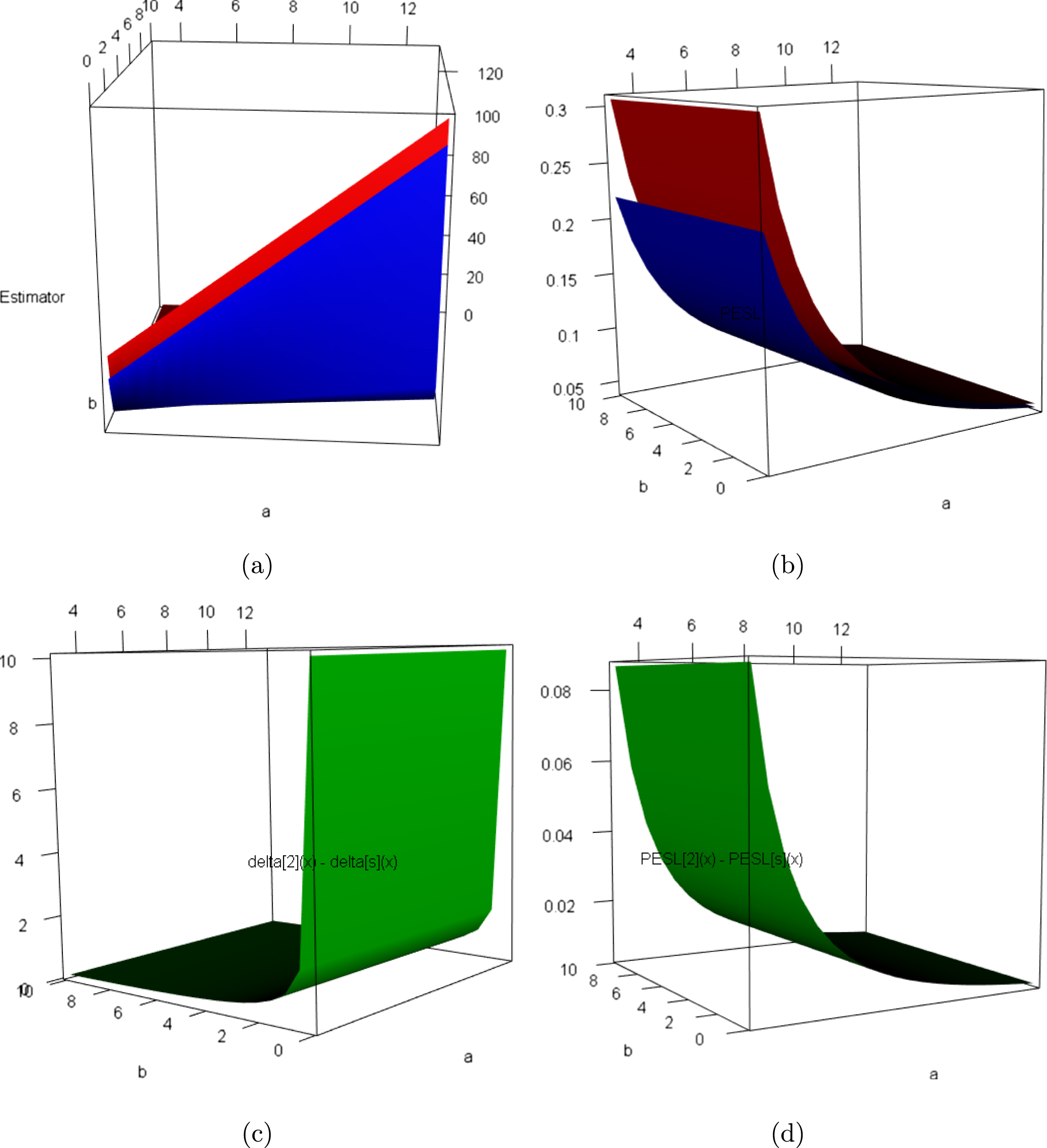

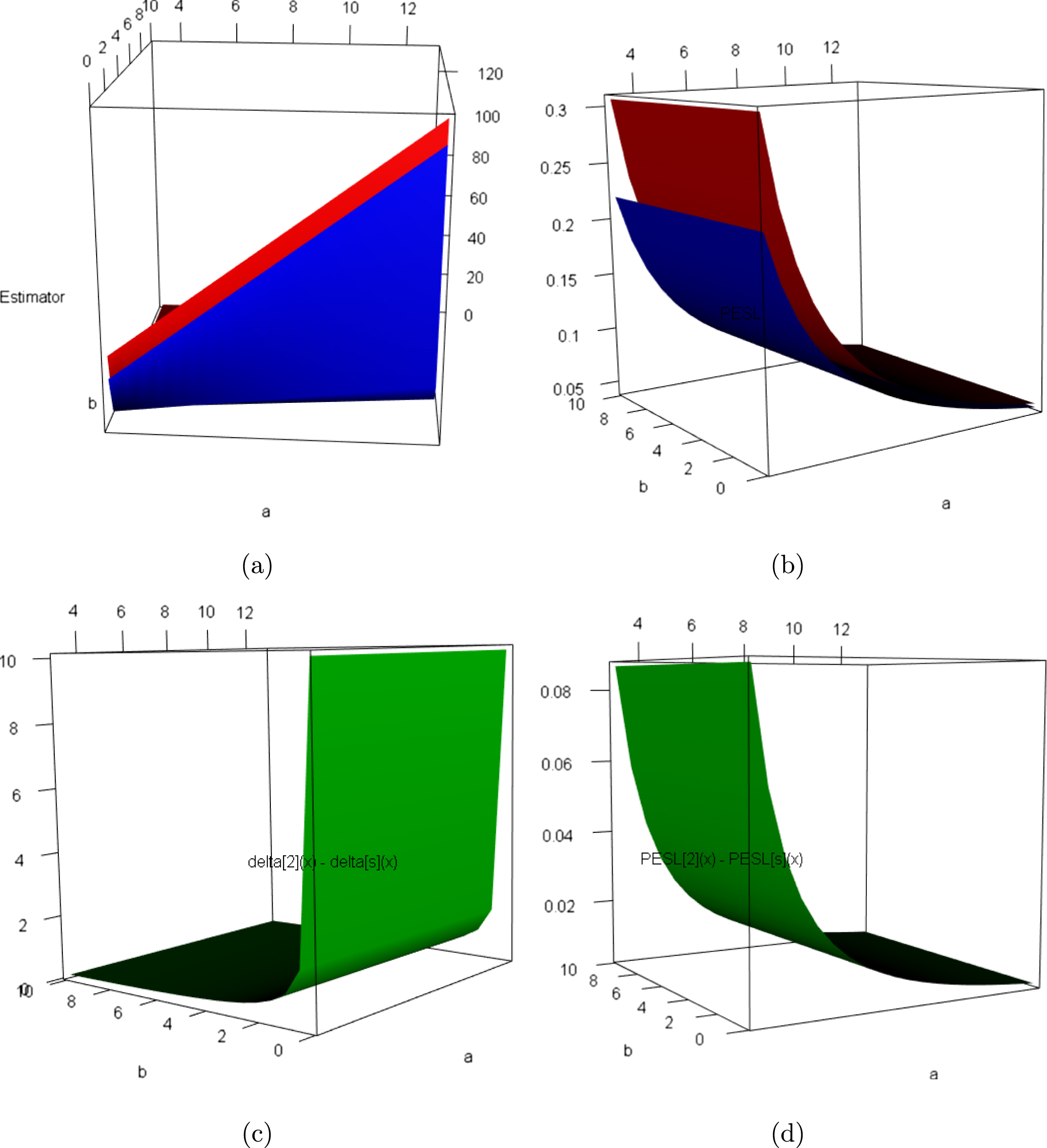

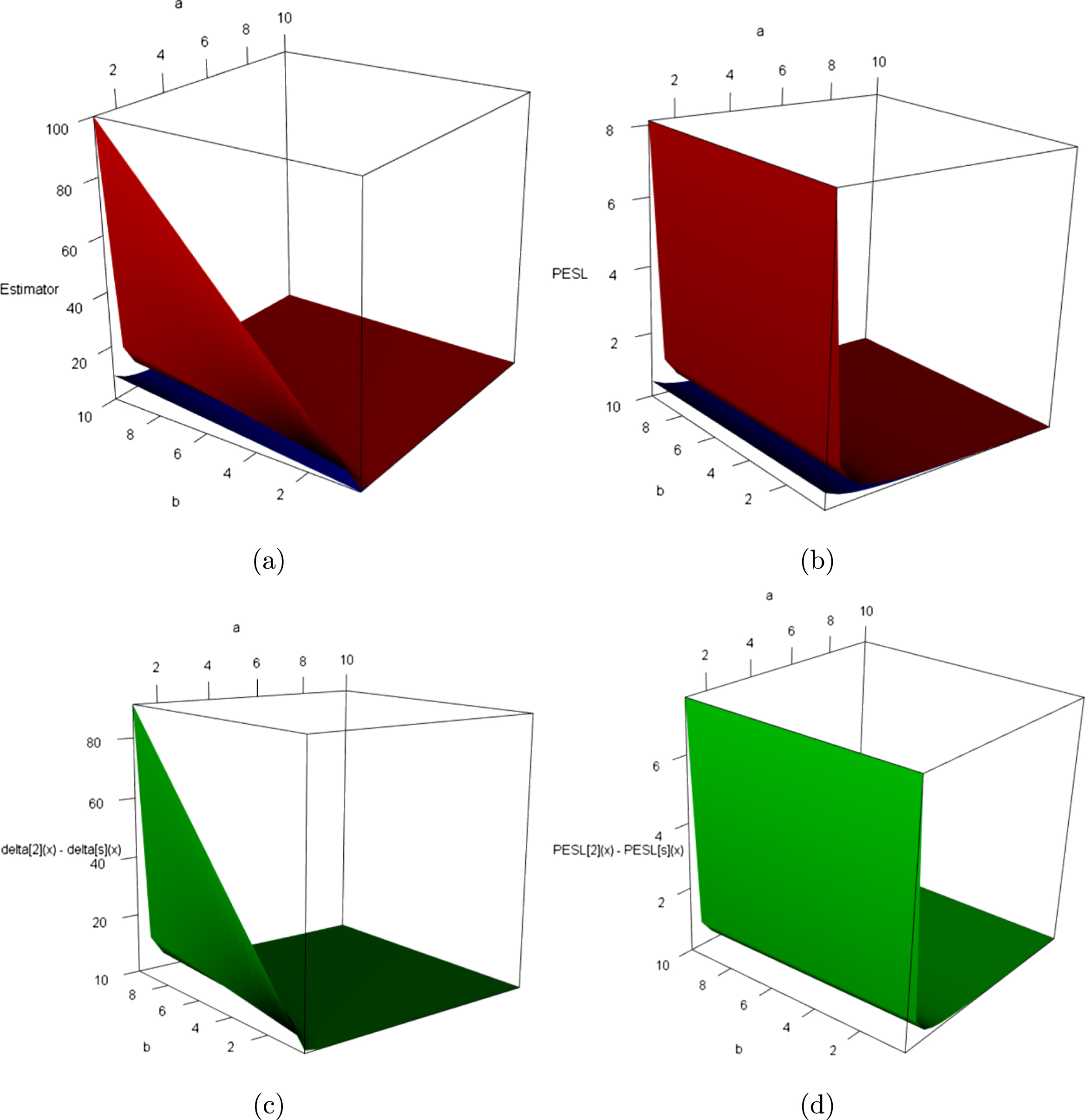

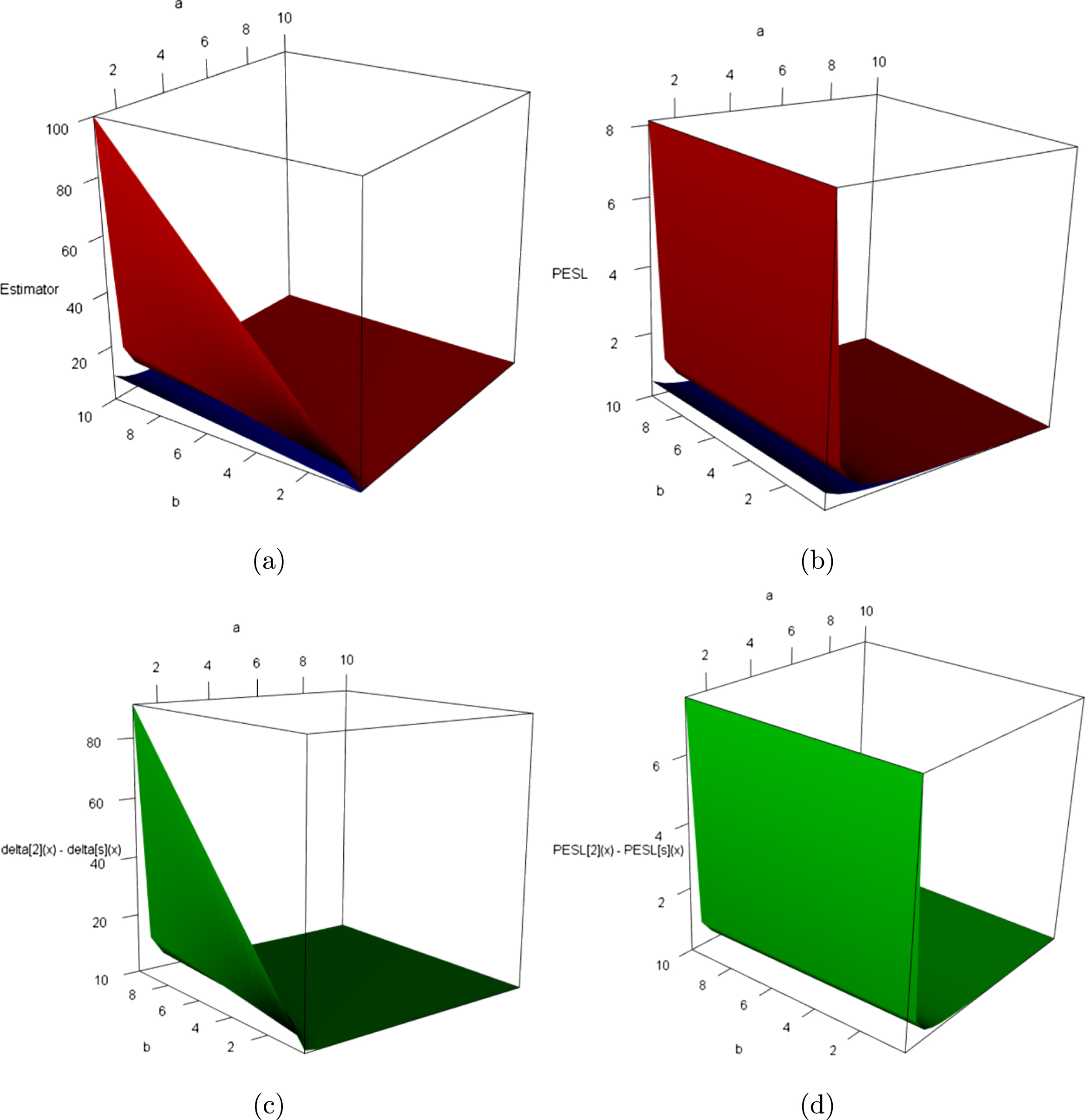

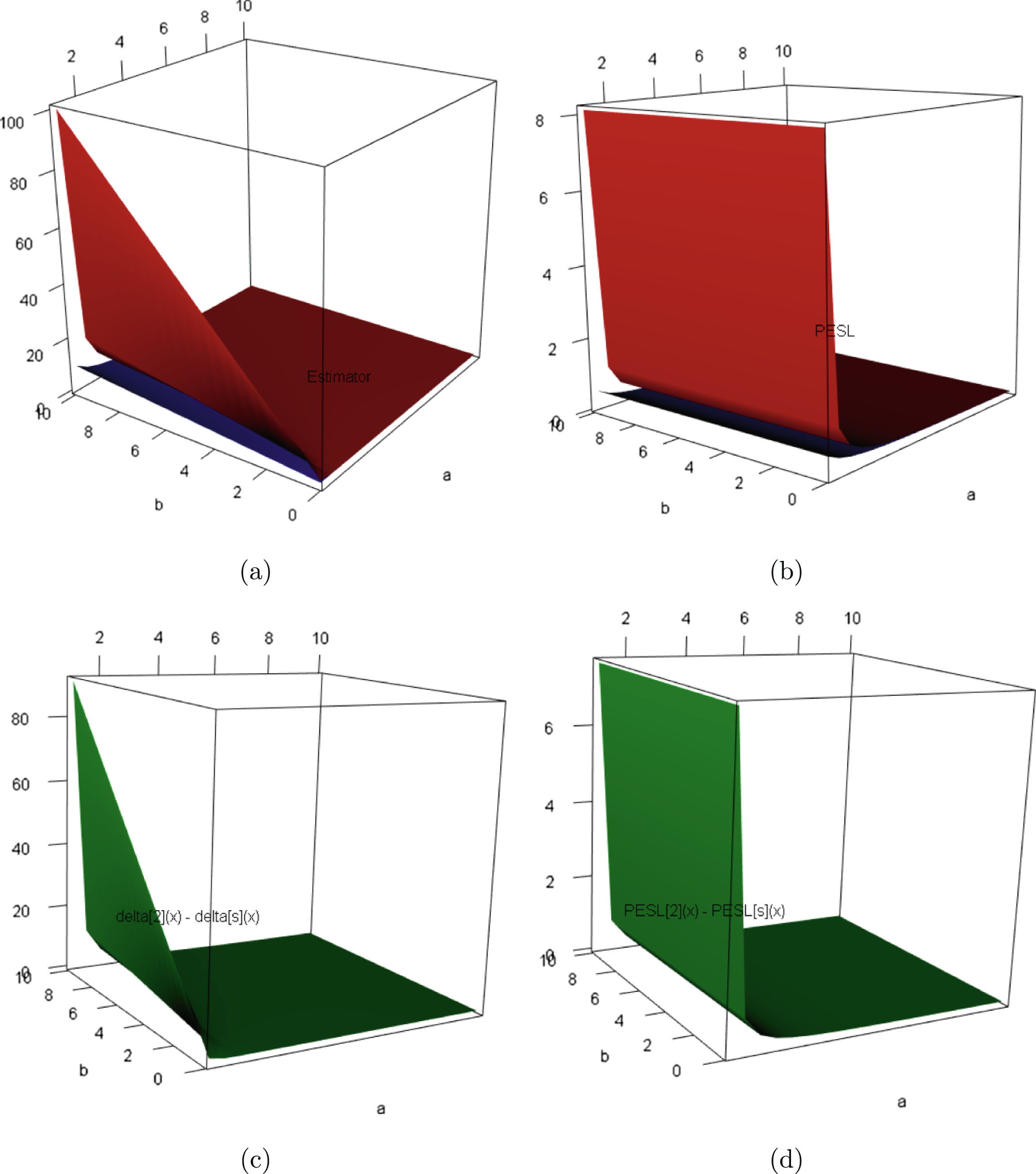

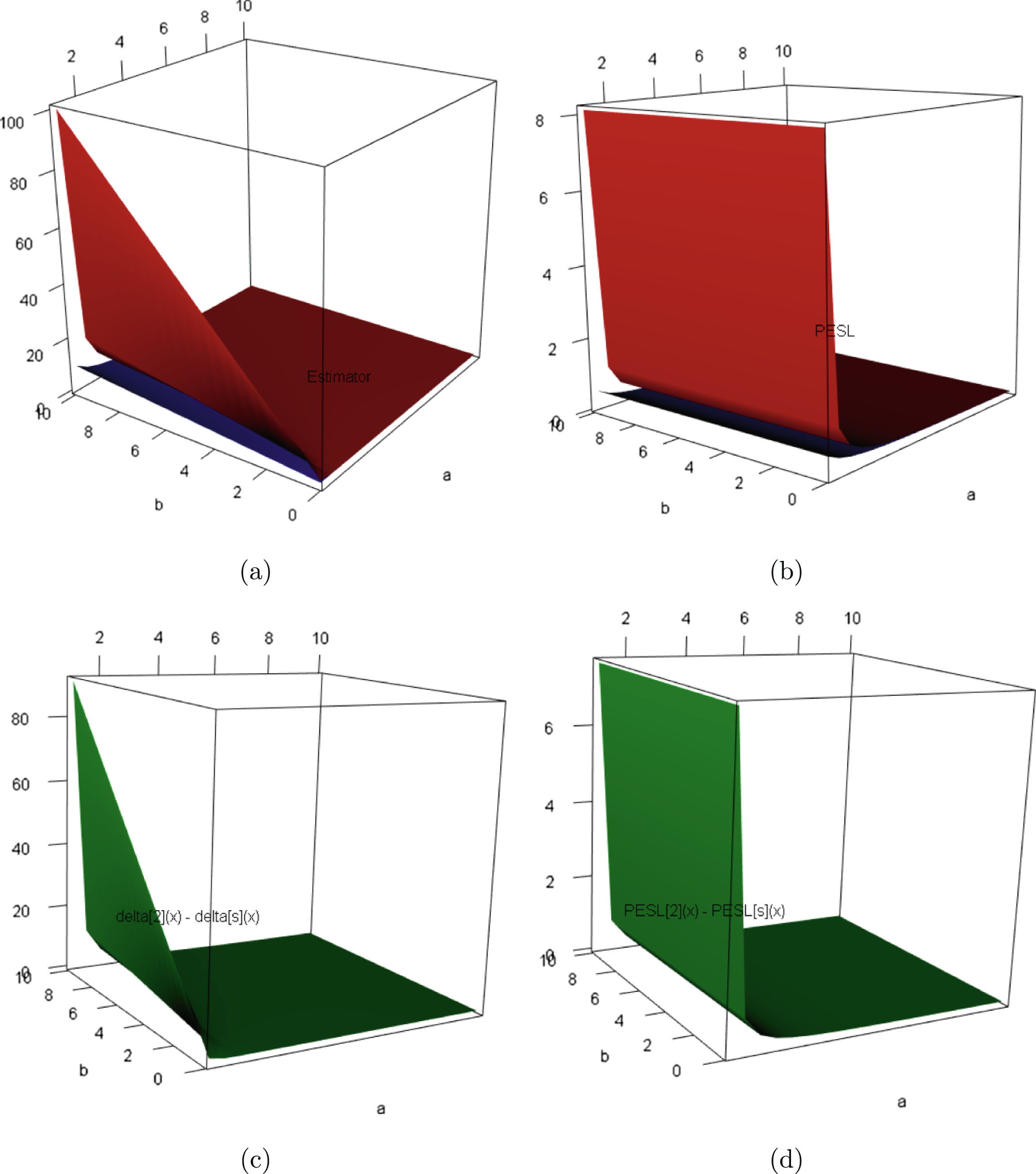

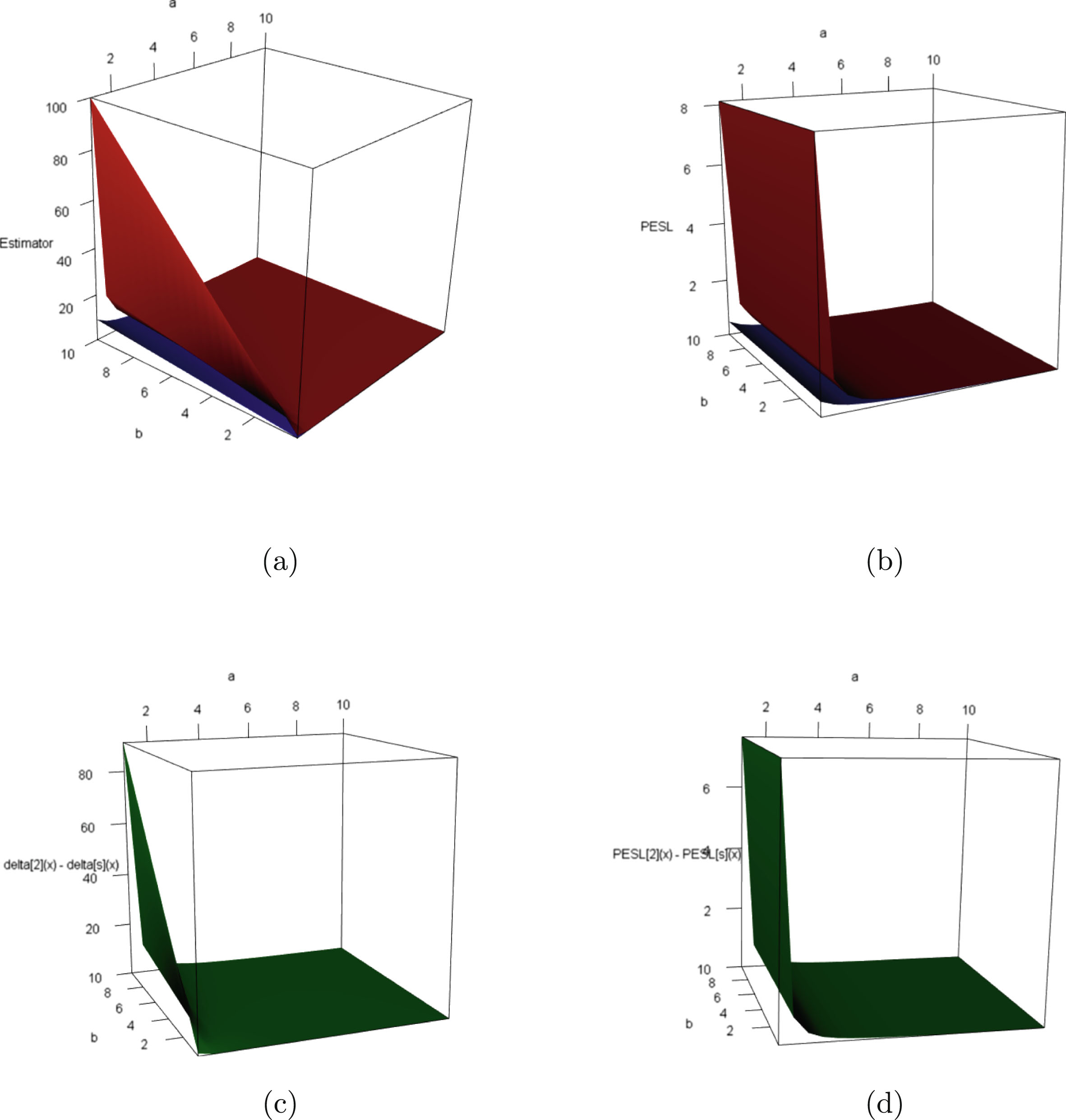

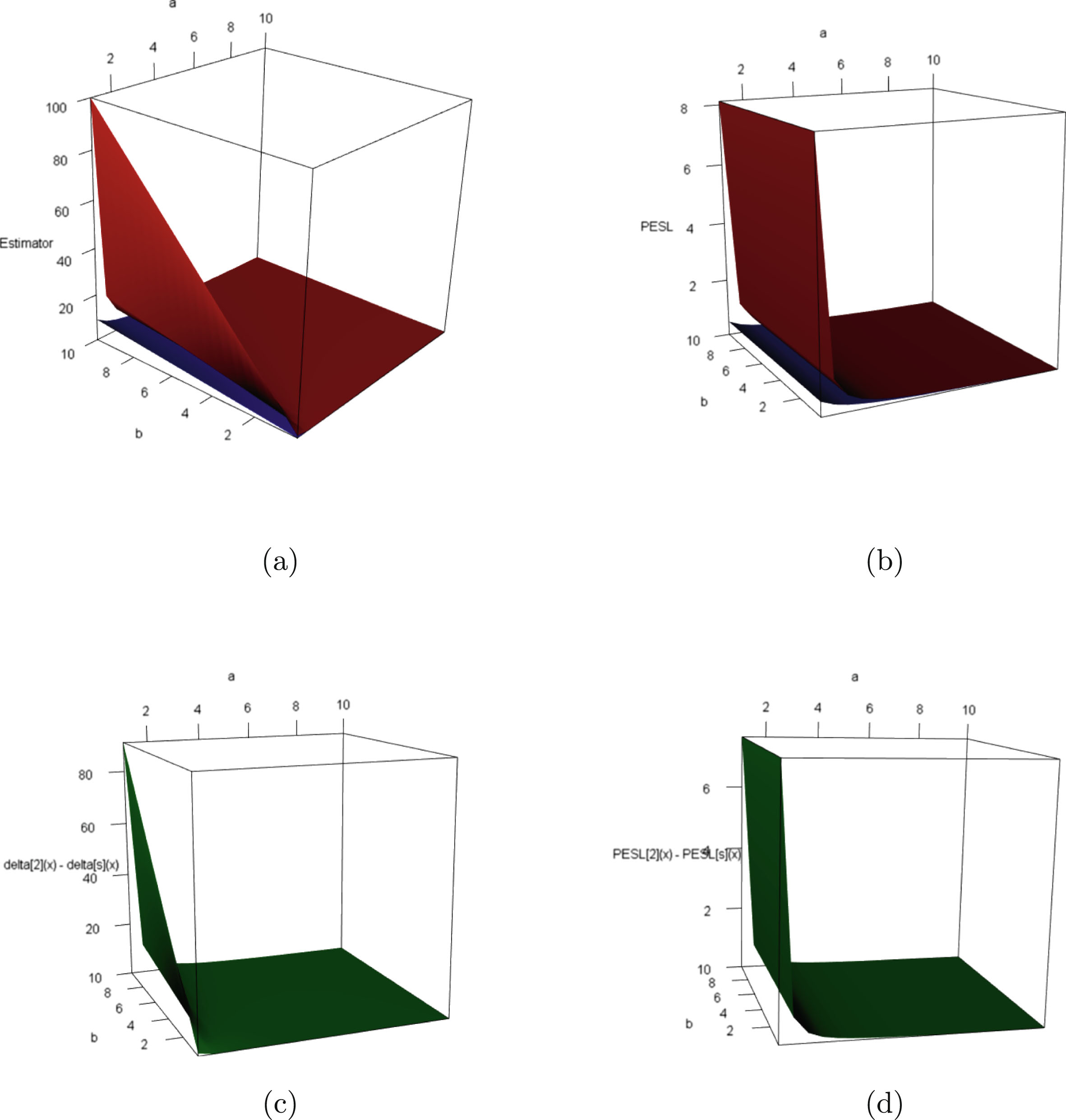

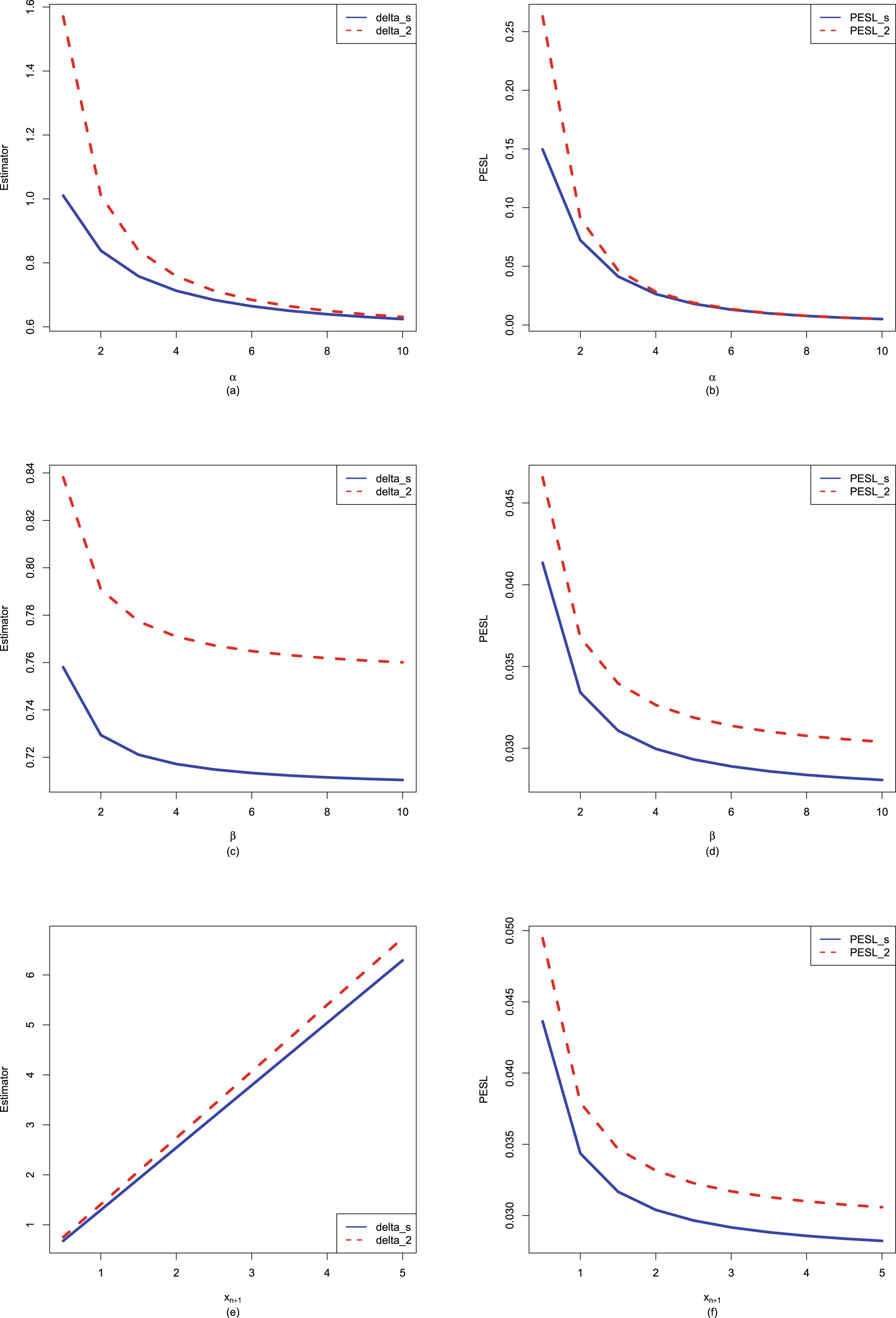

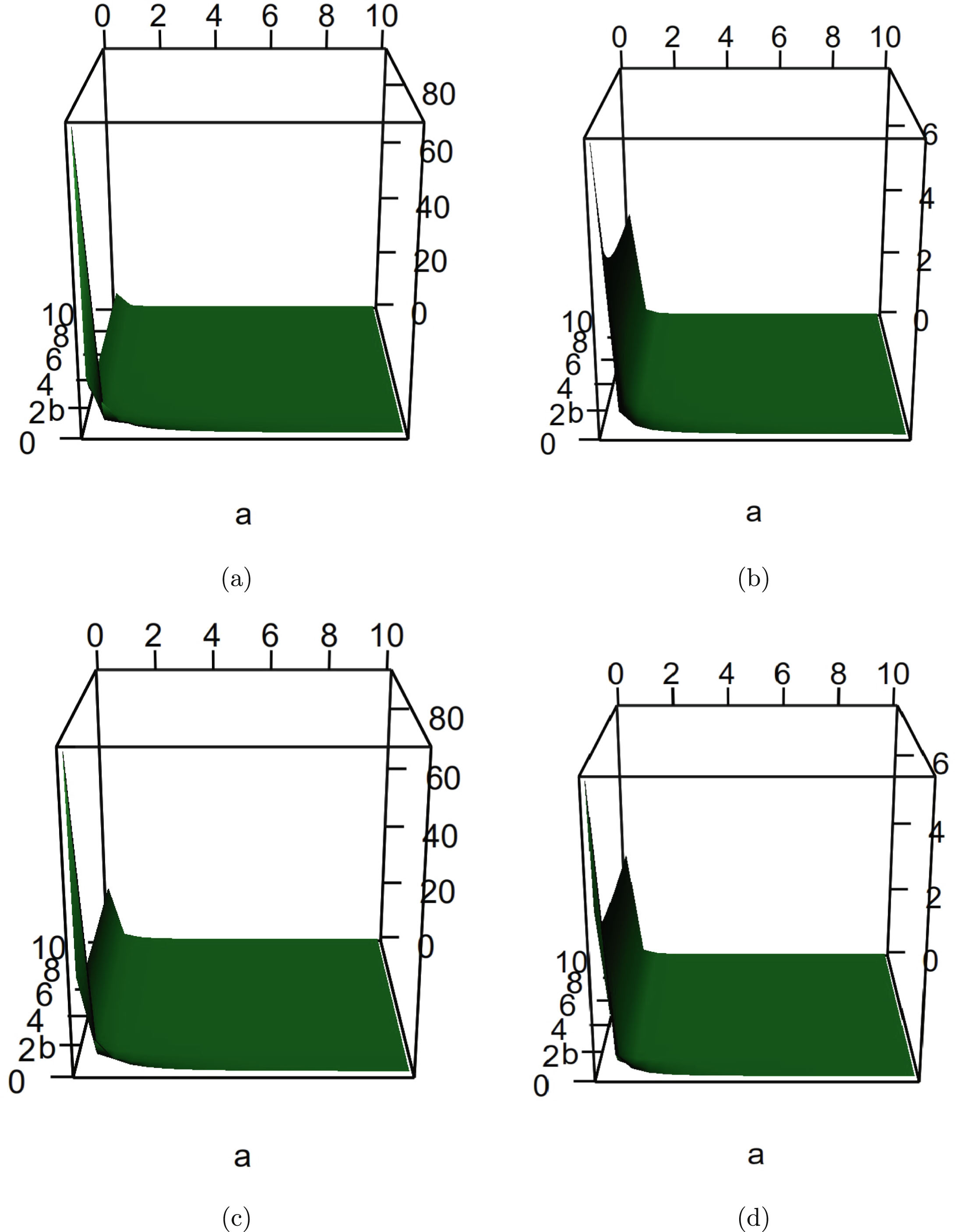

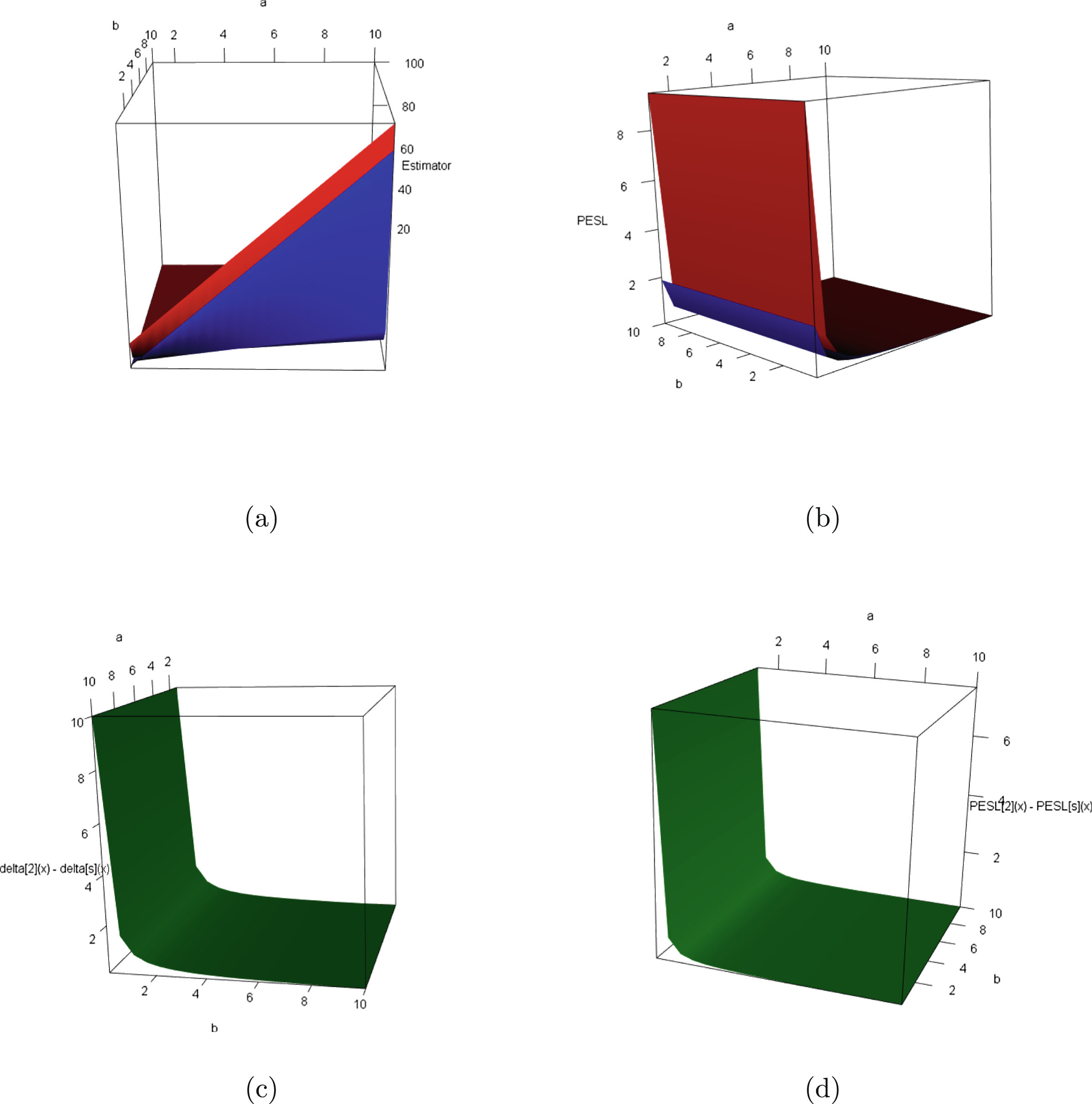

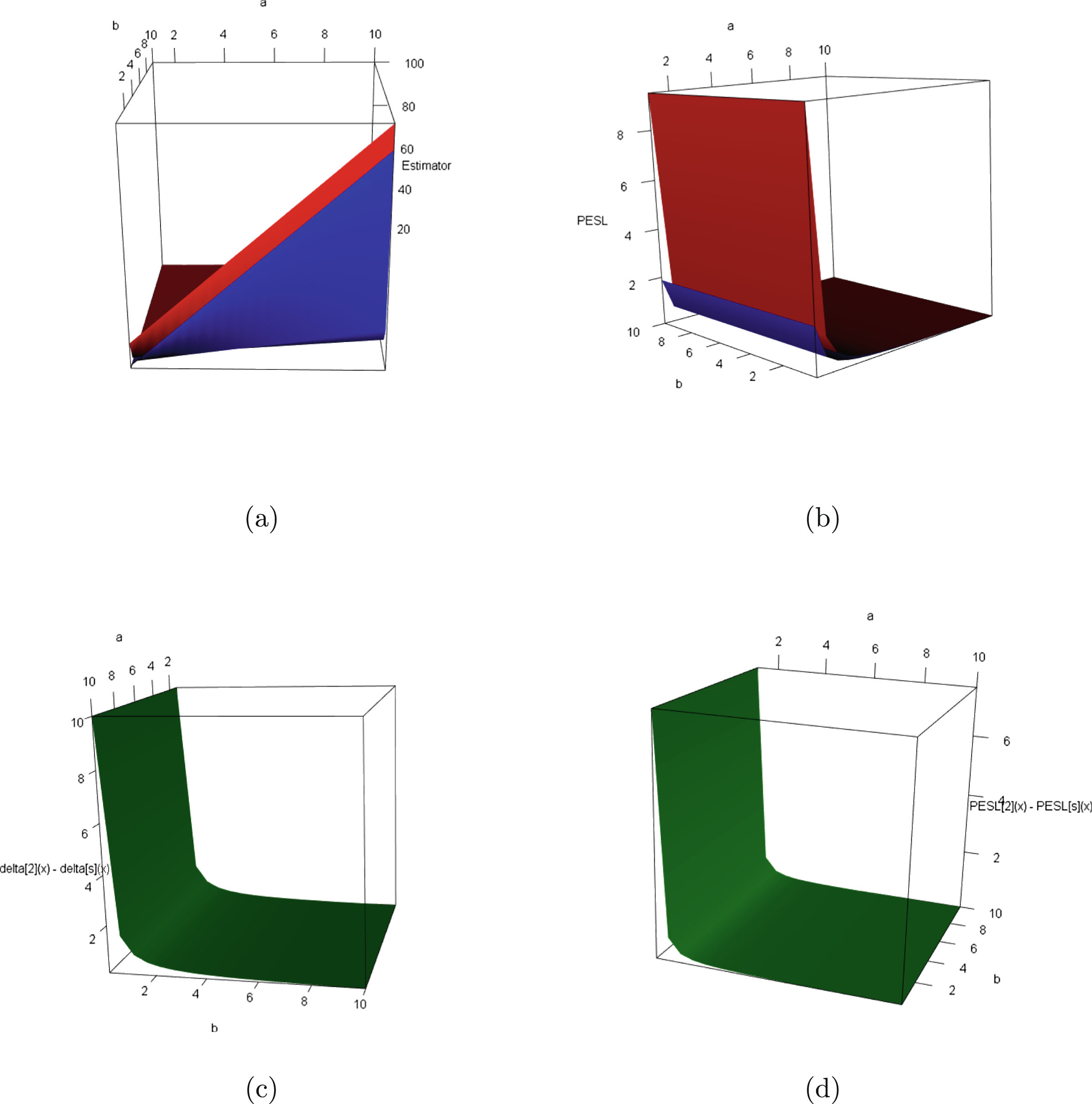

Since the Bayes estimators  and

and  and the PESLs

and the PESLs  and

and  depend on

depend on  and

and  , where

, where  and

and  , we can plot the surfaces of the Bayes estimators and the PESLs on the domain

, we can plot the surfaces of the Bayes estimators and the PESLs on the domain  via the R function persp3d() in the R package rgl (see Adler et al. (2017)). We remark that the R function persp() in the R package graphics can not add another surface to the existing surface, but persp3d() can. Moreover, persp3d() allows one to rotate the perspective plots of the surface according to one’s wishes. Figure 2.4 plots the surfaces of the Bayes estimators and the PESLs, and the surfaces of the differences of the Bayes estimators and the PESLs. The domain for

via the R function persp3d() in the R package rgl (see Adler et al. (2017)). We remark that the R function persp() in the R package graphics can not add another surface to the existing surface, but persp3d() can. Moreover, persp3d() allows one to rotate the perspective plots of the surface according to one’s wishes. Figure 2.4 plots the surfaces of the Bayes estimators and the PESLs, and the surfaces of the differences of the Bayes estimators and the PESLs. The domain for  is

is  for all the plots. a is for

for all the plots. a is for  and b is for

and b is for  in the axes of all the plots. The red surface is for

in the axes of all the plots. The red surface is for  and the blue surface is for

and the blue surface is for  in the upper two plots. From the left two plots of the figure, we see that

in the upper two plots. From the left two plots of the figure, we see that  for all

for all  on

on  . From the right two plots of the figure, we see that

. From the right two plots of the figure, we see that  for all

for all  on

on  . The results of the figure exemplify the theoretical studies of (2.6) and (2.9).

. The results of the figure exemplify the theoretical studies of (2.6) and (2.9).

FIG. 2.4 — IG-IG: (a) The Bayes estimators as functions of  and

and  . (b) The PESLs as functions of

. (b) The PESLs as functions of  and

and  . (c) The surface of

. (c) The surface of  which is positive for all

which is positive for all  on

on  . (d) The surface of

. (d) The surface of  which is also positive for all

which is also positive for all  on

on  .

.

2.3.2 Consistencies of the Moment Estimators and the MLEs

In this subsection, similar to subsection 1.8.1, we will numerically exemplify that the moment estimators and the MLEs are consistent estimators of the hyperparameters  ,

,  , and

, and  of the hierarchical inverse gamma and inverse gamma model (2.1). The motivation of this subsection is that in Theorems 2.2 and 2.3, we have theoretically shown that the moment estimators and the MLEs are consistent estimators of the hyperparameters. Note that only

of the hierarchical inverse gamma and inverse gamma model (2.1). The motivation of this subsection is that in Theorems 2.2 and 2.3, we have theoretically shown that the moment estimators and the MLEs are consistent estimators of the hyperparameters. Note that only  are used in this subsection. The simulation design of this subsection is detailed in appendix A.4.

are used in this subsection. The simulation design of this subsection is detailed in appendix A.4.

The frequencies of the moment estimators ( ,

,  , and

, and  ) and the MLEs (

) and the MLEs ( ,

,  , and

, and  ) of the hyperparameters (

) of the hyperparameters ( ,

,  , and

, and  ) as

) as  varies for

varies for  and

and  , 0.5, and 0.1 are reported in table 2.5. From this table, we observe the following facts.

, 0.5, and 0.1 are reported in table 2.5. From this table, we observe the following facts.

1. Given  , 0.5, or 0.1, the frequencies of the estimators (

, 0.5, or 0.1, the frequencies of the estimators ( ,

,  ,

,  , or

, or  ,

,  ) tend to 0 as

) tend to 0 as  increases to infinity, which means that the moment estimators and the MLEs are consistent estimators of the hyperparameters. For

increases to infinity, which means that the moment estimators and the MLEs are consistent estimators of the hyperparameters. For  , the frequencies of the estimators are still very large. However, we observe the tendencies of declining to 0 as

, the frequencies of the estimators are still very large. However, we observe the tendencies of declining to 0 as  increases to infinity.

increases to infinity.

2. Comparing the frequencies corresponding to  , 0.5, and 0.1, we observe that as

, 0.5, and 0.1, we observe that as  gets smaller, the frequencies tend to be larger, since the constraints

gets smaller, the frequencies tend to be larger, since the constraints

are easier to meet.

are easier to meet.

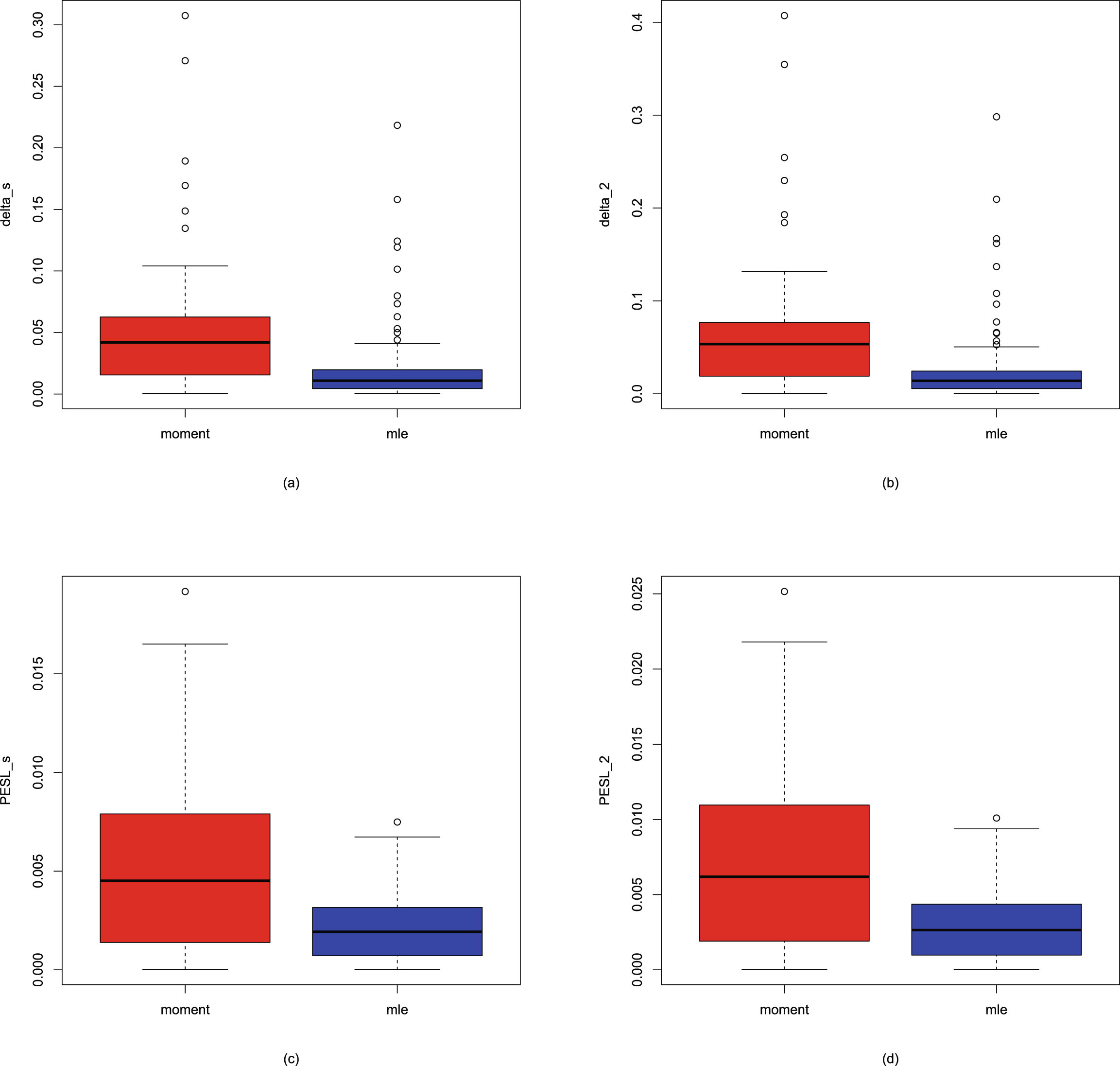

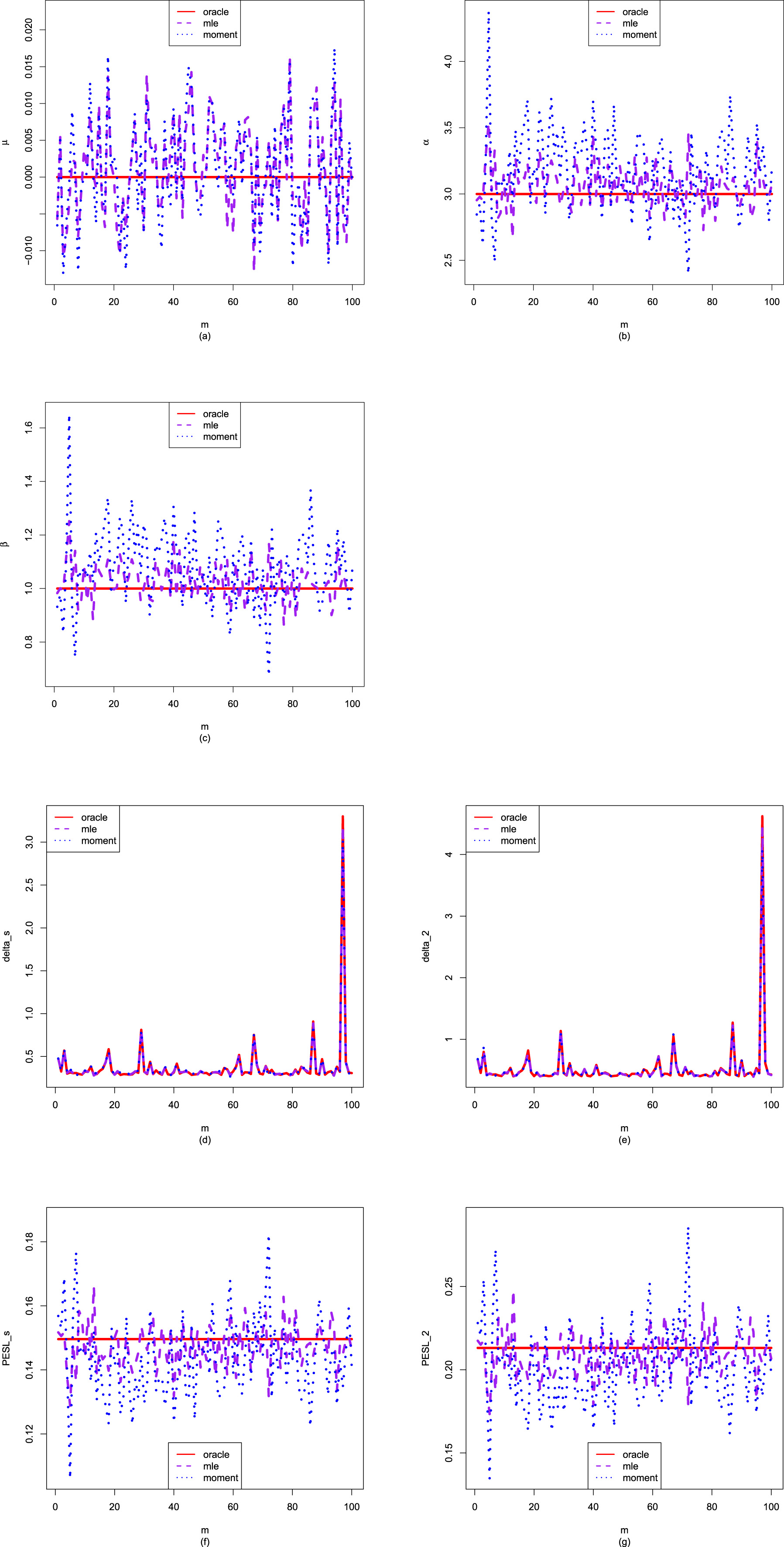

3. Comparing the moment estimators and the MLEs of the hyperparameters  ,

,  , and

, and  , we see that the frequencies of the MLEs are smaller than those of the moment estimators, which means that the MLEs are better than the moment estimators when estimating the hyperparameters in terms of consistency.

, we see that the frequencies of the MLEs are smaller than those of the moment estimators, which means that the MLEs are better than the moment estimators when estimating the hyperparameters in terms of consistency.

TAB. 2.5 — IIG-IG: The frequencies of the moment estimators and the MLEs of the hyperparameters as  varies for

varies for  and

and  , 0.5, and 0.1.

, 0.5, and 0.1.

| Moment estimators | MLEs | ||||||

| n | |||||||

| 1e4 | 0.01 | 0.25 | 0.33 | 0.01 | 0.03 | 0.04 | |

| 2e4 | 0.00 | 0.09 | 0.12 | 0.00 | 0.00 | 0.01 | |

| 4e4 | 0.00 | 0.01 | 0.07 | 0.00 | 0.00 | 0.00 | |

| 8e4 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | |

| 1e4 | 0.07 | 0.62 | 0.63 | 0.05 | 0.07 | 0.12 | |

| 2e4 | 0.05 | 0.55 | 0.60 | 0.02 | 0.05 | 0.07 | |

| 4e4 | 0.03 | 0.45 | 0.48 | 0.01 | 0.06 | 0.09 | |

| 8e4 | 0.01 | 0.20 | 0.27 | 0.00 | 0.01 | 0.02 | |

| 1e4 | 0.82 | 0.94 | 0.88 | 0.14 | 0.56 | 0.75 | |

| 2e4 | 0.83 | 0.92 | 0.93 | 0.09 | 0.40 | 0.65 | |

| 4e4 | 0.71 | 0.92 | 0.90 | 0.10 | 0.32 | 0.53 | |

| 8e4 | 0.62 | 0.87 | 0.88 | 0.07 | 0.19 | 0.34 | |

2.3.3 Goodness-of-Fit of the Model: KS Test

In this subsection, similar to subsection 1.8.2, we will calculate the goodness-of-fit of the hierarchical inverse gamma and inverse gamma model (2.1) to the simulated data. The motivation of this subsection is that we want to check whether the marginal distribution of the hierarchical inverse gamma and inverse gamma model (2.1) fits the simulated data well. Note that only  are used in this subsection.

are used in this subsection.

In our problem, the null hypothesis specifies that

where

where  is the marginal distribution of the hierarchical inverse gamma and inverse gamma model (2.1). The marginal density of the

is the marginal distribution of the hierarchical inverse gamma and inverse gamma model (2.1). The marginal density of the  distribution is given by (2.3), which is obviously one-dimensional continuous. Hence, the KS test can be utilized as a measure of the goodness-of-fit.

distribution is given by (2.3), which is obviously one-dimensional continuous. Hence, the KS test can be utilized as a measure of the goodness-of-fit.

The results of the KS test goodness-of-fit of the model (2.1) to the simulated data are reported in table 2.6. Note that the data are simulated according to the hierarchical inverse gamma and inverse gamma model (2.1) with  ,

,  , and

, and  . In the table, the hyperparameters

. In the table, the hyperparameters  ,

,  , and

, and  are estimated by three methods. The first method is the oracle method, in that we know the hyperparameters

are estimated by three methods. The first method is the oracle method, in that we know the hyperparameters  ,

,  , and

, and  . The second method is the moment method, in that the hyperparameters

. The second method is the moment method, in that the hyperparameters  ,

,  , and

, and  are estimated by their moment estimators (see Theorem 2.2). The third method is the MLE method, in that the hyperparameters

are estimated by their moment estimators (see Theorem 2.2). The third method is the MLE method, in that the hyperparameters  ,

,  , and

, and  are estimated by their MLEs (see Theorem 2.3). In the table, the sample size is

are estimated by their MLEs (see Theorem 2.3). In the table, the sample size is  , and the number of simulations is

, and the number of simulations is  .

.

1. From table 2.6, we observe the following facts.

The  values for the three methods are respectively given by 0.2983, 0.0338, and 0.0255, which means that the MLE method is the best method, the moment method is the second-best method, and the oracle method is the worst method. A possible explanation for this phenomenon is that in (1.23), the empirical cdf

values for the three methods are respectively given by 0.2983, 0.0338, and 0.0255, which means that the MLE method is the best method, the moment method is the second-best method, and the oracle method is the worst method. A possible explanation for this phenomenon is that in (1.23), the empirical cdf  is based on data, and the population cdfs

is based on data, and the population cdfs  for the MLE method and the moment method are also based on data, while the population cdf

for the MLE method and the moment method are also based on data, while the population cdf  for the oracle method is not based on data.

for the oracle method is not based on data.

2. The  values for the three methods are respectively given by 0.0677, 0.4077, and 0.6503, which also means that the MLE method ranks first, the moment method ranks second, and the oracle method ranks third. The possible explanation for this phenomenon is described in the previous paragraph.

values for the three methods are respectively given by 0.0677, 0.4077, and 0.6503, which also means that the MLE method ranks first, the moment method ranks second, and the oracle method ranks third. The possible explanation for this phenomenon is described in the previous paragraph.

3. The  values for the three methods are respectively given by 0.02, 0.40, and 0.58. The

values for the three methods are respectively given by 0.02, 0.40, and 0.58. The  value for the MLE method accounts for over half of the

value for the MLE method accounts for over half of the  simulations. The order of preference for the three methods is the MLE method, the moment method, and the oracle method.

simulations. The order of preference for the three methods is the MLE method, the moment method, and the oracle method.

4. The  values for the three methods are respectively given by 0.02, 0.40, and 0.58. A small

values for the three methods are respectively given by 0.02, 0.40, and 0.58. A small  value corresponds to a large p-value. Hence, the smallest

value corresponds to a large p-value. Hence, the smallest  value corresponds to the largest p-value. Therefore, the

value corresponds to the largest p-value. Therefore, the  value and the

value and the  value for the three methods are the same. The order of preference for the three methods is the MLE method, the moment method, and the oracle method.

value for the three methods are the same. The order of preference for the three methods is the MLE method, the moment method, and the oracle method.

5. The  values for the three methods are respectively given by 0.14, 0.81, and 0.91. Once again, the order of preference for the three methods is the MLE method, the moment method, and the oracle method. The

values for the three methods are respectively given by 0.14, 0.81, and 0.91. Once again, the order of preference for the three methods is the MLE method, the moment method, and the oracle method. The  values for the moment method and the MLE method are over

values for the moment method and the MLE method are over  , which means that the two methods have good performances in terms of goodness-of-fit.

, which means that the two methods have good performances in terms of goodness-of-fit.

6. In summary, for the five indices ( ,

,  ,

,  ,

,  ,

,  ), the order of preference for the three methods is the MLE method, the moment method, and the oracle method. Comparing the moment method and the MLE method, we find that the MLE method has a better performance than the moment method in terms of all five indices.

), the order of preference for the three methods is the MLE method, the moment method, and the oracle method. Comparing the moment method and the MLE method, we find that the MLE method has a better performance than the moment method in terms of all five indices.

TAB. 2.6 — IIG-IG: The results of the KS test goodness-of-fit of the model (2.1) to the simulated data.

| Oracle method | Moment method | MLE method | |

| 0.2983 | 0.0338 | 0.0255 | |

| 0.0677 | 0.4077 | 0.6503 | |

| 0.02 | 0.40 | 0.58 | |

| 0.02 | 0.40 | 0.58 | |

| 0.14 | 0.81 | 0.91 |

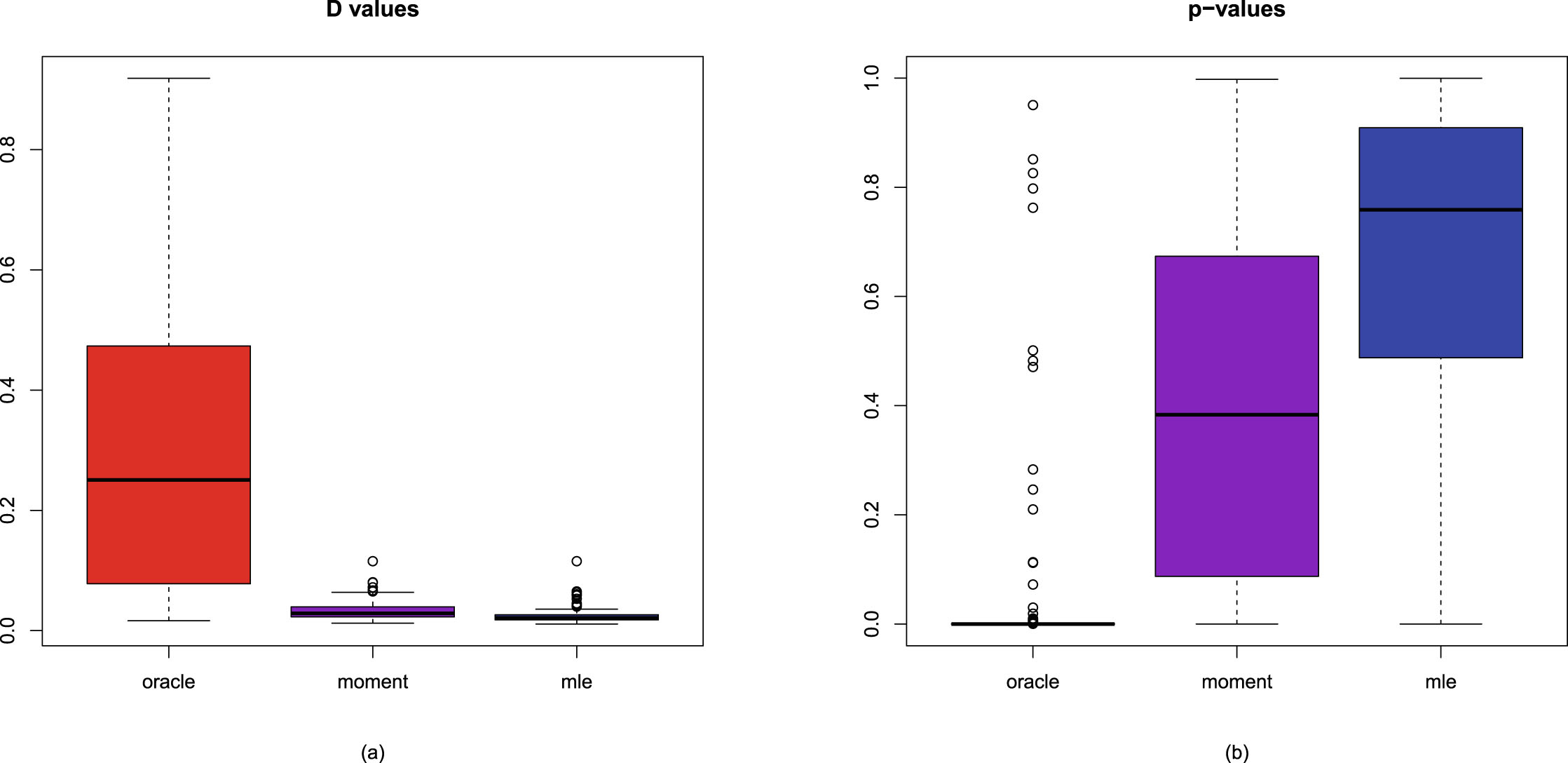

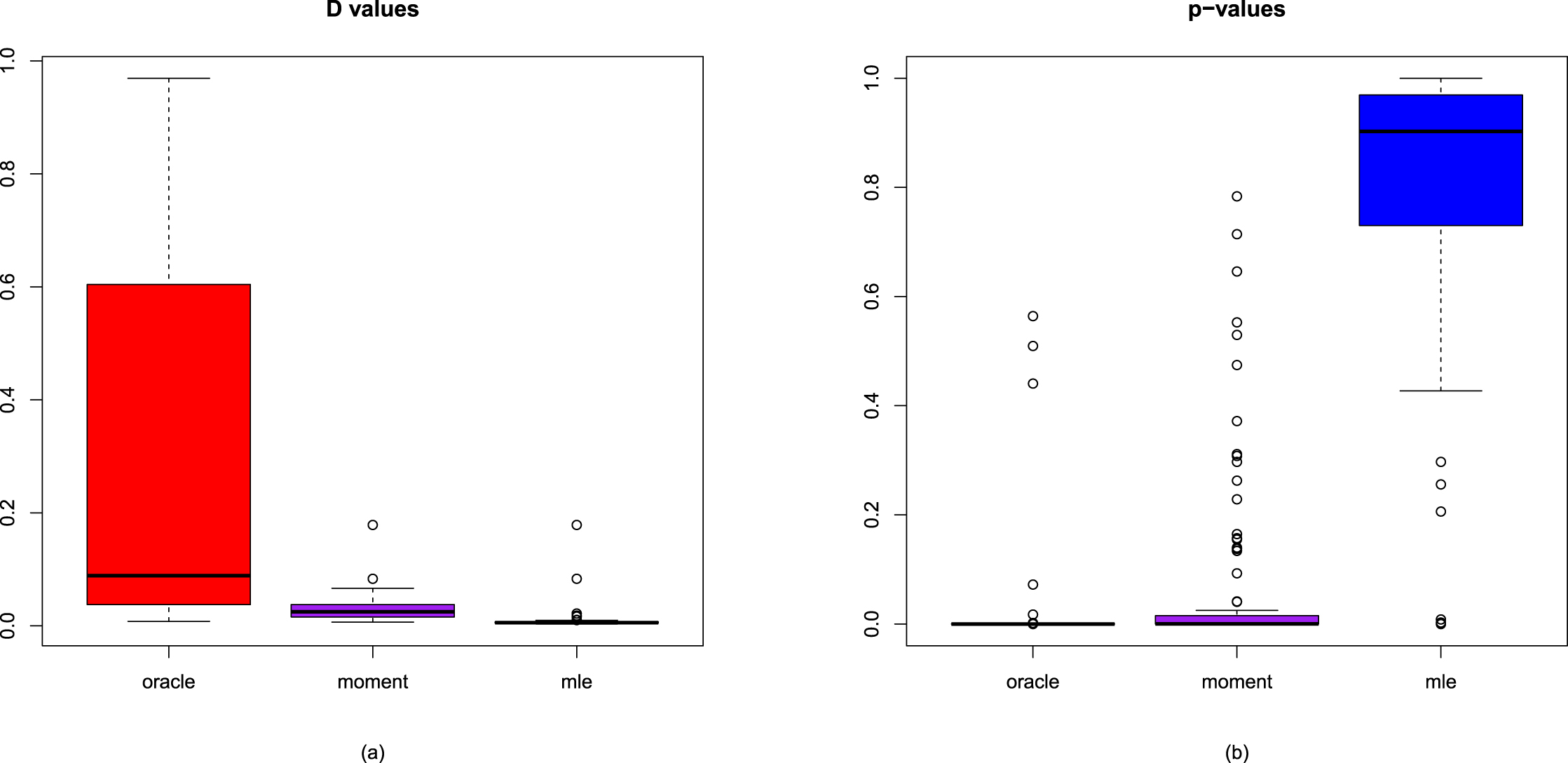

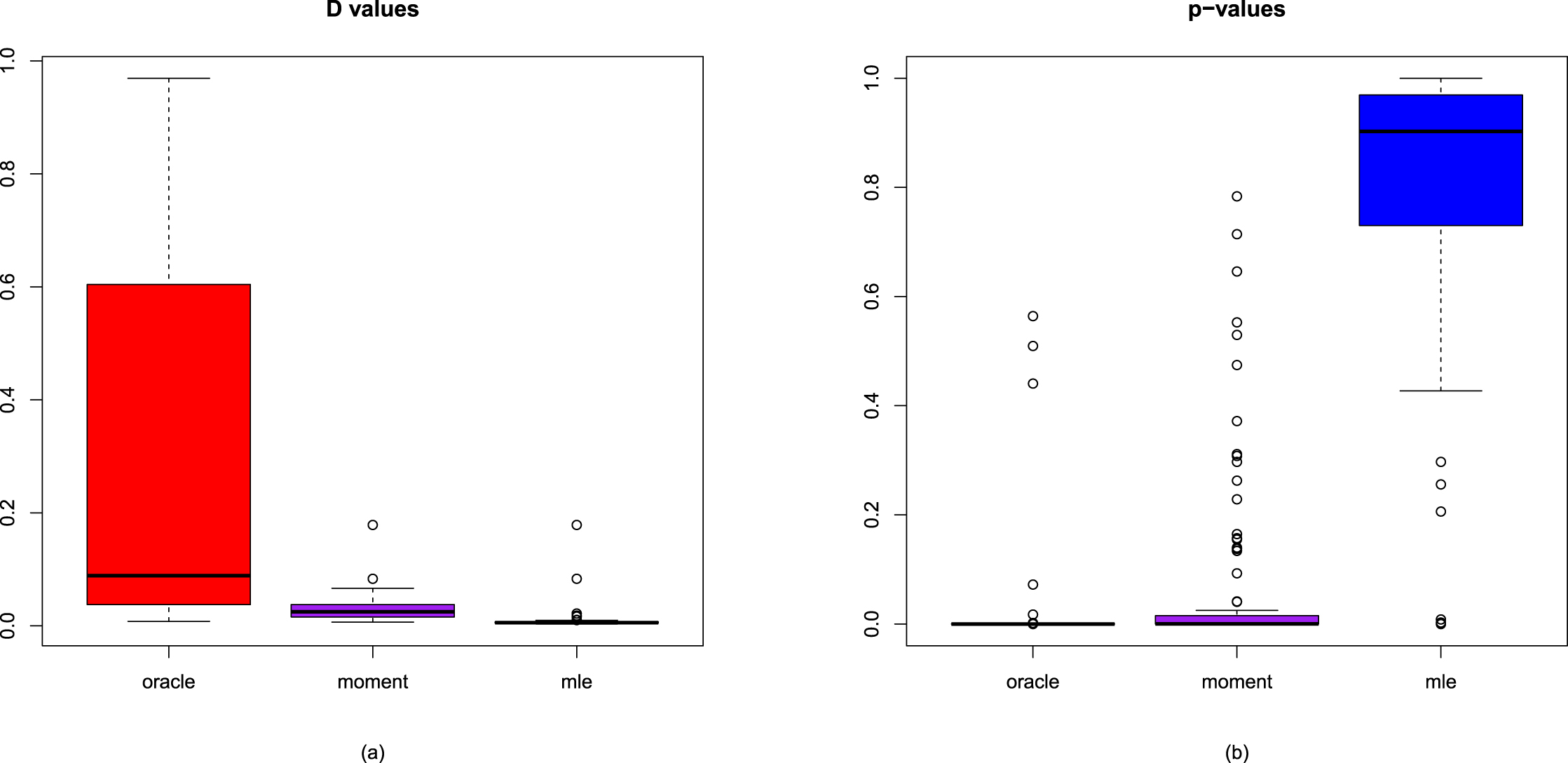

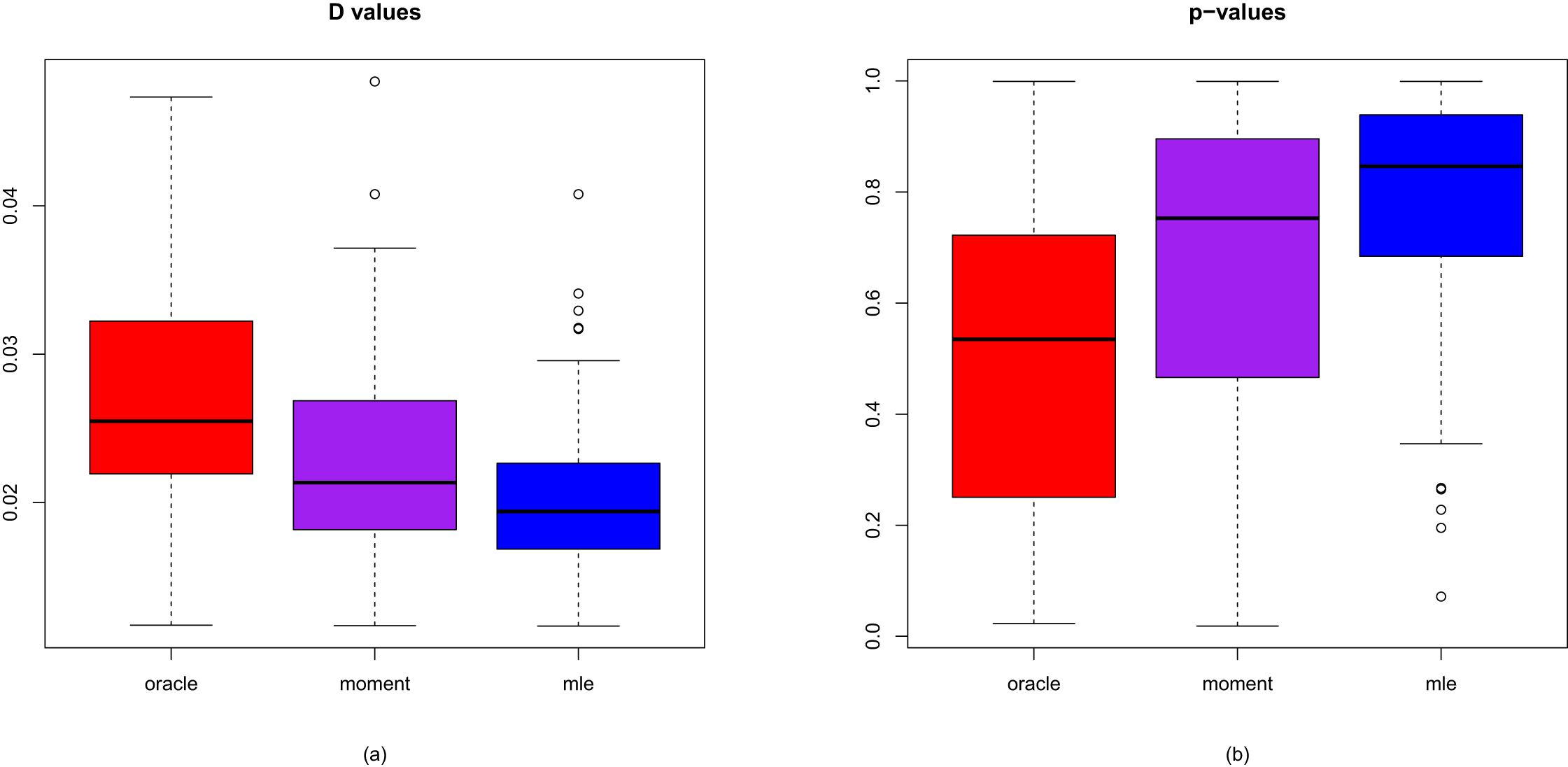

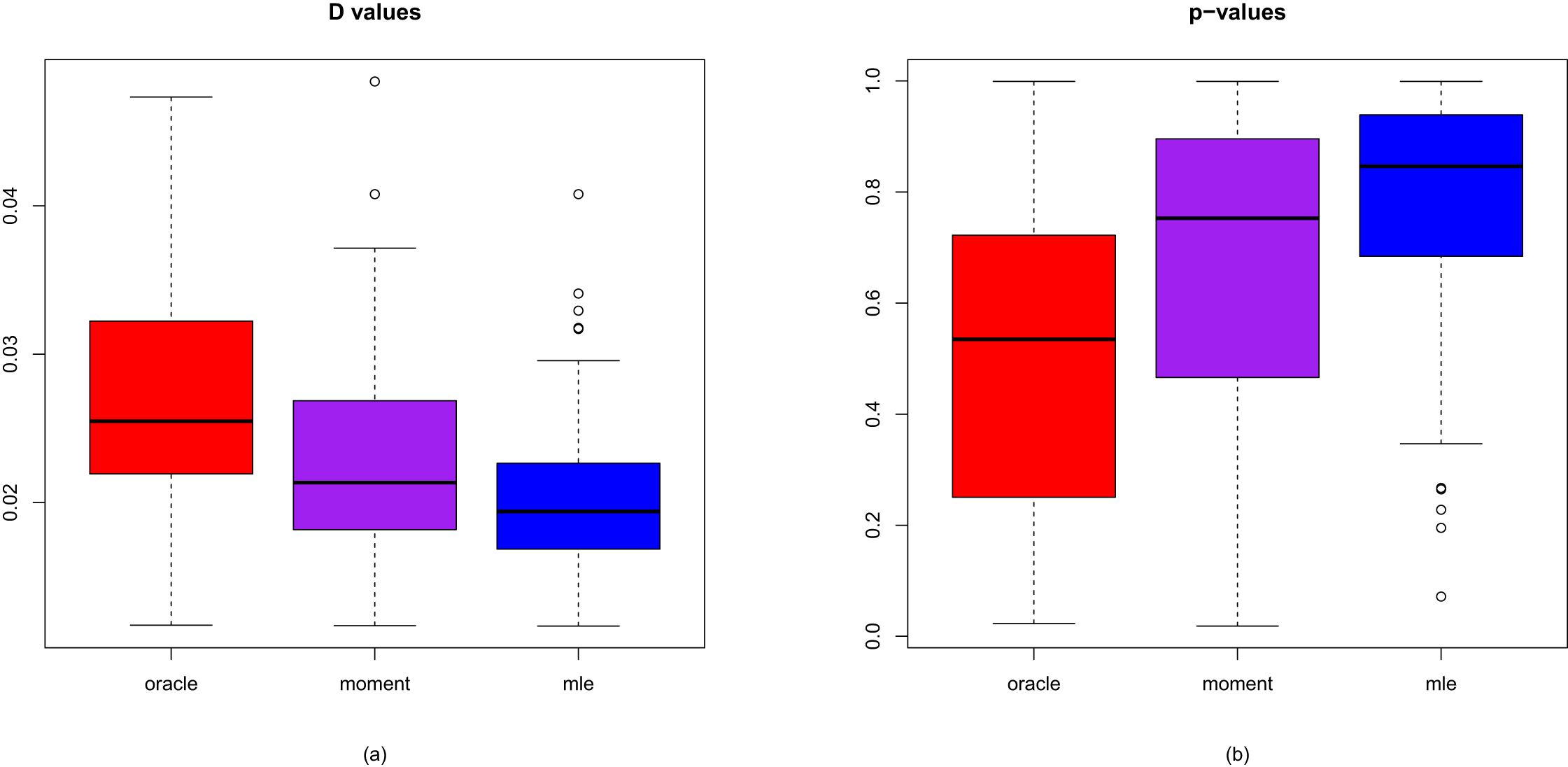

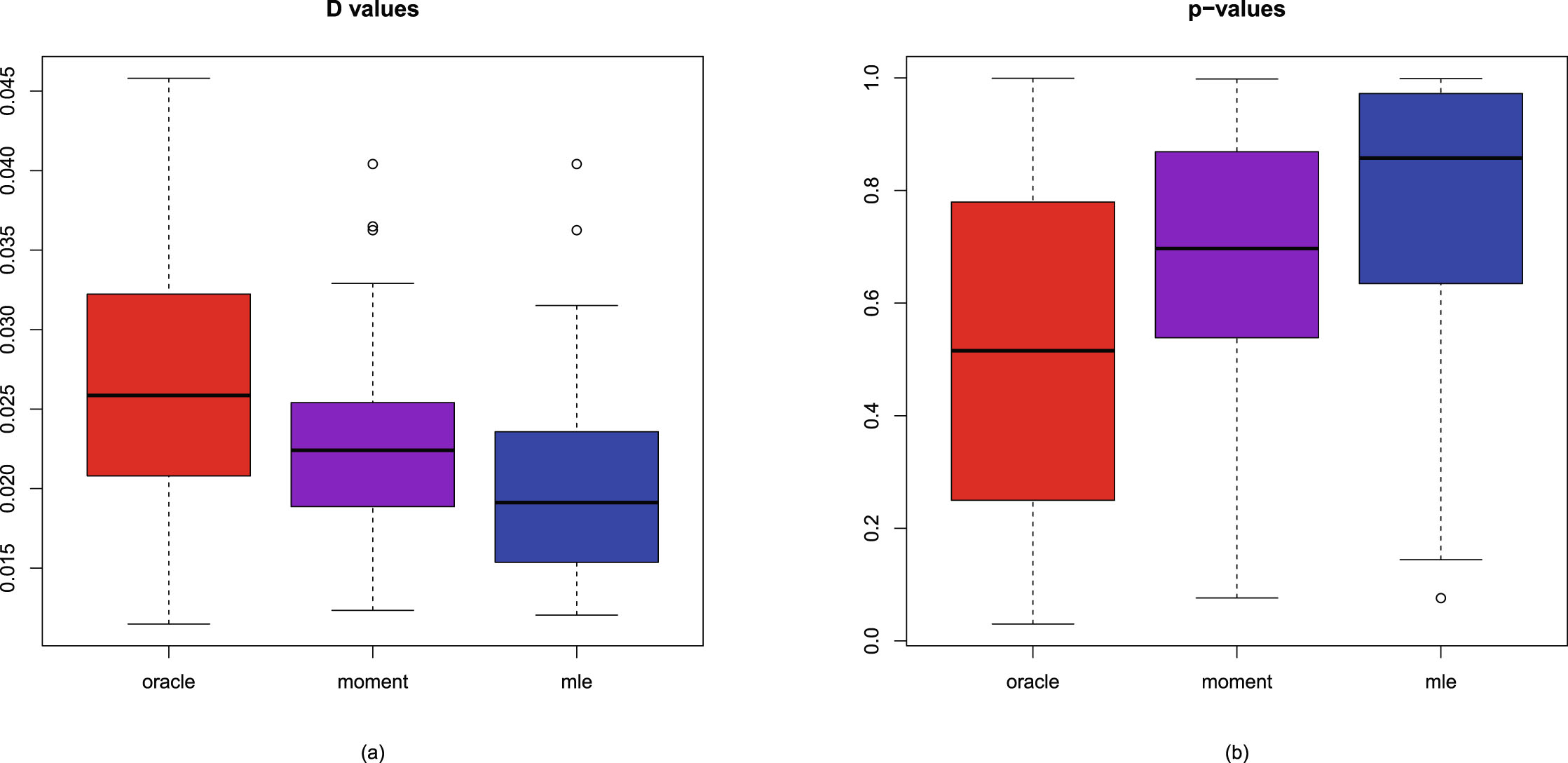

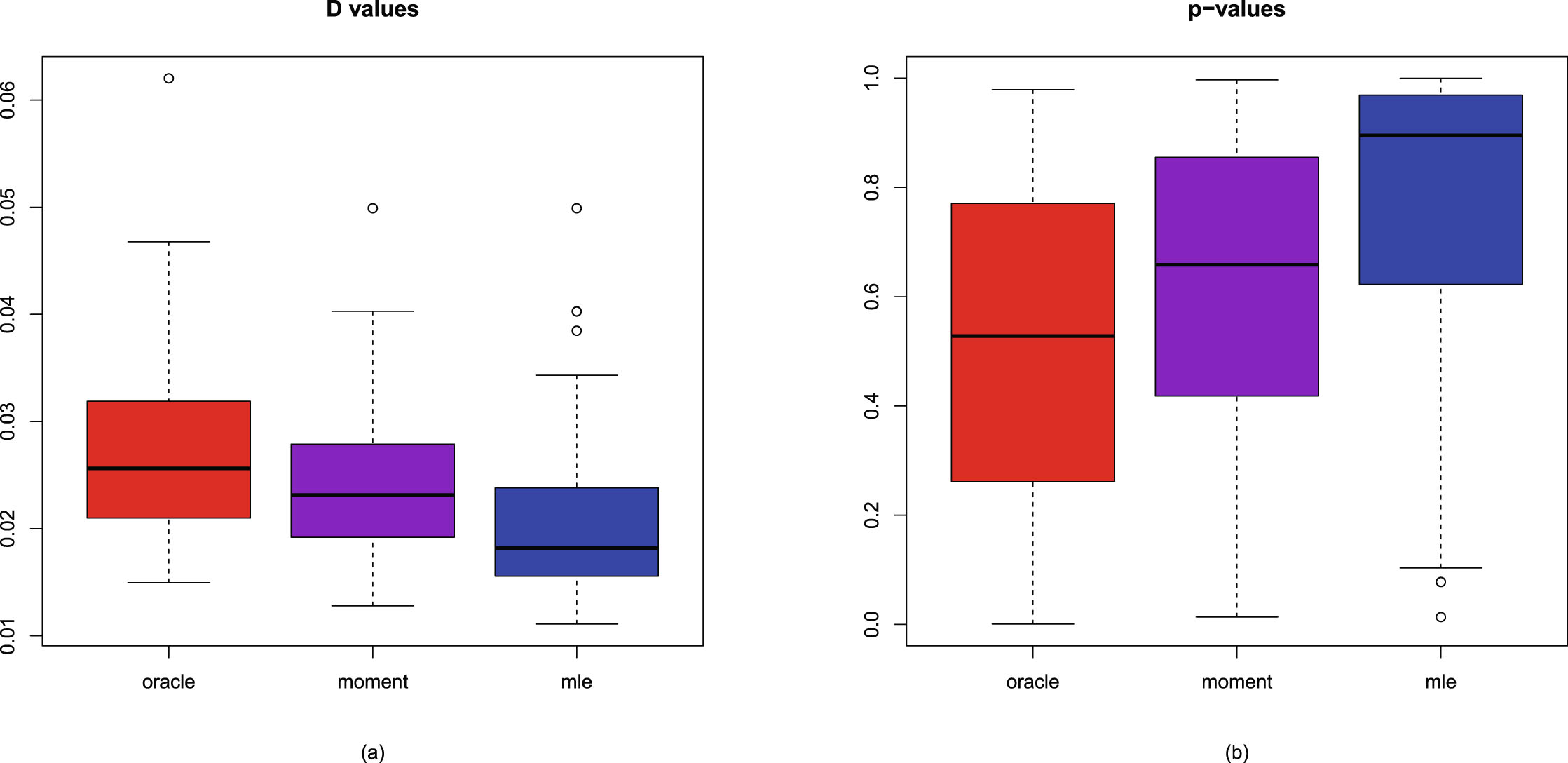

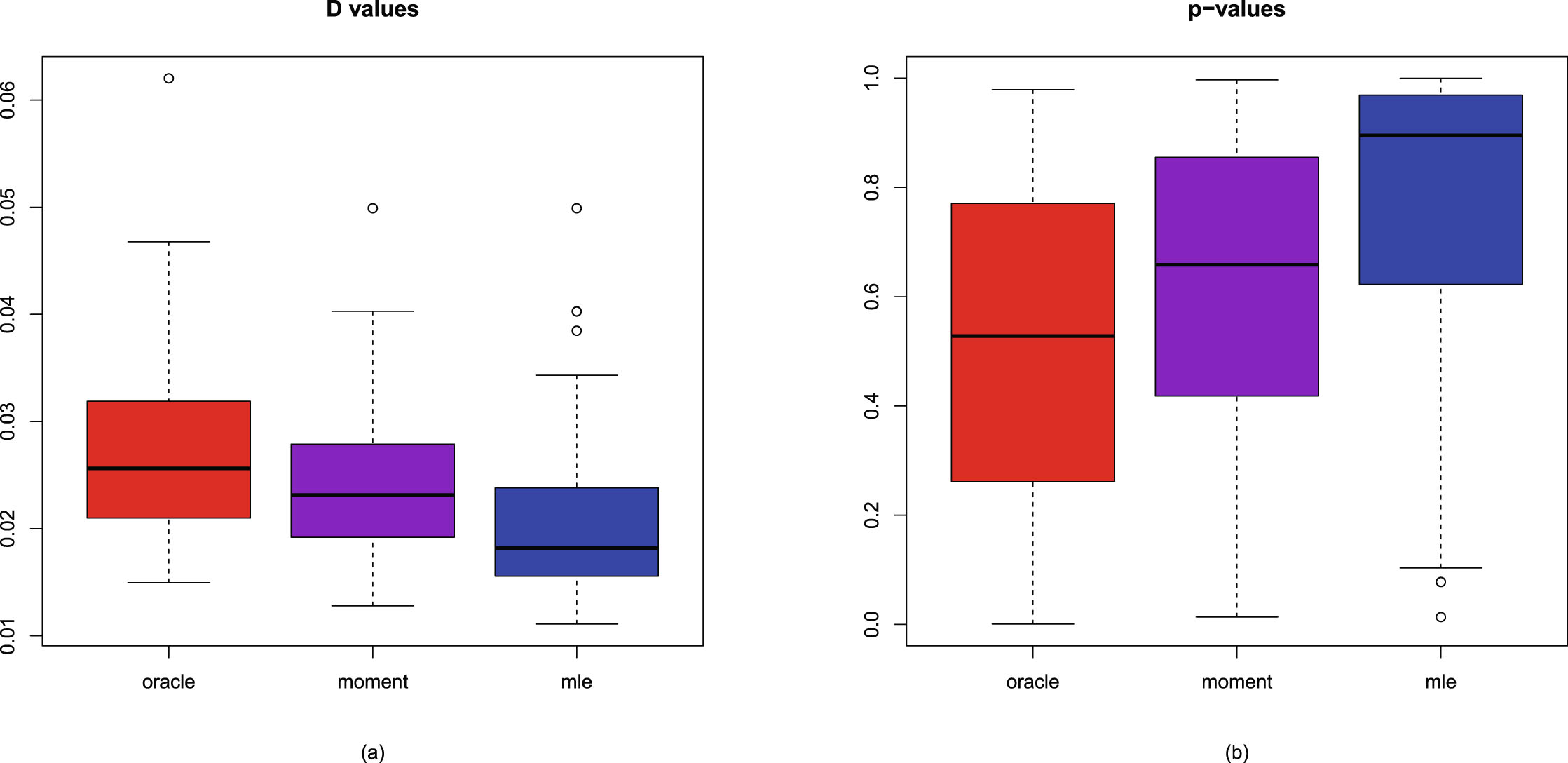

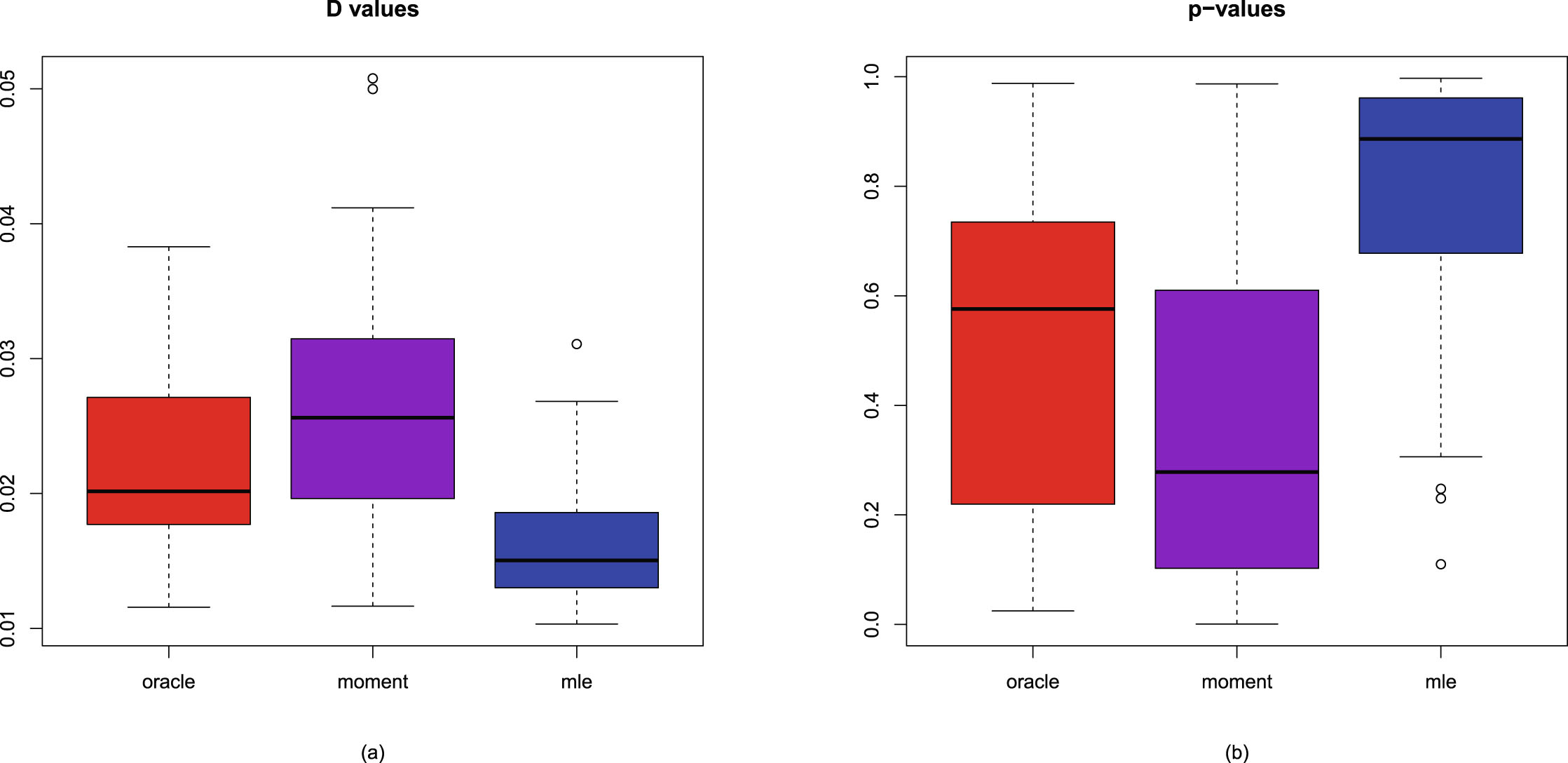

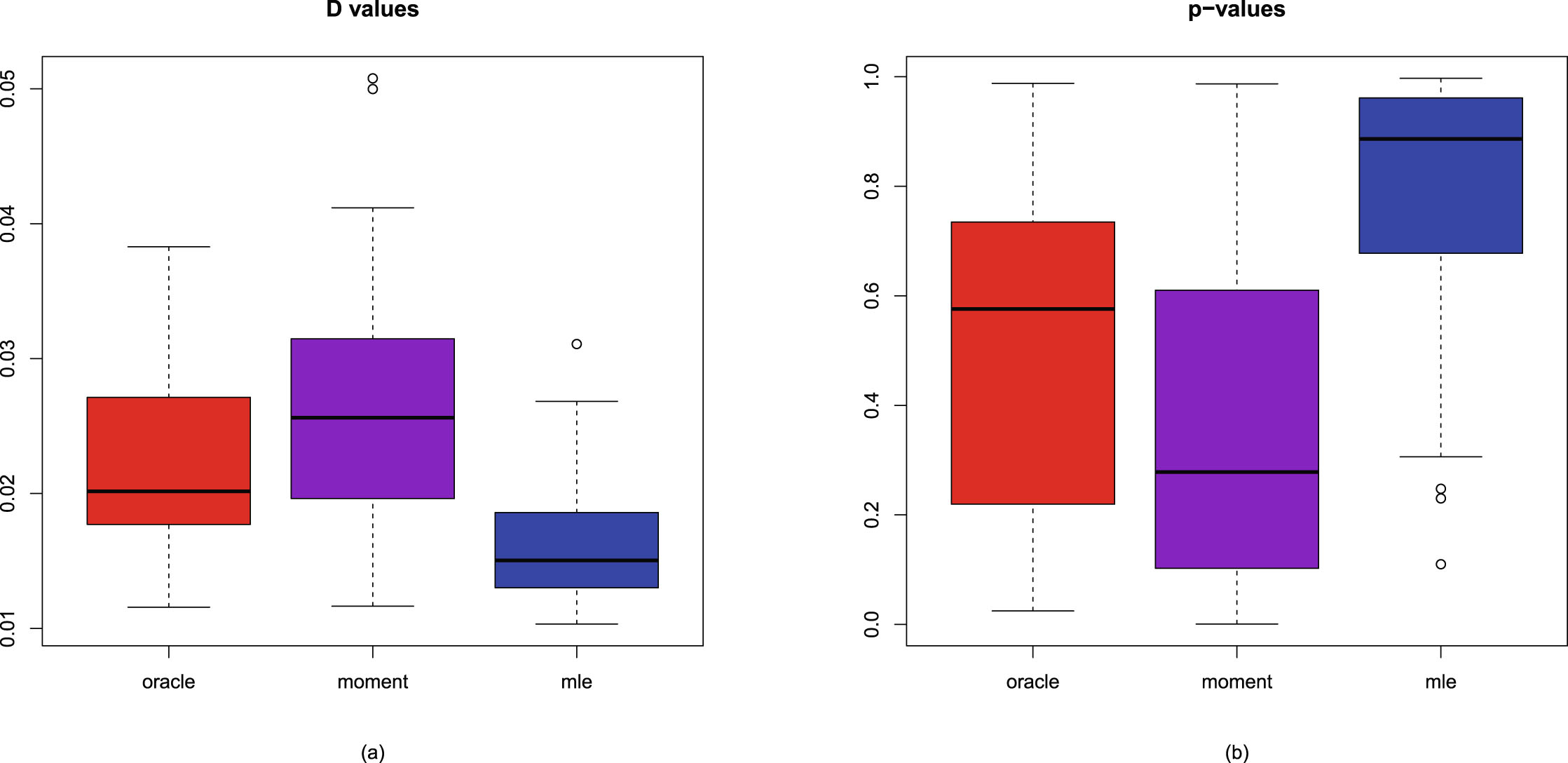

The boxplots of the  values and the p-values for the three methods are displayed in figure 2.5. From the figure, we observe the following facts.

values and the p-values for the three methods are displayed in figure 2.5. From the figure, we observe the following facts.

1. The  values of the oracle method are significantly larger than those of the other two methods. Since for the